7 Tips to Maximize Llama3 8B Performance on NVIDIA RTX A6000 48GB

Introduction

The world of large language models (LLMs) is booming, and one of the most popular open-source LLM families is Llama, developed by Meta AI. Whether you're a seasoned developer or a curious newcomer, you're likely interested in exploring what local LLMs can do. But one question often pops up: how do you squeeze the most performance out of your hardware? In this article, we'll look at Llama3 8B and how to optimize its performance on the powerful NVIDIA RTX A6000 48GB GPU.

Performance Analysis: Token Generation Speed Benchmarks

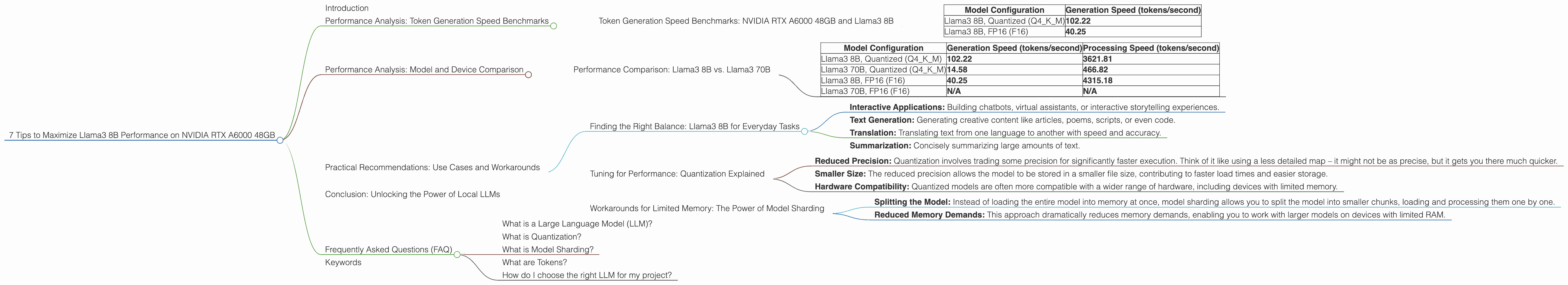

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 8B

Think of tokens as the building blocks of language, like individual words or parts of words. LLMs process these tokens to understand and generate text. A faster token generation speed means your LLM can churn out text quicker.

Let's look at how Llama3 8B performs on the RTX A6000 48GB, measured in tokens per second (tokens/second). This shows how quickly the GPU can produce each new token of output.

| Model Configuration | Generation Speed (tokens/second) |
|---|---|
| Llama3 8B, Quantized (Q4_K_M) | 102.22 |
| Llama3 8B, FP16 (F16) | 40.25 |
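Throughput figures like these boil down to tokens produced divided by wall-clock time. Here is a minimal Python sketch of that measurement. It assumes a hypothetical `generate` callable that streams one token at a time (for example, a thin wrapper around your inference library's streaming output); `fake_generate` is only a stand-in so the snippet runs on its own.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return its throughput in tokens/second.

    `generate` is a hypothetical zero-argument callable that yields tokens
    one at a time; swap in your own inference wrapper.
    """
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # pull every token, counting as we go
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate() -> Iterable[str]:
    """Stand-in stream: 50 tokens, each delayed by about 1 ms."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


print(f"{tokens_per_second(fake_generate):.1f} tokens/second")
```

Timing the whole stream like this folds prompt processing into the result; benchmarks usually report generation speed and prompt processing speed separately.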

Key Observations:

- Quantization Boosts Speed: The quantized Llama3 8B model (Q4_K_M) generates 102.22 tokens/second, about 2.5 times the 40.25 tokens/second of the FP16 version, so it can produce text much faster.

- FP16 Performance: FP16 (16-bit floating point) keeps the weights at higher precision, but token generation drops to 40.25 tokens/second. Generating each token requires reading essentially all of the model's weights from GPU memory, and the FP16 weights are roughly three times larger than the Q4_K_M ones, so each token takes longer. It's a trade-off: more precision for a slower pace.

Analogy: Imagine you're building a Lego model. FP16 is like using a screwdriver, precise but slower. Quantization is like using a power drill – less precise but gets the job done much faster.
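Why does the quantized model generate so much faster? When producing one token at a time, the GPU streams essentially all of the model's weights from memory for every token, so memory bandwidth sets a ceiling of roughly bandwidth divided by weight size. The sketch below estimates that ceiling. It assumes the RTX A6000's spec-sheet bandwidth of 768 GB/s, about 8.03 billion parameters for Llama3 8B, and an average of about 4.85 bits per weight for Q4_K_M; all three are approximations, not values from the benchmark.

```python
# Rough upper bound on single-stream generation speed for a
# memory-bandwidth-bound decoder: each new token reads all weights once,
# so tokens/s <= bandwidth / weight size.
# All constants below are approximate assumptions, not measured values.

A6000_BANDWIDTH_GB_S = 768.0  # RTX A6000 spec-sheet memory bandwidth
LLAMA3_8B_PARAMS = 8.03e9     # approximate parameter count

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # approximate average; Q4_K_M mixes block formats
}

MEASURED_TOKENS_S = {"F16": 40.25, "Q4_K_M": 102.22}  # from the table above


def weight_gb(params: float, bits: float) -> float:
    """Size of the weights in GB (10**9 bytes)."""
    return params * bits / 8 / 1e9


def max_tokens_per_second(bandwidth_gb_s: float, size_gb: float) -> float:
    """Bandwidth ceiling: how many full passes over the weights per second."""
    return bandwidth_gb_s / size_gb


for fmt, bits in BITS_PER_WEIGHT.items():
    size = weight_gb(LLAMA3_8B_PARAMS, bits)
    bound = max_tokens_per_second(A6000_BANDWIDTH_GB_S, size)
    measured = MEASURED_TOKENS_S[fmt]
    print(f"{fmt:7s} weights ~{size:5.2f} GB  ceiling ~{bound:6.1f} tok/s  "
          f"measured {measured:6.2f} tok/s ({measured / bound:.0%} of ceiling)")
```

Under these assumptions the FP16 ceiling is about 48 tokens/second, so the measured 40.25 is already close to the limit, while the Q4_K_M run sits further below its much higher ceiling.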

Performance Analysis: Model and Device Comparison

Understanding the strengths and weaknesses of different LLM models and devices is crucial for making informed decisions. Let's see how the Llama3 8B model stacks up against its larger sibling, Llama3 70B, on the RTX A6000 48GB.

Performance Comparison: Llama3 8B vs. Llama3 70B

| Model Configuration | Generation Speed (tokens/second) | Prompt Processing Speed (tokens/second) |
|---|---|---|
| Llama3 8B, Quantized (Q4_K_M) | 102.22 | 3621.81 |
| Llama3 70B, Quantized (Q4_K_M) | 14.58 | 466.82 |
| Llama3 8B, FP16 (F16) | 40.25 | 4315.18 |
| Llama3 70B, FP16 (F16) | N/A | N/A |

Analysis:

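- Larger Model, Slower Speed: Llama3 70B has almost nine times as many parameters as Llama3 8B, and its quantized generation speed falls to 14.58 tokens/second, roughly seven times slower than the 8B model. Every generated token has to pass through all that extra computation and memory.

- FP16 Comparison: There is no FP16 result for Llama3 70B. Its FP16 weights alone need roughly 140 GB, far more than the card's 48 GB, so it cannot run on a single RTX A6000 without splitting the model across devices.

- Processing Speed: Prompt processing (reading the input before generation begins) is far faster than generation for both models, because the GPU works on many prompt tokens at once. The smaller Llama3 8B still processes prompts much faster than the 70B model (3621.81 vs. 466.82 tokens/second for Q4_K_M). Interestingly, the FP16 8B model is slightly faster here than the quantized one (4315.18 vs. 3621.81 tokens/second), likely because prompt processing is limited more by raw compute than by memory bandwidth, so quantization's size advantage matters less.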
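These observations can be sanity-checked with a few lines of arithmetic. This sketch compares the parameter ratio with the measured slowdown and checks which formats' weights fit in 48 GB. The parameter counts (about 8.03 and 70.6 billion) and the ~4.85 bits per weight for Q4_K_M are approximate assumptions, and the check covers weights only: the KV cache and activations need additional memory.

```python
VRAM_GB = 48.0
PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}  # approximate
BITS = {"F16": 16.0, "Q4_K_M": 4.85}  # Q4_K_M value is an approximate average

GEN_8B_Q4, GEN_70B_Q4 = 102.22, 14.58  # measured generation speed (tokens/second)


def weight_gb(params: float, bits: float) -> float:
    """Weight storage in GB (10**9 bytes)."""
    return params * bits / 8 / 1e9


def fits_in_vram(params: float, bits: float, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit; KV cache and activations are ignored."""
    return weight_gb(params, bits) < vram_gb


param_ratio = PARAMS["Llama3 70B"] / PARAMS["Llama3 8B"]
slowdown = GEN_8B_Q4 / GEN_70B_Q4
print(f"70B has {param_ratio:.1f}x the parameters and generates {slowdown:.1f}x slower")

for model, params in PARAMS.items():
    for fmt, bits in BITS.items():
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{model:10s} {fmt:7s} ~{weight_gb(params, bits):6.1f} GB -> {verdict}")
```

Only the FP16 70B weights fail the check, which matches the N/A row in the table, while the Q4_K_M 70B weights squeeze in with a few gigabytes to spare.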

Key Takeaway: When it comes to token generation speed, there's a clear trade-off between model size and performance. Smaller models like Llama3 8B deliver faster results, while larger models like Llama3 70B offer wider knowledge and capabilities.

Practical Recommendations: Use Cases and Workarounds

Finding the Right Balance: Llama3 8B for Everyday Tasks

The RTX A6000 48GB is an excellent match for the Llama3 8B model, especially the quantized version. Here are some practical use cases where that speed pays off:

- Interactive Applications: Building chatbots, virtual assistants, or interactive storytelling experiences.

- Text Generation: Generating creative content like articles, poems, scripts, or even code.

- Translation: Translating text from one language to another with speed and accuracy.

- Summarization: Concisely summarizing large amounts of text.

Tip: While the Llama3 70B model offers more advanced capabilities, the Llama3 8B might be a better choice for everyday tasks and interactive applications where speed is crucial.
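To make that choice concrete, here is a hypothetical helper that picks the most capable configuration from the measured results whose generation speed still meets a target you set. Ranking by parameter count first and precision second is an assumption about what "most capable" means, not a rule from the benchmark.

```python
from typing import NamedTuple, Optional


class Config(NamedTuple):
    model: str
    fmt: str
    params_b: float   # parameters, in billions (approximate)
    bits: float       # weight precision; Q4_K_M's ~4.85 is an approximate average
    gen_tok_s: float  # measured generation speed on the RTX A6000 48GB


MEASURED = [
    Config("Llama3 8B", "Q4_K_M", 8, 4.85, 102.22),
    Config("Llama3 8B", "F16", 8, 16.0, 40.25),
    Config("Llama3 70B", "Q4_K_M", 70, 4.85, 14.58),
]


def pick_config(min_tok_s: float) -> Optional[Config]:
    """Largest model that meets the speed target; ties go to higher precision."""
    fast_enough = [c for c in MEASURED if c.gen_tok_s >= min_tok_s]
    if not fast_enough:
        return None
    return max(fast_enough, key=lambda c: (c.params_b, c.bits))


for target in (10, 30, 60, 200):
    choice = pick_config(target)
    print(target, "->", f"{choice.model} {choice.fmt}" if choice else "nothing fast enough")
```

With a high speed target, such as an interactive chatbot might need, the helper lands on Llama3 8B; relax the target and it moves up to Llama3 70B.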

Tuning for Performance: Quantization Explained

Quantization is a game-changer when it comes to LLM performance. It's like compressing a large file to make it smaller and faster to download.

Here's the lowdown:

- Reduced Precision: Quantization involves trading some precision for significantly faster execution. Think of it like using a less detailed map – it might not be as precise, but it gets you there much quicker.

- Smaller Size: Lower-precision weights take up less space. Llama3 8B shrinks from about 16 GB in FP16 to roughly 5 GB with Q4_K_M, which means faster load times and easier storage.

- Hardware Compatibility: Because they need less memory, quantized models can run on a wider range of hardware, including GPUs with limited VRAM.
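To see what trading precision for size means in practice, here is a toy 4-bit block quantizer in plain Python. It uses a simplified absmax scheme (one scale per block of 32 weights), similar in spirit to llama.cpp's basic Q4_0 format; it is not the actual Q4_K_M algorithm, which uses more elaborate per-block scaling. The random weights are made up for illustration.

```python
import random

BLOCK = 32  # weights that share one scale factor


def quantize_block(ws):
    """Map floats to signed 4-bit integers in [-8, 7] with one shared scale."""
    scale = max(abs(w) for w in ws) / 7 or 1.0  # largest |w| maps to 7; guard all-zero blocks
    qs = [max(-8, min(7, round(w / scale))) for w in ws]
    return scale, qs


def dequantize_block(scale, qs):
    """Recover approximate floats from the 4-bit codes."""
    return [q * scale for q in qs]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK)]
scale, qs = quantize_block(weights)
restored = dequantize_block(scale, qs)

max_err = max(abs(w - r) for w, r in zip(weights, restored))
# Storage accounting: 4 bits per weight plus one 16-bit scale per block.
bits_per_weight = (BLOCK * 4 + 16) / BLOCK
print(f"max abs error {max_err:.5f} (scale {scale:.5f}), "
      f"{bits_per_weight} bits/weight vs 16 for FP16")
```

Each restored weight is off by at most half a scale step, while storage drops from 16 bits per weight to 4.5.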

Workarounds for Limited Memory: The Power of Model Sharding

If you're working with a larger LLM like Llama3 70B, especially at FP16 precision, you might run into memory limits. Don't fret: model sharding comes to the rescue! Imagine breaking a large Lego model into smaller pieces, each easier to handle. That's what model sharding does.

- Splitting the Model: Instead of loading the entire model onto one device, model sharding splits it into smaller chunks (typically groups of layers) spread across several GPUs, or between the GPU and system RAM, and processes them in sequence.

- Reduced Memory Demands: Each device only has to hold its own share of the weights, so you can run models larger than any single device's memory.

Tip: If you're running into memory issues, try experimenting with model sharding techniques.
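A common form of sharding assigns contiguous groups of transformer layers to different devices; llama.cpp, for instance, lets you choose how many layers go to the GPU with `--n-gpu-layers` and divide them among GPUs with `--tensor-split`. The sketch below shows the idea as a hypothetical greedy planner. It assumes Llama3 70B's 80 layers share roughly 141 GB of FP16 weights evenly, two 48 GB cards with about 46 GB usable each, and 64 GB of system RAM for the remainder; real layer sizes, embeddings, and the KV cache will shift the numbers.

```python
from typing import Dict, List


def split_layers(n_layers: int, layer_gb: float,
                 budgets_gb: Dict[str, float]) -> Dict[str, List[int]]:
    """Greedily assign contiguous layers to devices in the order given.

    Hypothetical planner: each device takes as many whole layers as fit in
    its memory budget, then the next device continues where it left off.
    """
    plan: Dict[str, List[int]] = {}
    next_layer = 0
    for device, budget in budgets_gb.items():
        capacity = int(budget // layer_gb)  # whole layers that fit
        take = min(capacity, n_layers - next_layer)
        plan[device] = list(range(next_layer, next_layer + take))
        next_layer += take
    if next_layer < n_layers:
        raise MemoryError(f"{n_layers - next_layer} layers did not fit anywhere")
    return plan


# Assumed: Llama3 70B FP16 ~141 GB spread evenly over 80 layers.
plan = split_layers(80, 141.2 / 80, {"gpu0": 46.0, "gpu1": 46.0, "cpu": 64.0})
for device, layers in plan.items():
    span = f"{layers[0]}-{layers[-1]}" if layers else "none"
    print(f"{device}: {len(layers)} layers ({span})")
```

Layers placed in system RAM run on the CPU and are much slower, so offloading trades speed for the ability to run the model at all.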

Conclusion: Unlocking the Power of Local LLMs

Optimizing Llama3 8B performance on the NVIDIA RTX A6000 48GB is a journey of balancing size, speed, and precision. The quantized version of Llama3 8B offers a great trade-off between speed and precision, ideal for a wide range of everyday tasks. If you need the extra capability of larger models like Llama3 70B, explore quantization and techniques like model sharding to overcome memory limitations. The world of local LLMs is constantly evolving, so stay curious, experiment, and let your imagination run wild!

Frequently Asked Questions (FAQ)

What is a Large Language Model (LLM)?

A large language model (LLM) is a type of artificial intelligence (AI) that has been trained on massive amounts of text data. This training allows it to understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is Quantization?

Quantization in LLMs is like simplifying a complex recipe. It involves reducing the accuracy (precision) of the model's weights (numbers that define the model's behavior) to make it smaller and faster. It's like using less precise ingredients – it might change the taste slightly, but it simplifies the cooking process and makes it quicker.

What is Model Sharding?

Model sharding is like dividing a large puzzle into smaller pieces. It splits a large LLM into chunks that can be placed on different devices (for example, several GPUs, or a GPU plus system RAM), so no single device has to hold the whole model in memory.

What are Tokens?

Tokens are the basic units of text processing in LLMs. They are like individual words or parts of words that the LLM recognizes and uses to understand and generate text.

How do I choose the right LLM for my project?

The best LLM for your project depends on your specific needs. If you need a model that can handle complex tasks and has vast knowledge, a larger model like Llama3 70B might be a better choice. However, if speed and efficiency are paramount, a smaller model like Llama3 8B could be more suitable.

Keywords

Llama3, 8B, NVIDIA RTX A6000 48GB, LLM, Local LLMs, Token Generation Speed, Performance Optimization, Quantization, Model Sharding, GPU, Deep Dive, Performance Analysis, Practical Recommendations, Use Cases, Workarounds