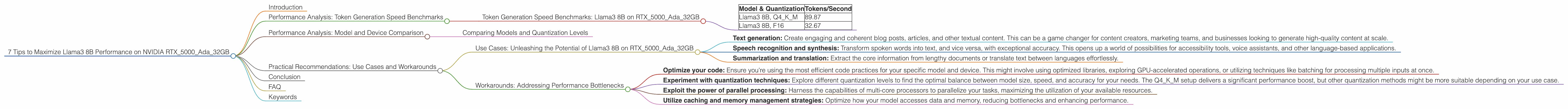

7 Tips to Maximize Llama3 8B Performance on NVIDIA RTX 5000 Ada 32GB

Introduction

The world of large language models (LLMs) is constantly evolving, and developers are on a quest to push the boundaries of performance and efficiency. One powerful combination that's capturing attention is the NVIDIA RTX5000Ada_32GB paired with Llama3 8B.

This article takes a close look at the performance characteristics of this pairing, covering token generation speed, model variations, and practical recommendations for getting the most out of it. We'll use real-world benchmark data to guide the analysis and provide actionable insights.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 8B on RTX5000Ada_32GB

Let's jump right into the heart of the matter: how fast can this setup generate text?

| Model & Quantization | Tokens/Second |
|---|---|
| Llama3 8B, Q4_K_M | 89.87 |
| Llama3 8B, F16 | 32.67 |

This table shows that Llama3 8B, when quantized to Q4_K_M on the RTX5000Ada_32GB, churns out a respectable 89.87 tokens per second, compared with 32.67 tokens per second for the F16 version.

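Remember, tokenization is the process of breaking down text into smaller units (tokens) for processing by the model. Think of it like a language translator, converting human-readable text into a form the LLM can understand. Because a token is often a word fragment rather than a whole word, tokens per second is not the same as words per second.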
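The article does not describe how the throughput figures above were measured. As a rough sketch of how a tokens-per-second number can be obtained, the snippet below times one generation call and divides the number of generated tokens by the elapsed time. The `generate` callable and `fake_generate` are hypothetical stand-ins, not part of any real library; a real benchmark would typically also separate prompt processing from generation and average over several runs.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str, int], List[str]],
                      prompt: str,
                      max_new_tokens: int) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_new_tokens)
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return len(tokens) / elapsed


def fake_generate(prompt: str, max_new_tokens: int) -> List[str]:
    """Hypothetical stand-in for a real model: sleeps briefly per token."""
    out = []
    for i in range(max_new_tokens):
        time.sleep(0.001)  # pretend each token takes about 1 ms
        out.append(f"tok{i}")
    return out


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, "Hello", 50)
    print(f"{rate:.1f} tokens/s")
```

To measure a real setup, `fake_generate` would be swapped for a function that calls your inference backend and returns the generated tokens.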

Performance Analysis: Model and Device Comparison

Comparing Models and Quantization Levels

Now, let's look at how the model variations affect performance. There is a substantial difference between the quantized Q4_K_M variant and the unquantized F16 variant.

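Q4_K_M is one of the "k-quant" formats used by llama.cpp: "Q4" means the weights are stored at roughly 4 bits each, "K" refers to the block-wise k-quant scheme, and "M" marks the medium-size variant of that scheme. This type of quantization reduces the model's size and memory footprint, making it more efficient for processing.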

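F16 stands for "half-precision floating-point format". This format uses 16 bits to represent each number, compared to 32 bits in single-precision floating point. That halves the model size relative to 32-bit weights and usually speeds up inference, but the weights are not quantized, so an F16 model is still several times larger than a 4-bit one.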
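To make the size difference concrete, here is a back-of-the-envelope estimate of how much memory the weights alone occupy in each format. The numbers are assumptions for illustration rather than measurements: the parameter count is taken as roughly 8 billion, and the average of about 4.85 bits per weight for Q4_K_M is an assumed figure that allows for per-block scale overhead. The estimate ignores the KV cache and activations, which add to the real footprint.

```python
GIB = 2**30  # bytes per gibibyte


def weight_size_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the stored weights in GiB (weights only)."""
    return n_params * bits_per_weight / 8 / GIB


# Assumptions for illustration, not measured values:
N_PARAMS = 8.0e9  # "8B" taken as roughly 8 billion parameters
BITS_PER_WEIGHT = {
    "F16": 16.0,     # exact: 16 bits per weight
    "Q4_K_M": 4.85,  # assumed average, including per-block scale overhead
}

for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name}: ~{weight_size_gib(N_PARAMS, bits):.1f} GiB of weights")
```

Under these assumptions both variants fit within 32 GB, so on this card the choice between them is driven more by speed and accuracy than by capacity.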

The difference in performance is significant! The Q4_K_M model generates text at about 2.75 times the rate of the F16 model (89.87 vs. 32.67 tokens per second). This emphasizes the importance of finding the sweet spot between model size, speed, and accuracy, depending on your specific use case.
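The arithmetic behind that comparison is easy to reproduce. The short sketch below uses the two throughput figures from the benchmark table to compute the speedup and to estimate how long a response would take to generate; the 500-token response length is an arbitrary example value, and the estimate ignores prompt processing time.

```python
# Tokens per second, taken from the benchmark table in this article.
RATES = {"Q4_K_M": 89.87, "F16": 32.67}


def speedup(fast_rate: float, slow_rate: float) -> float:
    """How many times faster the first rate is than the second."""
    return fast_rate / slow_rate


def seconds_for(n_tokens: int, rate: float) -> float:
    """Estimated generation time for n_tokens at a steady rate."""
    return n_tokens / rate


RESPONSE_TOKENS = 500  # arbitrary example length

print(f"Speedup: {speedup(RATES['Q4_K_M'], RATES['F16']):.2f}x")
for name, rate in RATES.items():
    print(f"{name}: {seconds_for(RESPONSE_TOKENS, rate):.1f} s for {RESPONSE_TOKENS} tokens")
```

For a 500-token answer this works out to roughly 5.6 seconds with Q4_K_M versus roughly 15.3 seconds with F16, which is the kind of gap a user will notice in an interactive application.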

Practical Recommendations: Use Cases and Workarounds

Use Cases: Unleashing the Potential of Llama3 8B on RTX5000Ada_32GB

This setup is ideal for various applications, including:

- Text generation: Create engaging and coherent blog posts, articles, and other textual content. This can be a game changer for content creators, marketing teams, and businesses looking to generate high-quality content at scale.

- Voice-driven applications: Llama3 8B is a text-only model, so it cannot transcribe or synthesize audio on its own, but it can serve as the language component between a separate speech-to-text system and a text-to-speech system. This opens up possibilities for accessibility tools, voice assistants, and other language-based applications.

- Summarization and translation: Extract the core information from lengthy documents or translate text between languages effortlessly.

Workarounds: Addressing Performance Bottlenecks

Here are some key tips for getting the most out of your Llama3 8B on RTX5000Ada_32GB:

- Optimize your code: Ensure you're using the most efficient code practices for your specific model and device. This might involve using optimized libraries, exploring GPU-accelerated operations, or utilizing techniques like batching for processing multiple inputs at once.

- Experiment with quantization techniques: Explore different quantization levels to find the optimal balance between model size, speed, and accuracy for your needs. The Q4_K_M setup delivers a significant performance boost over F16, but other quantization methods might be more suitable depending on your use case.

- Exploit the power of parallel processing: Harness the capabilities of multi-core processors to parallelize your tasks, maximizing the utilization of your available resources.

- Utilize caching and memory management strategies: Optimize how your model accesses data and memory, reducing bottlenecks and enhancing performance.
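Two of the tips above, batching and caching, can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions: `fake_generate` is a hypothetical stand-in for a real model call, and the helper names `batched` and `make_cached` are made up for this example. Caching responses by prompt only makes sense when generation is deterministic (for example, greedy decoding), since sampled outputs differ from call to call.

```python
from functools import lru_cache
from typing import Callable, Iterator, List, Sequence


def batched(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of `items` with at most `batch_size` entries."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def make_cached(generate: Callable[[str], str],
                maxsize: int = 256) -> Callable[[str], str]:
    """Wrap a single-prompt function so repeated prompts are served from memory."""
    return lru_cache(maxsize=maxsize)(generate)


calls: List[str] = []  # records every real (uncached) model call


def fake_generate(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    calls.append(prompt)
    return prompt.upper()


if __name__ == "__main__":
    cached = make_cached(fake_generate)
    prompts = ["summarize a", "summarize b", "summarize a", "summarize c"]
    for batch in batched(prompts, 2):
        print([cached(p) for p in batch])
    print(f"{len(calls)} model calls for {len(prompts)} prompts")
```

In a real deployment the batches would be passed to a backend that processes several prompts in one GPU pass; here they are simply iterated to keep the sketch self-contained.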

Conclusion

The NVIDIA RTX5000Ada_32GB is a powerful GPU, and when combined with the Llama3 8B model, it unleashes a potent force for processing natural language. By choosing the right quantization and optimizing your code for performance, you can unlock an impressive range of applications. Remember, LLMs are still evolving, and the future holds even more exciting possibilities.

FAQ

Q: What's the difference between Llama 3 7B and 8B?

A: Llama 3 was not released in a 7B size. The 7B size belongs to the earlier Llama 2 family, while Llama 3 launched in 8B and 70B variants. The number refers to the parameter count: 7B means about 7 billion parameters and 8B means about 8 billion. Generally, models with more parameters are more capable but also require more computational resources.

Q: Why is quantization important for LLMs?

A: Quantization is a technique used to reduce the size of LLMs without significantly affecting the quality of their output. It's like compressing a large file to save space, but in this case, it's the model's weights that are stored at lower precision. This makes the model smaller to store and load, and because less data has to be moved through memory for each token, it usually runs faster as well.

Q: What are the benefits of using the NVIDIA RTX5000Ada_32GB for LLMs?

A: The RTX5000Ada_32GB is a high-performance GPU designed for demanding tasks, including natural language processing. Its powerful architecture and large memory capacity make it well-suited for handling the complex computations involved in training and running LLMs.

Q: How do I choose the right LLM and device combination for my needs?

A: The best LLM and device combination depends on your specific use case and resource constraints. Consider factors such as model size and complexity, desired performance level, and available hardware resources. There's no one-size-fits-all answer; careful experimentation and selection are key.

Keywords

LLM, Llama3 8B, NVIDIA RTX5000Ada_32GB, GPU, performance, token generation speed, quantization, Q4_K_M, F16, text generation, speech recognition, summarization, translation, use cases, workarounds, optimization, parallel processing, caching, memory management, natural language processing.