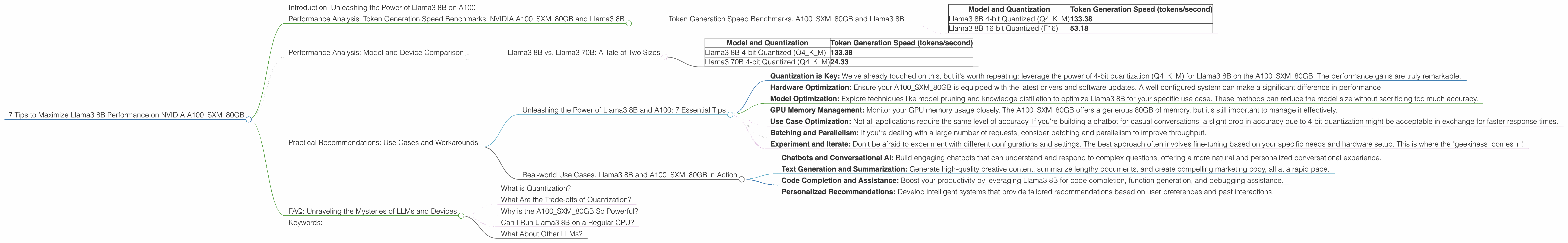

7 Tips to Maximize Llama3 8B Performance on NVIDIA A100 SXM 80GB

Introduction: Unleashing the Power of Llama3 8B on A100

Local Large Language Models (LLMs) bring the power of AI directly onto hardware you control. The Llama3 8B model, specifically, offers a compelling balance between performance and computational efficiency. Getting the most out of it still takes capable hardware, and the NVIDIA A100 SXM 80GB is a strong choice.

This deep dive explores the performance of Llama3 8B on the A100 SXM 80GB, providing practical recommendations for optimizing your setup and achieving the best results. We'll cover token generation speed benchmarks, compare different quantization levels, and offer tips for maximizing your Llama3 8B experience.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: A100 SXM 80GB and Llama3 8B

Let's cut to the chase: how fast can we generate text with Llama3 8B on the A100 SXM 80GB? Here's a breakdown of the token generation speeds at two precision levels:

| Model and Precision | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B, 4-bit quantized (Q4_K_M) | 133.38 |
| Llama3 8B, 16-bit half precision (F16, unquantized) | 53.18 |

Key Takeaways:

- 4-bit Quantization Reigns Supreme: Q4_K_M quantization for Llama3 8B on the A100 SXM 80GB reaches 133.38 tokens per second, about 2.5x faster than the 53.18 tokens per second of the unquantized F16 weights.

- Trade-offs Exist: 4-bit quantization provides a clear speed advantage, but the downside is slightly reduced accuracy compared to F16.

Think of it this way: 4-bit quantization is like a race car optimized for speed, whereas F16 is more like a comfortable sedan that gives up top speed for fidelity. The key is to choose the right tool for the job!
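To make the gap concrete, here is a minimal Python sketch that turns the two measured speeds into a speedup ratio and a per-token latency. The dictionary keys and function names are illustrative choices, not part of any benchmark tool; only the two tokens-per-second figures come from the table above.

```python
# Measured generation speeds from the table above (tokens per second).
SPEEDS = {"Q4_K_M": 133.38, "F16": 53.18}


def speedup(fast: float, slow: float) -> float:
    """How many times faster `fast` is than `slow`."""
    return fast / slow


def ms_per_token(tokens_per_second: float) -> float:
    """Average time spent on one generated token, in milliseconds."""
    return 1000.0 / tokens_per_second


if __name__ == "__main__":
    ratio = speedup(SPEEDS["Q4_K_M"], SPEEDS["F16"])
    print(f"Q4_K_M is {ratio:.2f}x faster than F16")
    for name, tps in SPEEDS.items():
        print(f"{name}: {ms_per_token(tps):.1f} ms per token")
```

In per-token terms, that is roughly 7.5 ms with Q4_K_M against 18.8 ms with F16, a difference that adds up quickly over long responses.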

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B: A Tale of Two Sizes

Looking at Llama3 8B alone doesn't tell the whole story. Let's expand our view by comparing it with its larger sibling, Llama3 70B, on the same A100 SXM 80GB:

| Model and Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B, 4-bit quantized (Q4_K_M) | 133.38 |
| Llama3 70B, 4-bit quantized (Q4_K_M) | 24.33 |

Key Observations:

- Size Matters: As expected, Llama3 70B is significantly slower at token generation than Llama3 8B: 24.33 versus 133.38 tokens per second, roughly 5.5x slower at the same Q4_K_M quantization.

- Capability Costs Speed: Llama3 70B offers a wider knowledge base and more complex reasoning capabilities, but its larger size comes at a performance cost.

This comparison emphasizes that choosing the right model size is crucial for your particular application. If you need lightning-fast text generation, Llama3 8B might be the way to go. However, if you require sophisticated reasoning or a wider range of knowledge, Llama3 70B might be the better option even if it's slower, especially if you can offset the gap with more powerful hardware and a more efficient inference stack.
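One way to turn this trade-off into a decision is to check which models can finish a typical response within your latency budget. The sketch below is only an illustration: the function names and the example budget are assumptions, and it counts generation time alone, ignoring prompt processing and any network overhead. The speeds are the two Q4_K_M figures from the table.

```python
# Generation speeds from the comparison table (tokens per second).
BENCHMARKS = {
    "Llama3 8B Q4_K_M": 133.38,
    "Llama3 70B Q4_K_M": 24.33,
}


def response_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` output tokens at a steady speed."""
    return tokens / tokens_per_second


def models_within_budget(tokens: int, budget_seconds: float,
                         benchmarks: dict = BENCHMARKS) -> list:
    """Names of the models that generate `tokens` within the budget."""
    return [
        name
        for name, tps in benchmarks.items()
        if response_seconds(tokens, tps) <= budget_seconds
    ]


if __name__ == "__main__":
    # Hypothetical requirement: a 300-token answer in at most 5 seconds.
    print(models_within_budget(tokens=300, budget_seconds=5.0))
```

With these numbers, only Llama3 8B meets the 5-second example budget for a 300-token answer; relaxing the budget to 15 seconds admits Llama3 70B as well.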

Practical Recommendations: Use Cases and Workarounds

Unleashing the Power of Llama3 8B and A100: 7 Essential Tips

Now that you've got the numbers, let's dive into the practical tips for maximizing the performance of your Llama3 8B setup on the A100 SXM 80GB:

Quantization is Key: We've already touched on this, but it's worth repeating: use 4-bit quantization (Q4_K_M) for Llama3 8B on the A100 SXM 80GB. In the benchmarks above it raised generation speed from 53.18 to 133.38 tokens per second.

Hardware Optimization: Ensure your A100 SXM 80GB is running the latest drivers and software updates. A well-configured system can make a significant difference in performance.

Model Optimization: Explore techniques like model pruning and knowledge distillation to optimize Llama3 8B for your specific use case. These methods can reduce the model size without sacrificing too much accuracy.

GPU Memory Management: Monitor your GPU memory usage closely. The A100 SXM 80GB offers a generous 80GB of memory, but long contexts and many concurrent requests can still fill it, so it's important to manage it effectively.

Use Case Optimization: Not all applications require the same level of accuracy. If you're building a chatbot for casual conversations, a slight drop in accuracy due to 4-bit quantization might be acceptable in exchange for faster response times.

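Batching and Parallelism: If you're dealing with a large number of requests, consider batching and parallelism to improve throughput. Processing several prompts in one pass raises total tokens per second, though each individual request may wait slightly longer.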

Experiment and Iterate: Don't be afraid to experiment with different configurations and settings. The best approach often involves fine-tuning based on your specific needs and hardware setup. This is where the "geekiness" comes in!
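The batching and experimentation tips above can be combined into a small measuring harness. This is a minimal sketch, not a real inference client: `generate_batch` stands for whatever call your backend exposes and is assumed to return the number of tokens generated for each prompt, and `fake_generate_batch` is a made-up stand-in so the example runs without a GPU.

```python
import time
from typing import Callable, List, Sequence


def chunk(items: Sequence[str], size: int) -> List[List[str]]:
    """Split items into consecutive batches of at most `size` elements."""
    if size < 1:
        raise ValueError("size must be at least 1")
    return [list(items[i:i + size]) for i in range(0, len(items), size)]


def measure_throughput(generate_batch: Callable[[List[str]], List[int]],
                       prompts: Sequence[str], batch_size: int) -> float:
    """Return generated tokens per second across all batches."""
    total_tokens = 0
    start = time.perf_counter()
    for batch in chunk(prompts, batch_size):
        total_tokens += sum(generate_batch(batch))
    elapsed = time.perf_counter() - start
    return total_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate_batch(batch: List[str]) -> List[int]:
    """Hypothetical backend: pretends every prompt yields 32 tokens."""
    time.sleep(0.001)  # simulate a fixed cost per batch
    return [32] * len(batch)


if __name__ == "__main__":
    prompts = ["example prompt"] * 16
    for size in (1, 4, 16):
        tps = measure_throughput(fake_generate_batch, prompts, size)
        print(f"batch size {size}: {tps:.0f} tokens/second")
```

Swapping `fake_generate_batch` for a real backend call lets you compare batch sizes, quantization levels, or driver versions with the same yardstick the benchmarks use: tokens per second.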

Real-world Use Cases: Llama3 8B and A100 SXM 80GB in Action

Combining Llama3 8B and an A100 SXM 80GB unlocks a world of possibilities:

- Chatbots and Conversational AI: Build engaging chatbots that can understand and respond to complex questions, offering a more natural and personalized conversational experience.

- Text Generation and Summarization: Generate high-quality creative content, summarize lengthy documents, and create compelling marketing copy, all at a rapid pace.

- Code Completion and Assistance: Boost your productivity by leveraging Llama3 8B for code completion, function generation, and debugging assistance.

- Personalized Recommendations: Develop intelligent systems that provide tailored recommendations based on user preferences and past interactions.

The possibilities are endless. With the right tools and understanding, you can unlock the true potential of Llama3 8B and the A100 SXM 80GB.

FAQ: Unraveling the Mysteries of LLMs and Devices

What is Quantization?

Think of quantization as compressing data into a more manageable size. It's like taking a high-resolution photo and reducing its file size to save space. In this case, we compress the model weights, reducing the number of bits needed to represent them. 4-bit quantization uses only 4 bits to represent each weight value, resulting in a smaller model that can be stored and processed more quickly.
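To see the idea in miniature, the sketch below quantizes a handful of weights to 4-bit integers and restores them. It uses a simple symmetric scheme with one shared scale per group, chosen here for illustration; it is not the Q4_K_M format, which is more elaborate. The function names and sample weights are made up for the example.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-8, 7] using one shared scale."""
    # Scale so the largest magnitude lands on 7; fall back to 1.0 for all zeros.
    scale = max(abs(w) for w in weights) / 7 or 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.82, -0.41, 0.03, -0.9, 0.55]
    codes, scale = quantize_4bit(weights)
    restored = dequantize(codes, scale)
    for w, c, r in zip(weights, codes, restored):
        print(f"{w:+.3f} -> code {c:+d} -> {r:+.3f}")
```

Each restored value differs from the original by at most half of the scale, which is the small accuracy loss that buys the smaller, faster model.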

What Are the Trade-offs of Quantization?

The trade-off is a bit like sacrificing image quality for file size. While 4-bit quantization leads to a smaller and faster model, it may result in slightly reduced accuracy.

Why is the A100 SXM 80GB So Powerful?

The A100 SXM 80GB is a data-center graphics processing unit (GPU) designed for AI workloads like running LLMs. It has 80GB of high-bandwidth memory, allowing it to hold large models and their working state entirely on the GPU. Additionally, its Tensor Cores accelerate matrix multiplications, which are fundamental operations in LLMs.
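A back-of-the-envelope calculation shows how much of that 80GB a model actually needs. The sketch below rests on assumptions not stated in this article: a nominal 8 billion parameters, a flat bit width per weight (real Q4_K_M files use somewhat more than 4 bits per weight on average), and the commonly published Llama3 8B layout of 32 layers, 8 key/value heads, and a head dimension of 128 with a 16-bit cache. Treat the results as rough estimates.

```python
GB = 1e9  # decimal gigabytes, matching the "80GB" figure


def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Memory needed to store the model weights alone."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_tokens: int, n_layers: int = 32, n_kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """Memory for cached keys and values across all layers.

    The defaults are assumed Llama3 8B values; the factor 2 covers
    one key vector and one value vector per token, head, and layer.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens


if __name__ == "__main__":
    n_params = 8e9  # nominal parameter count (assumption)
    for label, bits in (("F16", 16), ("4-bit (nominal)", 4)):
        print(f"{label} weights: {weight_bytes(n_params, bits) / GB:.1f} GB")
    cache = kv_cache_bytes(n_tokens=8192)
    print(f"KV cache for 8192 tokens: {cache / GB:.2f} GB")
```

Under these assumptions the F16 weights take about 16 GB and the cache for a single 8192-token sequence about 1 GB, which leaves most of the card free for additional concurrent sequences.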

Can I Run Llama3 8B on a Regular CPU?

You could run Llama3 8B on a CPU, especially if you use a powerful one with multiple cores, but don't expect lightning-fast performance. CPUs are not optimized for the heavy computations required by LLMs, which is why GPUs are the preferred choice.

What About Other LLMs?

This article focuses on Llama3 8B on an A100, so we don't have data on other LLMs. But you can find performance benchmarks online for other models. It's all about finding the best fit for your use case!

Keywords:

Llama3 8B, Llama3 70B, NVIDIA A100 SXM 80GB, token generation speed, quantization, Q4_K_M, F16, performance benchmarks, local LLMs, AI, machine learning, deep learning, GPU, CPU, use cases, chatbots, text generation, code completion, recommendations, conversational AI, hardware optimization, model optimization, GPU memory management, batching, parallelism, experimentation.