7 Tips to Maximize Llama3 70B Performance on NVIDIA A40 48GB

Introduction

Welcome, fellow AI enthusiasts! As large language models (LLMs) continue to evolve at breakneck speed, we're diving into the exciting world of local LLM deployment. Today, we're focusing on squeezing every ounce of performance out of the powerful Llama3 70B model running on the NVIDIA A40 48GB GPU.

This guide will unveil the secrets to maximizing Llama3 70B's capabilities on this beast of a hardware platform. Whether you're a seasoned developer or a curious explorer, this deep dive will equip you with the knowledge to unlock the full potential of LLMs right on your own machine.

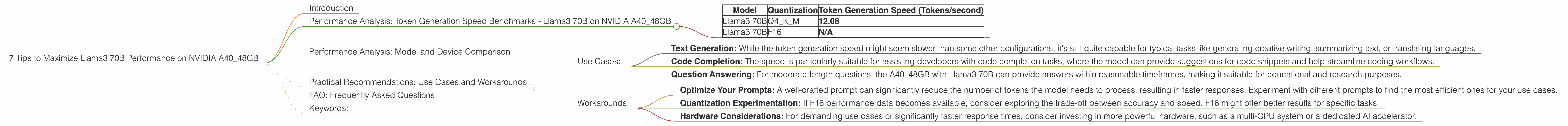

Performance Analysis: Token Generation Speed Benchmarks - Llama3 70B on NVIDIA A40 48GB

Let's get to the heart of the matter: how fast can we expect Llama3 70B to generate text on our A40? Here's a breakdown of the key metrics:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 70B | Q4_K_M | 12.08 |
| Llama3 70B | F16 | N/A |

Understanding the Numbers:

- Q4_K_M: This is a 4-bit "K-quant" format from llama.cpp; the "M" marks the medium variant. Quantization stores the weights with fewer bits, so the model takes less memory and less data has to be read per generated token, which is where the speedup comes from.

- F16: This is the 16-bit floating-point format used to represent the model's weights. It is not a quantized format. F16 preserves more precision than Q4_K_M, but it needs several times more memory and is slower as a result.
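
To make the size difference concrete, here is a rough back-of-the-envelope sketch. It estimates weight memory as parameters times bits per weight. The 70e9 parameter count is the nominal "70B" figure, and the 4.85 bits per weight for the 4-bit format is an assumed average, not a measured value; check the size of your actual model file.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 70e9     # nominal parameter count for a "70B" model
A40_VRAM_GB = 48    # from the GPU name

# Assumption: ~4.85 bits per weight on average for the 4-bit format.
# The real figure depends on the quantization mix of the file you use.
formats = {"F16": 16.0, "4-bit (assumed 4.85 bpw)": 4.85}

for name, bits in formats.items():
    size = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if size < A40_VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {A40_VRAM_GB} GB")
```

This counts weights only. The KV cache and runtime buffers need additional memory, so the real headroom is smaller than the estimate suggests.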

Key Takeaways:

- Q4_K_M delivers the goods: With Q4_K_M quantization, the A40 48GB can churn out a respectable 12.08 tokens per second with the Llama3 70B model.

- F16: Missing in action: Unfortunately, we don't have data for F16. The most likely reason is memory: at 2 bytes per parameter, a 70B model needs roughly 140 GB for the weights alone, far more than the A40's 48 GB.
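
To get a feel for what 12.08 tokens per second means in practice, a small sketch like the following converts a response length into waiting time. The response lengths are illustrative values, not measurements, and the estimate ignores the time spent processing the prompt.

```python
TOKENS_PER_SECOND = 12.08  # generation speed from the benchmark table


def generation_seconds(n_tokens: int, tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Time to generate n_tokens at a constant speed, excluding prompt processing."""
    return n_tokens / tokens_per_second


# Illustrative response lengths (hypothetical, pick ones that match your use case)
for n in (50, 250, 1000):
    print(f"{n:>5} tokens: ~{generation_seconds(n):.1f} s")
```

At this speed a 1000-token answer takes well over a minute, which is fine for batch work but noticeable in an interactive chat.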

Performance Analysis: Model and Device Comparison

The A40 48GB is a powerhouse GPU, designed to handle demanding tasks like LLM inference. Comparing it with other models and devices would help put the numbers above in context.

However, this deep dive covers the A40 48GB only. We don't have data for other devices, so comparisons beyond this specific setup are not possible here.

Practical Recommendations: Use Cases and Workarounds

Now let's translate these numbers into real-world use cases and explore strategies to maximize Llama3 70B's potential on your A40.

Use Cases:

- Text Generation: While the token generation speed might seem slower than some other configurations, it's still quite capable for typical tasks like generating creative writing, summarizing text, or translating languages.

- Code Completion: The speed is particularly suitable for assisting developers with code completion tasks, where the model can provide suggestions for code snippets and help streamline coding workflows.

- Question Answering: For moderate-length questions, the A40 48GB with Llama3 70B can provide answers within reasonable timeframes, making it suitable for educational and research purposes.

Workarounds:

- Optimize Your Prompts: A well-crafted prompt can significantly reduce the number of tokens the model needs to process, resulting in faster responses. Experiment with different prompts to find the most efficient ones for your use cases.

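- Quantization Experimentation: Quantization level is a trade-off between accuracy, speed, and memory. F16 is out of reach for a 70B model on a single 48 GB card, but if benchmark data for other formats becomes available, compare them against Q4_K_M on your own tasks before committing to one.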

- Hardware Considerations: For demanding use cases or significantly faster response times, consider investing in more powerful hardware, such as a multi-GPU system or a dedicated AI accelerator.
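
Before and after trying any of these workarounds, it helps to measure generation speed on your own setup rather than rely on a single published number. Below is a minimal timing sketch. The `generate` callable is a hypothetical wrapper around whatever inference runtime you use, and `fake_generate` is only a stand-in so the example runs without a model.

```python
import time
from typing import Callable


def measure_tokens_per_second(generate: Callable[[str], int], prompt: str, runs: int = 3) -> float:
    """Average generation speed over several runs.

    `generate` is a hypothetical callable: it takes a prompt and returns
    the number of tokens it produced.
    """
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        total_tokens += generate(prompt)
        total_seconds += time.perf_counter() - start
    return total_tokens / total_seconds


def fake_generate(prompt: str) -> int:
    # Stand-in for a real model call: pretends to emit 20 tokens in about 0.05 s
    time.sleep(0.05)
    return 20


speed = measure_tokens_per_second(fake_generate, "Summarize this paragraph.", runs=2)
print(f"~{speed:.0f} tokens/second")
```

Swap `fake_generate` for a function that calls your model, and keep the prompt and output length fixed between runs so the comparison is fair.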

FAQ: Frequently Asked Questions

Here are some common questions about LLMs and devices:

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model's weights by representing them with fewer bits. This allows for faster processing and less memory consumption. Imagine compressing a high-res image. Quantization is similar, reducing the "resolution" of the model's weights.
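
As a toy illustration of the idea, the sketch below maps a handful of floating-point weights to 4-bit integers with a single shared scale, then restores them. This is a deliberately simplified scheme and an assumption for illustration only; production formats such as the one benchmarked in this article are more elaborate.

```python
def quantize_4bit(weights):
    """Symmetric 4-bit quantization: integers in [-7, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the stored integers back to approximate floating-point weights."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -0.05, 0.33, -0.85]  # made-up example values
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", quantized)
print("restored: ", [round(r, 2) for r in restored])
print("max error:", round(max_error, 3))
```

Each weight now needs 4 bits instead of 16 or 32, at the cost of a rounding error bounded by half the scale.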

Q: What is the difference between Q4_K_M and F16?

A: Q4_K_M is a 4-bit quantized format, while F16 stores every weight as a 16-bit floating-point number and is not quantized at all. Q4_K_M is smaller and faster but less precise; F16 keeps full precision at the cost of speed and several times the memory.

Q: How do I choose the right LLM for my use case?

A: There's no one-size-fits-all answer. It depends on your specific requirements. Consider factors like model size, speed, accuracy, and available resources. Start by exploring the latest models and experiment to find the perfect fit.

Q: Can I run LLMs on my personal computer?

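A: It is possible! But models like Llama3 70B are demanding: even at 4-bit quantization the weights take roughly 40 GB, so running entirely on one GPU calls for a 48 GB card like the A40. With less VRAM, part of the model has to be offloaded to system RAM, which is much slower. There are smaller LLMs like Llama2 7B that you can run on a gaming PC with a good GPU.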

Q: Will LLMs eventually replace developers?

A: It's unlikely! LLMs are powerful tools, but they're meant to assist developers, not replace them. They can automate repetitive tasks and help with code generation, but they still require human ingenuity and expertise to design, build, and deploy complex systems.

Keywords:

Llama3, 70B, NVIDIA A40 48GB, GPU, LLM, performance, token generation, quantization, Q4_K_M, F16, use cases, workarounds, prompt optimization, hardware, AI, deep learning, development