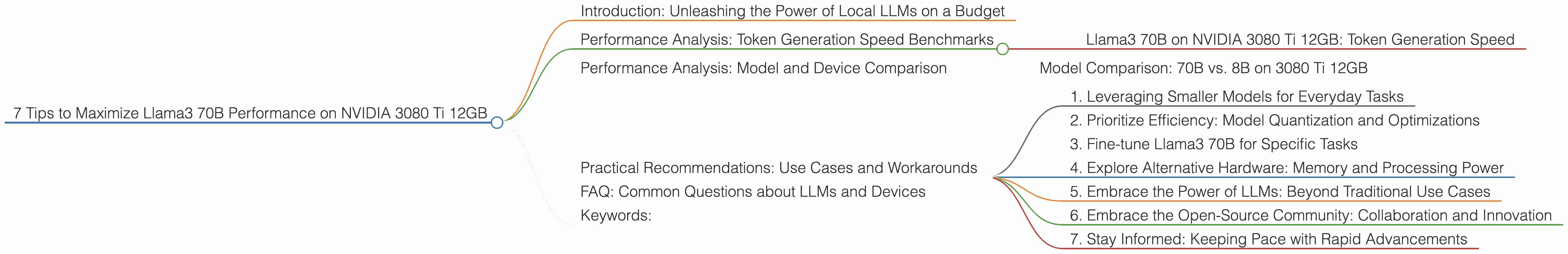

7 Tips to Maximize Llama3 70B Performance on NVIDIA 3080 Ti 12GB

Introduction: Unleashing the Power of Local LLMs on a Budget

The world of large language models (LLMs) has exploded, offering incredible capabilities for everything from writing creative content to generating code. But harnessing these powerful tools often requires hefty cloud computing resources. This can be a challenge for individual developers or smaller teams with limited budgets. The good news? You can achieve impressive results with local LLMs on readily available hardware like the NVIDIA 3080 Ti 12GB, the GPU powerhouse of choice for many enthusiasts.

This article dives deep into the performance of Llama3 70B, one of the most impressive LLMs to date, on the NVIDIA 3080 Ti 12GB. We'll look at the available token generation benchmarks, compare Llama3 70B with its smaller sibling Llama3 8B, and offer practical recommendations for getting the most out of your hardware. Buckle up, it's going to be a wild ride!

Performance Analysis: Token Generation Speed Benchmarks

Llama3 70B on NVIDIA 3080 Ti 12GB: Token Generation Speed

The available benchmark figure of 106.71 tokens per second on the NVIDIA 3080 Ti 12GB comes from Llama3 8B in the Q4_K_M quantized format; no token generation data is available for Llama3 70B on this card. That gap is not surprising: even at Q4_K_M, the 70B model's weights need roughly 40 GB, more than three times the card's 12 GB of VRAM, so most of the model would have to be offloaded to system memory.

To put the 8B figure into perspective, a 10-word sentence is roughly 13 tokens, so at 106.71 tokens per second Llama3 8B on the 3080 Ti generates it in about 0.12 seconds, far faster than anyone can read.
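The conversion from a tokens-per-second benchmark to wall-clock time is simple division. The sketch below makes it explicit. The 1.3 tokens-per-word ratio is an assumption (a common rule of thumb for English text), and the helper name `generation_seconds` is hypothetical; real ratios depend on the tokenizer and the text, and prompt processing time is not included.

```python
# Rough generation-time estimate from a measured tokens/s figure.
# ASSUMPTION: ~1.3 tokens per English word (rule of thumb only; the
# real ratio depends on the tokenizer and the text being generated).
TOKENS_PER_WORD = 1.3


def generation_seconds(words: int, tokens_per_second: float,
                       tokens_per_word: float = TOKENS_PER_WORD) -> float:
    """Approximate time to generate `words` words at a given speed.

    Only covers token generation; prompt processing is ignored.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return words * tokens_per_word / tokens_per_second


if __name__ == "__main__":
    # 106.71 tokens/s is the 3080 Ti 12GB figure quoted in this article.
    for words in (10, 100, 500):
        seconds = generation_seconds(words, 106.71)
        print(f"{words:>4} words -> {seconds:.2f} s")
```

Because the estimate is linear, doubling the output length doubles the generation time; a longer answer of a few hundred words still finishes in a few seconds at this speed.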

No benchmark data is available for Llama3 70B in F16 format on the 3080 Ti 12GB either. Keep in mind that F16 is not an aggressive quantization: it stores each weight as a 16-bit half-precision float, which preserves more accuracy than Q4_K_M but needs more than three times as much memory. At that size, Llama3 70B cannot fit in the card's 12 GB of VRAM, so its speed in this format would depend heavily on how much of the model is offloaded to system memory. Benchmarks are needed to quantify the impact on the NVIDIA 3080 Ti 12GB.
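As a rough worked example, counting weights only and rounding the parameter count to 70 billion, F16 stores 2 bytes per weight, so Llama3 70B needs:

```latex
\[
M_{\text{F16}} \approx 70 \times 10^{9}\ \text{weights} \times 2\ \tfrac{\text{bytes}}{\text{weight}}
= 1.4 \times 10^{11}\ \text{bytes} \approx 140\ \text{GB} \gg 12\ \text{GB}
\]
```

The KV cache and runtime buffers come on top of this, so the real requirement is higher still.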

Performance Analysis: Model and Device Comparison

Model Comparison: 70B vs. 8B on 3080 Ti 12GB

Let's compare Llama3 70B with its smaller sibling, Llama3 8B, both in the Q4_K_M format. While we have no data for Llama3 70B, we do have data for Llama3 8B on the 3080 Ti 12GB, which generates 106.71 tokens per second. Without a 70B measurement, we can't conclude that the two models reach comparable speeds on this hardware; because the 70B model does not fit in 12 GB of VRAM, it would almost certainly be considerably slower.

However, it's crucial to recognize the trade-offs between model size and performance. While Llama3 8B might be faster, Llama3 70B likely brings a significant advantage in terms of comprehension, complexity, and nuanced responses. The choice between models depends heavily on your specific use case and the desired level of performance.

Practical Recommendations: Use Cases and Workarounds

1. Leveraging Smaller Models for Everyday Tasks

If speed is your primary concern, consider using the smaller Llama3 8B for everyday tasks like generating summaries, translating text, or answering simple questions. You'll experience faster response times without sacrificing much functionality.

2. Prioritize Efficiency: Model Quantization and Optimizations

While there is no F16 benchmark for Llama3 70B, quantization is generally worth considering. Reducing the precision of the model's weights (for example, from 16-bit F16 to roughly 4-bit Q4_K_M) shrinks the model's memory footprint, which can let more of the model, or all of it, fit in VRAM and so improve performance. This is especially important for devices with limited memory, such as a 12 GB card.
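A quick way to see what quantization buys you is to estimate weight memory from the parameter count and the bits stored per weight. The sketch below does that under stated assumptions: parameter counts are rounded to 8B and 70B, Q4_K_M is treated as about 4.85 bits per weight on average (the exact figure varies by model), and the 1.5 GB `headroom_gb` allowance for the KV cache and buffers is a hypothetical placeholder, not a measured value. The function names are illustrative.

```python
"""Back-of-the-envelope weight-memory estimate for quantized LLMs.

ASSUMPTIONS (not measured values):
  * Parameter counts are rounded nominal sizes (8B, 70B).
  * Q4_K_M is treated as ~4.85 bits per weight on average; the real
    average varies by model because Q4_K_M mixes block types.
  * Only weights are counted; KV cache and buffers need extra memory.
"""

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # assumed average, see note above
}

GB = 1e9  # decimal gigabytes


def weight_gb(params_billion: float, fmt: str) -> float:
    """Approximate size of the model weights in GB for a given format."""
    bits = BITS_PER_WEIGHT[fmt]
    return params_billion * 1e9 * bits / 8 / GB


def fits_in_vram(params_billion: float, fmt: str, vram_gb: float,
                 headroom_gb: float = 1.5) -> bool:
    """True if the weights plus a hypothetical headroom fit in VRAM."""
    return weight_gb(params_billion, fmt) + headroom_gb <= vram_gb


if __name__ == "__main__":
    for params in (8, 70):
        for fmt in ("F16", "Q4_K_M"):
            size = weight_gb(params, fmt)
            ok = fits_in_vram(params, fmt, vram_gb=12)
            print(f"Llama3 {params}B {fmt:>6}: ~{size:6.1f} GB weights, "
                  f"fits in 12 GB: {ok}")
```

Under these assumptions, Llama3 8B at Q4_K_M (about 4.9 GB of weights) fits comfortably on a 12 GB card, while Llama3 70B needs roughly 42 GB even at Q4_K_M, which is why it cannot run entirely on the GPU.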

3. Fine-tune Llama3 70B for Specific Tasks

Fine-tuning Llama3 70B on your specific datasets can significantly improve its performance for dedicated tasks. This can involve training the model on a custom corpus related to your application, leading to enhanced accuracy and better results.

4. Explore Alternative Hardware: Memory and Processing Power

While the NVIDIA 3080 Ti 12GB is a robust choice, GPUs with more memory can offer much better performance for larger LLMs like Llama3 70B. Data-center cards such as the NVIDIA A100 or H100, available with up to 80 GB of memory, can hold a 4-bit quantized 70B model entirely in VRAM, which is worth exploring if you need very high speeds.

5. Embrace the Power of LLMs: Beyond Traditional Use Cases

The real magic of LLMs lies in their ability to go beyond traditional tasks. Explore creative applications such as generating innovative ideas, writing scripts, composing music, or constructing complex structures. LLMs can be your creative partner, pushing the boundaries of what's possible.

6. Embrace the Open-Source Community: Collaboration and Innovation

The world of LLMs is constantly evolving. Joining open-source communities like Hugging Face, and following research groups such as Google AI, can expose you to the latest research, tools, and breakthroughs. Collaborating with others can bring fresh perspectives and insights, accelerating your progress.

7. Stay Informed: Keeping Pace with Rapid Advancements

The field of language models is rapidly evolving, with new models and advancements emerging regularly. Stay informed by reading technical blogs, subscribing to industry newsletters, and attending conferences and workshops. Continuous learning is crucial to stay at the forefront of this exciting field.

FAQ: Common Questions about LLMs and Devices

1. Q: What is quantization and why is it important?

Quantization is like making the model's brain smaller and more efficient. It's a technique that reduces the size of the model by using fewer bits to represent the model's weights. This results in a smaller model file, which loads faster and consumes less memory. Quantization trades some accuracy for lower memory use and, often, faster inference; for many tasks the accuracy loss is small enough that the trade is worth it.
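To make "using fewer bits" concrete, here is a toy 4-bit quantizer. It is a simplified symmetric "absmax" scheme with one scale per block of weights, chosen for illustration; it is NOT the Q4_K_M format, which adds per-sub-block scales and minimums and mixes in higher-precision blocks. The block size of 32, the -7..7 code range, and the function names are assumptions made for this sketch.

```python
"""Toy 4-bit weight quantization (illustration only, not Q4_K_M)."""
import random

BLOCK_SIZE = 32  # weights that share one scale (illustrative choice)
QMAX = 7         # signed 4-bit codes restricted to -7..7 here


def quantize_block(block):
    """Map floats to small integers plus one float scale for the block."""
    scale = max(abs(w) for w in block) / QMAX
    if scale == 0:
        return 0.0, [0] * len(block)
    codes = [max(-QMAX, min(QMAX, round(w / scale))) for w in block]
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate floats from the integer codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, codes = quantize_block(weights)
    approx = dequantize_block(scale, codes)
    max_err = max(abs(a - b) for a, b in zip(weights, approx))
    # Storage if the scale were kept as a 16-bit float:
    # 32 four-bit codes + one 16-bit scale, versus 32 sixteen-bit floats.
    bits_q4 = BLOCK_SIZE * 4 + 16
    bits_f16 = BLOCK_SIZE * 16
    print(f"max abs error: {max_err:.5f} (scale = {scale:.5f})")
    print(f"bits per weight: {bits_q4 / BLOCK_SIZE:.2f} vs "
          f"{bits_f16 / BLOCK_SIZE:.0f}")
```

Each weight is rounded to the nearest multiple of the block's scale, so the reconstruction error per weight is at most half a scale step. Storing a shared scale per block is what pushes the cost slightly above 4 bits per weight.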

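2. Q: What are the differences between the F16 and Q4_K_M formats?

F16 stores each weight as a 16-bit half-precision float, which is essentially the unquantized baseline for inference. Q4_K_M stores most weights as 4-bit integers grouped into blocks with shared scales, so once the scales and a few higher-precision tensors are counted it averages a little over 4.5 bits per weight. F16 therefore preserves more precision but produces a file more than three times larger; Q4_K_M shrinks the model dramatically, at some cost in accuracy.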
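The "a little over 4.5 bits" figure follows from the block layout. In llama.cpp's Q4_K format, which Q4_K_M uses for most tensors, each super-block of 256 weights stores 128 bytes of 4-bit codes, 12 bytes of packed 6-bit sub-block scales and minimums, and two 16-bit super-block factors:

```latex
\[
\frac{(128 + 12 + 2 \times 2)\ \text{bytes} \times 8\ \tfrac{\text{bits}}{\text{byte}}}{256\ \text{weights}}
= \frac{1152}{256} = 4.5\ \text{bits per weight}
\]
```

The "M" variant keeps some tensors in the higher-precision Q6_K format, which raises the file-wide average somewhat above 4.5 bits, while F16 stays at exactly 16 bits per weight.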

3. Q: What are the best practices for optimizing LLM performance?

Besides quantization, optimizing LLM performance involves several strategies. These include:
- Efficient batching: processing multiple inputs together can significantly improve throughput.
- Memory management: techniques like caching and memory mapping can minimize memory overhead.
- Hardware optimization: choosing the right GPU and making full use of it through GPU-accelerated libraries.

4. Q: How can I choose the right LLM for my specific use case?

The choice of the right LLM depends on your needs. Consider factors like:
- Model size: Larger models generally perform better on complex tasks but require more resources.
- Task-specific training: Fine-tuning a model for a specific task can significantly improve performance.
- Accuracy vs. speed: Quantization can reduce accuracy but increase speed.

5. Q: What are the future directions in LLM research?

Future research in LLMs focuses on:
- Improving efficiency: Developing more efficient models that require fewer resources.
- Enhanced accuracy: Reducing biases and errors while enhancing accuracy and reliability.
- Multimodality: Integrating LLMs with other modalities like vision and audio.

Keywords:

Llama3 70B, NVIDIA 3080 Ti 12GB, Token Generation Speed, LLM Performance, Model Quantization, Q4_K_M, F16, GPU, Benchmark, Hugging Face, Google AI, Open-Source, Local LLMs, Performance Optimization, Use Cases, Practical Recommendations, Fine-tuning, Model Comparison, LLMs, Large Language Models, Deep Dive, Hardware, Software, AI, Machine Learning, Natural Language Processing.