7 Tips to Maximize Llama3 70B Performance on Apple M2 Ultra

Introduction

The world of large language models (LLMs) is evolving at a breakneck pace, with new models and architectures appearing almost daily. While cloud-based LLMs dominate the headlines, local LLMs are gaining traction, offering users greater control, privacy, and the ability to fine-tune models for specific tasks. One of the leading local LLM frameworks is llama.cpp, which lets you run powerful LLMs like Llama 2 and Llama 3 directly on your own hardware.

This guide focuses on maximizing the performance of Llama3 70B on the Apple M2 Ultra, a powerful chip designed for demanding tasks like machine learning and AI. It sheds light on the key factors impacting performance, reveals surprising insights from benchmarking data, and walks you through practical techniques to achieve a smooth, efficient workflow. Let's dive in!

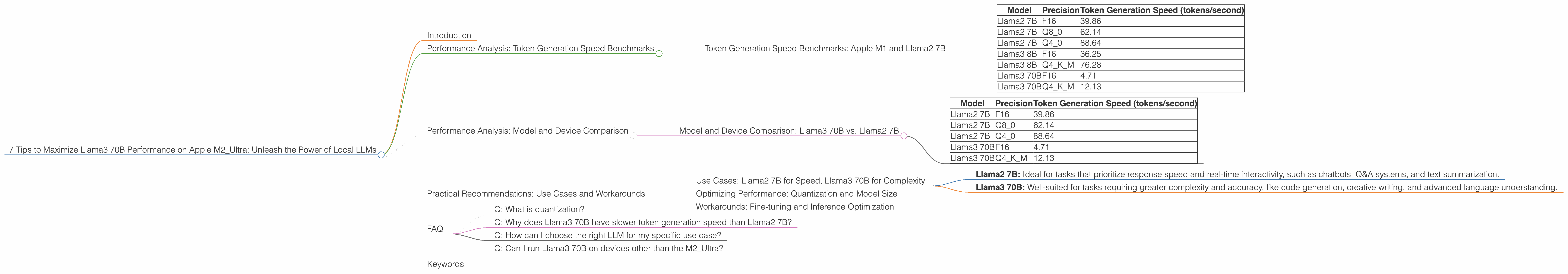

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Apple M2 Ultra with Llama2 and Llama3

Token generation speed is a critical metric for LLMs, measuring how fast a model can produce text. In the context of local LLMs, higher token generation speeds translate into a faster response time and a more interactive experience.
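To make the metric concrete, here is a minimal sketch of how tokens per second can be measured. The `fake_model` generator is a hypothetical stand-in for a real model's token stream, not part of llama.cpp; in practice you would pass in whatever streaming call your inference library provides.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return tokens generated per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_model(n_tokens: int = 50, delay_s: float = 0.002):
    """Hypothetical stand-in for a model: yields one token per delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(lambda: fake_model())
print(f"{rate:.1f} tokens/second")
```

Timing the whole stream like this folds prompt processing and generation together, so a real benchmark would usually time the two phases separately.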

The benchmark data below shows that LLM performance on the Apple M2 Ultra depends significantly on the specific model and the chosen quantization level.

| Model | Precision | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama2 7B | F16 | 39.86 |
| Llama2 7B | Q8_0 | 62.14 |
| Llama2 7B | Q4_0 | 88.64 |
| Llama3 8B | F16 | 36.25 |
| Llama3 8B | Q4_K_M | 76.28 |
| Llama3 70B | F16 | 4.71 |
| Llama3 70B | Q4_K_M | 12.13 |

Table 1: Performance of Various LLM Models on M2 Ultra

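This table shows the token generation speed of several Llama2 and Llama3 models running on an M2 Ultra chip. Llama2 7B generates tokens far faster than Llama3 70B: at 4-bit quantization, Llama2 7B (Q4_0) reaches 88.64 tokens/second while Llama3 70B (Q4_K_M) reaches 12.13, a gap of roughly 7x. The pattern also holds at the same quantization level within the Llama3 family, where the 8B model at Q4_K_M (76.28 tokens/second) is about 6x faster than the 70B model.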
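The gains from quantization in Table 1 are easier to compare as ratios. The short sketch below copies the table values into a dictionary and computes each quantized configuration's speedup over the same model at F16; the dictionary layout and function name are just illustrative choices.

```python
# Token generation speeds (tokens/second) copied from Table 1.
SPEEDS = {
    ("Llama2 7B", "F16"): 39.86,
    ("Llama2 7B", "Q8_0"): 62.14,
    ("Llama2 7B", "Q4_0"): 88.64,
    ("Llama3 8B", "F16"): 36.25,
    ("Llama3 8B", "Q4_K_M"): 76.28,
    ("Llama3 70B", "F16"): 4.71,
    ("Llama3 70B", "Q4_K_M"): 12.13,
}


def speedup_over_f16(model: str, precision: str) -> float:
    """Ratio of a configuration's speed to the same model's F16 speed."""
    return SPEEDS[(model, precision)] / SPEEDS[(model, "F16")]


for (model, precision), _speed in SPEEDS.items():
    if precision != "F16":
        ratio = speedup_over_f16(model, precision)
        print(f"{model} {precision}: {ratio:.2f}x over F16")
```

In this data, the largest model benefits the most from 4-bit quantization in relative terms, even though its absolute speed remains the lowest.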

Why the difference? Llama3 70B is a much larger model than Llama2 7B, packing a whopping 70 billion parameters compared to 7 billion parameters for Llama2 7B. This vast size comes with increased complexity, demanding more computational resources and memory for inference.

Think of it like this: Reading a 700-page book cover to cover (Llama3 70B) takes far longer than reading a 70-page booklet (Llama2 7B), even at the same reading speed. For every token it generates, the model has to work through all of its weights.
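One rough way to reason about the size effect: if generating each token requires reading all of the weights from memory once, then memory bandwidth divided by model size gives a ceiling on single-stream speed. The sketch below is a back-of-the-envelope estimate under stated assumptions, not a performance model: it uses Apple's published 800 GB/s peak bandwidth for the M2 Ultra, nominal parameter counts (7 and 70 billion), 16 bits per weight for F16, and an approximate 4.85 bits per weight for Q4_K_M.

```python
M2_ULTRA_BANDWIDTH_GBPS = 800.0  # assumption: Apple's published peak figure


def model_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8.0


def ceiling_tokens_per_second(
    params_billions: float,
    bits_per_weight: float,
    bandwidth_gbps: float = M2_ULTRA_BANDWIDTH_GBPS,
) -> float:
    """Upper bound if every token needs one full pass over the weights."""
    return bandwidth_gbps / model_size_gb(params_billions, bits_per_weight)


# (label, nominal parameters in billions, assumed bits per weight, measured tokens/s)
CASES = [
    ("Llama2 7B F16", 7, 16.0, 39.86),
    ("Llama3 70B F16", 70, 16.0, 4.71),
    ("Llama3 70B Q4_K_M", 70, 4.85, 12.13),  # 4.85 is an approximation
]

for label, params, bits, measured in CASES:
    ceiling = ceiling_tokens_per_second(params, bits)
    print(f"{label}: ceiling {ceiling:.1f} tok/s, measured {measured} tok/s")
```

The measured speeds sit below these ceilings, which is what you would expect from an upper bound that ignores compute, cache behavior, and other overhead.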

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 70B vs. Llama2 7B

While the Apple M2 Ultra is a powerhouse, it's crucial to understand how different LLMs perform on this chip. In the data below, Llama2 7B outperforms Llama3 70B in token generation speed at every listed precision, despite both models being optimized for efficient inference on this hardware.

| Model | Precision | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama2 7B | F16 | 39.86 |
| Llama2 7B | Q8_0 | 62.14 |
| Llama2 7B | Q4_0 | 88.64 |
| Llama3 70B | F16 | 4.71 |
| Llama3 70B | Q4_K_M | 12.13 |

Table 2: Performance of Llama2 and Llama3 Models on M2 Ultra

The performance gap between these models highlights a critical trade-off: Larger models often offer increased accuracy and capability, but at the cost of lower generation speed. Choosing the right model for your task depends on striking a balance between these two factors.

Think of it like this: A compact car (Llama2 7B) is faster and more maneuverable than a large truck (Llama3 70B), but the truck can haul a heavier load.

Practical Recommendations: Use Cases and Workarounds

Use Cases: Llama2 7B for Speed, Llama3 70B for Complexity

The performance difference between Llama2 7B and Llama3 70B on the M2 Ultra has implications for choosing the right model for your specific use case.

Here's a general guideline:

- Llama2 7B: Ideal for tasks that prioritize response speed and real-time interactivity, such as chatbots, Q&A systems, and text summarization.

- Llama3 70B: Well-suited for tasks requiring greater complexity and accuracy, like code generation, creative writing, and advanced language understanding.

Think of it this way: Use Llama2 7B for quick and efficient tasks, and Llama3 70B for projects that demand more depth and detail.
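The guideline above can be turned into a simple rule of thumb: decide the minimum speed your application can tolerate, then pick the largest model that still meets it. The sketch below is one hypothetical way to encode that rule using the speeds reported in this article; the `pick_model` function and its tie-breaking (largest model first, then fastest) are illustrative assumptions.

```python
from typing import Optional, Tuple

Candidate = Tuple[str, str, int, float]

# (model, precision, parameters in billions, tokens/second reported in this article)
CANDIDATES = [
    ("Llama2 7B", "Q4_0", 7, 88.64),
    ("Llama2 7B", "F16", 7, 39.86),
    ("Llama3 70B", "Q4_K_M", 70, 12.13),
    ("Llama3 70B", "F16", 70, 4.71),
]


def pick_model(min_tokens_per_second: float) -> Optional[Candidate]:
    """Return the largest, then fastest, configuration that meets the speed floor."""
    fast_enough = [c for c in CANDIDATES if c[3] >= min_tokens_per_second]
    if not fast_enough:
        return None
    return max(fast_enough, key=lambda c: (c[2], c[3]))


print(pick_model(10))   # a floor of 10 tokens/second
print(pick_model(30))   # a stricter floor for interactive use
print(pick_model(100))  # a floor none of the configurations reaches
```

Speed is only one input to the decision; output quality for your task still has to be judged separately.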

Optimizing Performance: Quantization and Model Size

Quantization: This technique reduces the size of the model by using fewer bits to represent each weight, which lowers memory consumption and speeds up inference. In the benchmark data, Q4_0 quantization raises Llama2 7B from 39.86 to 88.64 tokens/second compared to F16 precision, about 2.2x. For Llama3 70B, Q4_K_M quantization raises speed from 4.71 to 12.13 tokens/second, about 2.6x, but that is still well below the speed of Llama2 7B.
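To show what "fewer bits per number" means in practice, here is a minimal sketch of symmetric 4-bit block quantization: a group of weights shares one floating-point scale, and each weight is stored as a small integer. This is a simplified illustration of the idea only; it is not the actual storage layout of llama.cpp's Q4_0 or Q4_K_M formats, and the function names and sample weights are made up for the example.

```python
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to signed 4-bit integers in [-8, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7.0 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: List[int]) -> List[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * v for v in quantized]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.02, 0.28]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print(f"scale: {scale:.4f}, max round-trip error: {max_error:.4f}")
```

Each restored weight is off by at most half a quantization step, which is the detail you trade away for the smaller memory footprint.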

Model size: The size of the model directly impacts performance. Smaller models, like Llama2 7B, generally achieve faster inference speeds than larger models, like Llama3 70B. While larger models can be more powerful, they come with a speed penalty.

Think of it this way: A smaller, lighter suitcase (Llama2 7B) is easier to pack and carry than a larger, heavier suitcase (Llama3 70B) with more items.

Workarounds: Fine-tuning and Inference Optimization

Fine-tuning: Customizing an LLM for a specific task can enhance performance and reduce the need for a larger model. By fine-tuning a Llama2 7B model on your own dataset, you can often approach the accuracy of a larger model on that task while keeping the faster inference speed.

Inference optimization: Several techniques can improve the performance of LLM inference. Libraries like llama.cpp, which are specifically designed for efficient LLM inference, can significantly boost performance. Batching multiple requests together can raise overall throughput, and techniques like pipeline parallelism can further enhance inference speed.

Think of it this way: A well-tailored suit (fine-tuned model) fits better and looks sharper than a generic outfit (standard model).

FAQ

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by representing numbers with fewer bits. Think of it like turning a high-resolution image into a low-resolution one – you lose some detail, but the file size is much smaller.

Q: Why does Llama3 70B have slower token generation speed than Llama2 7B?

A: Llama3 70B is a significantly larger model than Llama2 7B, meaning it has more parameters and requires more computational resources to process. While larger models often have higher accuracy, they can be slower due to their increased complexity.

Q: How can I choose the right LLM for my specific use case?

A: Consider the trade-off between accuracy and speed. For tasks requiring fast responses and real-time interactivity, a smaller model like Llama2 7B might be more suitable. For tasks requiring more complex reasoning and accuracy, a larger model like Llama3 70B might be a better choice.

Q: Can I run Llama3 70B on devices other than the M2 Ultra?

A: Yes, but performance will vary with the hardware. Other Apple silicon chips such as the M2 Pro or the M1 Max can also run Llama3 70B, provided the machine has enough unified memory to hold the quantized model, and they will likely see slower inference times.
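Before trying a 70B model on another machine, it helps to check whether the weights would fit in memory at all. The sketch below is a rough estimate under assumptions: it uses a nominal 70 billion parameters, an approximate 4.85 bits per weight for Q4_K_M, and a hypothetical 8 GB of headroom for the operating system and context cache. The memory sizes in the loop are example values, not a statement about any specific device.

```python
def weights_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8.0


def fits_in_memory(
    params_billions: float,
    bits_per_weight: float,
    memory_gb: float,
    headroom_gb: float = 8.0,  # hypothetical allowance for OS and context cache
) -> bool:
    """True if the weights plus headroom fit in the given memory."""
    needed = weights_size_gb(params_billions, bits_per_weight) + headroom_gb
    return needed <= memory_gb


for memory_gb in (32, 64, 128, 192):  # example unified-memory sizes
    ok = fits_in_memory(70, 4.85, memory_gb)  # 4.85 bits/weight is approximate
    print(f"{memory_gb} GB: {'fits' if ok else 'does not fit'}")
```

Fitting in memory is a necessary condition, not a speed guarantee; a machine with less memory bandwidth will still generate tokens more slowly.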

Keywords

Apple M2 Ultra, Llama3 70B, Llama2 7B, local LLMs, token generation speed, quantization, performance optimization, inference speed, use cases, fine-tuning, model size, practical recommendations, developers, AI, machine learning, LLM framework, llama.cpp.