7 Tips to Maximize Llama2 7B Performance on Apple M3

Introduction

Large language models (LLMs) are trained on massive datasets and can generate text, translate languages, draft creative content, and answer questions. Running them well, however, usually demands substantial compute, typically a capable GPU and a fast CPU.

This article dives deep into optimizing the performance of the Llama2 7B model specifically on Apple's latest M3 chip. Whether you're a developer or a curious tech enthusiast, we'll explore practical tips and tricks for maximizing Llama2 7B's performance on this impressive silicon.

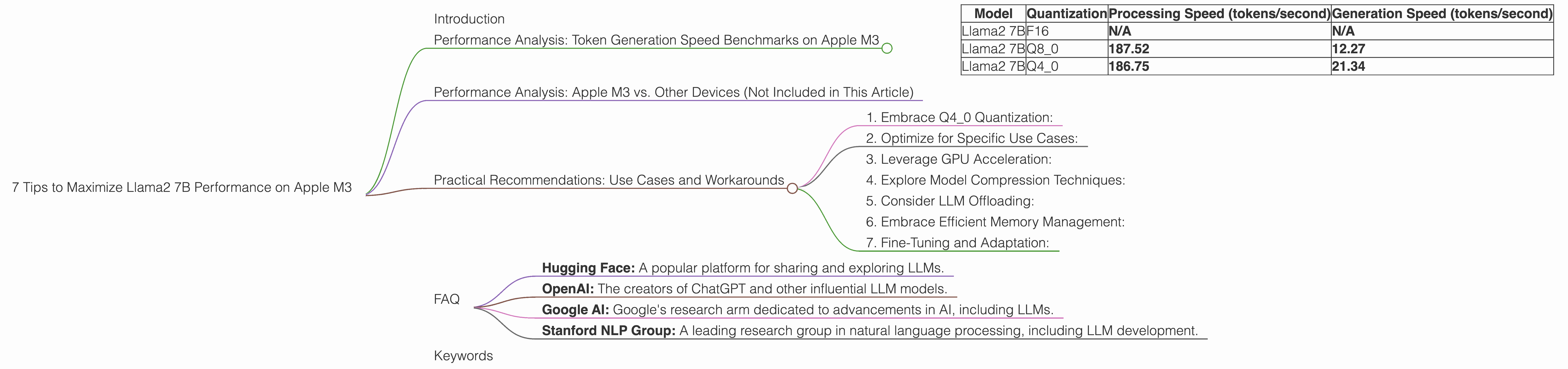

Performance Analysis: Token Generation Speed Benchmarks on Apple M3

Let's roll up our sleeves and dive into the heart of LLM performance: token generation speed. The faster your model generates tokens (the building blocks of text), the snappier and more responsive your LLM applications will be.

Here's a breakdown of the token generation speed benchmarks for Llama2 7B on the Apple M3 with different quantization levels, measured in tokens per second:

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama2 7B | F16 | N/A | N/A |
| Llama2 7B | Q8_0 | 187.52 | 12.27 |
| Llama2 7B | Q4_0 | 186.75 | 21.34 |

Note: No benchmark data is available for Llama2 7B in F16 on the M3. F16 is the unquantized half-precision floating-point format rather than a quantization level.

Let's unpack these numbers:

- Processing Speed: This metric represents how fast the model reads the input prompt before it starts answering. The higher the number, the sooner the first output token appears.

- Generation Speed: This metric measures how quickly the model generates new text based on the input. This is the speed we ultimately care about for LLM applications.
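To see how the two metrics combine, here is a minimal sketch that estimates total response time from the benchmark figures in the table. The 500-token prompt and 200-token answer are hypothetical values chosen for illustration, and the estimate ignores model load time and any other overhead.

```python
# Throughput figures (tokens/second) copied from the benchmark table above.
BENCHMARKS = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def estimate_latency_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: read the prompt, then generate the reply."""
    speeds = BENCHMARKS[quant]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


# Hypothetical workload: a 500-token prompt and a 200-token answer.
for quant in BENCHMARKS:
    seconds = estimate_latency_seconds(quant, prompt_tokens=500, output_tokens=200)
    print(f"{quant}: about {seconds:.1f} s")
```

For this workload the estimate comes out at roughly 19 seconds for Q8_0 and 12 seconds for Q4_0, and almost all of that time is spent generating rather than reading the prompt.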

Key Observations:

- Q8_0 vs. Q4_0: Prompt processing speed is nearly identical for the two (187.52 vs. 186.75 tokens/second), but Q4_0 generates tokens about 1.7x faster (21.34 vs. 12.27 tokens/second). No F16 result is available on the M3, so neither level can be compared against F16 here.

- Quantization Matters: Quantization plays a crucial role in LLM performance. It involves reducing the precision of the model's weights, helping to squeeze it into smaller memory footprints and achieve faster execution.
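The memory side of this can be sketched with some quick arithmetic. The bits-per-weight values below are assumptions based on the ggml block layouts (32 weights stored in 18 bytes for Q4_0 and in 34 bytes for Q8_0), and the parameter count of about 6.74 billion is an approximation; neither comes from the benchmark itself.

```python
# Assumed bits per weight, based on the ggml block layouts:
# Q4_0 packs 32 weights into 18 bytes, Q8_0 packs 32 weights into 34 bytes.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 34 * 8 / 32, "Q4_0": 18 * 8 / 32}

# Assumed parameter count for Llama2 7B (approximate).
N_PARAMS = 6.74e9

# Generation speeds (tokens/second) from the benchmark table.
GENERATION_SPEED = {"Q8_0": 12.27, "Q4_0": 21.34}


def weights_size_gb(quant: str, n_params: float = N_PARAMS) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


for quant in BITS_PER_WEIGHT:
    print(f"{quant}: ~{weights_size_gb(quant):.1f} GB")

speedup = GENERATION_SPEED["Q4_0"] / GENERATION_SPEED["Q8_0"]
size_ratio = weights_size_gb("Q8_0") / weights_size_gb("Q4_0")
print(f"Q4_0 generates {speedup:.2f}x faster and is {size_ratio:.2f}x smaller")
```

Under these assumptions the generation speedup (about 1.74x) is close to the size reduction (about 1.89x). That is what you would expect if generation is limited mainly by how quickly the weights can be read from memory, though the benchmark alone does not prove it.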

Performance Analysis: Apple M3 vs. Other Devices (Not Included in This Article)

Our focus is the Apple M3, so benchmarks for other devices are out of scope for this article. The figures above describe the M3 only and should not be read as a comparison against other hardware.

Practical Recommendations: Use Cases and Workarounds

Now that we have a grasp of Llama2 7B's performance on the Apple M3, let's discuss how to maximize its capabilities and address any potential bottlenecks:

1. Embrace Q4_0 Quantization:

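The benchmark shows Q4_0 generating tokens about 1.7x faster than Q8_0 on the M3 (21.34 vs. 12.27 tokens/second). If the M3 is your target platform and generation speed is the priority, make Q4_0 your go-to choice. Keep in mind that 4-bit weights are less precise than 8-bit weights, so check output quality on your own prompts before committing.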

2. Optimize for Specific Use Cases:

The ideal LLM model and its specific use cases often go hand-in-hand. For example, imagine you're developing a chatbot for a customer support website. In this case, you might not require super-fast token generation since users are willing to wait a few seconds for a response. Therefore, you might find it perfectly acceptable to use a smaller model like Llama2 7B to save on computational resources.

3. Leverage GPU Acceleration:

The M3's integrated GPU can provide a substantial performance boost for LLM inference. If you're running the llama.cpp library, for example, it supports hardware acceleration on Apple silicon through its Metal backend, and you can choose how many model layers are offloaded to the GPU. Offloading the model to the GPU speeds up token processing and makes your LLM experience more fluid.
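As a rough sketch of what this looks like in practice, the snippet below assembles a llama.cpp command line that requests GPU offloading through the `-ngl` (number of GPU layers) option. The binary name and the model path are assumptions: recent llama.cpp builds ship a `llama-cli` executable while older ones call it `main`, and the `.gguf` file name is hypothetical.

```python
import shutil
import subprocess

# Hypothetical locations: adjust both to match your own build and model file.
LLAMA_BINARY = "llama-cli"  # named "main" in older llama.cpp builds
MODEL_PATH = "models/llama-2-7b.Q4_0.gguf"


def build_command(prompt: str, n_predict: int = 128, gpu_layers: int = 99) -> list[str]:
    """Assemble a llama.cpp invocation that offloads layers to the GPU."""
    return [
        LLAMA_BINARY,
        "-m", MODEL_PATH,         # model file
        "-p", prompt,             # prompt text
        "-n", str(n_predict),     # number of tokens to generate
        "-ngl", str(gpu_layers),  # layers to offload; a large value offloads all of them
    ]


command = build_command("Explain quantization in one sentence.")
print(" ".join(command))

# Only run the model if the binary is actually on PATH.
if shutil.which(LLAMA_BINARY):
    subprocess.run(command, check=False)
```

Setting `gpu_layers` to 0 keeps everything on the CPU, which makes it easy to compare the two configurations on your own machine.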

4. Explore Model Compression Techniques:

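Model compression techniques can be a game-changer when it comes to squeezing more performance out of limited hardware. Quantization, covered above, is the most common one; pruning (removing weights that contribute little) and distillation (training a smaller model to imitate a larger one) are others. All of them shrink the model so it fits into a smaller memory footprint and has less data to move for each token.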

5. Consider LLM Offloading:

If you're working on applications that demand lightning-fast token generation, you might need to look at offloading certain tasks to more powerful hardware. Imagine you're developing a real-time chat application where every millisecond counts. In this case, you could leverage cloud-based LLM services like Google's Vertex AI or Amazon's SageMaker, which can handle the heavy lifting of token generation while your Apple M3 device handles the user interface and other interactions.
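One simple way to frame the decision is to compare the speed a request needs against what the M3 delivered in the benchmark. The sketch below does only that; the function name and the example requirements are hypothetical, and it ignores prompt processing time and network latency, both of which matter in a real deployment.

```python
# Local generation speeds (tokens/second) from the benchmark table.
LOCAL_GENERATION_SPEED = {"Q8_0": 12.27, "Q4_0": 21.34}


def choose_backend(output_tokens: int, max_seconds: float, quant: str = "Q4_0") -> str:
    """Return "local" if the M3 can plausibly meet the deadline, else "cloud"."""
    required_speed = output_tokens / max_seconds
    return "local" if required_speed <= LOCAL_GENERATION_SPEED[quant] else "cloud"


# Hypothetical requirements for two kinds of request.
print(choose_backend(output_tokens=100, max_seconds=10))  # needs 10 tokens/s
print(choose_backend(output_tokens=300, max_seconds=5))   # needs 60 tokens/s
```

The first request stays local and the second is routed to the cloud, since 60 tokens/second is well beyond either benchmarked configuration.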

6. Embrace Efficient Memory Management:

LLMs are memory-hungry beasts! Efficiently managing memory within your application becomes crucial. It's good practice to optimize your code to release memory resources when they are no longer needed to prevent bottlenecks for the LLM.
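Besides the weights, the largest memory consumer during inference is usually the key/value cache, which grows with context length. The sketch below estimates it under stated assumptions: the Llama2 7B architecture (32 layers, hidden size 4096, no grouped-query attention) and a cache stored as 16-bit floats. The weight sizes and the 6 GiB budget in the example are hypothetical round numbers.

```python
# Assumed Llama2 7B architecture: 32 layers, hidden size 4096,
# no grouped-query attention.
N_LAYERS = 32
HIDDEN_SIZE = 4096
BYTES_PER_VALUE = 2  # assumes the cache is stored as 16-bit floats


def kv_cache_bytes(context_tokens: int) -> int:
    """Keys and values for every layer and every token in the context."""
    return 2 * N_LAYERS * HIDDEN_SIZE * BYTES_PER_VALUE * context_tokens


def fits_in_memory(weights_gib: float, context_tokens: int, budget_gib: float) -> bool:
    """Check weights plus KV cache against a memory budget, all in GiB."""
    cache_gib = kv_cache_bytes(context_tokens) / 2**30
    return weights_gib + cache_gib <= budget_gib


print(f"KV cache at 4096 tokens: {kv_cache_bytes(4096) / 2**30:.1f} GiB")

# Hypothetical budget of 6 GiB left over for the model.
print(fits_in_memory(weights_gib=3.6, context_tokens=4096, budget_gib=6.0))  # ~Q4_0
print(fits_in_memory(weights_gib=6.7, context_tokens=4096, budget_gib=6.0))  # ~Q8_0
```

With these numbers a full 4096-token context costs about 2 GiB on top of the weights, so shortening the context is one of the cheapest ways to free memory.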

7. Fine-Tuning and Adaptation:

Consider fine-tuning the Llama2 7B model on your own domain-specific dataset. Fine-tuning does not make token generation faster, but it can improve accuracy in that domain, which may let a 7B model handle a job that would otherwise call for a larger, slower one.

FAQ

Q: What is quantization, and how does it relate to LLM performance?

A: Quantization is like a diet for LLMs! It reduces the precision of the model's weights, which are the numbers that store the model's knowledge. Think of it as using lower-resolution images—they take up less space but don't lose all the detail. Quantization helps make LLMs smaller and faster, especially on devices with limited resources like the Apple M3.
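To make the idea concrete, here is a toy sketch of 4-bit quantization: a block of weights shares one floating-point scale, and each weight is stored as a small integer. This is a simplified illustration, not the exact Q4_0 format used by llama.cpp, and the sample weights are made up.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit integers in [-8, 7] that share one scale."""
    largest = max(abs(w) for w in weights)
    scale = largest / 7 if largest > 0 else 1.0
    quants = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the scale and the small integers."""
    return [q * scale for q in quants]


# Hypothetical weights for illustration only.
block = [0.12, -0.53, 0.98, -1.40, 0.07, 0.66, -0.21, 1.05]
scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
worst_error = max(abs(a - b) for a, b in zip(block, restored))

print(quants)
print(f"worst rounding error: {worst_error:.3f}")
```

Each weight now needs 4 bits plus a share of the block's scale instead of 16 bits, at the cost of a rounding error of at most half the scale.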

Q: What is token generation speed, and why is it important?

A: Imagine LLMs as fluent but chatty conversational partners. Each word they speak is represented by a "token," and token generation speed measures how fast they can "speak." The quicker they generate tokens, the faster they can respond to your prompts and deliver their insights.
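If you want to measure this yourself, a minimal timing helper looks like the sketch below. The `fake_generator` function is a hypothetical stand-in for a real model that streams tokens; swap in your own generator to get a real number.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> tuple[int, float]:
    """Count the tokens produced by generate() and divide by elapsed time."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    speed = count / elapsed if elapsed > 0 else float("inf")
    return count, speed


def fake_generator() -> Iterable[str]:
    """Hypothetical stand-in for a model that streams tokens."""
    for token in "Quantization trades a little precision for speed".split():
        time.sleep(0.01)  # pretend each token takes 10 ms
        yield token


count, speed = measure_tokens_per_second(fake_generator)
print(f"{count} tokens at {speed:.1f} tokens/second")
```

Real tokens are word pieces rather than whole words, so a real model will report more tokens than the word count of its answer.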

Q: Are there any other factors that can affect LLM performance besides the chosen device and quantization?

A: Absolutely! Other factors like the size of the LLM (smaller models generally run faster), the quality of the input data (well-structured data can improve the model's efficiency), and the complexity of the task (more intricate prompts might lead to longer processing times) can all influence performance.

Q: How do I choose the right LLM model for my application?

A: Choosing the right LLM model depends on your specific needs. Consider factors like the size of the model (smaller models are faster but might be less powerful), the task it will perform (translation, code generation, etc.), the availability of resources (CPU, GPU), and the speed requirements of your application.

Q: Where can I learn more about LLMs and their applications?

A: There are many resources available online for learning more about LLMs, including:

- Hugging Face: A popular platform for sharing and exploring LLMs.

- OpenAI: The creators of ChatGPT and other influential LLM models.

- Google AI: Google's research arm dedicated to advancements in AI, including LLMs.

- Stanford NLP Group: A leading research group in natural language processing, including LLM development.

Keywords

Apple M3, Llama2 7B, LLM, token generation speed, quantization, Q8_0, Q4_0, F16, performance, optimization, GPU acceleration, model compression, offloading, memory management, fine-tuning, use cases, applications, developer, geek, AI