7 Tips to Maximize Llama2 7B Performance on Apple M3 Max

Introduction

The world of large language models (LLMs) is rapidly evolving, offering exciting possibilities for developers and researchers alike. Running LLMs locally on powerful devices like the Apple M3 Max enables real-time applications, enhanced privacy, and reduced latency. But getting the most out of these models requires understanding both how they work and the hardware they run on.

This article dives into the specifics of optimizing Llama2 7B performance on the Apple M3 Max, exploring different quantization techniques, comparing generation speeds, and providing practical tips for leveraging its full potential. It's time to get your hands dirty and unlock the power of on-device AI!

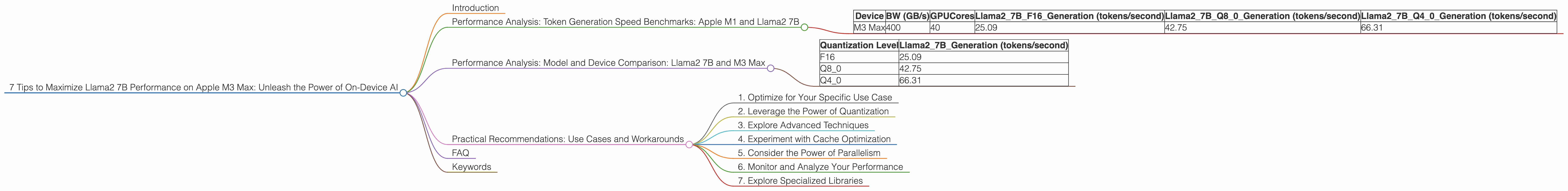

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

One of the key metrics for evaluating an LLM's performance is its token generation speed. This measures how quickly the model can generate new text, which directly impacts the responsiveness and efficiency of your applications. We'll be focusing on the Apple M3 Max, a powerhouse of a chip. The table below lists its memory bandwidth, GPU core count, and Llama2 7B generation speed at three precision levels.

| Device | Bandwidth (GB/s) | GPU Cores | Llama2 7B F16 Generation (tokens/second) | Llama2 7B Q8_0 Generation (tokens/second) | Llama2 7B Q4_0 Generation (tokens/second) |
|---|---|---|---|---|---|
| M3 Max | 400 | 40 | 25.09 | 42.75 | 66.31 |

From the table, we can see that generation speed on the M3 Max rises from 25.09 tokens/second at F16 to 42.75 at Q8_0 and 66.31 at Q4_0.
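To put the bandwidth column in context, here is a rough back-of-the-envelope sketch. It relies on a common rule of thumb (an assumption here, not something the benchmark itself establishes): generating one token requires reading all model weights from memory once, so bandwidth divided by model size gives an approximate ceiling on tokens/second. The parameter count of 7e9 and the bits-per-weight figures are also assumptions for illustration.

```python
BANDWIDTH_GB_S = 400  # M3 Max memory bandwidth, from the table

# Assumed values, not from the table: about 7e9 parameters, and approximate
# storage cost per weight (the quantized formats are assumed to add a
# 16-bit scale for every block of 32 weights).
PARAMS = 7e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
MEASURED = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}  # tokens/second


def weights_gb(bits_per_weight: float, params: float = PARAMS) -> float:
    """Approximate size of the weights in gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def bandwidth_bound(bits_per_weight: float) -> float:
    """Rough ceiling on tokens/second if every token reads all weights once."""
    return BANDWIDTH_GB_S / weights_gb(bits_per_weight)


for name, bits in BITS_PER_WEIGHT.items():
    bound = bandwidth_bound(bits)
    share = MEASURED[name] / bound
    print(f"{name}: ~{weights_gb(bits):.1f} GB, "
          f"ceiling ~{bound:.0f} tok/s, measured {MEASURED[name]} "
          f"({share:.0%} of ceiling)")
```

Under these assumptions the measured speeds all sit below the estimated ceiling, which is consistent with generation being largely limited by how fast weights can be read from memory.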

Performance Analysis: Model and Device Comparison: Llama2 7B and M3 Max

The M3 Max boasts impressive capabilities for running LLMs locally. However, it's essential to understand the trade-offs between different quantization levels and the potential bottlenecks involved.

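Quantization: A crucial technique for optimizing LLM performance is quantization, which stores each of the model's weights in fewer bits (for example, 8 or 4 instead of 16). The smaller model needs less memory and, because less data has to be read for every generated token, usually generates text faster.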
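The idea can be sketched in a few lines. The example below is a simplified illustration of block-wise 8-bit quantization (one shared scale per block of weights), not the exact storage format of any particular library. The block size of 32 and the sample weights are assumptions.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # assumed block size for this illustration


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale: float, quantized: List[int]) -> List[float]:
    """Recover approximate floats from the integers and the scale."""
    return [scale * v for v in quantized]


# Made-up weights in the range [-0.32, 0.31], one block's worth.
weights = [((i * 37) % 64 - 32) / 100 for i in range(BLOCK_SIZE)]

scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"scale={scale:.6f}, max round-trip error={max_error:.6f}")
```

Each weight now takes 8 bits instead of 16, at the cost of a rounding error of at most half the scale per weight.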

Let's break down the performance of Llama2 7B on the M3 Max with various quantization levels:

| Quantization Level | Llama2 7B Generation (tokens/second) |
|---|---|
| F16 | 25.09 |
| Q8_0 | 42.75 |
| Q4_0 | 66.31 |

Observations:

- F16 (half-precision floating-point): The highest-precision option in this comparison and the baseline for the others. It is also the slowest of the three at 25.09 tokens/second.

- Q8_0 (8-bit quantization): About 1.7x faster than F16 (42.75 vs. 25.09 tokens/second), demonstrating the effectiveness of quantization in improving speed.

- Q4_0 (4-bit quantization): The fastest configuration at 66.31 tokens/second, about 2.6x faster than F16 and about 1.55x faster than Q8_0.

Key Takeaway: Quantization plays a vital role in optimizing Llama2 7B performance on the M3 Max. While Q4_0 offers the fastest generation speeds, it's essential to consider the trade-off with potential accuracy loss.
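The relative gains can be worked out directly from the benchmark figures. This short sketch computes the speedup over F16 and the average time per token for each level. It uses only the numbers from the table.

```python
TOKENS_PER_SECOND = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}

baseline = TOKENS_PER_SECOND["F16"]
speedups = {}
for level, rate in TOKENS_PER_SECOND.items():
    speedups[level] = rate / baseline   # relative to F16
    ms_per_token = 1000 / rate          # average latency per token
    print(f"{level}: {speedups[level]:.2f}x vs F16, {ms_per_token:.1f} ms/token")
```

For an interactive application, the per-token latency is often the more intuitive number: it tells you how long a 200-token reply takes to finish at each level.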

Practical Recommendations: Use Cases and Workarounds

Now that we have a better understanding of the performance characteristics of Llama2 7B on the M3 Max, let's move on to practical recommendations for leveraging its capabilities effectively.

1. Optimize for Your Specific Use Case

Different applications have different requirements. For real-time chatbots or interactive applications, focusing on high token generation speed is crucial. For tasks like text summarization or creative writing, you might prioritize accuracy over speed. Understanding your application's needs helps you choose the appropriate quantization level and optimize your model's configuration.
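One way to turn this into a decision rule, sketched under the assumption that you prefer the highest precision that still meets a speed target: walk the levels from most to least precise and pick the first one that is fast enough. The helper name `pick_level` is hypothetical, and the speeds are the M3 Max figures from the benchmark table.

```python
from typing import Optional

# Ordered from highest to lowest precision, with measured tokens/second.
LEVELS = [("F16", 25.09), ("Q8_0", 42.75), ("Q4_0", 66.31)]


def pick_level(min_tokens_per_second: float) -> Optional[str]:
    """Return the most precise level that meets the speed target, if any."""
    for name, rate in LEVELS:
        if rate >= min_tokens_per_second:
            return name
    return None  # no level is fast enough on this hardware


print(pick_level(20))   # batch summarization with a loose speed target
print(pick_level(50))   # interactive chatbot with a tight speed target
```

If the function returns `None`, the target cannot be met with this model on this device, and a smaller model or different hardware would be the next thing to consider.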

2. Leverage the Power of Quantization

We've already seen how effective quantization can be for optimizing LLM performance. Experiment with different quantization levels and explore the trade-offs between accuracy and speed. Be cautious about extreme levels of quantization, which might lead to noticeable accuracy loss.
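To get a feel for why fewer bits cost accuracy, the sketch below rounds the same set of weights to 8, 6, and 4 bits and compares the round-trip error. It is a simplified illustration with a single scale for all weights and randomly generated values, not a measurement of real model quality. In practice you would compare model outputs or an evaluation metric across quantization levels.

```python
import math
import random
from typing import List


def roundtrip_rms_error(weights: List[float], bits: int) -> float:
    """Quantize to signed integers of the given width and measure RMS error."""
    levels = 2 ** (bits - 1) - 1          # 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / levels if max_abs > 0 else 1.0
    restored = [round(w / scale) * scale for w in weights]
    squared = sum((a - b) ** 2 for a, b in zip(weights, restored))
    return math.sqrt(squared / len(weights))


random.seed(0)  # fixed seed so the run is repeatable
weights = [random.gauss(0, 0.02) for _ in range(4096)]  # made-up weights

errors = {bits: roundtrip_rms_error(weights, bits) for bits in (8, 6, 4)}
for bits, err in errors.items():
    print(f"{bits}-bit: RMS round-trip error {err:.6f}")
```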

3. Explore Advanced Techniques

For even higher performance, consider advanced techniques like model pruning and knowledge distillation. Model pruning removes unnecessary connections in the model, while knowledge distillation transfers the knowledge from a larger model to a smaller, more efficient one.
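As a minimal sketch of the pruning idea, the example below applies magnitude pruning, one common variant, to a small list of made-up weights: the fraction of weights with the smallest absolute value is set to zero. The function name and sample values are assumptions for illustration.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]
```

Note that zeroed weights only translate into faster inference when the runtime or storage format actually takes advantage of the sparsity.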

4. Experiment with Cache Optimization

Caching can significantly impact performance. At the model level, reusing the key-value (KV) cache for a prompt prefix that repeats across requests, such as a fixed system prompt, avoids reprocessing those tokens. At the application level, caching the responses to identical inputs avoids running the model at all.
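Application-level caching can be as simple as memoizing the inference call. The sketch below uses Python's `functools.lru_cache`. `run_model` is a hypothetical stand-in for a real inference call, and the approach assumes deterministic decoding, since cached answers are replayed verbatim.

```python
from functools import lru_cache

calls = 0  # counts how often the (pretend) model actually runs


def run_model(prompt: str) -> str:
    """Hypothetical stand-in for an expensive inference call."""
    global calls
    calls += 1
    return prompt.upper()


@lru_cache(maxsize=256)
def cached_generate(prompt: str) -> str:
    """Return a cached response for prompts that were seen before."""
    return run_model(prompt)


cached_generate("hello")
cached_generate("hello")   # served from the cache, model not called
cached_generate("world")
print(calls)  # 2
```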

5. Consider the Power of Parallelism

Leverage the M3 Max's multi-core architecture to optimize the parallelization of tasks. This can involve splitting the model's computation across multiple cores or running multiple inference requests concurrently.
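Running several requests concurrently can be sketched with the standard library's thread pool. `handle_request` below is a hypothetical stand-in that just sleeps. Whether real inference calls benefit from threads depends on the backend (for example, whether it releases Python's global interpreter lock), so treat this as a pattern to experiment with rather than a guaranteed speedup.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(prompt: str) -> str:
    """Hypothetical stand-in for one inference request."""
    time.sleep(0.05)  # pretend the model is working
    return f"response to: {prompt}"


prompts = ["summarize A", "summarize B", "translate C", "classify D"]

start = time.perf_counter()
with ThreadPoolExecutor(max_workers=4) as pool:
    # map() returns results in the same order as the input prompts.
    responses = list(pool.map(handle_request, prompts))
elapsed = time.perf_counter() - start

print(f"{len(responses)} responses in {elapsed:.2f} s")
```

Keep in mind that concurrent requests share the same memory bandwidth, so total throughput may rise while each individual request gets slower.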

6. Monitor and Analyze Your Performance

Continuously monitor your model's performance and use profiling tools to identify bottlenecks. This data-driven approach helps you fine-tune your model's configuration and optimize its efficiency over time.
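A lightweight way to start is to time each stage of a request and compute tokens/second yourself. The sketch below uses a small context manager for this. The stage names and the `time.sleep` calls are stand-ins for real prompt processing and token generation.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

timings = defaultdict(float)  # stage name -> accumulated seconds


@contextmanager
def timed(stage: str):
    """Add the wall-clock time of the enclosed block to timings[stage]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] += time.perf_counter() - start


# Hypothetical stand-ins for the two phases of a request.
with timed("prompt_processing"):
    time.sleep(0.02)

generated_tokens = 0
with timed("token_generation"):
    for _ in range(20):
        time.sleep(0.005)  # pretend each token takes about 5 ms
        generated_tokens += 1

slowest = max(timings, key=timings.get)
rate = generated_tokens / timings["token_generation"]
print(f"slowest stage: {slowest}, generation rate: {rate:.1f} tokens/second")
```

Logging these numbers over time makes it easier to see whether a configuration change actually helped.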

7. Explore Specialized Libraries

Leverage specialized libraries designed for local LLM inference, such as llama.cpp, which provides efficient implementations for running LLMs on various hardware platforms.
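As one possible starting point, llama.cpp can be driven from Python through the third-party `llama-cpp-python` bindings. The sketch below assumes that package is installed and that a quantized model file exists locally. The model path is hypothetical, and the context size is an arbitrary choice. Setting `n_gpu_layers=-1` asks the library to offload all layers to the GPU.

```python
from typing import Any, Dict


def llama_kwargs(model_path: str, n_ctx: int = 2048) -> Dict[str, Any]:
    """Build constructor arguments for llama_cpp.Llama."""
    return {
        "model_path": model_path,  # path to a local model file
        "n_ctx": n_ctx,            # context window size (assumed value)
        "n_gpu_layers": -1,        # offload all layers to the GPU
    }


def generate(model_path: str, prompt: str, max_tokens: int = 64) -> str:
    """Load the model and return the generated text for one prompt."""
    # Imported lazily so this file loads even without the package installed.
    from llama_cpp import Llama  # pip install llama-cpp-python

    llm = Llama(**llama_kwargs(model_path))
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]


# Example call (hypothetical path, requires the model file to exist):
# print(generate("models/llama-2-7b.Q4_0.gguf", "Q: What is an LLM? A:"))
```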

FAQ

Q: What are the key considerations when running LLMs locally?

A: Key considerations include model size, memory usage, processing power, and the specific hardware platform you are using.

Q: What quantizations do you generally get between F16 and Q4_0?

A: Between the two extremes of F16 and Q4_0, you typically find Q8_0, Q6_K, Q5_K, Q5_0, and Q5_1 (as named in llama.cpp), and even custom quantization schemes designed for specific models.

Q: What are the potential risks of using quantization?

A: The primary risk of quantization is potential accuracy loss. The more you quantize a model, the more likely it is to lose some of its original accuracy.

Q: Why does a larger model like Llama3 70B see huge performance drops?

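A: Larger models like Llama3 70B are computationally more demanding. A 70B model has roughly ten times as many weights as a 7B model, so every generated token requires reading roughly ten times as much data from memory. At F16 the weights alone occupy about 140 GB (70 billion weights at 2 bytes each). While the M3 Max is powerful, it might not have enough memory to hold the model at higher precision, and even a quantized version generates tokens far more slowly than a 7B model.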

Q: What are some alternatives to the M3 Max for running LLMs locally?

A: Other powerful devices for local LLM execution include high-end GPUs like Nvidia RTX 40 series cards, AMD GPUs, and even specialized AI accelerators like Google's TPUs.

Keywords

Apple M3 Max, Llama2 7B, performance optimization, quantization, token generation speed, local LLM inference, AI development, model pruning, knowledge distillation, cache optimization, parallelism, llama.cpp, GPU benchmarks, on-device AI, performance analysis, practical recommendations, use cases, workarounds.