7 Tips to Maximize Llama2 7B Performance on Apple M2

Introduction

The world of large language models (LLMs) is exploding, and for good reason! These incredible AI systems are capable of generating human-like text, translating languages, writing different kinds of creative content, and even answering your questions in an informative way. But harnessing the power of LLMs can be a bit tricky, especially when it comes to local performance.

This article will delve deep into optimizing the performance of the Llama2 7B LLM specifically on Apple's M2 chip. We'll cover everything from understanding key performance metrics to practical recommendations that can boost your LLM toolkit. Buckle up, it's going to be a wild ride!

Performance Analysis: Token Generation Speed Benchmarks

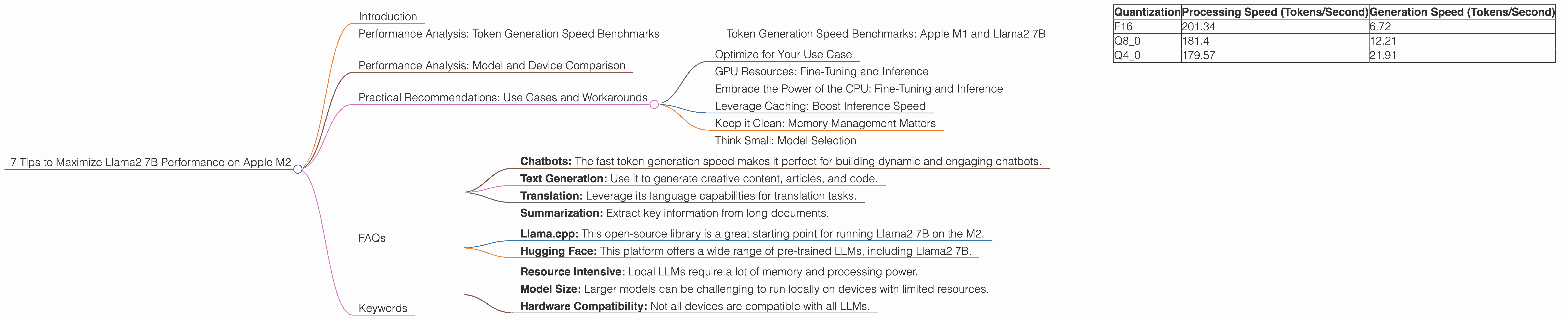

Token Generation Speed Benchmarks: Apple M2 and Llama2 7B

Two speeds matter when you run an LLM locally: processing speed, the rate at which the model reads the tokens of your prompt, and generation speed, the rate at which it produces new tokens. Generation speed is the crucial metric for real-time applications like chatbots or text generation tools, because it determines how quickly a response appears. Let's break down the performance of Llama2 7B on the Apple M2:

| Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| F16 | 201.34 | 6.72 |
| Q8_0 | 181.4 | 12.21 |
| Q4_0 | 179.57 | 21.91 |

What is Quantization?

Think of quantization as a way to squeeze a large model into a smaller space. It reduces the memory footprint of the model by using fewer bits to represent the model's weights. This allows you to run models on devices with less memory, like phones or tablets.
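To make the memory savings concrete, here is a minimal sketch that estimates how much space the weights take at each quantization level. The parameter count (about 6.74 billion) and the bits-per-weight figures are assumptions that do not come from the benchmark data: they presume llama.cpp-style block formats in which Q8_0 and Q4_0 store one 16-bit scale per block of 32 weights. The estimate ignores the context cache and runtime overhead, so treat the result as a lower bound.

```python
# Rough weight-memory estimate for a model at different quantization levels.
# Assumptions (not from the benchmark table): ~6.74e9 parameters for Llama2 7B,
# and block formats where Q8_0 and Q4_0 keep one 16-bit scale per 32 weights,
# giving 8.5 and 4.5 bits per weight respectively.

BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
ASSUMED_PARAMS = 6.74e9


def weight_gib(quant: str, params: float = ASSUMED_PARAMS) -> float:
    """Return the approximate size of the model weights in GiB."""
    total_bytes = params * BITS_PER_WEIGHT[quant] / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        print(f"{quant:>5}: ~{weight_gib(quant):.1f} GiB")
```

Under these assumptions, Q4_0 needs a little over a quarter of the F16 footprint, which is often the difference between fitting comfortably in memory and not fitting at all.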

Key Takeaways:

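- F16 Leads on Prompt Processing: F16 offers the fastest processing speed (201.34 tokens/second), but only by about 10-12% over Q8_0 (181.4) and Q4_0 (179.57). F16 uses 16 bits to represent each weight, which makes it more precise than Q8_0 or Q4_0 but also the largest in memory.

- Q4_0 is King of Generation Speed: Generation gets faster as the weights get smaller, from 6.72 tokens/second for F16 to 12.21 for Q8_0 and 21.91 for Q4_0, roughly 3.3 times the F16 rate. The trade-off for Q8_0 and Q4_0 is reduced numerical precision, in exchange for both less memory and faster generation.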
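The figures in the benchmark table can be compared directly. The short sketch below normalizes each quantization level against F16, using only the numbers from the table; the function and variable names are illustrative.

```python
# Benchmark figures copied from the table above (tokens/second).
BENCHMARKS = {
    "F16": {"processing": 201.34, "generation": 6.72},
    "Q8_0": {"processing": 181.4, "generation": 12.21},
    "Q4_0": {"processing": 179.57, "generation": 21.91},
}


def relative_speed(metric: str, baseline: str = "F16") -> dict:
    """Return each level's speed for `metric` as a multiple of the baseline."""
    base = BENCHMARKS[baseline][metric]
    return {quant: speeds[metric] / base for quant, speeds in BENCHMARKS.items()}


if __name__ == "__main__":
    for metric in ("processing", "generation"):
        for quant, ratio in relative_speed(metric).items():
            print(f"{metric:>10} {quant:>5}: {ratio:.2f}x F16")
```

Processing speed varies by only about a tenth across the three levels, while generation speed more than triples from F16 to Q4_0.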

Performance Analysis: Model and Device Comparison

Ideally, we would compare Llama2 7B's performance on the M2 with that of other LLMs on different devices.

Note: The benchmark data behind this article covers only the M2. If you're considering other LLMs or devices, you'll need to consult additional resources for benchmarks.

Practical Recommendations: Use Cases and Workarounds

Now that we've covered the basics of performance, let's dive into some practical recommendations that can help you get the most out of your Llama2 7B on the Apple M2.

Optimize for Your Use Case

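Choosing the right quantization level is crucial! If you're building a real-time chatbot, generation speed matters most, so Q4_0 (21.91 tokens/second) is the natural pick. It also has the smallest memory footprint, which helps on memory-constrained devices. Q8_0 is a middle ground between speed and precision. Reserve F16 for cases where you need full 16-bit precision, or where prompt processing dominates the workload, and memory is plentiful.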
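One way to match a quantization level to a use case is to estimate end-to-end response time from the benchmark table. The sketch below assumes that total time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed. That is a simplification which ignores model load time and other overheads, and the 200-token prompt and 100-token reply are hypothetical workload numbers.

```python
# Benchmark figures from the table earlier in the article (tokens/second).
BENCHMARKS = {
    "F16": {"processing": 201.34, "generation": 6.72},
    "Q8_0": {"processing": 181.4, "generation": 12.21},
    "Q4_0": {"processing": 179.57, "generation": 21.91},
}


def estimated_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate response time as prompt-processing time plus generation time."""
    speeds = BENCHMARKS[quant]
    return (prompt_tokens / speeds["processing"]
            + output_tokens / speeds["generation"])


def fastest_quant(prompt_tokens: int, output_tokens: int) -> str:
    """Return the quantization level with the lowest estimated response time."""
    return min(
        BENCHMARKS,
        key=lambda quant: estimated_seconds(quant, prompt_tokens, output_tokens),
    )


if __name__ == "__main__":
    # Hypothetical chat turn: 200-token prompt, 100-token reply.
    for quant in BENCHMARKS:
        print(f"{quant:>5}: ~{estimated_seconds(quant, 200, 100):.1f} s")
    print("fastest:", fastest_quant(200, 100))
```

For workloads with very long prompts and very short outputs the ranking can flip toward F16, which is why it is worth plugging in your own token counts.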

GPU Resources: Fine-Tuning and Inference

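The M2's GPU shares unified memory with the CPU, so model weights do not have to be copied into separate video memory. Offload inference to the GPU where your runtime supports it (llama.cpp, for example, has a Metal backend), and for fine-tuning the model on specific datasets, leverage the GPU's computational power for faster training.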

Embrace the Power of the CPU: Fine-Tuning and Inference

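Don't underestimate the CPU! While the GPU shines for heavy parallel work, the CPU can still run inference on quantized models and handle light fine-tuning tasks, which makes it a useful fallback when the GPU is busy or unsupported by your tooling.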

Leverage Caching: Boost Inference Speed

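For faster inference, reuse work that has already been done. Keep the processed state of a prompt prefix that repeats across requests, such as a system prompt, and store complete responses for prompts that recur verbatim, so that neither has to be recomputed.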
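The simplest form of caching is to remember complete responses for prompts that repeat verbatim. The sketch below uses Python's standard `functools.lru_cache`; `slow_generate` is a hypothetical stand-in for a real model call, not part of any library. This approach only makes sense when generation is deterministic (for example, with sampling disabled), since a cached answer is returned unchanged every time.

```python
from functools import lru_cache


def slow_generate(prompt: str) -> str:
    """Hypothetical stand-in for a real (slow) model call."""
    slow_generate.calls += 1  # count how often the "model" actually runs
    return f"response to: {prompt}"


slow_generate.calls = 0


@lru_cache(maxsize=128)
def cached_generate(prompt: str) -> str:
    """Return a stored response for a repeated prompt, else call the model."""
    return slow_generate(prompt)
```

Calling `cached_generate` twice with the same prompt runs the underlying model call only once; `cached_generate.cache_info()` reports hits and misses, which helps you judge whether the cache is paying off for your traffic.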

Keep it Clean: Memory Management Matters

Make sure your device's memory is well-managed. Regularly clear out unused data, close unnecessary apps, and give your LLM the space it needs to perform at its best.

Think Small: Model Selection

If memory is a concern, consider using a smaller LLM. Llama2 7B is already the smallest model in the Llama 2 family, which also includes 13B and 70B variants, so going smaller means choosing a compact model from another family. Such models are more memory-efficient, at some cost in capability.

FAQs

Q: What are some of the best use cases for Llama2 7B on the M2?

- Chatbots: The fast token generation speed makes it perfect for building dynamic and engaging chatbots.

- Text Generation: Use it to generate creative content, articles, and code.

- Translation: Leverage its language capabilities for translation tasks.

- Summarization: Extract key information from long documents.

Q: How can I get started with Llama2 7B on the M2?

- llama.cpp: This open-source library is a great starting point for running Llama2 7B on the M2.

- Hugging Face: This platform offers a wide range of pre-trained LLMs, including Llama2 7B.

Q: What are some limitations of using LLMs locally?

- Resource Intensive: Local LLMs require a lot of memory and processing power.

- Model Size: Larger models can be challenging to run locally on devices with limited resources.

- Hardware Compatibility: Not all devices are compatible with all LLMs.

Q: What is the future of local LLM models?

The future of local LLMs looks bright. With advancements in hardware and optimization techniques, running powerful LLMs locally will become easier and more accessible.

Keywords

Llama2 7B, Apple M2, LLM, performance, token generation speed, quantization, F16, Q8_0, Q4_0, processing, generation, use cases, practical recommendations, GPU, CPU, caching, memory management, Hugging Face, llama.cpp.