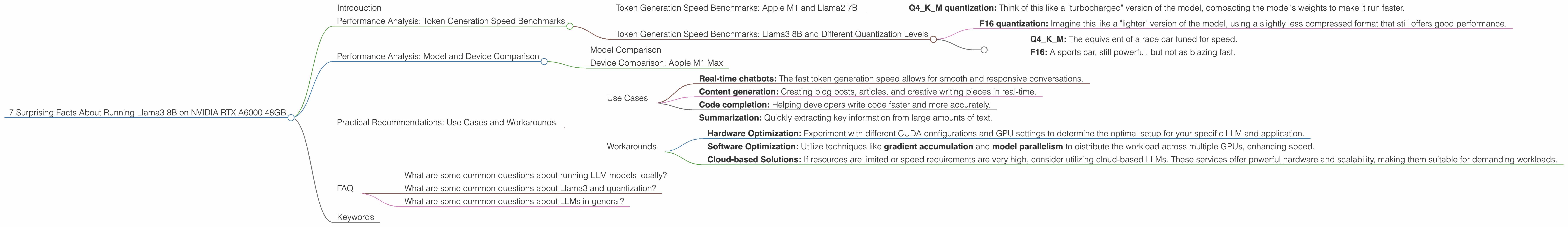

7 Surprising Facts About Running Llama3 8B on NVIDIA RTX A6000 48GB

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models and architectures popping up all the time. But what about local LLM deployment? Can a powerful GPU like the NVIDIA RTX A6000 supply the processing muscle these massive models require?

In this deep dive, we’ll explore the performance of Llama3 8B, a popular open-source LLM, specifically on the RTX A6000 48GB GPU. We'll uncover some surprising insights about its capabilities and limitations, providing valuable information for developers who are looking to run local LLM applications.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: RTX A6000 and Llama3 8B

Let's start with the basics: token generation speed. This measures how quickly the LLM can produce text, which is crucial for real-time applications. Based on our data, the RTX A6000 generates 102.22 tokens per second when running Llama3 8B with Q4_K_M quantization (a technique that reduces the memory footprint and speeds up generation).

- Q4_K_M quantization: Think of this as a "turbocharged" version of the model. The weights are compressed to roughly 4 to 5 bits each, so far less data has to be read from GPU memory for every token.

Token Generation Speed Benchmarks: Llama3 8B and Different Quantization Levels

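The RTX A6000 can also run Llama3 8B in F16 (16-bit floating point, which is not a quantized format), but it's noticeably slower, generating only 40.25 tokens per second.

- F16: This is the uncompressed baseline rather than a compressed format. Every weight is stored as a 16-bit float, which keeps the weights at or very close to their original precision but means several times more data per token than a 4-bit format.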

Think of it this way:

- Q4_K_M: The equivalent of a race car tuned for speed.

- F16: A sports car, still powerful, but not as blazing fast.
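To put the gap between the two formats in numbers, here is a small sketch that uses only the two throughput figures quoted above. The helper names `speedup` and `ms_per_token` are made up for this illustration.

```python
# Throughput figures quoted in this article (Llama3 8B on the RTX A6000).
TOKENS_PER_SECOND = {"Q4_K_M": 102.22, "F16": 40.25}


def speedup(fast_rate: float, slow_rate: float) -> float:
    """Return how many times faster fast_rate is than slow_rate."""
    return fast_rate / slow_rate


def ms_per_token(rate: float) -> float:
    """Convert tokens per second into average milliseconds per token."""
    return 1000.0 / rate


ratio = speedup(TOKENS_PER_SECOND["Q4_K_M"], TOKENS_PER_SECOND["F16"])
print(f"Q4_K_M is about {ratio:.2f}x faster than F16")
for name, rate in TOKENS_PER_SECOND.items():
    print(f"{name}: {ms_per_token(rate):.1f} ms per token")
```

With these figures the ratio works out to roughly 2.5x, or about 10 ms versus 25 ms per generated token.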

Performance Analysis: Model and Device Comparison

Model Comparison

Let's look at how Llama3 8B performs compared to its larger sibling, Llama3 70B. With Q4_K_M quantization, Llama3 70B generates significantly fewer tokens per second on the RTX A6000: 14.58, roughly one seventh of the 8B model's rate. While Llama3 70B has far more parameters and can potentially handle more complex tasks, that capacity comes at the cost of speed.
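A back-of-the-envelope estimate of weight memory helps put these numbers in context. The sketch below is not taken from the benchmark data: it assumes nominal parameter counts of 8 and 70 billion, an approximate average of 4.85 bits per weight for the 4-bit format, and treats the card as 48 decimal GB. It also ignores the KV cache and activations, so real usage will be higher.

```python
VRAM_GB = 48  # RTX A6000 memory, treated here as 48 decimal GB

# Assumed values, not measurements from this article:
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}   # nominal parameter counts
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}   # 4.85 is an approximate average


def weight_gb(params: float, bits: float) -> float:
    """Estimate weight storage in decimal gigabytes."""
    return params * bits / 8 / 1e9


for model, params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the 70B model at F16 would need about 140 GB for weights alone, far beyond a single 48 GB card, while the 4-bit version comes in a little over 42 GB and leaves only a few GB for everything else.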

Device Comparison: Apple M1 Max

While we are focusing on the RTX A6000, it's worth noting that other devices like the Apple M1 Max also offer respectable performance with LLMs. For example, the M1 Max can achieve 21.38 tokens per second with Llama2 7B. That figure is for a different model, so it is not a like-for-like comparison with the Llama3 8B numbers above. The M1 Max also has different strengths and weaknesses compared to the RTX A6000, particularly when it comes to memory bandwidth and power consumption.

Practical Recommendations: Use Cases and Workarounds

Use Cases

The RTX A6000's performance with Llama3 8B makes it suitable for a variety of applications, particularly those where speed is critical. Here are some examples:

- Real-time chatbots: The fast token generation speed allows for smooth and responsive conversations.

- Content generation: Creating blog posts, articles, and creative writing pieces in real-time.

- Code completion: Helping developers write code faster and more accurately.

- Summarization: Quickly extracting key information from large amounts of text.
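To get a feel for what this speed means in each use case, the sketch below converts the Q4_K_M throughput into approximate generation times. The reply lengths are hypothetical values chosen for illustration, and the estimate covers only token generation, not the time spent processing the prompt.

```python
RATE_Q4_K_M = 102.22  # tokens per second, from the benchmark above

# Hypothetical reply lengths in tokens, chosen only for illustration.
TYPICAL_TOKENS = {
    "code completion": 40,
    "chatbot reply": 150,
    "summary": 300,
    "blog post draft": 1000,
}


def generation_seconds(tokens: int, rate: float = RATE_Q4_K_M) -> float:
    """Estimate seconds needed to generate `tokens` tokens at `rate` tokens/s."""
    return tokens / rate


for task, tokens in TYPICAL_TOKENS.items():
    print(f"{task}: {tokens} tokens in about {generation_seconds(tokens):.1f} s")
```

Short completions finish in well under a second at this rate, while a 1000-token draft takes on the order of ten seconds.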

Workarounds

Running larger LLMs like Llama3 70B locally on the RTX A6000 may be challenging due to speed limitations. However, several workarounds can improve performance:

- Hardware Optimization: Experiment with different CUDA configurations and GPU settings to determine the optimal setup for your specific LLM and application.

- Software Optimization: When more than one GPU is available, use model parallelism to split the model across devices and distribute the workload.

- Cloud-based Solutions: If resources are limited or speed requirements are very high, consider utilizing cloud-based LLMs. These services offer powerful hardware and scalability, making them suitable for demanding workloads.
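Whichever workaround you try, it helps to measure throughput the same way before and after each change. Below is a minimal timing sketch. `measure_tokens_per_second` is a helper written for this example, and `fake_generate` is a hypothetical stand-in for whatever streaming call your inference library provides. Neither is a real library API.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[str], Iterable[str]], prompt: str
) -> float:
    """Time a token-yielding callable and return tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate(prompt))  # consume the whole stream
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str):
    """Hypothetical stand-in for a real model: yields 50 tokens, ~1 ms apart."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate, "Hello")
print(f"{rate:.1f} tokens/s")
```

To benchmark a real setup, replace `fake_generate` with a function that yields tokens from your model, and keep the prompt and output length fixed across runs so the numbers stay comparable.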

FAQ

What are some common questions about running LLMs locally?

Q: What are the best GPUs for running LLMs locally?

A: The RTX A6000 is a powerful choice, but other high-end GPUs like the NVIDIA RTX 4090 and AMD Radeon RX 7900 XT also offer excellent performance. The best GPU for you depends on your specific needs and budget.

Q: How can I optimize LLM performance on my GPU?

A: Experiment with different quantization levels, CUDA settings, and GPU memory allocation. If you have more than one GPU, consider model parallelism to distribute the workload.

Q: What are the advantages of running LLMs locally?

A: Local deployment offers greater control over your data, no network round trip to a remote server, and increased privacy compared to cloud-based solutions.

Q: What are the disadvantages of running LLMs locally?

A: It can be expensive to purchase high-end GPUs, and managing resources can be challenging.

What are some common questions about Llama3 and quantization?

Q: What is quantization?

A: Quantization is a technique that reduces the memory size of an LLM by storing its weights as lower-precision numbers (for example, 4-bit integers instead of 16-bit floats). This makes the model faster to load and run.
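The idea can be shown with a toy example. The sketch below applies simple symmetric 4-bit quantization with a single shared scale to a handful of made-up weights. It is a deliberately simplified illustration, not the scheme behind the Q4_K_M format, which is more elaborate.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up example weights, for illustration only.
weights = [0.12, -0.70, 0.33, 0.06, -0.41, 0.68, -0.02, 0.26]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("scale:", scale)
print("max round-trip error:", round(max_error, 4))
```

Each weight now needs only a small integer plus a share of one scale value, at the cost of a rounding error of at most half the scale. That error is the source of the accuracy loss discussed in the answers below.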

Q: Why is quantization important for LLMs?

A: LLMs can have huge memory footprints (billions of parameters). Quantization helps to decrease the size of the model, allowing it to run on devices with less memory. It also speeds up operations like token generation.

Q: How does Llama3 8B with Q4_K_M quantization compare to F16?

A: On the RTX A6000, Q4_K_M generates tokens significantly faster (102.22 vs. 40.25 tokens per second), but it may lose some accuracy compared to the unquantized F16 weights.

Q: What are the trade-offs between different quantization levels?

A: More aggressive quantization (fewer bits per weight, like Q4_K_M) offers greater speed and a smaller memory footprint but may cost some accuracy. Higher-precision formats (like F16) maintain accuracy but are slower and larger.

What are some common questions about LLMs in general?

Q: What are LLMs?

A: LLMs are a type of artificial intelligence that can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

Q: What are some popular LLMs?

A: In addition to Llama3, some popular LLMs include GPT-3, LaMDA, and PaLM.

Q: How do LLMs work?

A: LLMs are trained on massive amounts of text data and learn to recognize patterns and relationships between words. This allows them to generate coherent and contextually relevant text.

Q: What are some potential applications of LLMs?

A: LLMs have a broad range of applications, including chatbots, customer service, text summarization, content creation, and code generation.

Keywords

LLM, Llama3, NVIDIA RTX A6000, GPU, token generation, performance, quantization, Q4_K_M, F16, deep dive, local deployment, practical recommendations, use cases, workarounds, limitations, advantages, disadvantages, chatbots, content generation, code completion, summarization, hardware optimization, software optimization, cloud-based solutions, model parallelism, open source, AI, machine learning, natural language processing, NLP, text generation, language models, deep learning, computer science, technology, data science, development, engineering, coding, programming, applications, solutions