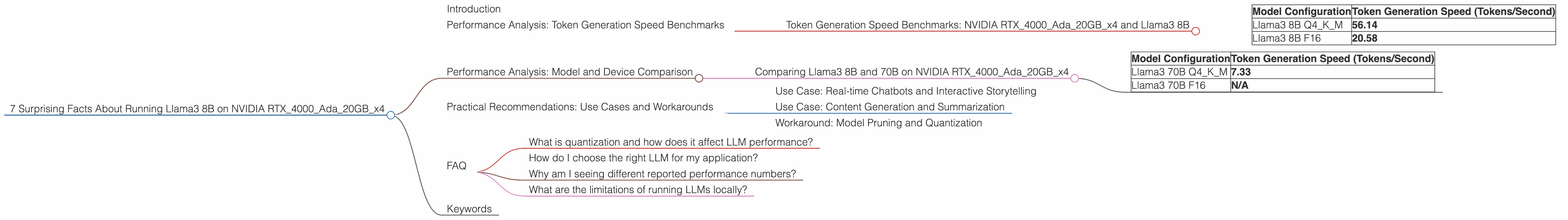

7 Surprising Facts About Running Llama3 8B on NVIDIA RTX 4000 Ada 20GB x4

Introduction

The world of large language models (LLMs) is buzzing with excitement, and rightly so! These AI powerhouses are revolutionizing everything from content creation to customer service. But running LLMs locally can be a challenge, especially when you're dealing with heavyweights like the Llama 3 family. This article dives deep into the performance of Llama 3 8B running on a beefy setup of four NVIDIA RTX 4000 Ada 20GB GPUs (80 GB of VRAM in total), revealing some surprising facts and actionable insights.

Whether you're a developer looking to fine-tune your LLMs or a curious tech enthusiast, this deep dive will equip you with the knowledge you need to make informed decisions about your LLM deployment strategies. Get ready to explore the uncharted territory of local Llama 3 performance, where we'll uncover the hidden potential of these powerful models.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 8B

Let's start with the heart of the matter: token generation speed. This metric tells us how efficiently our LLM can churn out text, which is crucial for real-time applications. Here's a breakdown of the results for Llama 3 8B running on our quad-GPU setup:

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 56.14 |
| Llama3 8B F16 | 20.58 |

Key Observations:

- The Llama 3 8B model reaches 56.14 tokens per second when quantized to Q4_K_M, compared with 20.58 tokens per second in the F16 configuration.

- That makes Q4_K_M roughly 2.7 times faster than F16 on this setup. This highlights the trade-off between model size (smaller with Q4_K_M) and precision (higher with F16) when it comes to token generation speed.

Think of it like this: Imagine two teams of writers, one working in short, concise sentences (Q4_K_M) and the other in more detailed, complex sentences (F16). The team with the shorter sentences can write faster, even though the team with the longer sentences might produce more nuanced content.

It's clear that Q4_K_M quantization is a winner for high-speed token generation! For applications like real-time chatbots or interactive storytelling, this performance advantage is a game-changer.
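To put a number on that advantage, here's a minimal sketch that computes the speedup from the two figures in the table above. The helper name `speedup` is just an illustrative choice, not part of any benchmarking tool.

```python
# Throughput figures copied from the table above (tokens per second).
Q4_K_M_TPS = 56.14
F16_TPS = 20.58


def speedup(fast_tps: float, slow_tps: float) -> float:
    """Return how many times faster `fast_tps` is than `slow_tps`."""
    if slow_tps <= 0:
        raise ValueError("slow_tps must be positive")
    return fast_tps / slow_tps


ratio = speedup(Q4_K_M_TPS, F16_TPS)
print(f"Q4_K_M is {ratio:.2f}x faster than F16")
```

The same helper works for any pair of throughput numbers, so you can reuse it when comparing your own measurements.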

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B and 70B on NVIDIA RTX 4000 Ada 20GB x4

Now, let's see how Llama 3 8B stacks up against its bigger brother, Llama 3 70B, still on our trusty quad-GPU setup.

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 70B Q4_K_M | 7.33 |
| Llama3 70B F16 | N/A |

Key Observations:

- The Llama 3 8B model generates tokens far faster than the Llama 3 70B model: 56.14 versus 7.33 tokens per second at Q4_K_M, a gap of roughly 7.7 times. That is expected, since the 70B model has almost nine times as many parameters.

- No result is available for the Llama 3 70B F16 configuration. The likely reason is memory: at 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, while four 20 GB cards provide 80 GB in total.

Let's break down the performance difference:

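- Model size: Larger models like Llama 3 70B have more weights to read and compute for every generated token, leading to slower token generation speeds. It's like trying to squeeze a giant elephant through a tiny doorway!

- Quantization: The Q4_K_M configuration is a smaller representation of the model, so there is less data to move per token. Both models in this comparison use Q4_K_M, so the gap here comes from model size, and quantization is likely what lets the 70B model fit on this hardware at all.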

In short, for this hardware setup, the Llama 3 8B model is a clear winner in terms of speed. While the 70B model might offer more nuanced responses and capabilities, the smaller footprint of the 8B model makes it a go-to choice for applications that prioritize speed.
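Here's a rough back-of-the-envelope sketch of why model size and quantization matter so much on an 80 GB setup. It estimates the memory needed for the weights only. The parameter counts are the nominal 8 and 70 billion, and the figure of about 4.8 bits per weight for Q4_K_M is an assumption for illustration; real files vary, and the KV cache and activations need extra room on top.

```python
TOTAL_VRAM_GB = 4 * 20  # four 20 GB cards

# Assumed average bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

# Nominal parameter counts taken from the model names.
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Estimate memory for the weights alone, in GB (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit; ignores KV cache and activations."""
    return weight_memory_gb(n_params, bits_per_weight) <= vram_gb


for model, n_params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        gb = weight_memory_gb(n_params, bits)
        verdict = "fits" if fits(n_params, bits, TOTAL_VRAM_GB) else "does not fit"
        print(f"{model} {fmt}: ~{gb:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, the only combination that does not fit is 70B at F16, which matches the one missing result in the table.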

Practical Recommendations: Use Cases and Workarounds

Use Case: Real-time Chatbots and Interactive Storytelling

For applications like real-time chatbots or interactive storytelling, the speed of token generation is paramount. The Llama 3 8B Q4_K_M configuration, with its impressive speed of 56.14 tokens per second, is an ideal choice for these use cases.

Think of it like this: You wouldn't want your chatbot to take forever to generate a response, leaving your user staring at a blank screen. The Llama 3 8B Q4_K_M configuration ensures a smooth and engaging conversational experience.
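To see what these speeds mean for a user waiting on a reply, here's a small sketch that converts tokens per second into seconds per response. The 200-token reply length is an assumption picked for illustration, and the estimate covers generation only, not the time spent processing the prompt.

```python
# Measured generation speeds from the benchmark tables (tokens per second).
SPEEDS = {
    "Llama3 8B Q4_K_M": 56.14,
    "Llama3 8B F16": 20.58,
    "Llama3 70B Q4_K_M": 7.33,
}

REPLY_TOKENS = 200  # assumed length of a typical chatbot reply


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate `n_tokens` at a steady `tokens_per_second`."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


for name, tps in SPEEDS.items():
    seconds = generation_seconds(REPLY_TOKENS, tps)
    print(f"{name}: about {seconds:.1f} s for a {REPLY_TOKENS}-token reply")
```

If you stream tokens to the user as they arrive, the wait feels shorter than these totals suggest, but the ranking between configurations stays the same.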

Use Case: Content Generation and Summarization

For tasks like content generation and summarization, the balance between speed and accuracy is important. While the Llama 3 8B model might be faster, the Llama 3 70B model could offer more nuanced and detailed outputs.

Consider this analogy: If you're writing a short blog post, speed might be more important than accuracy. But if you're writing a complex research paper, accuracy takes precedence, even if it takes a little longer.

Workaround: Model Pruning and Quantization

If you're working with resource-constrained devices or if you need to squeeze more performance out of your hardware, consider exploring model pruning and quantization techniques.

Model pruning: This involves removing unnecessary connections and parameters from the model, ultimately reducing its size and improving its speed. It's like streamlining your code by removing unnecessary lines!
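As a toy illustration of the idea, here's a minimal sketch of magnitude pruning on a plain list of numbers: it zeroes out the fraction of weights with the smallest absolute values. The function name and the sample weights are made up for this example, and real pruning pipelines work on whole tensors and usually retrain afterwards.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


weights = [0.9, -0.05, 0.4, 0.01, -0.7, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)
```

Keep in mind that zeros only translate into speed if the runtime or file format can actually skip them, so pruning is not a free win on every stack.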

Quantization: This involves converting the model's weights and activations from high-precision floating-point numbers to lower-precision formats, reducing storage and computation requirements. It's like using a coarser unit of measurement for your calculations: you save space and time, usually at a small cost in accuracy.
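To make this concrete, here's a toy sketch of symmetric 4-bit quantization on a short list of weights. This is not the Q4_K_M scheme from the benchmarks, which works on blocks of weights and is more elaborate; it is a simplified stand-in, and the function names are illustrative.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to signed 4-bit integer codes (-8..7) with one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.9, -0.05, 0.4, 0.01, -0.7, 0.02]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs 4 bits instead of 16, and the price is a small rounding error per weight. Notice that the tiniest weights collapse to zero, which is exactly the kind of fine detail a quantized model gives up.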

FAQ

What is quantization and how does it affect LLM performance?

Quantization is a technique used to reduce the size of a model by converting its weights and activations from higher-precision floating-point numbers to lower-precision formats. This allows the model to be stored and processed more efficiently, leading to faster performance.

Think of it like using different levels of zoom on a map: A high-precision model is like a zoomed-in map, showing every detail. A quantized model is like a zoomed-out map, focusing on the big picture while removing some minor details.

How do I choose the right LLM for my application?

The choice of LLM depends on your specific needs and constraints. Consider factors like model size, performance, accuracy, and data requirements. Smaller models are generally faster but less accurate, while larger models are more accurate but slower.
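One simple way to turn that advice into a rule of thumb is sketched below: pick the largest measured model that still meets your minimum speed. The configurations and speeds come from the benchmark tables in this article, while the selection rule itself (prefer more parameters, break ties by speed) is an assumption you may want to adjust.

```python
from typing import NamedTuple, Optional


class Config(NamedTuple):
    name: str
    params_billion: int
    tokens_per_second: float


# Measured results from the benchmark tables in this article.
MEASURED = [
    Config("Llama3 8B Q4_K_M", 8, 56.14),
    Config("Llama3 8B F16", 8, 20.58),
    Config("Llama3 70B Q4_K_M", 70, 7.33),
]


def pick_config(min_tps: float) -> Optional[Config]:
    """Return the largest config meeting `min_tps`, or None if none qualifies."""
    candidates = [c for c in MEASURED if c.tokens_per_second >= min_tps]
    if not candidates:
        return None
    # Prefer more parameters; among equal sizes, prefer the faster config.
    return max(candidates, key=lambda c: (c.params_billion, c.tokens_per_second))


for need in (5, 15, 100):
    choice = pick_config(need)
    label = choice.name if choice else "nothing measured is fast enough"
    print(f"need >= {need} tok/s: {label}")
```

A batch summarization job that tolerates a few tokens per second lands on the 70B model, while anything interactive lands on the 8B one.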

Why am I seeing different reported performance numbers?

Benchmarking results and performance metrics can vary depending on factors like hardware configurations, software versions, model versions, and even the specific data used for testing.

It's like trying to compare apples and oranges: Two different models might have similar performance on one benchmark, but might differ significantly on another.
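If you want to check numbers on your own machine, it helps to run several trials and look at the spread rather than trusting a single run. Here's a minimal sketch of such a harness. `fake_generate` is a hypothetical stand-in that sleeps briefly and pretends to emit 50 tokens; you would swap in a call to your actual model that returns the number of tokens it produced.

```python
import statistics
import time
from typing import Callable


def measure_tps(generate: Callable[[], int], runs: int = 5) -> tuple[float, float]:
    """Call `generate` several times; return (mean, stdev) of tokens per second."""
    if runs < 2:
        raise ValueError("need at least two runs to compute a spread")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        n_tokens = generate()  # must return the number of tokens produced
        elapsed = time.perf_counter() - start
        samples.append(n_tokens / elapsed)
    return statistics.mean(samples), statistics.stdev(samples)


def fake_generate() -> int:
    """Hypothetical stand-in for a real model call."""
    time.sleep(0.01)
    return 50


mean_tps, spread = measure_tps(fake_generate)
print(f"{mean_tps:.1f} tokens/s on average (stdev {spread:.1f})")
```

When you compare your results with published ones, keep the prompt, output length, model file, and software version fixed, since each of them can shift the numbers.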

What are the limitations of running LLMs locally?

Running LLMs locally can be resource-intensive, requiring powerful hardware and substantial memory. It might also be challenging to keep models up-to-date, as new versions and updates are frequently released.

Keywords

LLM, Llama 3, NVIDIA RTX 4000 Ada 20GB, token generation speed, performance, benchmarks, quantization, Q4_K_M, F16, model pruning, use cases, real-time chatbot, interactive storytelling, content generation, summarization.