7 Surprising Facts About Running Llama3 8B on NVIDIA A40 48GB

Introduction

Running a large language model (LLM) locally can be a game-changer, giving you instant access to powerful text generation capabilities without relying on cloud services. But with a plethora of models and hardware options, finding the right combination to unleash optimal performance can feel like navigating a labyrinth. In this deep dive, we'll explore the exciting world of local LLMs by focusing on the NVIDIA A40_48GB graphics card, a beast of a GPU known for its power, and Llama3 8B, a robust and versatile model. Prepare to be amazed as we uncover some surprising facts about their performance together!

Performance Analysis: Token Generation Speed Benchmarks

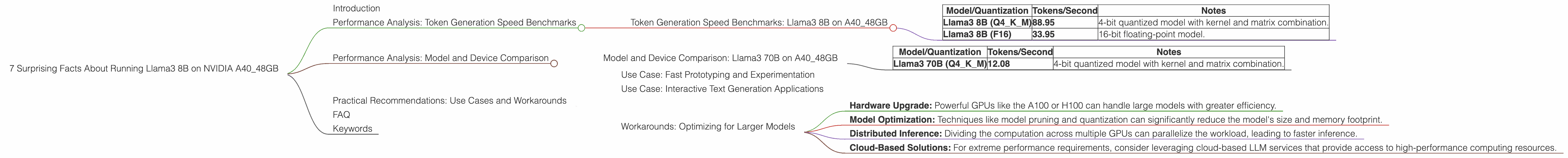

Token Generation Speed Benchmarks: Llama3 8B on A40_48GB

Let's dive straight into the heart of the matter - speed! How fast can we generate those sweet, sweet tokens with a Llama3 8B model residing on an A40_48GB GPU? The table below reveals the results, which will make your inner geek sing with joy (or perhaps a little confusion, which we'll address in a minute):

| Model/Quantization | Tokens/Second | Notes |
|---|---|---|
| Llama3 8B (Q4_K_M) | 88.95 | 4-bit "k-quant" format; the "M" marks the medium variant. |
| Llama3 8B (F16) | 33.95 | 16-bit floating-point weights. |

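What on earth is "quantization"? Think of it like compressing a video file. We're storing the model's weights as smaller, lower-precision numbers, so the GPU has less data to read for every token and the model runs faster without sacrificing too much accuracy. Q4_K_M is a 4-bit "k-quant" format from the llama.cpp ecosystem: weights are quantized in small blocks that each carry their own scale, and the "M" (medium) variant keeps some of the more sensitive tensors at higher precision.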
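To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a deliberately simplified scheme (one shared scale per block, symmetric rounding) and is not the actual Q4_K_M layout; the function names and the sample weights are made up for illustration.

```python
def quantize_block(weights, bits=4):
    """Map one block of floats to small signed integers sharing a single scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for signed 4-bit values
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0    # avoid dividing by zero
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Hypothetical weights, chosen only to illustrate the round trip.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("integer codes:", codes)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs 4 bits plus a share of the block's scale instead of 16 bits, at the cost of a rounding error of at most half a quantization step.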

Here's the kicker: The Q4_K_M variant of Llama3 8B on the A40_48GB generates tokens at a blazing 88.95 per second! That's about 2.6 times as fast as the F16 version, which clocks in at 33.95 tokens per second. To put this in perspective, imagine generating a 1,000-token draft (roughly 750 English words). With the Q4_K_M model, you'd have it in just over 11 seconds, versus about 29 seconds with F16.
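If you want to redo this arithmetic for your own output lengths, a small sketch like the following works. The two rates come from the table above; the helper name `seconds_to_generate` is just an illustrative choice.

```python
# Token-generation rates from the benchmark table (tokens per second).
RATES = {"Q4_K_M": 88.95, "F16": 33.95}


def seconds_to_generate(num_tokens, tokens_per_second):
    """Time to emit num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


speedup = RATES["Q4_K_M"] / RATES["F16"]
print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")   # 2.62x

for name, rate in RATES.items():
    # Prints 11.2 s for Q4_K_M and 29.5 s for F16.
    print(f"{name}: {seconds_to_generate(1000, rate):.1f} s per 1,000 tokens")
```

This counts generation time only; reading a long prompt adds time before the first token appears.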

Performance Analysis: Model and Device Comparison

Now that we've seen the impressive speed of Llama3 8B on the A40_48GB, let's put it in context. We need to be careful here, though: we only have data for the A40_48GB, so a direct comparison with other devices isn't possible at this time. Instead, we'll compare different Llama models on this one GPU; keep in mind that the results will vary on other hardware.

Model and Device Comparison: Llama3 70B on A40_48GB

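Let's step up the game with a larger model, Llama3 70B. There's a catch: we only have data for Llama3 70B with the Q4_K_M quantization scheme, most likely because 70 billion parameters at 16 bits each would need roughly 140 GB for the weights alone, far more than the A40's 48 GB. So we can't repeat the F16-versus-Q4_K_M comparison from before; the fair comparison is against the Q4_K_M variant of Llama3 8B.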

Here's the summarized data:

| Model/Quantization | Tokens/Second | Notes |
|---|---|---|
| Llama3 70B (Q4_K_M) | 12.08 | 4-bit "k-quant" format; the "M" marks the medium variant. |

What does this tell us? At 12.08 tokens per second, Llama3 70B is about 7.4 times slower than Llama3 8B with the same Q4_K_M quantization (88.95 tokens per second) on the A40_48GB. This is expected: the larger model has almost nine times as many parameters to read and process for every token it generates.
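A back-of-the-envelope memory estimate shows how much more the GPU has to hold, and read, for the larger model. The sketch below uses nominal parameter counts (8 and 70 billion) and assumes roughly 4.8 bits per weight for a 4-bit k-quant; that figure is an approximation, and real model files also need room for the KV cache and runtime buffers.

```python
GPU_MEMORY_GB = 48        # NVIDIA A40
ASSUMED_Q4_BITS = 4.8     # assumption: rough average bits per weight for a 4-bit k-quant


def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params_billions * bits_per_weight / 8


def fits_on_gpu(params_billions, bits_per_weight, gpu_memory_gb=GPU_MEMORY_GB):
    """True if the weights alone fit; ignores KV cache and other overhead."""
    return weight_memory_gb(params_billions, bits_per_weight) <= gpu_memory_gb


CONFIGS = [
    ("Llama3 8B, F16", 8, 16),
    ("Llama3 8B, 4-bit", 8, ASSUMED_Q4_BITS),
    ("Llama3 70B, F16", 70, 16),
    ("Llama3 70B, 4-bit", 70, ASSUMED_Q4_BITS),
]

for label, params, bits in CONFIGS:
    size = weight_memory_gb(params, bits)
    verdict = "fits" if fits_on_gpu(params, bits) else "does not fit"
    print(f"{label}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, only the F16 version of the 70B model exceeds the card's memory, while the quantized 70B model fits with little room to spare.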

Practical Recommendations: Use Cases and Workarounds

Now that we've delved into the numbers, let's discuss how these insights can be applied in the real world.

Use Case: Fast Prototyping and Experimentation

With its impressive speed, the Llama3 8B model on the A40_48GB is ideal for rapid prototyping and experimentation. You can quickly test different prompts, tweak generation settings, and explore creative possibilities without long waits between runs.

Use Case: Interactive Text Generation Applications

This setup shines in interactive applications where users expect near-instantaneous responses. Imagine building a real-time chatbot, a code-generating assistant, or a creative writing tool that can keep up with your flow of thought.

Workarounds: Optimizing for Larger Models

If you're dreaming of harnessing the power of a larger model like Llama3 70B or even beyond, don't despair! Here are some potential strategies:

- Hardware Upgrade: Data-center GPUs like the A100 or H100 offer higher memory bandwidth than the A40 and, in their 80 GB versions, more memory, so they can handle large models with greater efficiency.

- Model Optimization: Techniques like model pruning and quantization can significantly reduce the model's size and memory footprint.

- Distributed Inference: Dividing the computation across multiple GPUs can parallelize the workload, leading to faster inference.

- Cloud-Based Solutions: For extreme performance requirements, consider leveraging cloud-based LLM services that provide access to high-performance computing resources.
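To weigh the first three options against each other, it helps to estimate how many GPUs are needed just to hold a model's weights. The sketch below does that under stated assumptions: a nominal 70 billion parameters, about 4.8 bits per weight for the quantized case, and a hypothetical 90% of each GPU's memory usable for weights (the rest reserved for the KV cache and buffers). Splitting a model across GPUs also adds communication overhead that this estimate ignores.

```python
import math


def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params_billions * bits_per_weight / 8


def gpus_needed(params_billions, bits_per_weight, gpu_memory_gb, usable_fraction=0.9):
    """Minimum GPU count to hold the weights; usable_fraction is an assumed headroom."""
    usable_gb = gpu_memory_gb * usable_fraction
    return math.ceil(weight_memory_gb(params_billions, bits_per_weight) / usable_gb)


GPUS = [("A40 (48 GB)", 48), ("A100/H100 (80 GB)", 80)]
PRECISIONS = [("F16", 16), ("4-bit quantized", 4.8)]   # 4.8 bits/weight is an assumption

for gpu_name, memory_gb in GPUS:
    for label, bits in PRECISIONS:
        count = gpus_needed(70, bits, memory_gb)
        print(f"Llama3 70B {label} on {gpu_name}: at least {count} GPU(s)")
```

With these numbers, quantization brings the 70B model down to a single card, while F16 needs several GPUs whichever card you pick.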

FAQ

Q: What's the difference between "generation" and "processing" in your data?

A: "Generation" refers to how fast the model produces new tokens, one at a time; that's the number reported in the tables in this article. "Processing" refers to prompt processing: how fast the model reads the tokens of your input before it starts generating. Prompt tokens can be handled in parallel, so processing speeds are typically much higher than generation speeds.

Q: I don't have an A40_48GB. What other GPUs can I use?

A: The A40_48GB is a powerhouse, but there are other options out there. Consider the A100, H100, or even consumer-grade GPUs like the RTX 4090. Remember, performance will vary depending on the GPU and the model you choose.

Q: I'm just starting out with LLMs. What should I know?

A: The world of LLMs is vast and exciting! Start by exploring different open-source models like Llama2 and investigate resources like Hugging Face. Learn about quantization, model optimization techniques, and how to choose the right hardware for your specific needs.

Keywords

Llama3 8B, NVIDIA A40_48GB, GPU, Token Generation, Speed Benchmarks, Performance Analysis, Quantization, F16, Q4_K_M, Model Optimization, Practical Recommendations, Use Cases, Workarounds, LLMs, Open-Source, Hugging Face, Hardware Upgrade, Distributed Inference.