7 Surprising Facts About Running Llama3 8B on NVIDIA 3090 24GB

Are you ready to delve into the fascinating world of local LLMs? Buckle up, because we're about to explore how the Llama3 8B model performs on the beastly NVIDIA 3090 24GB graphics card. This article walks through the benchmark numbers, what explains them, and the practical trade-offs they point to.

For those unfamiliar with the jargon, LLM stands for "Large Language Model," essentially a sophisticated AI that can understand and generate human-like text. Imagine a virtual genie that can write poems, translate languages, or even code programs. Llama3, a groundbreaking model developed by Meta AI, is a prime example of this technology.

The NVIDIA GeForce RTX 3090 is a powerhouse of a graphics card, and its 24 GB of memory is enough to hold Llama3 8B even at 16-bit precision. But how fast does it actually run the model? Read on for the performance numbers and what they mean in practice.

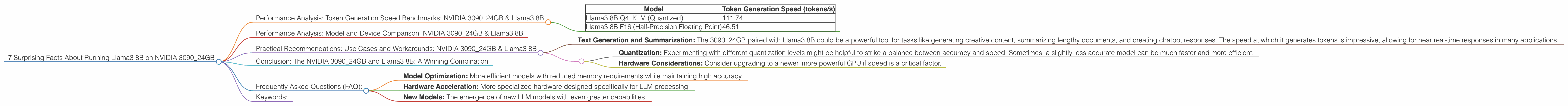

Performance Analysis: Token Generation Speed Benchmarks: NVIDIA 3090 24GB & Llama3 8B

Our focus today is the NVIDIA 3090 24GB running Llama3 8B. We're specifically interested in token generation speed, measured in tokens per second (tokens/s), which tells us how quickly the model can produce text output.

Quantization: A Quick Look

Before we dive into the data, let's briefly touch on quantization, a technique that stores a model's weights using fewer bits per number (for example, around 4 bits instead of 16). This shrinks the model's memory footprint at the cost of a small loss in accuracy. It's a bit like packing a suitcase: you can fit the same clothes into less space if you fold them more tightly, but they may come out a little creased.
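To make the idea concrete, here is a minimal Python sketch of block-wise 4-bit quantization. It assumes a simple symmetric scheme in which each block of weights shares one floating point scale and every weight is stored as a small integer code. The function names and the block size of 32 are illustrative choices, and this is not the exact Q4_K_M layout, which adds further refinements.

```python
# A simplified sketch of block-wise 4-bit quantization.
# Assumption: symmetric quantization with one float scale per block of weights.
# This illustrates the idea only; it is NOT the exact Q4_K_M format.

BLOCK_SIZE = 32  # weights that share one scale factor (illustrative choice)
QMAX = 7         # signed 4-bit codes span -8..7; we use -7..7 for symmetry


def quantize_block(weights):
    """Map a block of floats to small integer codes plus one scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / QMAX if max_abs > 0 else 1.0
    codes = [max(-QMAX, min(QMAX, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights from the integer codes."""
    return [c * scale for c in codes]


def quantize(weights, block_size=BLOCK_SIZE):
    """Quantize a flat list of weights block by block."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


def dequantize(blocks):
    """Concatenate the dequantized blocks back into one flat list."""
    out = []
    for scale, codes in blocks:
        out.extend(dequantize_block(scale, codes))
    return out


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.0, 0.71, -0.07, 0.25, -0.9]
    restored = dequantize(quantize(weights, block_size=4))
    for w, r in zip(weights, restored):
        print(f"{w:+.3f} -> {r:+.3f}")
```

Each weight now costs 4 bits plus a share of its block's scale, and the round-trip error per weight is at most half a quantization step.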

Llama3 8B:

- Q4_K_M: The model has been quantized to roughly 4 bits per weight using the "k-quant" scheme from llama.cpp (the "M" marks the medium-size variant). This cuts the memory needed for the weights to about a third of the F16 version. Think of it like compressing a high-resolution image: you might lose some detail, but the overall quality remains pretty good.

- F16: The model's weights are stored as 16-bit (half-precision) floating point numbers with no further compression. This is essentially the full-quality model and serves as the baseline here: it is more precise than Q4_K_M, but it needs about 16 GB for the weights alone and runs more slowly. Think of it as the original, uncompressed image.
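How much memory do the two formats need? Weight size is simply the number of parameters times the bits per weight, divided by 8 to get bytes. The sketch below assumes about 8.03 billion parameters for Llama3 8B and roughly 4.9 bits per weight on average for Q4_K_M (an approximation, since the format mixes block types and stores per-block scales). It ignores the KV cache and runtime overhead.

```python
# Back-of-the-envelope estimate of weight memory for Llama3 8B.
# Assumptions: ~8.03 billion parameters; F16 uses exactly 16 bits per weight;
# Q4_K_M averages roughly 4.9 bits per weight (approximate, not a spec value).
# KV cache and runtime overhead are ignored.

PARAMS = 8.03e9

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.9,  # approximate average
}


def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Return the size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for fmt, bits in BITS_PER_WEIGHT.items():
        print(f"{fmt:7s}: ~{weight_memory_gb(PARAMS, bits):.1f} GB")
```

Run as-is, it prints roughly 16.1 GB for F16 and 4.9 GB for Q4_K_M, so both fit on a 24 GB card before accounting for the KV cache.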

Let’s bring out the big guns:

| Model | Token Generation Speed (tokens/s) |
|---|---|
| Llama3 8B Q4_K_M (Quantized) | 111.74 |
| Llama3 8B F16 (Half-Precision Floating Point) | 46.51 |

Observations:

- As expected, the quantized model (Q4_K_M) clearly outperforms the half-precision model (F16), generating tokens about 2.4 times as fast (111.74 vs. 46.51 tokens/s). Token generation is largely limited by memory bandwidth: every new token requires reading the model's weights from GPU memory, so smaller weights generally mean faster generation.

- The data also shows that a single consumer card like the 3090 24GB can run Llama3 8B effectively in either format; even the F16 weights (about 16 GB) fit within its 24 GB of memory.
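Why does the smaller format run faster? A common rule of thumb is that single-stream generation is limited by memory bandwidth: producing each token means reading all of the weights from GPU memory once. This sketch turns that into an upper bound, assuming the RTX 3090's 936 GB/s peak memory bandwidth, about 8.03 billion parameters, and roughly 4.9 bits per weight for Q4_K_M (an approximation). It ignores KV-cache traffic, kernel overheads, and compute limits, so it gives a ceiling, not a prediction.

```python
# Rough upper bound on single-stream token generation speed, assuming decoding
# is memory-bandwidth bound (every weight is read once per generated token).
# Assumptions: 936 GB/s peak bandwidth for the RTX 3090; ~8.03e9 parameters;
# Q4_K_M at ~4.9 bits/weight (approximate). KV-cache reads and kernel
# overheads are ignored, so real speeds will be lower than this bound.

BANDWIDTH_GB_S = 936.0
PARAMS = 8.03e9

BITS_PER_WEIGHT = {"Q4_K_M": 4.9, "F16": 16.0}
MEASURED_TOKENS_S = {"Q4_K_M": 111.74, "F16": 46.51}  # benchmark table values


def max_tokens_per_second(params, bits_per_weight, bandwidth_gb_s):
    """Bandwidth divided by bytes read per token (the full set of weights)."""
    weight_gb = params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    for fmt, measured in MEASURED_TOKENS_S.items():
        bound = max_tokens_per_second(PARAMS, BITS_PER_WEIGHT[fmt], BANDWIDTH_GB_S)
        print(f"{fmt:7s}: bound ~{bound:.0f} tok/s, measured {measured} tok/s "
              f"({measured / bound:.0%} of bound)")
```

It puts the F16 ceiling at roughly 58 tokens/s and the Q4_K_M ceiling at roughly 190 tokens/s, so both measured speeds sit below their bounds. The F16 run gets closer to its ceiling, which is consistent with (though not proof of) extra overhead in the quantized kernels.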

Performance Analysis: Model and Device Comparison: NVIDIA 3090 24GB & Llama3 8B

While the 3090 24GB is impressive, it's helpful to see how it stacks up against other models and devices. The data here covers only the NVIDIA 3090 24GB running Llama3 8B, so we can't compare it directly with other GPUs or models. It can, however, serve as a baseline for future comparisons.

Practical Recommendations: Use Cases and Workarounds: NVIDIA 3090 24GB & Llama3 8B

With the performance data in hand, let's consider some scenarios and practical recommendations:

Use Cases:

- Text Generation and Summarization: The 3090 24GB paired with Llama3 8B can be a powerful tool for generating creative content, summarizing lengthy documents, and producing chatbot responses. At over 100 tokens/s with Q4_K_M (and about 47 tokens/s with F16), text appears faster than most people can read it, allowing near real-time responses in many applications.
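To put the speeds in user-facing terms, this small sketch converts the measured rates into the time needed to stream a response of a given length. It assumes the generation speed stays constant over the whole response and leaves out prompt processing time, which the benchmark figures do not cover.

```python
# Estimate how long it takes to stream a response at the measured speeds.
# Assumptions: constant generation speed for the whole response;
# prompt processing (prefill) time is not included.

MEASURED_TOKENS_S = {"Q4_K_M": 111.74, "F16": 46.51}


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a fixed tokens-per-second rate."""
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    for length in (100, 500, 2000):
        times = ", ".join(
            f"{fmt} {generation_seconds(length, tps):.1f} s"
            for fmt, tps in MEASURED_TOKENS_S.items()
        )
        print(f"{length:5d} tokens -> {times}")
```

At these rates a 500-token answer takes about 4.5 s with Q4_K_M and about 10.8 s with F16, not counting the time to process the prompt.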

Workarounds for Optimizing Performance:

- Quantization: Experiment with different quantization levels to strike a balance between accuracy and speed. Lower-bit formats are smaller and faster but lose more accuracy, and a slightly less accurate model is often much faster and more memory-efficient.

- Hardware Considerations: If speed is critical, look for a GPU with higher memory bandwidth, since that largely sets token generation speed; more VRAM lets you run larger models or higher-precision formats.
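One way to apply both points is to work out which formats fit your card before experimenting. The hypothetical `pick_format` helper below returns the most precise format whose estimated weights fit in VRAM with headroom to spare. The bits-per-weight values are rough averages for llama.cpp GGUF formats, the 20% headroom for the KV cache and runtime is an arbitrary choice, and Llama3 70B is assumed to have about 70.6 billion parameters.

```python
# Hypothetical helper: pick the most precise format whose weights fit in VRAM.
# Assumptions: bits-per-weight values are approximate averages for llama.cpp
# GGUF formats; the 20% headroom for KV cache and runtime is illustrative.

APPROX_BITS_PER_WEIGHT = [  # ordered from most to least precise
    ("F16", 16.0),
    ("Q8_0", 8.5),
    ("Q6_K", 6.56),
    ("Q4_K_M", 4.9),
    ("Q4_0", 4.5),
]


def pick_format(params: float, vram_gb: float, headroom: float = 0.2):
    """Return the first (most precise) format that fits, or None."""
    budget_gb = vram_gb * (1 - headroom)
    for name, bits in APPROX_BITS_PER_WEIGHT:
        if params * bits / 8 / 1e9 <= budget_gb:
            return name
    return None  # nothing fits; consider a smaller model or CPU offloading


if __name__ == "__main__":
    print(pick_format(8.03e9, 24))   # Llama3 8B on a 24 GB card: F16
    print(pick_format(8.03e9, 12))   # same model on a 12 GB card: Q8_0
    print(pick_format(70.6e9, 24))   # Llama3 70B on 24 GB: None
```

Starting from the most precise format that fits and stepping down only when you need more speed or memory keeps the accuracy cost of quantization as small as possible.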

Conclusion: The NVIDIA 3090 24GB and Llama3 8B: A Winning Combination

The combination of the NVIDIA 3090 24GB with the Llama3 8B model shows the potential of local LLMs for practical applications. At 46.51 to 111.74 tokens/s depending on the format, this hardware-software pairing generates text fast enough for a range of real-world use cases.

Remember, LLM technology evolves quickly: new models, optimizations, and hardware keep emerging. Stay curious, keep experimenting, and keep an eye on what comes next for local LLMs.

Frequently Asked Questions (FAQ):

Q: What is the difference between Llama3 8B and Llama3 70B?

A: The main difference is size: Llama3 8B has about 8 billion parameters, while Llama3 70B has about 70 billion. Larger models typically produce higher-quality output but need far more compute and memory. At 16-bit precision the 70B model's weights alone take roughly 140 GB, and even at around 4 bits they need about 40 GB, more than a single 3090 24GB can hold.

Q: How do I choose the right LLM model for my project?

A: The choice of an LLM model depends on your specific needs and constraints. Consider factors such as the desired accuracy, available resources (like GPU memory), and the type of tasks you'll be performing. Research and experimentation are key.

Q: How does quantization impact the accuracy of an LLM?

A: Quantization reduces accuracy somewhat, but the trade-off is often worth it for the gains in speed and memory efficiency. At around 4 bits (as with Q4_K_M) the loss is usually modest for many applications; it grows as you go to fewer bits and varies by task, so it's worth testing on your own workload.
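The effect of bit width on precision is easy to see numerically. This illustrative sketch quantizes synthetic Gaussian "weights" with a simple symmetric per-block scheme at several bit widths and reports the mean round-trip error. The synthetic data, the block size of 32, and the function name are assumptions; weight-level error is only a proxy, and task accuracy still has to be measured on a real workload.

```python
# Illustrative only: round-trip error of simple symmetric per-block
# quantization at several bit widths, on synthetic Gaussian weights.
# Assumptions: block size 32, no llama.cpp-specific refinements.

import random


def roundtrip_error(weights, bits, block_size=32):
    """Mean absolute error after quantizing and dequantizing the weights."""
    qmax = 2 ** (bits - 1) - 1  # e.g. 7 for 4-bit, 127 for 8-bit
    total = 0.0
    for i in range(0, len(weights), block_size):
        block = weights[i:i + block_size]
        max_abs = max(abs(w) for w in block) or 1.0  # avoid a zero scale
        scale = max_abs / qmax
        for w in block:
            q = max(-qmax, min(qmax, round(w / scale)))
            total += abs(w - q * scale)
    return total / len(weights)


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(32_000)]
    for bits in (8, 6, 4, 3):
        print(f"{bits}-bit: mean abs error {roundtrip_error(weights, bits):.6f}")
```

Each bit removed roughly doubles the quantization step size, and the average error grows with it.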

Q: What are some future trends in local LLM development?

A: The future of local LLM development is exciting! Expect to see advancements in:

- Model Optimization: More efficient models with reduced memory requirements while maintaining high accuracy.
- Hardware Acceleration: More specialized hardware designed specifically for LLM processing.
- New Models: The emergence of new LLM models with even greater capabilities.

Keywords:

Llama3 8B, NVIDIA 3090 24GB, GPU, GPU Cores, LLM, Large Language Model, Token Generation Speed, Tokens/s, Quantization, Q4_K_M, F16, Half-Precision, Performance Benchmarks, Text Generation, Summarization, Local LLM, Model Optimization, Hardware Acceleration, Future Trends.