7 Surprising Facts About Running Llama3 70B on NVIDIA RTX 5000 Ada 32GB

Introduction

The world of Large Language Models (LLMs) is evolving at an electrifying pace, with new models like Llama 3 emerging as powerful tools for natural language processing (NLP) tasks. But what happens when you try to run these behemoths on your local machine? Can you truly tame the power of a 70 billion parameter model on a single workstation GPU? We're diving deep into the performance of Llama3 on the NVIDIA RTX 5000 Ada 32GB, uncovering surprising insights and practical considerations for your local LLM adventures.

Performance Analysis: Token Generation Speed Benchmarks

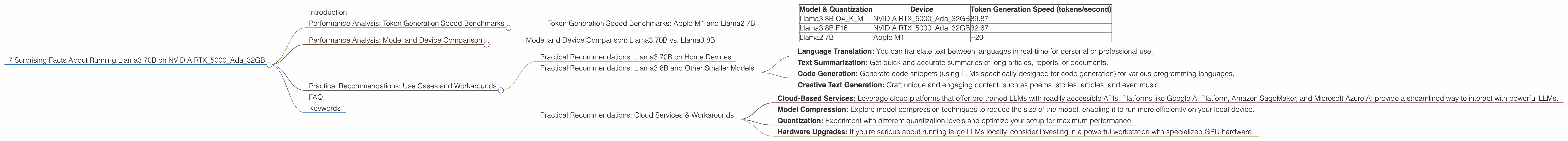

Token Generation Speed Benchmarks: Llama3 8B on RTX 5000 Ada vs. Llama2 7B on Apple M1

Let's begin by looking at the token generation speed, a critical metric for measuring how quickly a model can produce text. Think of it as the speed at which your LLM can churn out words – the higher the number, the faster your model can generate text.
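To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: time one generation call and divide the number of tokens produced by the elapsed seconds. The `generate` callable and the `fake_generate` stand-in are hypothetical placeholders, not part of any specific library; you would pass in whatever generation function your inference runtime exposes.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str], List[str]], prompt: str,
                      clock: Callable[[], float] = time.perf_counter) -> float:
    """Time one generation call and return generated tokens per second."""
    start = clock()
    tokens = generate(prompt)
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return len(tokens) / elapsed


def fake_generate(prompt: str) -> List[str]:
    """Hypothetical stand-in for a real model call: sleeps, then returns tokens."""
    time.sleep(0.05)
    return prompt.split()


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, "the quick brown fox jumps")
    print(f"{rate:.1f} tokens/second")
```

Real benchmarks usually report prompt processing and token generation separately and average over several runs, so treat a single timing like this as a rough indicator only.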

The data provided focuses on Llama3 8B, showing token generation speeds at different quantization levels. We've also included data for Llama2 7B on the Apple M1 for comparison, since it is a popular setup for local LLM experimentation.

| Model & Quantization | Device | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B Q4_K_M | NVIDIA RTX 5000 Ada 32GB | 89.87 |
| Llama3 8B F16 | NVIDIA RTX 5000 Ada 32GB | 32.67 |
| Llama2 7B | Apple M1 | ~20 |

Key Observations:

- Quantization: Quantization, a technique that shrinks a model by storing its weights with fewer bits, is crucial for performance. The NVIDIA RTX 5000 Ada 32GB achieves 89.87 tokens/second with Llama3 8B Q4_K_M (quantized to roughly 4 bits per weight) but drops to 32.67 tokens/second with F16 (unquantized 16-bit half precision). This illustrates how strongly weight precision, and therefore model size, affects generation speed.

- Llama3 vs. Llama2: The Apple M1 running Llama2 7B is much slower than the NVIDIA RTX 5000 Ada 32GB running Llama3 8B, but the two rows differ in both model and hardware, so this is not a like-for-like comparison. It's also worth remembering that the Apple M1 setup is significantly more accessible and affordable for many users.

- Performance Considerations: The RTX 5000 Ada 32GB running Llama3 8B delivers excellent performance, especially with Q4_K_M quantization. However, the gap between Llama3 8B F16 and Q4_K_M highlights the importance of choosing the right quantization level for your specific use case and computational resources.
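To put the gap in perspective, the short sketch below works out the speedup from the two RTX 5000 Ada figures in the table and what it means for response time. The 500-token response length is an arbitrary, illustrative choice, and the estimate ignores prompt processing time.

```python
# Throughput figures taken from the table above (tokens/second).
SPEEDS = {
    "Llama3 8B Q4_K_M": 89.87,
    "Llama3 8B F16": 32.67,
}


def speedup(fast: float, slow: float) -> float:
    """Ratio of two throughputs."""
    return fast / slow


def seconds_for(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady rate (ignores prompt processing)."""
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    ratio = speedup(SPEEDS["Llama3 8B Q4_K_M"], SPEEDS["Llama3 8B F16"])
    print(f"Q4_K_M is about {ratio:.2f}x faster than F16")
    for name, tps in SPEEDS.items():
        # 500 tokens is an arbitrary, illustrative response length.
        print(f"{name}: {seconds_for(500, tps):.1f} s for 500 tokens")
```

With these numbers, Q4_K_M comes out roughly 2.75x faster than F16 on the same card.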

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 70B vs. Llama3 8B

The real question for today is: can you run Llama3 70B on the RTX 5000 Ada 32GB? Sadly, the data we have doesn't include performance benchmarks for Llama3 70B on this GPU. The most likely obstacle is memory: at F16, 70 billion parameters need roughly 140 GB for the weights alone, and even at 4 bits per weight they need at least about 35 GB, which is more than the card's 32 GB before counting the KV cache and runtime buffers. Running a model of that size on this GPU would therefore require specialized configurations rather than a simple load-and-run setup.

Let's consider this analogy: imagine trying to fit a giant redwood tree into a small backyard. The RTX 5000 Ada 32GB might be a powerful garden shed, but it isn't large enough to house the gargantuan redwood that is Llama3 70B.
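A back-of-the-envelope estimate shows where the memory concern comes from. The sketch below counts only the weights, uses nominal parameter counts (8 and 70 billion), and assumes about 4.5 bits per weight for a 4-bit block format that also stores scale factors; that figure is an illustrative assumption, and real quantization formats vary.

```python
GIB = 1024 ** 3


def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB


def fits(num_params: float, bits_per_weight: float, vram_gib: float) -> bool:
    """True if the weights alone fit; real usage also needs KV cache and buffers."""
    return weight_memory_gib(num_params, bits_per_weight) < vram_gib


if __name__ == "__main__":
    VRAM_GIB = 32  # memory on the RTX 5000 Ada discussed in this article
    # Nominal parameter counts; 4.5 bits/weight is an illustrative assumption
    # for a 4-bit block format that also stores per-block scale factors.
    for model, params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
        for label, bits in [("F16", 16), ("~4-bit", 4.5)]:
            size = weight_memory_gib(params, bits)
            verdict = "fits" if fits(params, bits, VRAM_GIB) else "does not fit"
            print(f"{model} {label}: {size:.1f} GiB -> {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions, Llama3 8B fits comfortably at either precision, while Llama3 70B exceeds 32 GiB even at around 4 bits per weight.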

Practical Recommendations: Use Cases and Workarounds

Practical Recommendations: Llama3 70B on Home Devices

For the average user, running Llama3 70B entirely on a single 32 GB GPU like the RTX 5000 Ada is probably unrealistic. A model of that size more likely calls for a cloud setup with multiple high-memory GPUs or other specialized accelerators (such as TPUs), plus real engineering effort to tune performance.

Practical Recommendations: Llama3 8B and Other Smaller Models

However, there's a silver lining! The NVIDIA RTX 5000 Ada 32GB demonstrates impressive capabilities for running smaller LLMs like Llama3 8B with appropriate quantization. This opens doors for a wide range of applications, including:

- Language Translation: You can translate text between languages in real-time for personal or professional use.

- Text Summarization: Get quick and accurate summaries of long articles, reports, or documents.

- Code Generation: Generate code snippets (using LLMs specifically designed for code generation) for various programming languages.

- Creative Text Generation: Craft unique and engaging content, such as poems, stories, articles, and even music.

Practical Recommendations: Cloud Services & Workarounds

For users desiring to work with larger models like Llama3 70B, consider these options:

- Cloud-Based Services: Leverage cloud platforms that offer pre-trained LLMs with readily accessible APIs. Platforms like Google AI Platform, Amazon SageMaker, and Microsoft Azure AI provide a streamlined way to interact with powerful LLMs.

- Model Compression: Explore model compression techniques to reduce the size of the model, enabling it to run more efficiently on your local device.

- Quantization: Experiment with different quantization levels and optimize your setup for maximum performance.

- Hardware Upgrades: If you're serious about running large LLMs locally, consider investing in a workstation with more total GPU memory, whether from a larger card or from several GPUs.

FAQ

Q: What is quantization and how does it affect performance?

A: Quantization is a technique to reduce the size of a model by representing its parameters with fewer bits. Think of it like converting a high-resolution image to a lower resolution: you lose some detail, but the file becomes smaller and can be processed faster. Models with Q4_K_M quantization run significantly faster than F16 (89.87 vs. 32.67 tokens/second for Llama3 8B in the benchmarks above), but the trade-off is a slight decrease in accuracy.
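For readers who want to see the idea in code, here is a minimal sketch of symmetric round-to-nearest quantization of one block of weights to 4-bit integers with a shared scale factor. It is a deliberately simplified illustration, not the actual Q4_K_M scheme, and the function names are our own.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Symmetric round-to-nearest quantization of one block of weights."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Each weight becomes a small integer code; the clamp guards against overflow.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes: List[int], scale: float) -> List[float]:
    """Recover approximate weights from integer codes and the shared scale."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    block = [0.9, -0.31, 0.12, 0.0]
    codes, scale = quantize_block(block)
    restored = dequantize_block(codes, scale)
    for original, approx in zip(block, restored):
        print(f"{original:+.3f} -> {approx:+.3f} (error {abs(original - approx):.3f})")
```

The small per-weight errors printed here are the "lost detail" from the image analogy: each weight is stored in 4 bits instead of 16, at the cost of being rounded to the nearest representable level.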

Q: What other devices can run Llama3 8B efficiently?

A: While the NVIDIA RTX 5000 Ada 32GB is powerful, other devices like the NVIDIA RTX 4090, RTX 3090, and other consumer-grade GPUs with ample memory capacity could perform well with Llama3 8B, especially with careful optimization and quantization.

Q: Can I run Llama3 70B efficiently on a device that has less memory than the RTX 5000 Ada 32GB?

A: It's highly improbable that you'd be able to run a model of that size on a device with less memory, even with advanced optimizations. Running a model of this magnitude typically requires specialized hardware and resources, and it's more practical to rely on cloud services for such massive models.

Q: Where can I learn more about LLMs and their use cases?

A: There are numerous resources available online. Websites like Hugging Face, OpenAI's blog, and research papers from academic institutions offer valuable insights into LLMs.

Keywords

LLM, Llama3, Llama 70B, Llama 8B, RTX 5000 Ada 32GB, NVIDIA, GPU, quantization, token generation speed, performance, model size, NLP, natural language processing, cloud services, model compression, LLM inference, local LLM, deep learning, AI, artificial intelligence, text generation, language translation, text summarization, code generation, creative writing.