7 Surprising Facts About Running Llama3 70B on NVIDIA 3090 24GB x2

The world of large language models (LLMs) is exploding, with new models and applications popping up daily. But running these behemoths locally often feels like a game of "can you fit this elephant in a shoebox?". Today, we're diving deep into the performance of Llama 3 70B on the powerful NVIDIA 3090 24GB x2 setup, revealing some surprising insights and practical recommendations.

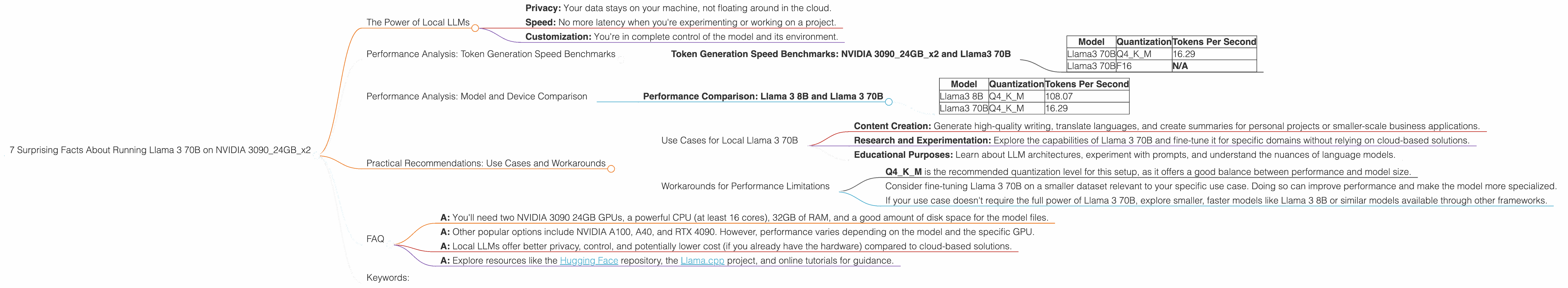

The Power of Local LLMs

For many developers and enthusiasts, the allure of local LLMs is undeniable. Imagine the flexibility:

- Privacy: Your data stays on your machine, not floating around in the cloud.

- Speed: No network round trips to a remote API when you're experimenting or working on a project.

- Customization: You're in complete control of the model and its environment.

But these advantages come at a cost. Running a model as large as Llama 3 70B on a single consumer GPU is a daunting task, because even a 4-bit version of the weights exceeds 24GB. Enter the NVIDIA 3090 24GB x2 setup, a pair of cards with 48GB of combined VRAM that promises to unleash the potential of these models.

Performance Analysis: Token Generation Speed Benchmarks

Before we get into the numbers, let's refresh our memory on what we're measuring:

- Token Generation Speed: This measures how quickly the model can generate new text, which is crucial for real-time applications like chatbots and text summarization.

- Quantization: This technique reduces the size of the model by storing its weights at lower precision. Think of it as making a giant file smaller without losing much of its information. Q4_K_M is a llama.cpp quantization format that stores weights at roughly 4 to 5 bits each on average, resulting in a significant reduction in memory footprint. F16 is not really a quantization: it keeps the weights as 16-bit floating-point numbers, which preserves precision but takes more than three times the memory of Q4_K_M.
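
To make the memory footprint concrete, here is a minimal sketch that estimates the size of the weights from the parameter count and the bits stored per weight. The parameter count (about 70.6 billion) and the Q4_K_M average of about 4.8 bits per weight are assumptions for illustration, not figures from the benchmark, and the estimate ignores the KV cache and runtime buffers.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed values for illustration; they are not part of the benchmark data.
N_PARAMS_70B = 70.6e9    # approximate parameter count of Llama 3 70B
BITS_Q4_K_M = 4.8        # rough average bits per weight for Q4_K_M
BITS_F16 = 16.0          # 16-bit floating point
TOTAL_VRAM_GB = 2 * 24   # two 24GB cards

for name, bits in [("Q4_K_M", BITS_Q4_K_M), ("F16", BITS_F16)]:
    size = weight_memory_gb(N_PARAMS_70B, bits)
    fits = size < TOTAL_VRAM_GB
    print(f"{name}: ~{size:.1f} GB of weights, fits in {TOTAL_VRAM_GB} GB: {fits}")
```

Under these assumptions the Q4_K_M weights come in a little over 42GB, while F16 needs roughly 141GB.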

Token Generation Speed Benchmarks: NVIDIA 3090 24GB x2 and Llama 3 70B

| Model | Quantization | Tokens Per Second |
|---|---|---|
| Llama3 70B | Q4_K_M | 16.29 |
| Llama3 70B | F16 | N/A |

What's the Takeaway?

- Q4_K_M shines: Llama 3 70B with Q4_K_M quantization achieves a respectable token generation speed of 16.29 tokens per second on the NVIDIA 3090 24GB x2 setup.

- F16 performance missing: We don't have data for Llama 3 70B in F16. That is not surprising: at 2 bytes per parameter, the F16 weights alone need roughly 140GB, far more than the 48GB of combined VRAM on this setup.
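
To get a feel for what 16.29 tokens per second means in practice, the sketch below converts the measured speed into wall-clock time for replies of different lengths. The reply lengths are arbitrary examples, and the calculation ignores prompt processing time, which is a separate measurement.

```python
def generation_time_s(n_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate n_tokens at a steady generation speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


MEASURED_TPS = 16.29  # Llama 3 70B, Q4_K_M, from the table above

# Example reply lengths (arbitrary, for illustration only).
for n_tokens in (100, 500, 2000):
    seconds = generation_time_s(n_tokens, MEASURED_TPS)
    print(f"{n_tokens} tokens: about {seconds:.0f} s")
```

A 500-token answer takes roughly half a minute at this speed, which is comfortable for interactive use but slow for bulk generation.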

Performance Analysis: Model and Device Comparison

Since we're focusing on Llama 3 70B on NVIDIA 3090 24GB x2, comparisons with other models are limited. However, let's look at the performance of Llama 3 8B on the same setup for a quick comparison:

Performance Comparison: Llama 3 8B and Llama 3 70B

| Model | Quantization | Tokens Per Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 108.07 |
| Llama3 70B | Q4_K_M | 16.29 |

What's the Takeaway?

- Size Matters: The smaller Llama 3 8B model generates tokens about 6.6 times faster than Llama 3 70B on the same setup and quantization level. The smaller model has far fewer weights to read from memory and multiply for every generated token.

- Beyond Token Generation: While token generation speed is crucial, it's just one piece of the puzzle. Other factors like memory consumption and the complexity of the tasks the model can handle also play a role.
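
The comparison can be made more precise with a little arithmetic. The sketch below computes the speed ratio from the table and sets it against the ratio of model sizes. The parameter counts (8.0 and 70.6 billion) are approximate assumptions, not benchmark data.

```python
# Measured token generation speeds from the table (tokens per second).
TPS = {"Llama3 8B": 108.07, "Llama3 70B": 16.29}

# Approximate parameter counts in billions (assumed for illustration).
PARAMS_B = {"Llama3 8B": 8.0, "Llama3 70B": 70.6}

speed_ratio = TPS["Llama3 8B"] / TPS["Llama3 70B"]
size_ratio = PARAMS_B["Llama3 70B"] / PARAMS_B["Llama3 8B"]

print(f"8B is {speed_ratio:.2f}x faster than 70B")
print(f"70B is {size_ratio:.2f}x larger than 8B")
```

Under these assumptions the slowdown is somewhat smaller than the growth in model size, so the 70B model costs less speed per parameter than a strictly proportional scaling would suggest.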

Practical Recommendations: Use Cases and Workarounds

Use Cases for Local Llama 3 70B

Despite the performance limitations, Llama 3 70B on NVIDIA 3090 24GB x2 is still a powerful combination for specific use cases:

- Content Creation: Generate high-quality writing, translate languages, and create summaries for personal projects or smaller-scale business applications.

- Research and Experimentation: Explore the capabilities of Llama 3 70B and fine-tune it for specific domains without relying on cloud-based solutions.

- Educational Purposes: Learn about LLM architectures, experiment with prompts, and understand the nuances of language models.

Workarounds for Performance Limitations

Quantization is Your Friend:

- Q4_K_M is the recommended quantization level for this setup, as it offers a good balance between performance and model size.
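
As one possible way to put this into practice, the sketch below prepares settings for loading a Q4_K_M GGUF file across both GPUs. It assumes the llama-cpp-python bindings, which the benchmark itself does not mention, and the model path is a hypothetical placeholder. The helper names `build_llama_kwargs` and `load_model` are made up for this example.

```python
from typing import Any


def build_llama_kwargs(model_path: str, n_gpus: int = 2, n_ctx: int = 4096) -> dict[str, Any]:
    """Build constructor arguments for llama_cpp.Llama with an even GPU split."""
    if n_gpus < 1:
        raise ValueError("n_gpus must be at least 1")
    return {
        "model_path": model_path,
        "n_gpu_layers": -1,                       # -1 offloads all layers to the GPUs
        "tensor_split": [1.0 / n_gpus] * n_gpus,  # equal share of the model per GPU
        "n_ctx": n_ctx,                           # context window in tokens
    }


def load_model(model_path: str):
    """Load the model; requires llama-cpp-python built with CUDA support."""
    from llama_cpp import Llama  # imported lazily so the helper above works without it

    return Llama(**build_llama_kwargs(model_path))


if __name__ == "__main__":
    # Hypothetical file name; substitute the GGUF file you actually downloaded.
    print(build_llama_kwargs("models/llama-3-70b.Q4_K_M.gguf"))
```

Keeping the settings in a plain dictionary makes it easy to adjust the context size or the split if one card also drives a display and has less free memory.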

Fine-Tuning:

- Consider fine-tuning Llama 3 70B on a smaller dataset relevant to your specific use case. Doing so can improve output quality on that task and make the model more specialized, although it does not change token generation speed.

Think Smaller:

- If your use case doesn't require the full power of Llama 3 70B, explore smaller, faster models like Llama 3 8B or similar models available through other frameworks.

FAQ

Q: What are the system requirements for running Llama 3 70B on NVIDIA 3090 24GB x2?

- A: You'll need two NVIDIA 3090 24GB GPUs, a powerful CPU (at least 16 cores), 32GB of RAM, and a good amount of disk space for the model files.
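
VRAM is the tightest of these requirements, because the GPUs must hold the KV cache as well as the weights. The rough budget below assumes the published Llama 3 70B architecture (80 layers, 8 key/value heads, head dimension 128), a 16-bit KV cache, and about 42.4GB of Q4_K_M weights. All of these are assumptions for illustration, and real runtimes need additional buffer space on top.

```python
def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                n_ctx: int, bytes_per_value: int = 2) -> float:
    """Approximate KV cache size in gigabytes (1 GB = 1e9 bytes)."""
    # Factor 2: each layer stores one key and one value vector per token.
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_value / 1e9


# Assumed architecture and sizes, not taken from the benchmark.
LLAMA3_70B = {"n_layers": 80, "n_kv_heads": 8, "head_dim": 128}
WEIGHTS_GB = 42.4        # estimated Q4_K_M weight size
TOTAL_VRAM_GB = 2 * 24   # two 24GB cards

for n_ctx in (2048, 4096, 8192):
    cache = kv_cache_gb(n_ctx=n_ctx, **LLAMA3_70B)
    total = WEIGHTS_GB + cache
    print(f"context {n_ctx}: KV cache ~{cache:.2f} GB, total ~{total:.1f} GB "
          f"of {TOTAL_VRAM_GB} GB")
```

Under these assumptions even an 8192-token context fits, but with only a few gigabytes to spare.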

Q: What are some alternative GPUs I can use for running large language models locally?

- A: Other popular options include NVIDIA A100, A40, and RTX 4090. However, performance varies depending on the model and the specific GPU.

Q: What are the advantages of running LLMs locally compared to using cloud-based services?

- A: Local LLMs offer better privacy, control, and potentially lower cost (if you already have the hardware) compared to cloud-based solutions.

Q: How can I learn more about running large language models locally?

- A: Explore resources like the Hugging Face Hub, the llama.cpp project, and online tutorials for guidance.

Keywords:

Llama 3, 70B, NVIDIA 3090 24GB, local LLM, performance, token generation speed, quantization, Q4_K_M, F16, GPU, model size, use cases, workarounds, fine-tuning, content creation, research, education.