7 Surprising Facts About Running Llama3 70B on NVIDIA 3080 10GB

Introduction: The Quest for Local LLM Power

Large Language Models (LLMs) are changing the way we interact with technology. From generating creative content to answering questions, LLMs have become an indispensable tool for developers and users alike. But running these models locally can be a daunting task, especially with behemoths like Llama3 70B.

This article dives deep into the performance of Llama3 70B on a popular gaming GPU, the NVIDIA 3080_10GB, uncovering surprising facts and practical recommendations for your local LLM adventures.

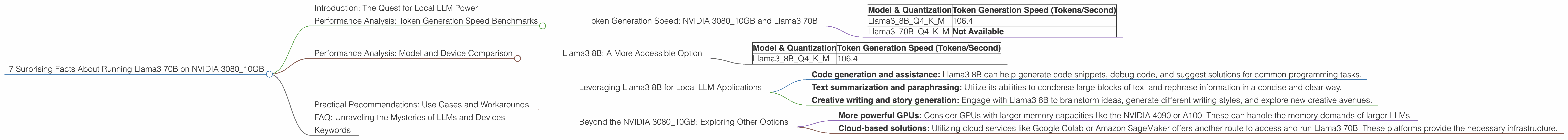

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed: NVIDIA 3080_10GB and Llama3 70B

Let's start with token generation speed, measured in tokens per second, which determines how quickly the model produces its output.

| Model & Quantization | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 106.4 |
| Llama3 70B Q4_K_M | Not Available |

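Important Note: While we have data for Llama3 8B, no result is available for Llama3 70B. The most likely reason is memory: at Q4_K_M quantization, the 70B model's weights alone take roughly 40 GB, about four times the 10 GB of VRAM on this card. Running Llama3 70B entirely on an NVIDIA 3080_10GB is therefore not feasible.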
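The memory arithmetic behind this can be sketched in a few lines. The figure of roughly 4.8 bits per weight for Q4_K_M is an assumption based on typical file sizes, and the estimate covers the weights only, ignoring the KV cache and runtime overhead.

```python
# Rough weight-memory estimate: weights only, no KV cache or runtime overhead.
BITS_PER_WEIGHT_Q4_K_M = 4.8  # assumption: approximate average for Q4_K_M
VRAM_GB = 10.0                # memory on the NVIDIA 3080_10GB


def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Return the approximate size of the model weights in gigabytes."""
    total_bits = n_params_billion * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


for name, params in [("Llama3 8B", 8.0), ("Llama3 70B", 70.0)]:
    size = weight_memory_gb(params, BITS_PER_WEIGHT_Q4_K_M)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB of VRAM")
```

Under these assumptions the 8B model needs about 4.8 GB and the 70B model about 42 GB, which is consistent with only the smaller model having a benchmark result.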

Performance Analysis: Model and Device Comparison

Llama3 8B: A More Accessible Option

Let's take a look at how Llama3 8B performs on the NVIDIA 3080_10GB for comparison:

| Model & Quantization | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 106.4 |

It's clear that Llama3 8B is a much better fit for the NVIDIA 3080_10GB with its impressive token generation speed.
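To put 106.4 tokens/second in practical terms, the sketch below converts it into wall-clock time for a response. The response lengths are arbitrary examples, and prompt processing time is ignored.

```python
TOKENS_PER_SECOND = 106.4  # Llama3 8B on the NVIDIA 3080_10GB, from the table


def generation_seconds(n_tokens: int, tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Time to generate n_tokens at a steady rate, ignoring prompt processing."""
    return n_tokens / tokens_per_second


for n in (100, 500, 2000):  # hypothetical response lengths
    print(f"{n} tokens: ~{generation_seconds(n):.1f} s")
```

At this rate even a 2000-token answer would take under 20 seconds, which is comfortably interactive.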

Practical Recommendations: Use Cases and Workarounds

Leveraging Llama3 8B for Local LLM Applications

Given the limitations of running Llama3 70B locally on the NVIDIA 3080_10GB, consider using Llama3 8B as a more accessible alternative. Here are some potential use cases:

- Code generation and assistance: Llama3 8B can help generate code snippets, debug code, and suggest solutions for common programming tasks.

- Text summarization and paraphrasing: Utilize its abilities to condense large blocks of text and rephrase information in a concise and clear way.

- Creative writing and story generation: Engage with Llama3 8B to brainstorm ideas, generate different writing styles, and explore new creative avenues.
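As a minimal sketch of the summarization use case, the example below builds a chat prompt and sends it to a local model through the llama-cpp-python bindings. The choice of llama-cpp-python, the model path, the helper names, and the parameter values are all assumptions for illustration; the article does not say which runtime produced its benchmark.

```python
def build_summary_messages(text: str, max_sentences: int = 3) -> list[dict]:
    """Build chat messages asking the model to summarize `text` (hypothetical helper)."""
    return [
        {"role": "system", "content": "You are a concise technical assistant."},
        {"role": "user", "content": f"Summarize in at most {max_sentences} sentences:\n\n{text}"},
    ]


def summarize_locally(model_path: str, text: str) -> str:
    """Run the prompt on a local GGUF model; requires `pip install llama-cpp-python`."""
    from llama_cpp import Llama  # imported lazily so the helper above works without it

    llm = Llama(
        model_path=model_path,  # hypothetical path to a Llama3 8B Q4_K_M GGUF file
        n_gpu_layers=-1,        # offload all layers to the GPU
        n_ctx=4096,             # context window; an arbitrary choice
        verbose=False,
    )
    result = llm.create_chat_completion(
        messages=build_summary_messages(text),
        max_tokens=256,
    )
    return result["choices"][0]["message"]["content"]
```

Calling `summarize_locally("path/to/model.gguf", article_text)` would return the summary as a string, assuming the GGUF file exists and the bindings were installed with GPU support.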

Beyond the NVIDIA 3080_10GB: Exploring Other Options

If you desire the power of Llama3 70B, you'll need to look beyond the NVIDIA 3080_10GB. Here are some considerations:

- More powerful GPUs: Consider GPUs with more memory. A single NVIDIA 4090 (24 GB) is still too small to hold the 70B model's roughly 40 GB of Q4_K_M weights, so you would need two of them or a data-center card such as the 80 GB NVIDIA A100.

- Cloud-based solutions: Cloud services such as Google Colab or Amazon SageMaker offer another route to running Llama3 70B, provided you choose an instance type with enough GPU memory for the model.
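A rough way to compare the hardware options above is to estimate how many cards of each type would be needed to hold the 70B weights. The 42 GB weight size and the 90% usable-memory fraction are assumptions, and the sketch presumes a runtime that can split a model across several GPUs.

```python
import math

WEIGHTS_GB = 42.0      # assumption: Llama3 70B at ~4.8 bits per weight
USABLE_FRACTION = 0.9  # assumption: keep ~10% of VRAM for KV cache and overhead

GPU_VRAM_GB = {
    "NVIDIA 3080_10GB": 10,
    "NVIDIA 4090": 24,
    "NVIDIA A100 40GB": 40,
    "NVIDIA A100 80GB": 80,
}


def cards_needed(vram_gb: float, weights_gb: float = WEIGHTS_GB) -> int:
    """Number of identical cards needed to hold the weights, rounded up."""
    return math.ceil(weights_gb / (vram_gb * USABLE_FRACTION))


for gpu, vram in GPU_VRAM_GB.items():
    print(f"{gpu}: {cards_needed(vram)} card(s)")
```

Under these assumptions only the 80 GB A100 holds the model on a single card; the 24 GB and 40 GB cards would each need a second unit.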

FAQ: Unraveling the Mysteries of LLMs and Devices

Q: What is quantization and how does it affect performance?

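A: Quantization is like compressing a file. It stores the model's parameters at lower numeric precision (for example, about 4 bits each instead of 16), which shrinks the model so it fits more easily into a device's memory. The trade-off is a small loss in accuracy. The "Q4_K_M" notation (sometimes written "Q4KM") refers to a 4-bit "k-quant" format used by llama.cpp, in its medium variant.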
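The idea can be illustrated with a toy example. The sketch below applies simple symmetric 4-bit quantization to a small block of weights; it is a simplified illustration and not the actual Q4_K_M algorithm, and the function names and sample weights are made up.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integer codes that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1   # 7 for 4 bits
    qmin = -qmax - 1             # -8 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(qmin, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.62, -0.31, 0.08, -0.70, 0.25]  # hypothetical weights
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]
print("codes:", codes)
print("max error:", max(errors))
```

Each weight is now stored as a 4-bit code plus one shared scale per block, and the rounding error per weight is at most half the scale, which is the "slight impact on accuracy" in exchange for a much smaller model.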

Q: Can I run Llama3 70B on a CPU?

A: Technically yes, provided the system has enough RAM to hold the model (roughly 40 GB or more at Q4_K_M). However, CPUs have far less memory bandwidth and parallel compute than GPUs, so generation would be extremely slow and likely impractical for interactive use.
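A common rule of thumb makes "extremely slow" more concrete: generating each token requires reading all the weights from memory once, so memory bandwidth divided by model size gives an upper bound on tokens per second. The bandwidth figures and model sizes below are approximate assumptions, not measurements from this article.

```python
def max_tokens_per_second(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on generation speed if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


CPU_BANDWIDTH_GB_S = 50.0   # assumption: typical dual-channel desktop DDR4
GPU_BANDWIDTH_GB_S = 760.0  # assumption: approximate spec figure for the 3080 10GB

WEIGHTS_70B_GB = 42.0       # assumption: Llama3 70B at ~4.8 bits per weight
WEIGHTS_8B_GB = 4.8         # assumption: Llama3 8B at ~4.8 bits per weight

cpu_70b = max_tokens_per_second(CPU_BANDWIDTH_GB_S, WEIGHTS_70B_GB)
gpu_8b = max_tokens_per_second(GPU_BANDWIDTH_GB_S, WEIGHTS_8B_GB)
print(f"Llama3 70B on CPU: at most ~{cpu_70b:.1f} tokens/s")
print(f"Llama3 8B on GPU:  at most ~{gpu_8b:.0f} tokens/s")
```

With these numbers the CPU bound for the 70B model is a little over one token per second, while the GPU bound for the 8B model sits above the measured 106.4 tokens/second, as an upper bound should.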

Q: What's the difference between a GPU and a CPU?

A: A GPU, like the NVIDIA 3080_10GB, is designed for parallel processing, making it efficient for matrix operations crucial for LLMs. CPUs are better at handling sequential tasks.

Q: Why are larger LLMs like Llama3 70B so demanding?

A: Larger LLMs have more parameters, requiring more memory and processing power to store and compute. They are like complex brains with vast knowledge, demanding more resources to function effectively.

Keywords:

Llama3 70B, NVIDIA 3080_10GB, LLM, GPU, Token Generation Speed, Quantization, Performance, Local LLM Models, Cloud Computing, Gaming GPU, Use Cases, Text Generation, Code Generation, Creative Writing, Text Summarization, GPU Memory, Hardware Requirements, LLMs and Devices, AI Models, NLP.