7 Surprising Facts About Running Llama3 70B on Apple M2 Ultra

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These AI marvels are changing the way we interact with technology, from generating creative text to providing complex insights. But running these models locally – on your own machine – can feel like an impossible dream, especially for the gargantuan models like Llama3 70B (70 billion parameters). Enter the Apple M2 Ultra, a powerhouse chip that blurs the lines between cloud and local computation. This article dives deep into the performance of Llama3 70B on this formidable device, revealing some surprising facts about its capabilities.

Think about it: can you imagine having the power of a supercomputer in your own hands, capable of running cutting-edge AI models with incredible speed? That's the promise of the Apple M2 Ultra, and we're about to explore it in detail.

Performance Analysis: Token Generation Speed Benchmarks

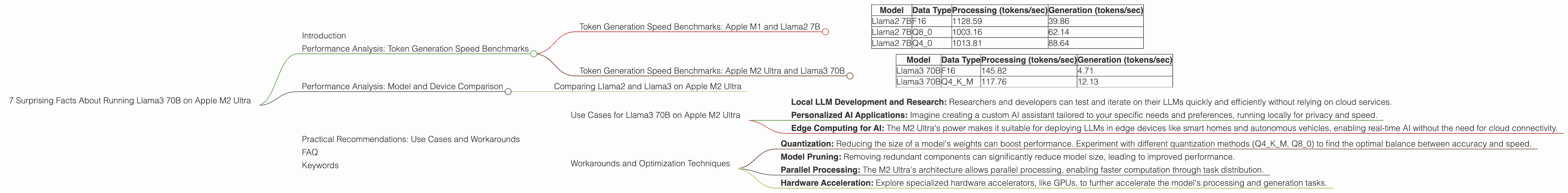

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama2 7B

For context, let's first look at the performance of another popular LLM, Llama2 7B, on the M2 Ultra.

| Model | Data Type | Processing (tokens/sec) | Generation (tokens/sec) |
|---|---|---|---|
| Llama2 7B | F16 | 1128.59 | 39.86 |
| Llama2 7B | Q8_0 | 1003.16 | 62.14 |
| Llama2 7B | Q4_0 | 1013.81 | 88.64 |

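Data Type refers to the way the model's weights are stored in memory. F16 stores each weight as a 16-bit floating-point number. Q8_0 and Q4_0 are block quantization formats from the GGML/llama.cpp family: they store weights as 8-bit and 4-bit integers, respectively, in small blocks that each share one scale factor. The `_0` variants carry only that scale, with no separate offset (zero-point).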
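To make the idea of block quantization concrete, here is a minimal Python sketch. It is a simplified illustration, not llama.cpp's exact on-disk layout: the block size of 32, the 16-bit scale, and the function names are assumptions chosen for the example.

```python
import random

BLOCK_SIZE = 32  # assumption: number of weights that share one scale


def quantize_block_q8(block):
    """Map a block of floats to signed 8-bit integers plus one shared scale."""
    max_abs = max(abs(x) for x in block)
    # The largest magnitude in the block maps to 127; an all-zero block keeps scale 1.0.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quants = [round(x / scale) for x in block]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * q for q in quants]


def bits_per_weight(quant_bits, block_size=BLOCK_SIZE, scale_bits=16):
    """Effective storage cost per weight once the shared scale is included."""
    return quant_bits + scale_bits / block_size


if __name__ == "__main__":
    random.seed(0)
    weights = [random.uniform(-1.0, 1.0) for _ in range(BLOCK_SIZE)]
    scale, quants = quantize_block_q8(weights)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"worst-case rounding error: {worst:.5f} (bounded by scale / 2 = {scale / 2:.5f})")
    print(f"8-bit blocks cost {bits_per_weight(8)} bits per weight")
    print(f"4-bit blocks cost {bits_per_weight(4)} bits per weight")
```

Under these assumptions, the shared scale adds half a bit per weight, which is why quantized files come out slightly larger than a plain "8 bits" or "4 bits" per weight would suggest.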

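Llama2 7B processes prompts at more than 1000 tokens per second on the M2 Ultra in every format. Generation is far slower, and it behaves differently: prompt tokens are processed in parallel as a batch, so that stage is limited mainly by compute, while generation produces one token at a time and has to read the model's weights from memory for each one. That makes generation sensitive to the size of the weights, which is why it climbs from 39.86 tokens/sec with F16 to 88.64 tokens/sec with Q4_0 while processing speed barely changes.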

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama3 70B

Now, let's turn our attention to the real star of the show: Llama3 70B.

| Model | Data Type | Processing (tokens/sec) | Generation (tokens/sec) |
|---|---|---|---|
| Llama3 70B | F16 | 145.82 | 4.71 |
| Llama3 70B | Q4_K_M | 117.76 | 12.13 |

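Q4_K_M is one of the "k-quant" formats from llama.cpp. Weights are stored mostly as 4-bit integers grouped into blocks, with per-block scales and minimums, and the "M" (medium) variant keeps some tensors at higher precision to preserve accuracy. Despite the letter, the K does not refer to K-means clustering.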

The numbers speak for themselves:

- Llama3 70B's processing speed is 145.82 tokens/sec for F16 and 117.76 tokens/sec for Q4_K_M. That is roughly an eighth of what Llama2 7B achieves, which reflects the computational demands of a model with ten times as many parameters.

- Generation speeds are lower still. Llama3 70B generates 4.71 tokens/sec for F16 and 12.13 tokens/sec for Q4_K_M, so the quantized model is about 2.6 times faster at generation.

The results are impressive considering the sheer size of Llama3 70B: a model with 70 billion parameters is running locally on a single desktop machine. For interactive use, though, the quantized Q4_K_M build is the practical choice, since F16 at under 5 tokens/sec feels slow in a chat setting.
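One way to sanity-check the F16 generation numbers is a back-of-the-envelope memory bandwidth estimate. The sketch below assumes that each generated token requires reading every weight once, that the M2 Ultra's peak memory bandwidth is Apple's published 800 GB/s, and that the models have exactly 7 and 70 billion parameters. These are simplifying assumptions, so treat the result as a rough ceiling rather than a prediction.

```python
M2_ULTRA_BANDWIDTH_GBPS = 800.0  # assumption: Apple's published peak figure


def generation_ceiling(params_billion, bytes_per_weight,
                       bandwidth_gbps=M2_ULTRA_BANDWIDTH_GBPS):
    """Upper bound on tokens/sec if every weight is read once per token."""
    weight_gb = params_billion * bytes_per_weight
    return bandwidth_gbps / weight_gb


# Measured F16 generation speeds taken from the tables in this article.
MEASURED_F16 = {
    "Llama2 7B": (7.0, 39.86),
    "Llama3 70B": (70.0, 4.71),
}

if __name__ == "__main__":
    for name, (params_billion, measured) in MEASURED_F16.items():
        ceiling = generation_ceiling(params_billion, bytes_per_weight=2.0)
        share = measured / ceiling
        print(f"{name}: ceiling {ceiling:.1f} tok/s, measured {measured} tok/s "
              f"({share:.0%} of ceiling)")
```

With these inputs, the ceilings come out near 57 and 5.7 tokens/sec, and both measured values sit below them. That is consistent with generation being limited largely by how fast the weights can be streamed from memory.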

Performance Analysis: Model and Device Comparison

Comparing Llama2 and Llama3 on Apple M2 Ultra

The M2 Ultra can run both models, but not at the same pace. The gap between Llama2 7B and Llama3 70B shows how resource requirements grow with model size: with ten times as many parameters, the F16 build of Llama3 70B is roughly eight times slower at both prompt processing and generation. The slowdown tracks the parameter count roughly linearly rather than exponentially.

Imagine a 70-person orchestra versus a seven-piece band. Both can produce beautiful music, but the former requires a significantly larger venue, more instruments, and a greater level of coordination. Similarly, Llama3 70B is a far larger model than Llama2 7B and demands more memory and compute to operate.

In short, the M2 Ultra handles both Llama2 7B and Llama3 70B, and the performance difference between them is about what the difference in size would lead you to expect.
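The comparison can be reproduced directly from the F16 rows of the two tables. This short sketch only does the arithmetic; the dictionary layout and function name are illustrative choices.

```python
# F16 benchmark figures copied from the tables above (tokens/sec).
BENCHMARKS = {
    "Llama2 7B F16": {"processing": 1128.59, "generation": 39.86},
    "Llama3 70B F16": {"processing": 145.82, "generation": 4.71},
}


def slowdown(small, large, metric):
    """How many times slower the larger model is on the given metric."""
    return BENCHMARKS[small][metric] / BENCHMARKS[large][metric]


if __name__ == "__main__":
    for metric in ("processing", "generation"):
        ratio = slowdown("Llama2 7B F16", "Llama3 70B F16", metric)
        print(f"{metric}: Llama3 70B is {ratio:.2f}x slower than Llama2 7B")
```

Both ratios land a little under 10, the ratio of the two parameter counts.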

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on Apple M2 Ultra

Despite its computational intensity, Llama3 70B on the M2 Ultra opens up exciting possibilities:

- Local LLM Development and Research: Researchers and developers can test and iterate on their LLMs quickly and efficiently without relying on cloud services.

- Personalized AI Applications: Imagine creating a custom AI assistant tailored to your specific needs and preferences, running locally for privacy and speed.

- On-Premises AI: The M2 Ultra ships in desktop machines (Mac Studio and Mac Pro), so it is not an embedded chip for devices like smart-home hubs or vehicles. It can, however, serve as a local inference box for a home, lab, or office, providing LLM responses without the need for cloud connectivity.

Workarounds and Optimization Techniques

Here are some techniques to enhance your experience with Llama3 70B on the M2 Ultra:

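- Quantization: Reducing the size of a model's weights is the most effective lever here. In the benchmarks above, moving Llama3 70B from F16 to Q4_K_M raised generation speed from 4.71 to 12.13 tokens/sec. Experiment with different quantization methods (Q4_K_M, Q8_0) to find the balance between accuracy and speed that suits your task.

- Model Pruning: Removing redundant weights or layers can reduce model size and improve speed, but it usually requires retraining or careful evaluation to confirm that quality holds up. It is a research technique more than a switch you flip.

- Parallel Processing: The M2 Ultra offers many CPU and GPU cores, and prompt processing in particular benefits from them because prompt tokens can be evaluated as a batch. Generation is sequential by nature, so extra cores help less there.

- Hardware Acceleration: The M2 Ultra's GPU is integrated and shares unified memory with the CPU, so there is no separate graphics card to add. Instead, make sure your inference runtime actually uses the GPU (llama.cpp does this through its Metal backend) and that the whole model fits in memory.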
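When choosing a quantization level, it helps to estimate whether the weights fit in memory at all. The sketch below is a rough planning aid under stated assumptions: the bits-per-weight values for Q8_0 and Q4_0 follow from 32-weight blocks with a 16-bit scale, the Q4_K_M figure is an approximate average, the 75% usable-memory fraction is a hypothetical safety margin, and the KV cache and runtime overhead are ignored. The 64, 128, and 192 GB options are the unified memory configurations Apple sold for the M2 Ultra.

```python
M2_ULTRA_MEMORY_OPTIONS_GB = (64, 128, 192)

# Approximate storage cost per weight; the Q4_K_M value is an assumed average.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,
    "Q4_K_M": 4.85,
    "Q4_0": 4.5,
}


def weight_gb(params_billion, bits_per_weight):
    """Approximate size of the weights in gigabytes."""
    return params_billion * bits_per_weight / 8.0


def smallest_config(size_gb, usable_fraction=0.75,
                    options=M2_ULTRA_MEMORY_OPTIONS_GB):
    """Smallest memory option whose usable share holds the weights, or None."""
    for total_gb in sorted(options):
        if size_gb <= total_gb * usable_fraction:
            return total_gb
    return None


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(70.0, bits)
        config = smallest_config(size)
        verdict = f"{config} GB configuration" if config else "does not fit"
        print(f"Llama3 70B {name}: about {size:.1f} GB of weights -> {verdict}")
```

Under these assumptions, the F16 build only fits on the largest configuration, while the 4-bit builds leave room to spare. Longer contexts need extra memory for the KV cache, so leave more headroom than this estimate suggests.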

FAQ

Q: Why do I need to worry about "token generation speed" for LLMs?

A: Token generation speed, measured in tokens per second, reflects how quickly an LLM produces text once it has read your prompt. (Processing speed, the other column in the tables above, measures how quickly it reads the prompt.) A faster generation speed means smoother and more responsive interactions. It's like the difference between typing on a clunky old typewriter and a lightning-fast keyboard.
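To turn tokens per second into a wait time, divide the prompt length by the processing speed and the response length by the generation speed, then add the two. The sketch below uses the Llama3 70B Q4_K_M figures from this article; the prompt and response lengths are arbitrary example values.

```python
def response_time_seconds(prompt_tokens, output_tokens,
                          processing_tps, generation_tps):
    """Estimated time to read the prompt and then generate the reply."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


if __name__ == "__main__":
    # Llama3 70B Q4_K_M on the M2 Ultra, from the benchmark table.
    total = response_time_seconds(
        prompt_tokens=512,    # example prompt length (assumption)
        output_tokens=256,    # example reply length (assumption)
        processing_tps=117.76,
        generation_tps=12.13,
    )
    print(f"Estimated wait for a full reply: {total:.1f} seconds")
```

For most chat-style workloads the generation term dominates, which is why the generation column matters more than the processing column for perceived responsiveness.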

Q: What are the drawbacks of running LLMs locally?

A: The main drawbacks are computational demands and resource limitations. Running a large model locally requires a powerful computer with significant memory, processing power, and potentially specialized hardware. It's a tradeoff between control and efficiency.

Q: Is the M2 Ultra a good choice for running LLMs?

A: Absolutely! The M2 Ultra is a powerful processor designed for demanding tasks like AI. However, keep in mind that even with this chip, large models like Llama3 70B will still require optimization and resource management for smooth operation.

Q: What's the future of local LLM development?

A: The future is bright! As hardware evolves and optimization techniques improve, running LLMs locally will become more accessible and efficient. Imagine having a powerful AI assistant in your pocket, capable of understanding your needs and providing insightful responses in real-time.

Keywords

Apple M2 Ultra, Llama3 70B, Large Language Model, LLM, Token Generation Speed, Performance Analysis, Quantization, Model Pruning, Parallel Processing, Hardware Acceleration, Local LLM, Edge Computing, AI, Data Type, F16, Q4_K_M, Q8_0, Processing Speed, Generation Speed, Practical Recommendations, Use Cases, Workarounds, Future of LLMs.