7 Surprising Facts About Running Llama2 7B on Apple M2 Max

Large Language Models (LLMs) have generated plenty of excitement, and for good reason! These models can generate text, translate languages, write creative content, and answer questions. But what happens when you want to run one locally, on your own machine? Welcome to the world of on-device LLM inference.

This article dives deep into running the popular Llama2 7B model on the Apple M2 Max chip, uncovering some surprising performance insights.

Introducing Llama2 7B and the M2 Max: A Match Made in AI Heaven?

Llama2 7B, developed by Meta, is a popular, openly available LLM known for its versatility and performance. The Apple M2 Max, in its top configuration with a 38-core GPU and up to 96GB of unified memory, offers plenty of processing power. But how well do the two actually work together? Let's find out!

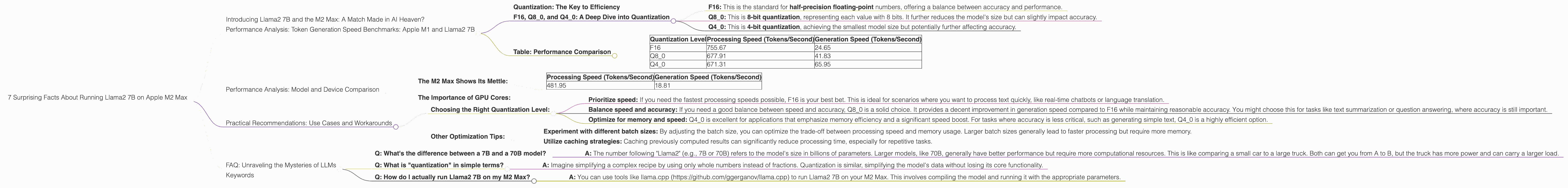

Performance Analysis: Token Generation Speed Benchmarks on Apple M2 Max with Llama2 7B

Quantization: The Key to Efficiency

LLMs like Llama2 7B are massive: its roughly 7 billion weights take about 13.5GB when stored as 16-bit floats. To run such a model efficiently on a device like the M2 Max, we use quantization, a technique that stores each weight with fewer bits. This shrinks the model and its memory usage and, as the benchmarks below show, speeds up token generation.

Imagine a recipe where you only use whole teaspoons of ingredients instead of measuring tiny fractions of a teaspoon. Quantization is like that: it simplifies the model's numbers while preserving most of its accuracy.
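A minimal sketch of the idea, assuming a simple symmetric scheme: split the weights into blocks of 32, store one scale per block, and round each weight to a small signed integer. This mirrors the general shape of llama.cpp's Q8_0 format but is not its actual code, and the function names are hypothetical.

```python
from __future__ import annotations

BLOCK_SIZE = 32  # weights per block (illustrative; llama.cpp's Q8_0/Q4_0 also use 32)


def quantize_block(block: list[float], bits: int) -> tuple[float, list[int]]:
    """Map floats to signed integers in [-qmax, qmax] sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 1.0  # avoid dividing by zero
    q = [max(-qmax, min(qmax, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the block scale."""
    return [scale * v for v in q]


def max_error(weights: list[float], bits: int) -> float:
    """Largest absolute round-trip error over all blocks."""
    worst = 0.0
    for i in range(0, len(weights), BLOCK_SIZE):
        block = weights[i:i + BLOCK_SIZE]
        scale, q = quantize_block(block, bits)
        restored = dequantize_block(scale, q)
        worst = max(worst, max(abs(a - b) for a, b in zip(block, restored)))
    return worst


if __name__ == "__main__":
    import random

    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # toy "weights"
    for bits in (8, 4):
        print(f"{bits}-bit max round-trip error: {max_error(weights, bits):.6f}")
```

Running it shows the 4-bit round trip losing noticeably more precision than the 8-bit one, which is the accuracy trade-off between the formats compared below.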

F16, Q8_0, and Q4_0: A Deep Dive into Quantization

We'll analyze the performance of Llama2 7B at three precision levels:

- F16: 16-bit half-precision floating point, storing each weight in 16 bits. This is the unquantized baseline here, with the highest accuracy and the largest size.

- Q8_0: 8-bit quantization. Weights are stored as 8-bit integers in small blocks, each with a shared scale factor. This roughly halves the model's size compared with F16, usually with only a slight impact on accuracy.

- Q4_0: 4-bit quantization using the same block-plus-scale scheme. It produces the smallest model but can affect accuracy more noticeably.
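To put rough numbers on these formats, here is a weight-size estimate. It is a sketch that assumes about 6.74 billion parameters and llama.cpp's block layouts, where Q8_0 stores 32 weights as 32 bytes plus a 2-byte scale (8.5 bits per weight) and Q4_0 stores them as 16 bytes plus a 2-byte scale (4.5 bits per weight). It ignores metadata, tensors kept at other precisions, and the KV cache, so real files differ somewhat.

```python
N_PARAMS = 6.74e9  # assumption: approximate Llama2 7B parameter count

# Effective bits per weight, including the per-block fp16 scale.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 34 * 8 / 32,  # 32 one-byte values + 2-byte scale per 32 weights = 8.5
    "Q4_0": 18 * 8 / 32,  # 16 bytes of 4-bit values + 2-byte scale per 32 weights = 4.5
}


def weight_gb(fmt: str, n_params: float = N_PARAMS) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


if __name__ == "__main__":
    for fmt in BITS_PER_WEIGHT:
        print(f"{fmt:5s} ~{weight_gb(fmt):.1f} GB")
```

Running it prints about 13.5GB for F16, 7.2GB for Q8_0, and 3.8GB for Q4_0, all well within the M2 Max's unified memory.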

Table: Performance Comparison

| Quantization Level | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| F16 | 755.67 | 24.65 |
| Q8_0 | 677.91 | 41.83 |
| Q4_0 | 671.31 | 65.95 |

Key Observations:

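- Processing Speed: This measures prompt processing, or how quickly the model reads your input. F16 leads at 755.67 tokens/s, while Q8_0 (677.91) and Q4_0 (671.31) are only about 10% slower. Prompt processing handles many tokens in parallel and is limited mostly by GPU compute, so smaller weights help little here, and unpacking quantized weights likely adds a small overhead.

- Generation Speed: Generating output is much slower than processing the prompt, because tokens are produced one at a time and each new token requires reading every model weight from memory. Generation is therefore limited mainly by memory bandwidth, so smaller weights pay off directly: Q8_0 generates about 1.7x faster and Q4_0 about 2.7x faster than F16. This is why the right quantization level depends on your specific application.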
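The bandwidth argument can be turned into a rough upper bound: if every weight is read once per generated token, tokens per second cannot exceed memory bandwidth divided by model size. The sketch below assumes Apple's advertised 400GB/s for the M2 Max and approximate weight sizes of 13.5, 7.2, and 3.8GB (about 6.74 billion parameters at 16, 8.5, and 4.5 bits per weight). It ignores the KV cache and compute costs, so treat it as an estimate, not a prediction.

```python
BANDWIDTH_GB_S = 400.0  # assumption: Apple's advertised M2 Max memory bandwidth

# Approximate weight sizes (GB) and measured generation speeds (tokens/s)
# from the M2 Max table.
MODEL_GB = {"F16": 13.5, "Q8_0": 7.2, "Q4_0": 3.8}
MEASURED_TG = {"F16": 24.65, "Q8_0": 41.83, "Q4_0": 65.95}


def max_tokens_per_s(model_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Bandwidth-bound ceiling: one full pass over the weights per token."""
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    for fmt, size in MODEL_GB.items():
        bound = max_tokens_per_s(size)
        measured = MEASURED_TG[fmt]
        print(f"{fmt:5s} bound ~{bound:5.1f} t/s, measured {measured:5.2f} t/s "
              f"({measured / bound:.0%} of bound)")
```

All three measured speeds land below their bounds, and the smaller formats reach a lower fraction of theirs, which hints that costs other than reading weights start to matter as the weights shrink.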

Performance Analysis: Model and Device Comparison

The M2 Max Shows Its Mettle:

The M2 Max outperforms the previous-generation M1 Max when running Llama2 7B. With F16 weights, the M1 Max (with 32 GPU cores) is noticeably slower:

F16 comparison:

| Device | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| M2 Max (38-core GPU) | 755.67 | 24.65 |
| M1 Max (32-core GPU) | 481.95 | 18.81 |

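In these F16 figures, the M2 Max processes prompts about 57% faster and generates text about 31% faster than the M1 Max. It may not be the fastest choice in every case, but it is a strong contender for running LLMs locally.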

The Importance of GPU Cores:

The M2 Max's 38-core GPU plays a crucial role in its performance. More cores mean more parallel processing, which matters most for prompt processing, where the GPU works on many tokens at once. Core count is not the whole story, though: the M2 Max has about 19% more GPU cores than the 32-core M1 Max yet processes prompts more than 50% faster, so other factors, likely including per-core GPU improvements, account for the rest.

Think of it like this: imagine 38 chefs working together to cook a meal. They can work on different parts of the dish simultaneously, finishing much faster than a single chef could. Prompt processing works the same way. Token generation benefits less, because each token depends on the one before it and speed is limited mainly by memory bandwidth.
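A quick way to check how much of the M2 Max's lead core count alone could explain is to compare ratios. This sketch simply reuses the F16 figures from the two tables above; the dictionary layout and helper names are illustrative.

```python
# F16 figures (tokens/s) and GPU core counts from the tables above.
m2_max = {"gpu_cores": 38, "processing": 755.67, "generation": 24.65}
m1_max = {"gpu_cores": 32, "processing": 481.95, "generation": 18.81}


def ratio(key: str) -> float:
    """M2 Max value divided by M1 Max value for the given metric."""
    return m2_max[key] / m1_max[key]


def per_core(chip: dict) -> float:
    """Prompt-processing throughput per GPU core."""
    return chip["processing"] / chip["gpu_cores"]


if __name__ == "__main__":
    print(f"GPU core ratio:      {ratio('gpu_cores'):.3f}x")
    print(f"Processing speedup:  {ratio('processing'):.3f}x")
    print(f"Generation speedup:  {ratio('generation'):.3f}x")
    print(f"Per-core processing: M1 Max {per_core(m1_max):.1f}, "
          f"M2 Max {per_core(m2_max):.1f} tokens/s")
```

Per-core prompt throughput rises from about 15 to about 20 tokens/s in these figures, consistent with core count explaining only part of the gap.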

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Quantization Level:

- Prioritize accuracy: F16 keeps the full half-precision weights, giving reference output quality and the fastest prompt processing (755.67 tokens/s). However, it has the slowest generation of the three (24.65 tokens/s), so it suits workloads that read long inputs but produce short outputs, such as classifying or scoring documents, rather than real-time chatbots.

- Balance speed and accuracy: Q8_0 is a solid middle ground. It generates about 1.7x faster than F16 (41.83 vs. 24.65 tokens/s), roughly halves memory use, and its accuracy is typically very close to F16. You might choose it for tasks like text summarization or question answering, where accuracy is still important.

- Optimize for memory and speed: Q4_0 has the smallest footprint (a little over a quarter of F16's) and the fastest generation, at 65.95 tokens/s or about 2.7x F16. For tasks where accuracy is less critical, such as generating simple text, Q4_0 is a highly efficient option.
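These recommendations can be condensed into a hypothetical helper that picks the most precise format whose measured generation speed on this M2 Max meets a target. The speeds come from the table above; the function name and the idea of a single speed threshold are illustrative assumptions, and a real choice should also weigh accuracy on your own task.

```python
from __future__ import annotations

from typing import Optional

# Generation speeds (tokens/s) from the M2 Max table, most precise format first.
GENERATION_SPEED = [("F16", 24.65), ("Q8_0", 41.83), ("Q4_0", 65.95)]


def pick_format(min_tokens_per_s: float) -> Optional[str]:
    """Return the most precise format fast enough, or None if none qualifies."""
    for fmt, speed in GENERATION_SPEED:
        if speed >= min_tokens_per_s:
            return fmt
    return None


if __name__ == "__main__":
    for target in (20, 30, 50, 100):
        print(target, pick_format(target))
```

For example, a target of 30 tokens/s selects Q8_0, while 100 tokens/s returns None because no format in the table reaches it.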

Other Optimization Tips:

- Experiment with different batch sizes: The batch size sets how many prompt tokens are processed in one step. Larger batches generally speed up prompt processing but require more memory, so adjust it to balance the two.

- Utilize caching strategies: Reusing previously computed results can significantly reduce processing time for repetitive tasks. For example, if many requests share the same long prompt prefix, such as a system prompt, the work done on that prefix can be cached and reused instead of recomputed.
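Here is a minimal sketch of prompt-prefix caching, assuming prompts arrive as lists of token IDs. The `PrefixCache` class is hypothetical, not a real library API; a real implementation would also keep the model's computed state (its KV cache) for the cached prefix, which this sketch leaves out.

```python
from __future__ import annotations


class PrefixCache:
    """Tracks the last processed prompt so shared prefixes can be skipped."""

    def __init__(self) -> None:
        self.tokens: list[int] = []

    def tokens_to_process(self, prompt: list[int]) -> list[int]:
        """Return the part of `prompt` not covered by the cached prefix,
        then remember `prompt` as the new cached sequence."""
        common = 0
        for cached, new in zip(self.tokens, prompt):
            if cached != new:
                break
            common += 1
        self.tokens = list(prompt)
        return prompt[common:]


if __name__ == "__main__":
    cache = PrefixCache()
    system = [101, 102, 103]  # hypothetical token IDs for a shared system prompt
    print(cache.tokens_to_process(system + [7, 8]))  # first call: everything
    print(cache.tokens_to_process(system + [9]))     # only the new suffix
```

The design choice is to compare token IDs rather than raw text, since the model's cached work is tied to exact token positions.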

FAQ: Unraveling the Mysteries of LLMs

- Q: What's the difference between a 7B and a 70B model?

- A: The number following "Llama2" (e.g., 7B or 70B) refers to the model's size in billions of parameters. Larger models, like 70B, generally produce higher-quality output but need far more memory and compute, and they run more slowly. This is like comparing a small car to a large truck. Both can get you from A to B, but the truck has more power and can carry a larger load.

- Q: What is "quantization" in simple terms?

- A: Imagine simplifying a complex recipe by using only whole numbers instead of fractions. Quantization works similarly: it stores the model's numbers with less precision, making the model smaller and faster while keeping most of its quality.

- Q: How do I actually run Llama2 7B on my M2 Max?

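- A: You can use tools like llama.cpp (https://github.com/ggerganov/llama.cpp) to run Llama2 7B on your M2 Max. This involves building llama.cpp, getting the model weights in its GGUF format (either converting them yourself or downloading a pre-quantized file), and running it with the appropriate parameters.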
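As a starting point, here is a sketch that assembles a llama.cpp command line from Python. It assumes a recent build where the main binary is called `llama-cli` (older builds named it `main`) and uses the flags `-m` (model file), `-p` (prompt), `-n` (number of tokens to generate), and `-ngl` (number of layers to offload to the GPU). The model path is a hypothetical placeholder; check `llama-cli --help` for your version before running anything.

```python
import shlex


def llama_cli_command(model_path: str, prompt: str, n_predict: int = 128,
                      n_gpu_layers: int = 99, binary: str = "llama-cli") -> list[str]:
    """Build an argument list for llama.cpp's CLI (binary name is an assumption)."""
    return [
        binary,
        "-m", model_path,           # GGUF model file
        "-p", prompt,               # prompt text
        "-n", str(n_predict),       # tokens to generate
        "-ngl", str(n_gpu_layers),  # layers to offload to the GPU (99 = all for 7B)
    ]


if __name__ == "__main__":
    cmd = llama_cli_command("models/llama-2-7b.Q4_0.gguf",  # hypothetical path
                            "Explain quantization in one sentence.")
    print(shlex.join(cmd))  # copy-paste into a terminal
```

Building the arguments as a list rather than a single string lets you pass them straight to `subprocess.run(cmd, check=True)` without worrying about shell quoting.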

Keywords

M2 Max, Apple, Llama2, Llama2 7B, LLM, Large Language Model, GPU, Quantization, F16, Q8_0, Q4_0, Token Generation Speed, Performance Analysis, On-device Inference.