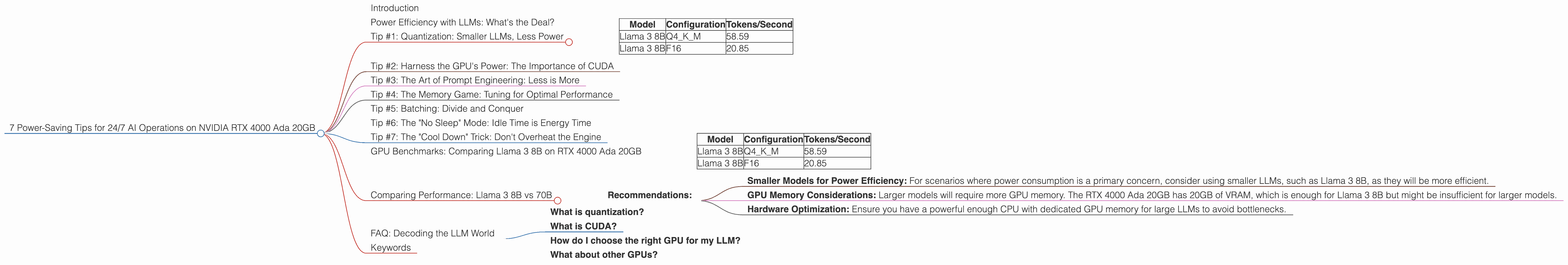

7 Power-Saving Tips for 24/7 AI Operations on NVIDIA RTX 4000 Ada 20GB

Introduction

Running large language models (LLMs) locally is a great way to explore their capabilities, experiment with different prompts, and even build custom applications. However, these models are computationally demanding, and running them 24/7 can quickly drive up your electricity bill. This is where the NVIDIA RTX 4000 Ada 20GB comes in: a capable GPU for AI workloads that still benefits from smart optimizations to keep power consumption in check.

This article will delve into practical tips and tricks to optimize your LLM operations on this powerful GPU, maximizing performance while minimizing your energy footprint. We'll analyze real-world data, compare different configurations, and provide actionable advice to help you get the most out of your hardware.

Power Efficiency with LLMs: What's the Deal?

Let's get geeky for a moment. Imagine a powerful AI like a Formula 1 racecar - blazing fast but guzzling fuel (electricity in our case). Running an LLM like Llama 3 8B can be like pushing the car to its limits, demanding lots of power to maintain top speed. But like a skilled driver, we can optimize the car to perform efficiently.

Now, running an LLM 24/7 is like driving that Formula 1 car in an endurance race. It's all about finding the right balance: high performance without burning all the fuel. This is where our power-saving tips come into play.

Tip #1: Quantization: Smaller LLMs, Less Power

Think about this: We can shrink the model size without sacrificing too much accuracy. It's like fitting your racecar with a slightly downsized engine – it might not be as fast, but it gets the job done with less fuel. Quantization is like trimming down your LLM, converting its weights to a smaller data type.

This means that instead of storing weights as 32-bit floating-point numbers, we can use smaller 16-bit, 8-bit, or even 4-bit representations, like swapping a whole banana for a bite-sized piece. It's a clever trick that can significantly reduce the memory footprint and computational demand.
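To make the idea concrete, here is a minimal sketch of block-wise "absmax" quantization to signed 4-bit integers: each block of weights is stored as small integers plus one shared floating-point scale. This is a simplified illustration with hypothetical function names, not llama.cpp's actual Q4_K_M format, which adds further refinements on top of this basic idea.

```python
# Simplified, illustrative 4-bit "absmax" block quantizer.
# NOT llama.cpp's real Q4_K_M format; it only shows the core idea.
from typing import List, Tuple

QMAX = 7  # signed 4-bit range used here: -7..7 (the code -8 is left unused)


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map a block of floats to small integers plus one shared scale."""
    absmax = max(abs(w) for w in weights)
    scale = absmax / QMAX if absmax > 0 else 1.0
    # Round each weight to the nearest multiple of `scale`, clamped to 4 bits.
    q = [max(-QMAX, min(QMAX, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate floats from the integer codes and the scale."""
    return [scale * v for v in q]


if __name__ == "__main__":
    block = [0.12, -0.50, 0.33, 0.01, -0.27, 0.45, -0.08, 0.20]
    scale, q = quantize_block(block)
    approx = dequantize_block(scale, q)
    print("scale:", round(scale, 4))
    print("4-bit codes:", q)
    print("max error:", max(abs(a - b) for a, b in zip(block, approx)))
```

Each weight now needs 4 bits instead of 32, plus a small per-block cost for the scale; the price is a rounding error of at most half a scale step per weight.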

The results speak for themselves:

| Model | Configuration | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 |
| Llama 3 8B | F16 | 20.85 |

Note: Q4_K_M is a 4-bit quantization format. It is almost three times faster than F16 here, but it may slightly reduce output quality.

Tip #2: Harness the GPU's Power: The Importance of CUDA

Let's go back to the Formula 1 car analogy. Imagine trying to drive the car on a dirt track - not exactly the ideal environment. CUDA is like having the perfect track for your car. It's NVIDIA's parallel computing platform that lets the GPU shine.

CUDA allows us to run our LLM model directly on the GPU's powerful processing cores, taking advantage of its parallel processing capabilities. This is way more efficient than relying on the CPU alone, which is like trying to drive the Formula 1 car on a bumpy road.

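To make sure you're actually using CUDA, install the NVIDIA driver, use a CUDA-enabled build of your inference framework, and offload the model's layers to the GPU (in llama.cpp, for example, this is the "--n-gpu-layers" or "-ngl" option).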
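A quick way to confirm the driver can see your card is "nvidia-smi -L", which lists the installed GPUs. The sketch below runs it from Python and extracts the GPU names; it assumes the usual output format ("GPU 0: <name> (UUID: ...)"), and the helper names are hypothetical. An empty list points to a driver or CUDA setup problem; a non-empty one does not by itself prove your inference framework is using the GPU, so check its startup log as well.

```python
import re
import shutil
import subprocess
from typing import List

# Assumed line format of `nvidia-smi -L`, e.g.
# "GPU 0: NVIDIA RTX 4000 Ada Generation (UUID: GPU-...)"
_GPU_LINE = re.compile(r"^GPU (\d+): (.+?) \(UUID: [^)]+\)\s*$")


def parse_gpu_list(output: str) -> List[str]:
    """Return the GPU names found in `nvidia-smi -L` output."""
    names = []
    for line in output.splitlines():
        match = _GPU_LINE.match(line.strip())
        if match:
            names.append(match.group(2))
    return names


def visible_gpus() -> List[str]:
    """Run `nvidia-smi -L` if it is installed; return [] if it is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(["nvidia-smi", "-L"], capture_output=True, text=True)
    if result.returncode != 0:
        return []
    return parse_gpu_list(result.stdout)


if __name__ == "__main__":
    gpus = visible_gpus()
    print(gpus if gpus else "No NVIDIA GPU visible: check the driver and CUDA setup.")
```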

Tip #3: The Art of Prompt Engineering: Less is More

A good prompt is like a precise steering wheel - it guides your LLM toward the desired outcome, ensuring efficient processing. A poorly crafted prompt, like trying to steer a car with a loose wheel, leads to wasted energy and unnecessary calculations.

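Here's the trick: short, precise prompts with clear instructions give your LLM the information it needs without forcing it to process unnecessary tokens. Every prompt token has to be processed before the model produces its first output token, so trimming filler saves GPU work on every single request.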
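A minimal sketch of the idea, assuming the common rule of thumb of roughly four characters per English token (real counts depend on the model's tokenizer; the helper names are hypothetical). It compares a wordy prompt with a concise one after collapsing stray whitespace.

```python
import re


def compact_prompt(prompt: str) -> str:
    """Collapse runs of spaces, tabs, and newlines into single spaces."""
    return re.sub(r"\s+", " ", prompt).strip()


def rough_token_count(text: str) -> int:
    """Rough estimate (~4 characters per token); use the real tokenizer for exact counts."""
    if not text:
        return 0
    return max(1, round(len(text) / 4))


verbose = """Hello! I was wondering if you could possibly help me out.
    Could you please, if it's not too much trouble, summarize the
    following article for me in a few short sentences?"""
concise = "Summarize the following article in 3 sentences."

for label, prompt in (("verbose", verbose), ("concise", concise)):
    text = compact_prompt(prompt)
    print(f"{label}: ~{rough_token_count(text)} tokens")
```

Collapsing whitespace is only safe for plain prose; leave it out when the prompt contains code or formatted data where line breaks matter.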

Tip #4: The Memory Game: Tuning for Optimal Performance

Remember that powerful engine in our Formula 1 car? It needs the right amount of fuel to perform at its best. Just like that, your GPU needs the right amount of memory to run your LLM efficiently.

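Here's the key: make sure the whole model, plus the memory needed for its context (the KV cache), fits in the GPU's 20GB of VRAM. If it doesn't, part of the work spills over to slower system RAM, which is like the engine running out of fuel mid-race! Adjust your LLM implementation's settings, such as how many layers are offloaded to the GPU and the context length, based on the model size and complexity.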
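A back-of-the-envelope VRAM budget helps here. The sketch below adds the weight memory to the KV cache size (keys and values for every layer, KV head, and token position). It uses Llama 3 8B's published shape (about 8.03B parameters, 32 layers, 8 KV heads, head dimension 128) and assumes an F16 KV cache; the 1 GiB overhead allowance for the CUDA context and scratch buffers is a guess, not a measured value, and the function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

GIB = 1024 ** 3


@dataclass(frozen=True)
class ModelShape:
    n_params: float  # total parameter count
    n_layers: int    # transformer layers
    n_kv_heads: int  # key/value heads (grouped-query attention)
    head_dim: int    # dimension per attention head


# Llama 3 8B: ~8.03B parameters, 32 layers, 8 KV heads, head dimension 128.
LLAMA3_8B = ModelShape(n_params=8.03e9, n_layers=32, n_kv_heads=8, head_dim=128)


def weights_bytes(m: ModelShape, bits_per_weight: float) -> float:
    """Memory for the weights alone at a given (average) bits per weight."""
    return m.n_params * bits_per_weight / 8


def kv_cache_bytes(m: ModelShape, context_len: int, bytes_per_elem: int = 2) -> int:
    """Keys and values (the factor 2) for every layer, KV head, and position.
    bytes_per_elem=2 assumes an F16 cache."""
    return 2 * m.n_layers * m.n_kv_heads * m.head_dim * context_len * bytes_per_elem


def fits_in_vram(m: ModelShape, bits_per_weight: float, context_len: int,
                 vram_gib: float = 20.0, overhead_gib: float = 1.0) -> Tuple[bool, float]:
    """Return (fits, estimated GiB for weights + KV cache).
    overhead_gib is an assumed allowance, not a measurement."""
    need_gib = (weights_bytes(m, bits_per_weight) + kv_cache_bytes(m, context_len)) / GIB
    return need_gib + overhead_gib <= vram_gib, need_gib


if __name__ == "__main__":
    for name, bits in (("F16", 16), ("4-bit (nominal)", 4)):
        ok, need = fits_in_vram(LLAMA3_8B, bits, context_len=8192)
        print(f"{name}: ~{need:.1f} GiB + overhead -> fits in 20 GiB: {ok}")
```

At an 8,192-token context the F16 KV cache adds exactly 1 GiB, so the context length is a useful dial when a configuration is just over budget.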

Tip #5: Batching: Divide and Conquer

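Our Formula 1 car is fast, but sending it around the track once per prompt wastes laps. Batching groups multiple prompts and processes them simultaneously, so every lap does more work.

This helps the GPU work more efficiently: when generating text, much of the time goes into reading the model's weights from memory, and a batch lets a single pass over the weights serve several prompts. The result is more tokens per second and less energy per token, at the cost of some extra latency for each individual request. Experiment with different batch sizes to see what works best for your specific LLM and use case.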
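Here is a minimal sketch of the two halves of that experiment, with hypothetical helper names: grouping prompts into fixed-size batches, and picking the batch size that yields the most tokens per joule (tokens per second divided by average watts). The measurements would come from your own runs, for example throughput reported by your inference server and power readings from "nvidia-smi".

```python
from typing import Dict, Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def batched(items: Iterable[T], batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive groups of up to `batch_size` items; the last may be shorter."""
    if batch_size < 1:
        raise ValueError("batch_size must be >= 1")
    batch: List[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch


def best_batch_size(tokens_per_s: Dict[int, float], avg_watts: Dict[int, float]) -> int:
    """Return the batch size with the highest tokens per joule (tokens/s per watt)."""
    return max(tokens_per_s, key=lambda b: tokens_per_s[b] / avg_watts[b])
```

Optimizing for tokens per joule rather than raw tokens per second keeps the focus on energy; if latency matters for your users, cap the batch size at the largest value whose response times you can accept.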

Tip #6: The "No Sleep" Mode: Idle Time is Energy Time

Imagine our Formula 1 car idling at the starting line. It's not racing, yet it's still burning fuel. Similarly, idle GPU cores in your computer are like the car idling, consuming power even when your LLM is not actively processing.

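Here's the trick: unload the model, or stop the inference service, when it hasn't received a request for a while, which helps the GPU settle into a low-power idle state. It's similar to turning off the engine when you're parked. You can also explore NVIDIA's power management features; for example, "nvidia-smi" can report the GPU's current power draw and, on cards that allow it, cap board power with its "-pl" option (this requires administrator rights).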
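The idle-shutdown idea can be sketched as a small watchdog. This is a minimal, framework-agnostic sketch: `load` and `unload` are hypothetical callbacks you would wire to your own inference server, and the 300-second timeout is an arbitrary example value.

```python
import time
from typing import Callable, Optional


class IdleUnloader:
    """Calls unload() once no request has arrived for `idle_timeout_s` seconds,
    and calls load() again on the next request."""

    def __init__(self, load: Callable[[], None], unload: Callable[[], None],
                 idle_timeout_s: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._load = load
        self._unload = unload
        self._timeout = idle_timeout_s
        self._clock = clock  # injectable so the logic can be tested without waiting
        self._last_request: Optional[float] = None
        self.loaded = False

    def on_request(self) -> None:
        """Call at the start of every request; reloads the model if it was unloaded."""
        if not self.loaded:
            self._load()
            self.loaded = True
        self._last_request = self._clock()

    def tick(self) -> None:
        """Call periodically (e.g. every few seconds) from a background loop."""
        if self.loaded and self._last_request is not None:
            if self._clock() - self._last_request >= self._timeout:
                self._unload()
                self.loaded = False
```

The trade-off is a reload delay on the first request after an idle period, so pick a timeout that matches how bursty your traffic is.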

Tip #7: The "Cool Down" Trick: Don't Overheat the Engine

Just like a car engine needs cooling during a race, your GPU needs to dissipate heat efficiently. When it runs too hot, it throttles its clock speeds, so your LLM slows down and every job takes longer to finish.

Here's the solution: make sure your system has proper cooling, such as good case airflow, clean fans, and unobstructed heat sinks. You can also monitor GPU temperatures with "nvidia-smi" and, where your card and driver allow it, adjust fan speeds to keep things cool.
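A minimal monitoring sketch built on the "nvidia-smi --query-gpu" CSV interface, reading temperature, power draw, and utilization for each GPU. The 83 °C alert threshold is an arbitrary placeholder, not an NVIDIA specification, and the helper names are hypothetical; run it from a scheduler or loop to log readings over time.

```python
import shutil
import subprocess
from typing import List, NamedTuple

QUERY = ["nvidia-smi",
         "--query-gpu=temperature.gpu,power.draw,utilization.gpu",
         "--format=csv,noheader,nounits"]


class GpuSample(NamedTuple):
    temp_c: float
    power_w: float
    util_pct: float


def parse_samples(output: str) -> List[GpuSample]:
    """Parse one CSV line per GPU, e.g. "62, 118.45, 97"."""
    samples = []
    for line in output.strip().splitlines():
        fields = [f.strip() for f in line.split(",")]
        # Skip malformed lines and unsupported readings such as "[N/A]".
        if len(fields) != 3 or any(not f.replace(".", "", 1).isdigit() for f in fields):
            continue
        samples.append(GpuSample(*(float(f) for f in fields)))
    return samples


def read_gpus() -> List[GpuSample]:
    """Query nvidia-smi if it is installed; return [] otherwise."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(QUERY, capture_output=True, text=True)
    return parse_samples(result.stdout) if result.returncode == 0 else []


def too_hot(sample: GpuSample, limit_c: float = 83.0) -> bool:
    """Placeholder threshold; tune it for your card and environment."""
    return sample.temp_c >= limit_c


if __name__ == "__main__":
    for i, s in enumerate(read_gpus()):
        flag = "  <-- check cooling" if too_hot(s) else ""
        print(f"GPU {i}: {s.temp_c:.0f} C, {s.power_w:.1f} W, {s.util_pct:.0f}%{flag}")
```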

GPU Benchmarks: Comparing Llama 3 8B on RTX 4000 Ada 20GB

Now let's dive into some real-world data to see how these tips can affect the performance of a popular language model. We'll focus on Llama 3 8B, a powerful yet manageable LLM, comparing two weight formats:

- Q4_K_M (4-bit quantization): This llama.cpp quantization format stores each weight in roughly 4 bits, dramatically reducing memory usage and allowing for faster processing.

- F16 (16-bit floating point): Half the size of a 32-bit representation, but still several times larger than Q4_K_M.

Let's compare the results:

| Model | Configuration | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 |
| Llama 3 8B | F16 | 20.85 |

Analysis:

- Q4_K_M: This configuration generates 58.59 tokens per second, about 2.8 times faster than F16. Because the GPU finishes each response sooner, Q4_K_M very likely uses less energy per generated token, although these benchmarks measure speed, not power draw.

- F16: While F16 represents the original weights more faithfully, it generates only 20.85 tokens per second, so each response keeps the GPU busy roughly 2.8 times longer, which likely means more energy per token.
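The efficiency claim rests on one extra step: energy per token equals power draw divided by generation speed. Assuming, purely for illustration, that both configurations draw the same board power $P$ (the benchmark did not measure this), the ratio works out as follows, where $r$ is the generation speed in tokens per second:

$$
E_{\text{token}} = \frac{P}{r}
\qquad\Rightarrow\qquad
\frac{E_{\text{F16}}}{E_{\text{Q4\_K\_M}}} = \frac{P / 20.85}{P / 58.59} = \frac{58.59}{20.85} \approx 2.81
$$

If the two configurations actually draw different amounts of power, the ratio shifts accordingly.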

Conclusion:

Our data shows that Q4_K_M is the clear winner on speed and, by extension, very likely on energy efficiency. This demonstrates how quantization can significantly reduce the computational needs of LLMs, leading to better performance and lower energy consumption.

Comparing Performance: Llama 3 8B vs 70B

While the data provided does not include performance metrics for larger models like Llama 3 70B on the RTX 4000 Ada 20GB, larger models require more memory and processing power. Expect noticeably slower token generation and higher energy consumption per token.

Recommendations:

- Smaller Models for Power Efficiency: For scenarios where power consumption is a primary concern, consider using smaller LLMs, such as Llama 3 8B, as they will be more efficient.

- GPU Memory Considerations: Larger models will require more GPU memory. The RTX 4000 Ada 20GB has 20GB of VRAM, which is enough for Llama 3 8B but might be insufficient for larger models.

- Hardware Optimization: If a model doesn't fit entirely in VRAM, part of it runs on the CPU from system RAM, so make sure your CPU and system memory won't become the bottleneck.
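A quick weights-only estimate shows why Llama 3 70B cannot fit entirely in 20GB. Counting only the weights (no KV cache or runtime overhead), and using a nominal 4 bits per weight (real formats such as Q4_K_M store somewhat more):

$$
70 \times 10^{9} \times \frac{4}{8}\ \text{bytes} = 35 \times 10^{9}\ \text{bytes} \approx 35\ \text{GB} > 20\ \text{GB},
\qquad
70 \times 10^{9} \times 2\ \text{bytes} = 140\ \text{GB}\ \text{(F16)}
$$

By the same arithmetic, Llama 3 8B needs about 16 GB in F16 and about 4 GB at 4 bits, which is why it fits.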

FAQ: Decoding the LLM World

What is quantization?

Quantization is a technique for reducing the size of a model by converting its weights to smaller data types. It's like saving a photo with fewer colors: the image keeps the same number of pixels, but each pixel takes fewer bits to store. It allows for faster processing and less memory usage.

What is CUDA?

CUDA is NVIDIA's parallel computing platform that allows you to run applications directly on the GPU's powerful processing cores. This is like having a special track designed for your Formula 1 car to race faster.

How do I choose the right GPU for my LLM?

Consider the size and complexity of your LLM, the amount of GPU memory required, and your budget. The RTX 4000 Ada 20GB is a good choice for running smaller LLMs, while larger models may require a more powerful GPU with more memory.

What about other GPUs?

The RTX 4000 Ada 20GB is a great option for running LLMs, but other GPUs are available in the market. For more information on comparing GPUs for LLM inference, refer to resources like the "GPU Benchmarks on LLM Inference" project (https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference).
