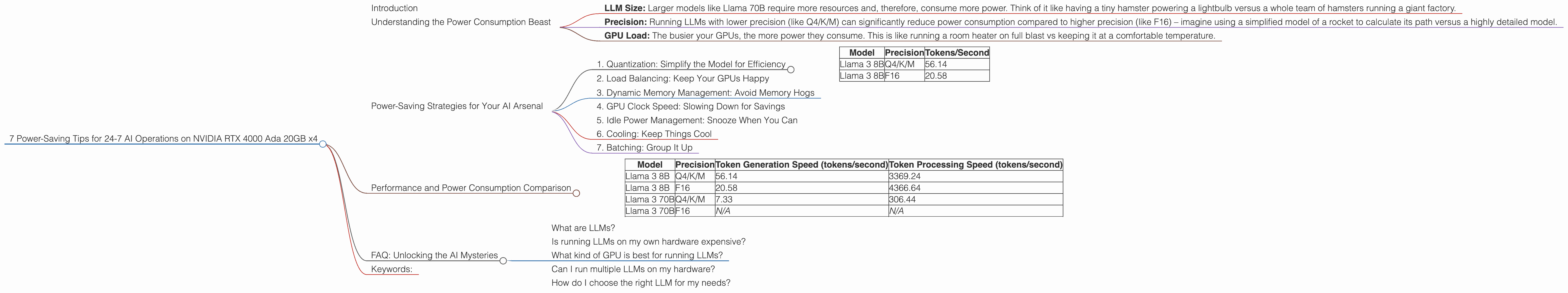

7 Power-Saving Tips for 24/7 AI Operations on NVIDIA RTX 4000 Ada 20GB x4

Introduction

Running large language models (LLMs) on your own hardware opens up a whole new world of possibilities. Imagine having instant access to a powerful AI assistant that can generate creative content, answer your questions, and even write code, all without relying on cloud services. But as LLMs grow in size and complexity, keeping them running 24/7 can become a power-hungry endeavor. That is where optimizing your setup pays off. This article walks through practical tips for running LLMs on an NVIDIA RTX 4000 Ada 20GB x4 setup while keeping your electricity bill in check.

Understanding the Power Consumption Beast

Before diving into power-saving techniques, let's understand the factors influencing your power bill:

- LLM Size: Larger models like Llama 3 70B need more memory and more computation for every token they generate, and therefore consume more energy. Think of it like having a tiny hamster powering a lightbulb versus a whole team of hamsters running a giant factory.

- Precision: Running LLMs at lower precision (like Q4_K_M) can significantly reduce energy use compared to higher precision (like F16), because the GPU has to move fewer bytes of model weights for every token. Imagine using a simplified model of a rocket to calculate its path versus a highly detailed one.

- GPU Load: The busier your GPUs, the more power they draw. This is like running a room heater on full blast versus keeping it at a comfortable temperature.

Power-Saving Strategies for Your AI Arsenal

Let's get into the nitty-gritty of optimizing your AI setup for energy efficiency:

1. Quantization: Simplify the Model for Efficiency

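Think of quantization as a "diet" for your AI model. It stores the model's weights at lower precision (like Q4_K_M, which averages roughly 4 to 5 bits per weight) instead of higher precision (like F16, 16 bits per weight), making the model lighter on its feet. Because generating each token requires reading essentially all of the weights from GPU memory, smaller weights mean less data to move, which translates into faster generation and less energy per token.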

For example:

On our beloved NVIDIA RTX 4000 Ada 20GB x4 rig, we can see the difference in token generation speed between Llama 3 8B running at Q4_K_M and at F16:

| Model | Precision | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 56.14 |
| Llama 3 8B | F16 | 20.58 |

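As you can see, using Q4_K_M for Llama 3 8B on this setup nearly triples token generation speed compared to F16 (about 2.7 times faster). If the GPUs draw roughly the same power in both cases, each token costs only about 37% as much energy, so you get the same amount of work for less electricity.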
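To turn speed into energy, divide power by throughput: watts are joules per second, so watts divided by tokens per second gives joules per token. The sketch below does that arithmetic for the two rows above. The 130 W figure is a hypothetical placeholder, not a measurement from this setup, and using the same figure for both precisions is itself an assumption; substitute the average power draw you measure during each run.

```python
# Llama 3 8B token generation speeds on the RTX 4000 Ada 20GB x4 rig (table above).
TOKENS_PER_SECOND = {"Q4_K_M": 56.14, "F16": 20.58}

# ASSUMPTION: hypothetical average power draw during generation, in watts.
# Replace it with your own measurement for each precision.
ASSUMED_POWER_WATTS = 130.0

JOULES_PER_KWH = 3.6e6


def joules_per_token(power_watts: float, tokens_per_second: float) -> float:
    """Watts are joules per second, so W / (tokens/s) = joules per token."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return power_watts / tokens_per_second


speedup = TOKENS_PER_SECOND["Q4_K_M"] / TOKENS_PER_SECOND["F16"]
print(f"Q4_K_M generates {speedup:.2f}x faster than F16")

for precision, tps in TOKENS_PER_SECOND.items():
    energy = joules_per_token(ASSUMED_POWER_WATTS, tps)
    kwh_per_million_tokens = energy * 1_000_000 / JOULES_PER_KWH
    print(f"{precision}: {energy:.2f} J/token, "
          f"{kwh_per_million_tokens:.2f} kWh per million tokens")
```

Because the same power figure is used for both rows, the energy ratio here is just the inverse of the speed ratio; with real measurements for each precision, the comparison becomes more informative.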

2. Load Balancing: Keep Your GPUs Happy

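Imagine a single GPU struggling with every request while its three neighbors sit idle. Load balancing spreads the workload across all your GPUs so that no single card is saturated while the others wait. On a four-GPU rig, a model small enough to fit on one card (such as Llama 3 8B at Q4_K_M) can run as a separate copy on each GPU, with each request routed to whichever copy is least busy; a large model like Llama 3 70B has to be split across the cards anyway. Keeping the load even avoids one card running hot and throttling, and it can improve throughput per watt. Think of it like having a team of workers instead of a single overworked person.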
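A minimal sketch of the "least busy copy" idea, assuming one model replica per GPU and a serving layer that calls `acquire()` before each request and `release()` after it. The `LeastLoadedDispatcher` class is hypothetical, not part of any particular inference server; it only tracks in-flight request counts and picks the GPU with the fewest.

```python
class LeastLoadedDispatcher:
    """Hypothetical router: one model replica per GPU, pick the GPU with the fewest in-flight requests."""

    def __init__(self, num_gpus: int = 4) -> None:
        if num_gpus < 1:
            raise ValueError("need at least one GPU")
        self.in_flight = [0] * num_gpus

    def acquire(self) -> int:
        """Reserve a slot on the least busy GPU and return its index."""
        # min() returns the lowest index among ties, so idle GPUs are used in order.
        gpu = min(range(len(self.in_flight)), key=self.in_flight.__getitem__)
        self.in_flight[gpu] += 1
        return gpu

    def release(self, gpu: int) -> None:
        """Mark one request on `gpu` as finished."""
        if self.in_flight[gpu] == 0:
            raise ValueError(f"GPU {gpu} has no in-flight requests")
        self.in_flight[gpu] -= 1


dispatcher = LeastLoadedDispatcher(num_gpus=4)
print([dispatcher.acquire() for _ in range(6)])  # prints [0, 1, 2, 3, 0, 1]
dispatcher.release(2)                            # a request on GPU 2 finishes
print(dispatcher.acquire())                      # prints 2, now the least busy GPU
```

Counting in-flight requests is the simplest load signal; a real deployment might weigh requests by prompt length or expected output size instead.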

3. Dynamic Memory Management: Avoid Memory Hogs

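Imagine setting a massive table full of plates when you only need a few. GPUs can end up doing the same with memory: some serving setups reserve memory up front for the largest possible workload, such as a key-value (KV) cache sized for the maximum context length. Dynamic memory management allocates just enough memory for the current task instead. Unused VRAM does not by itself draw much extra power, but the headroom you free up can let you fit a model on fewer GPUs or serve more requests per card, and that is where the savings come from. This keeps things lean and efficient.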
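The KV cache is a good place to see how much memory is at stake, because its size grows linearly with context length. The sketch below estimates it. The layer, KV-head, and head-dimension values are the published Llama 3 8B and 70B configurations as we understand them (treat them as assumptions and check your model's config.json), and the estimate assumes an F16 cache at 2 bytes per value.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, batch_size: int = 1,
                   bytes_per_value: int = 2) -> int:
    """Estimate KV-cache size: one key and one value vector per layer, KV head and token."""
    return (2 * num_layers * num_kv_heads * head_dim
            * context_tokens * batch_size * bytes_per_value)


# ASSUMED model shapes (check your model's config.json):
# (num_hidden_layers, num_key_value_heads, head_dim)
MODELS = {
    "Llama 3 8B": (32, 8, 128),
    "Llama 3 70B": (80, 8, 128),
}

GIB = 1024 ** 3

for name, (layers, kv_heads, head_dim) in MODELS.items():
    for context in (2048, 8192):
        size = kv_cache_bytes(layers, kv_heads, head_dim, context)
        print(f"{name}, {context} tokens: {size / GIB:.2f} GiB per sequence (F16 cache)")
```

At 8,192 tokens this works out to 1 GiB per sequence for the 8B model and 2.5 GiB for the 70B model, so sizing the cache to the context you actually use, rather than the maximum, frees a noticeable share of each 20GB card.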

4. GPU Clock Speed: Slowing Down for Savings

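Just like a car, your GPU can run at different speeds. Running your GPUs at a lower clock speed can decrease power consumption, but it can also reduce performance. Token generation is often limited by memory bandwidth rather than raw compute, so a moderate clock cap may cost less speed than you'd expect. It's like driving a car at a lower speed: you reach your destination a little later, but you save fuel.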

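Important Note: Every GPU has a supported clock range, which you can list with `nvidia-smi -q -d SUPPORTED_CLOCKS`. Stay within it, and measure throughput after each change: go too low and generation slows down faster than power drops, so each token can end up costing more energy, not less.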
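On NVIDIA GPUs, one way to cap clocks is nvidia-smi's `-lgc` (lock GPU clocks) option, undone with `-rgc`; both usually require administrator rights, and clock locking may not be available on every GPU and driver combination. The sketch below only builds and prints those commands unless you pass `dry_run=False`. The 300-1500 MHz range in the example is a hypothetical placeholder; pick values from your card's supported-clocks list.

```python
import shlex
import subprocess
from typing import Iterable, List


def lock_clock_commands(gpu_ids: Iterable[int], min_mhz: int, max_mhz: int) -> List[List[str]]:
    """Build one `nvidia-smi -i <id> -lgc <min>,<max>` command per GPU."""
    if not 0 < min_mhz <= max_mhz:
        raise ValueError("expected 0 < min_mhz <= max_mhz")
    return [["nvidia-smi", "-i", str(gpu), "-lgc", f"{min_mhz},{max_mhz}"]
            for gpu in gpu_ids]


def reset_clock_commands(gpu_ids: Iterable[int]) -> List[List[str]]:
    """Build one `nvidia-smi -i <id> -rgc` command per GPU to restore default clocks."""
    return [["nvidia-smi", "-i", str(gpu), "-rgc"] for gpu in gpu_ids]


def run(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print the commands, or execute them when dry_run is False (usually needs root)."""
    for cmd in commands:
        if dry_run:
            print(shlex.join(cmd))
        else:
            subprocess.run(cmd, check=True)


# Hypothetical placeholder range for all four GPUs; this dry run only prints.
run(lock_clock_commands(range(4), 300, 1500))
```

Building the commands separately from running them makes it easy to review exactly what will change, and `reset_clock_commands` gives you a quick way back to the defaults.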

5. Idle Power Management: Snooze When You Can

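GPUs consume power even when they're idle. Make sure power management is working so that GPUs drop into a low-power state when not actively used: NVIDIA cards normally fall back to an idle performance state (typically P8) on their own, and nvidia-smi shows which state each card is in. Background processes that keep the GPU busy can prevent this. This is like turning off the lights in an empty room to save energy.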
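At the application level, a serving process can go a step further and release the model after a period of inactivity, so it stops holding VRAM and stops touching the GPU. A minimal sketch of that idle-timeout logic follows; `IdleUnloader` is a hypothetical helper, and the `load`/`unload` callbacks stand in for whatever your inference engine uses. Whether this saves measurable power on your setup is worth verifying with nvidia-smi.

```python
import time
from typing import Callable


class IdleUnloader:
    """Hypothetical helper: unload a model after `idle_seconds` without requests."""

    def __init__(self, idle_seconds: float,
                 load: Callable[[], None], unload: Callable[[], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.idle_seconds = idle_seconds
        self._load = load
        self._unload = unload
        self._clock = clock
        self._last_request = clock()
        self.loaded = True  # assumes the model is already loaded at start-up

    def on_request(self) -> None:
        """Call at the start of every request; reloads the model if it was unloaded."""
        if not self.loaded:
            self._load()
            self.loaded = True
        self._last_request = self._clock()

    def tick(self) -> bool:
        """Call periodically (e.g. once a minute); returns True if it unloaded the model."""
        if self.loaded and self._clock() - self._last_request >= self.idle_seconds:
            self._unload()
            self.loaded = False
            return True
        return False
```

The injectable `clock` exists so the timing logic can be tested without actually waiting; the trade-off of unloading is that the first request after an idle stretch pays the model load time.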

6. Cooling: Keep Things Cool

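Heat is the enemy of efficiency. A hot GPU wastes more power as leakage current, and once it reaches its thermal limit it throttles its clocks and slows down. Proper cooling keeps your GPUs at their optimal temperature, leading to a longer lifespan and lower power consumption for the same work. Think of it like a runner on a hot day: once they overheat, they slow down and burn more energy to cover the same distance.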
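To confirm your cooling is doing its job, you can poll temperature, power draw, and SM clock with nvidia-smi's query mode and flag cards running hot. The 80 C threshold below is a hypothetical example, not an NVIDIA specification; a temperature near your card's limit combined with falling clocks under a steady load is the pattern that suggests throttling.

```python
import csv
import io
import subprocess
from typing import Any, Dict, List, Optional

QUERY = ["nvidia-smi",
         "--query-gpu=index,temperature.gpu,power.draw,clocks.sm",
         "--format=csv,noheader,nounits"]


def _number(text: str) -> Optional[float]:
    """nvidia-smi reports unsupported fields in brackets (e.g. '[N/A]'); map those to None."""
    text = text.strip()
    return None if text.startswith("[") else float(text)


def parse_gpu_stats(csv_text: str) -> List[Dict[str, Any]]:
    """Parse one CSV row per GPU: index, temperature (C), power (W), SM clock (MHz)."""
    stats = []
    for row in csv.reader(io.StringIO(csv_text)):
        if not row:
            continue
        index, temp, power, sm_clock = row
        stats.append({"index": int(index), "temp_c": _number(temp),
                      "power_w": _number(power), "sm_mhz": _number(sm_clock)})
    return stats


def read_gpu_stats() -> List[Dict[str, Any]]:
    """Run the query; requires a machine with NVIDIA drivers installed."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_stats(result.stdout)


def hot_gpus(stats: List[Dict[str, Any]], limit_c: float = 80.0) -> List[int]:
    """Indices of GPUs at or above a (hypothetical) temperature threshold."""
    return [s["index"] for s in stats
            if s["temp_c"] is not None and s["temp_c"] >= limit_c]
```

On the rig itself you would call `hot_gpus(read_gpu_stats())` periodically, for example from a cron job or alongside the serving process, and log the results next to your token throughput.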

7. Batching: Group It Up

Instead of processing requests individually, batch them up and process them in groups. During generation, each pass over the model's weights can then serve several requests at once, which raises total tokens per second without a matching rise in power draw. The trade-off is that an individual request may wait a little longer. It's like sending one large delivery truck instead of multiple small ones: you get things done faster and more efficiently with less energy.
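A common way to batch is a short collection window: wait for the first request, then keep gathering until the batch is full or a small deadline passes. The sketch below shows that loop with Python's standard `queue` module. `collect_batch` is a hypothetical helper, and the batch size and wait time are tuning knobs you would choose by measuring, not recommended values. Each returned batch would then go to your inference engine in a single call.

```python
import queue
import time
from typing import Any, List


def collect_batch(requests: queue.Queue, max_batch_size: int, max_wait_s: float) -> List[Any]:
    """Block for the first request, then gather more until the batch is full or max_wait_s passes."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    batch = [requests.get()]  # wait as long as needed for the first request
    deadline = time.monotonic() + max_wait_s
    while len(batch) < max_batch_size:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break
        try:
            batch.append(requests.get(timeout=remaining))
        except queue.Empty:
            break
    return batch


# Example: five queued requests, batches of at most four, 50 ms collection window.
pending = queue.Queue()
for i in range(5):
    pending.put(f"request-{i}")
print(collect_batch(pending, max_batch_size=4, max_wait_s=0.05))
print(collect_batch(pending, max_batch_size=4, max_wait_s=0.05))
```

A longer window produces fuller batches (better throughput per watt) at the cost of added latency for the first request in each batch.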

Performance and Power Consumption Comparison

Let's take a look at the differences between the Llama 3 8B and Llama 3 70B models on our trusted NVIDIA RTX 4000 Ada 20GB x4 setup, showcasing the impact of model size and precision.

| Model | Precision | Token Generation Speed (tokens/second) | Prompt Processing Speed (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 56.14 | 3369.24 |
| Llama 3 8B | F16 | 20.58 | 4366.64 |
| Llama 3 70B | Q4_K_M | 7.33 | 306.44 |
| Llama 3 70B | F16 | N/A | N/A |

Observations:

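- Model Size: The Llama 3 70B model, being significantly larger than Llama 3 8B, is much slower: at Q4_K_M it generates tokens about 7.7 times more slowly (7.33 vs 56.14 tokens/second) and processes prompts about 11 times more slowly (306.44 vs 3369.24 tokens/second).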

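- Precision: For the 8B model, Q4_K_M nearly triples token generation speed compared to F16 (56.14 vs 20.58 tokens/second), which likely means less energy per generated token. Prompt processing tells a different story: F16 is actually faster there (4366.64 vs 3369.24 tokens/second), most likely because that phase is limited by compute rather than memory traffic, and quantized weights add unpacking work.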

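- Missing Data: No F16 results are available for Llama 3 70B on this setup, most likely because the weights alone would need roughly 140 GB (70 billion parameters at 2 bytes each), well beyond the 80 GB of combined VRAM on four 20GB cards.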
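A back-of-the-envelope check shows why F16 is the hard case for the 70B model on this rig: weight memory is roughly parameter count times bits per weight divided by 8. The sketch counts weights only (the KV cache and runtime buffers need more on top), uses rounded parameter counts, and treats Q4_K_M's average of about 4.8 bits per weight as an approximation rather than an exact figure.

```python
GB = 1e9
TOTAL_VRAM_GB = 4 * 20  # four 20GB cards

# ASSUMED average bits per weight: F16 is exactly 16; Q4_K_M mixes
# quantization types, and roughly 4.8 bits is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

# Rounded parameter counts (assumption: the real counts are slightly higher).
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / GB


for model, params in PARAMS.items():
    for precision, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(params, bits)
        verdict = "fits in" if size < TOTAL_VRAM_GB else "exceeds"
        print(f"{model} {precision}: ~{size:.0f} GB of weights, {verdict} {TOTAL_VRAM_GB} GB total")
```

Under these assumptions the 70B model needs about 42 GB at Q4_K_M but about 140 GB at F16, which is consistent with the F16 row being empty.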

Key Takeaway: The choice of model size and precision heavily influences power consumption. Choosing the smallest model that meets your needs, at a lower precision such as Q4_K_M, can lead to substantial savings in energy per generated token.

FAQ: Unlocking the AI Mysteries

What are LLMs?

LLMs are large language models, a type of AI that excels in understanding and generating human-like text. They are trained on vast amounts of data, allowing them to perform complex tasks like translation, writing different types of creative content, and answering your questions in a conversational way.

Is running LLMs on my own hardware expensive?

The cost of running LLMs on your own hardware depends on your setup, the LLM you are using, and how often you run it. You can keep costs down by adopting the tips outlined in this article, such as using lower precision, optimizing your GPU setup, and managing your usage patterns.

What kind of GPU is best for running LLMs?

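GPUs with high memory capacity (like 20GB or more) and high compute power are ideal for running LLMs, and memory bandwidth matters too, since it largely determines token generation speed. The RTX 4000 Ada used in this article pairs 20GB of memory and fourth-generation Tensor Cores with a relatively low power budget, which makes it an attractive choice for power-conscious setups.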

Can I run multiple LLMs on my hardware?

Yes, you can run multiple LLMs simultaneously, as long as their combined weights and working memory fit in your GPUs' VRAM (80GB in total on this four-card setup) and your GPUs can keep up with the combined workload.

How do I choose the right LLM for my needs?

The best LLM for you depends on your requirements. If you need a model for specific tasks like translation or code generation, look for models specialized in those areas. If you want a more general-purpose LLM, explore models like Llama 3 (benchmarked in this article), Llama 2, and BLOOM.
