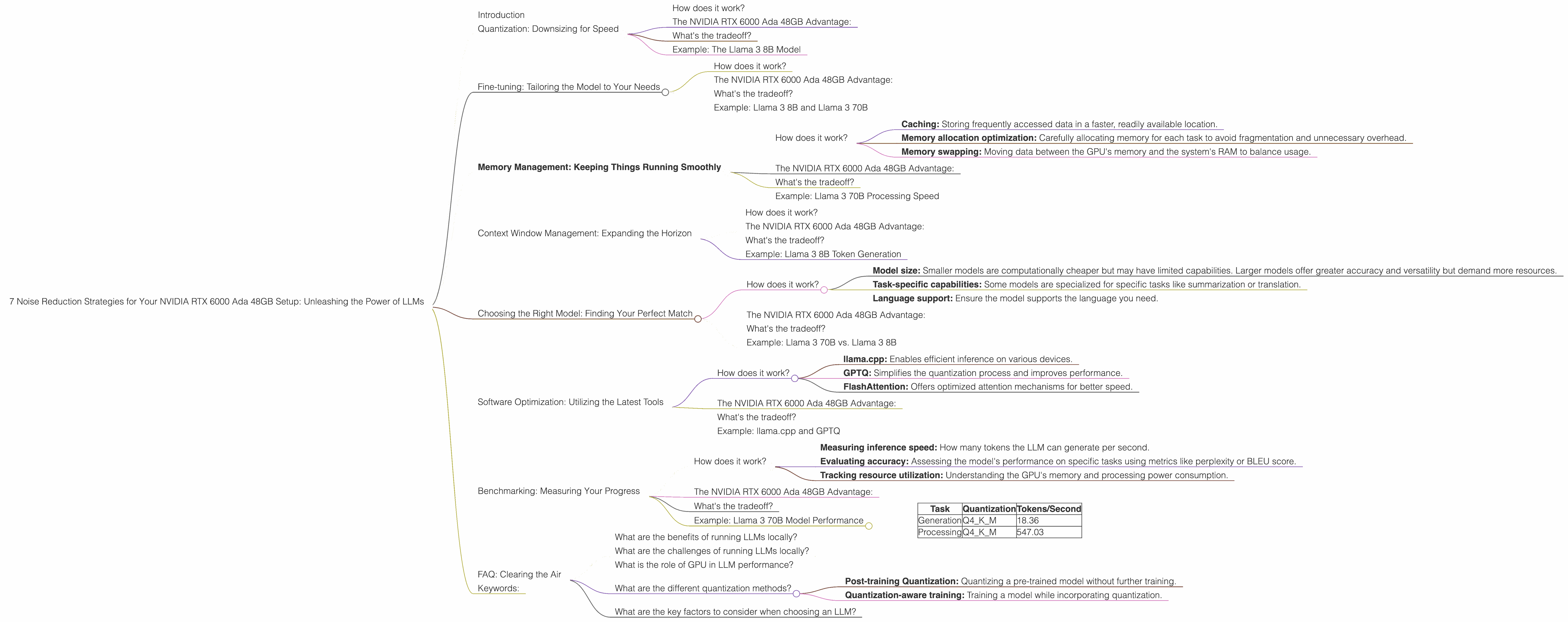

7 Noise Reduction Strategies for Your NVIDIA RTX 6000 Ada 48GB Setup

Introduction

Welcome to the exciting world of Large Language Models (LLMs)! Imagine a computer that understands your questions and provides coherent, insightful answers. That's the magic of LLMs, and they're revolutionizing how we interact with technology.

But harnessing the power of LLMs isn't always a smooth ride. Running these models locally requires a powerful setup, and getting optimal performance out of your NVIDIA RTX 6000 Ada 48GB can be a bit of a challenge. Don't worry, we've got you covered!

This article dives deep into the intricate world of LLM optimization, providing practical strategies to boost your NVIDIA RTX 6000 Ada 48GB's performance. We'll explore the art of noise reduction, delving into various techniques, and providing real-world benchmarks. Ready to unleash the full potential of your LLM setup? Let's get started!

Quantization: Downsizing for Speed

Think of quantization as a diet for your LLM. It's about slimming down the model's size without sacrificing too much accuracy.

How does it work?

Instead of storing every weight as a 32-bit or 16-bit floating-point number, quantization uses smaller data types such as 8-bit or even 4-bit integers. This shrinks the memory footprint and the amount of data that has to be read for each generated token, making inference faster.
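To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is a simplified illustration, not the scheme used by any particular format: real formats typically quantize weights in small blocks with per-block scales. The function names and sample weights are made up for the example.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Recover approximate floats from the stored integers and the shared scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -1.27, 0.004]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("quantized:", q)
print("max round-trip error:", max_error)
```

Each weight now needs one byte plus a shared scale instead of four bytes, and the small round-trip error is the accuracy cost discussed in the tradeoff below.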

The NVIDIA RTX 6000 Ada 48GB Advantage:

Your powerful GPU offers a significant benefit when employing quantization. Smaller weights mean less data to read from memory for each token, which is where much of the speedup comes from, and the 48GB of memory lets the RTX 6000 Ada hold quantized versions of models that would not fit at full precision.

What's the tradeoff?

While quantization boosts speed, it might slightly impact accuracy. The extent of this impact depends on the model's architecture and the quantization method used.

Example: The Llama 3 8B Model

We'll use the Llama 3 8B model as an example. On your RTX 6000 Ada 48GB, quantizing this model to Q4_K_M (a popular 4-bit format) results in a token generation speed of 130.99 tokens/second. That is roughly 2.5 times faster than running the model at F16 precision, which clocks in at 51.97 tokens/second.
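The arithmetic behind that comparison can be sketched in a few lines. The two throughput figures come from the example above; the round 8 billion parameter count and the average of 4.5 bits per weight for the 4-bit format are assumptions for illustration, since 4-bit formats store scaling factors alongside the weights and the exact average varies.

```python
F16_TOKENS_PER_S = 51.97   # from the example above
Q4_TOKENS_PER_S = 130.99   # from the example above

N_PARAMS = 8e9             # assumption: nominal parameter count for an "8B" model
ASSUMED_Q4_BITS = 4.5      # assumption: average bits per weight including scales


def weight_footprint_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


speedup = Q4_TOKENS_PER_S / F16_TOKENS_PER_S
f16_gb = weight_footprint_gb(N_PARAMS, 16)
q4_gb = weight_footprint_gb(N_PARAMS, ASSUMED_Q4_BITS)

print(f"speedup from quantization: {speedup:.2f}x")
print(f"approx. F16 weights: {f16_gb:.1f} GB")
print(f"approx. 4-bit weights: {q4_gb:.1f} GB")
```

The estimate covers weights only. Runtime memory use is higher once the context cache and working buffers are included.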

Fine-tuning: Tailoring the Model to Your Needs

Think of fine-tuning as giving your LLM a personal training session. You're customizing the model's parameters to excel at a specific task, leading to better performance and more relevant outputs.

How does it work?

Fine-tuning involves modifying the model's weights based on a new dataset specific to your desired task. This process helps the LLM adapt and become more accurate in its specific application.
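The core loop is easier to see on a toy model. The sketch below starts from "pretrained" parameters of a one-variable linear model and nudges them with gradient descent on a small task-specific dataset. Everything here (the model, the data, the learning rate) is hypothetical and only illustrates the mechanism. Real LLM fine-tuning relies on a training framework and often on parameter-efficient methods.

```python
def predict(w, b, x):
    return w * x + b


def mean_squared_error(w, b, data):
    return sum((predict(w, b, x) - y) ** 2 for x, y in data) / len(data)


def fine_tune(w, b, data, learning_rate=0.05, epochs=200):
    """Update the starting weights with per-example gradient descent steps."""
    for _ in range(epochs):
        for x, y in data:
            error = predict(w, b, x) - y
            # Gradients of 0.5 * error**2 with respect to w and b.
            w -= learning_rate * error * x
            b -= learning_rate * error
    return w, b


# Hypothetical "pretrained" parameters and a new task where y = 2x + 1.
pretrained_w, pretrained_b = 1.0, 0.0
task_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

loss_before = mean_squared_error(pretrained_w, pretrained_b, task_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)
loss_after = mean_squared_error(tuned_w, tuned_b, task_data)

print(f"loss before: {loss_before:.3f}, loss after: {loss_after:.6f}")
```

The pattern is the same at LLM scale: start from existing weights, measure the error on task data, and adjust the weights to reduce it.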

The NVIDIA RTX 6000 Ada 48GB Advantage:

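Your RTX 6000 Ada 48GB's substantial memory and processing power are valuable for fine-tuning. Keep in mind that training needs considerably more memory than inference, because gradients and optimizer state are stored alongside the weights. A model like Llama 3 8B is a realistic target on a single card, especially with parameter-efficient methods, while a 70B model generally calls for quantized, parameter-efficient approaches or multiple GPUs. The GPU's parallel processing capabilities also speed up the intensive training process.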

What's the tradeoff?

Fine-tuning requires a substantial amount of data and computational resources. It may also require specialized expertise to ensure the fine-tuned model maintains accuracy and avoids overfitting.
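A back-of-the-envelope estimate shows how quickly those resource needs grow. The sketch below uses a common rule of thumb for full fine-tuning with mixed precision and the Adam optimizer, about 16 bytes per parameter. That figure is an assumption, it ignores activation memory, and the parameter counts are nominal round numbers.

```python
# Assumption: 2 bytes (16-bit weights) + 2 bytes (16-bit gradients)
# + 12 bytes (32-bit master weights, Adam momentum, Adam variance).
ASSUMED_BYTES_PER_PARAM = 16
GPU_MEMORY_GB = 48


def full_fine_tune_memory_gb(n_params, bytes_per_param=ASSUMED_BYTES_PER_PARAM):
    """Rough memory for weights, gradients and optimizer state (no activations)."""
    return n_params * bytes_per_param / 1e9


for name, n_params in [("8B model", 8e9), ("70B model", 70e9)]:
    needed = full_fine_tune_memory_gb(n_params)
    verdict = "fits" if needed <= GPU_MEMORY_GB else "does not fit"
    print(f"{name}: ~{needed:.0f} GB, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions even the smaller model exceeds a single card for full fine-tuning, which is why approaches that train only a small set of extra parameters are popular on workstation GPUs.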

Example: Llama 3 8B and Llama 3 70B

Let's consider two models, Llama 3 8B and Llama 3 70B, on your RTX 6000 Ada 48GB. Fine-tuning Llama 3 8B for a specific task can significantly improve its results on that task, and it is far cheaper in memory and time than fine-tuning the 70B model, which makes it the sensible place to start.

Memory Management: Keeping Things Running Smoothly

Memory management is crucial for preventing bottlenecks and ensuring your LLM runs smoothly. Imagine your GPU's memory as a bustling city, and the LLM model is a large-scale event happening there. Effective traffic management is essential to avoid congestion!

How does it work?

Efficient memory management involves techniques like:

- Caching: Storing frequently accessed data in a faster, readily available location.

- Memory allocation optimization: Carefully allocating memory for each task to avoid fragmentation and unnecessary overhead.

- Memory swapping: Moving data between the GPU's memory and the system's RAM to balance usage.
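The swapping idea can be sketched as a simple planning step: decide how many of a model's layers stay in GPU memory and how many spill over to system RAM. The function below is a hypothetical helper, and the layer count, per-layer size and reserved headroom are made-up numbers. Inference tools such as llama.cpp expose a comparable setting for the number of layers placed on the GPU.

```python
def plan_gpu_layers(n_layers, layer_size_gb, vram_gb, reserved_gb):
    """Return (layers_on_gpu, layers_in_system_ram) for a given memory budget.

    reserved_gb is headroom kept free for the context cache and working buffers.
    """
    if layer_size_gb <= 0:
        raise ValueError("layer_size_gb must be positive")
    usable_gb = max(0.0, vram_gb - reserved_gb)
    gpu_layers = min(n_layers, int(usable_gb // layer_size_gb))
    return gpu_layers, n_layers - gpu_layers


# Hypothetical model: 80 layers of 0.5 GB each, with 6 GB kept in reserve.
on_48gb = plan_gpu_layers(n_layers=80, layer_size_gb=0.5, vram_gb=48, reserved_gb=6)
on_24gb = plan_gpu_layers(n_layers=80, layer_size_gb=0.5, vram_gb=24, reserved_gb=6)

print("48 GB card (gpu, ram):", on_48gb)
print("24 GB card (gpu, ram):", on_24gb)
```

With these illustrative numbers the 48GB card keeps every layer on the GPU, while a 24GB card would push more than half of them to system RAM, where access is much slower.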

The NVIDIA RTX 6000 Ada 48GB Advantage:

Your RTX 6000 Ada 48GB boasts a generous 48GB of memory, offering ample space for your LLM model and supporting data. Keeping everything in GPU memory avoids transfers between the GPU and system RAM, which are much slower than on-card memory access.

What's the tradeoff?

Implementing advanced memory management techniques can sometimes incur a slight performance overhead, but the gains in stability and overall performance are usually worth it.

Example: Llama 3 70B Processing Speed

Consider the Llama 3 70B model, which requires a significant amount of memory. When running on your RTX 6000 Ada 48GB with Q4_K_M quantization, it achieves a prompt processing speed of 547.03 tokens/second. A model of this size leaves little memory to spare, which is why efficient memory management matters.

Context Window Management: Expanding the Horizon

The context window of an LLM is its short-term memory, determining how much text it can process at once. Imagine it as the limited space on a notepad. The size of this window directly affects the model's ability to understand long sequences of text.

How does it work?

Context window management is about adjusting this window size based on the specific task. Larger windows facilitate handling longer texts but demand more memory and computational resources.
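The memory cost of a larger window comes mostly from the key/value cache, which grows linearly with context length. Here is a rough sketch of the usual formula. The architecture numbers used for the 8B and 70B models (layer count, key/value heads, head dimension) are assumptions based on commonly published configurations, and the cache is assumed to be stored in 16-bit precision.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len, bytes_per_value=2):
    """Size of the key/value cache for one sequence.

    The leading factor of 2 accounts for storing both keys and values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


GIB = 2**30

# Assumed configurations: (layers, key/value heads, head dimension).
ASSUMED_CONFIGS = {
    "8B": (32, 8, 128),
    "70B": (80, 8, 128),
}

for name, (layers, kv_heads, head_dim) in ASSUMED_CONFIGS.items():
    for context_len in (8192, 32768):
        size_gib = kv_cache_bytes(layers, kv_heads, head_dim, context_len) / GIB
        print(f"{name} model, {context_len} tokens: {size_gib:.2f} GiB")
```

Quadrupling the context length quadruples the cache, so the headroom left after loading the weights largely determines how long a window you can afford.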

The NVIDIA RTX 6000 Ada 48GB Advantage:

Your RTX 6000 Ada 48GB's ample memory allows you to use larger context windows for your model, effectively handling longer sequences of text.

What's the tradeoff?

Increasing the context window size can significantly increase memory consumption and slow down inference speed, especially with larger models.

Example: Llama 3 8B Token Generation

In the case of the Llama 3 8B model on your RTX 6000 Ada 48GB, the Q4_K_M quantization achieves a token generation speed of 130.99 tokens/second. Because the quantized weights occupy only a fraction of the 48GB, most of the memory remains available for a larger context window, so you can handle longer sequences of text while keeping generation fast.

Choosing the Right Model: Finding Your Perfect Match

The LLM landscape is vast and diverse, with various models designed for different purposes. Selecting the right model for your task is crucial for optimal performance and accurate results.

How does it work?

Consider the following factors when choosing an LLM:

- Model size: Smaller models are computationally cheaper but may have limited capabilities. Larger models offer greater accuracy and versatility but demand more resources.

- Task-specific capabilities: Some models are specialized for specific tasks like summarization or translation.

- Language support: Ensure the model supports the language you need.

The NVIDIA RTX 6000 Ada 48GB Advantage:

Your RTX 6000 Ada 48GB's considerable memory capacity lets you run larger models, such as a quantized Llama 3 70B, entirely on the GPU, while smaller models like Llama 3 8B run with plenty of memory to spare.

What's the tradeoff?

Using a larger model may mean sacrificing some speed for enhanced accuracy.

Example: Llama 3 70B vs. Llama 3 8B

Choosing between models like Llama 3 70B and Llama 3 8B depends on your specific needs. While the Llama 3 70B model showcases impressive capabilities due to its size, it requires more computational resources and memory. The Llama 3 8B model offers a good balance of size and performance and requires less memory.

Software Optimization: Utilizing the Latest Tools

Software tools and libraries can make a significant difference in achieving optimal performance from your LLM setup. Think of them as the tools that streamline the entire workflow for your LLM.

How does it work?

Leveraging libraries like:

- llama.cpp: Enables efficient inference on various devices.

- GPTQ: A post-training quantization method that compresses model weights to low bit widths.

- FlashAttention: Offers optimized attention mechanisms for better speed.

The NVIDIA RTX 6000 Ada 48GB Advantage:

Libraries like llama.cpp, GPTQ implementations, and FlashAttention are built to take advantage of the CUDA cores and Tensor Cores in GPUs like your RTX 6000 Ada 48GB.

What's the tradeoff?

Staying up-to-date with the latest software releases and optimizing your code can require some effort and technical expertise.

Example: llama.cpp with Q4_K_M Quantization

Running the Llama 3 8B model with Q4_K_M quantization achieves a token generation speed of 130.99 tokens/second on your RTX 6000 Ada 48GB, compared with 51.97 tokens/second at F16. This demonstrates the impact of utilizing the right software tools.

Benchmarking: Measuring Your Progress

Benchmarking is essential for understanding your LLM setup's performance and identifying areas for improvement. Like a performance tracker for your LLM, it provides valuable insights into its speed and accuracy.

How does it work?

Benchmarking involves:

- Measuring inference speed: How many tokens the LLM can generate per second.

- Evaluating accuracy: Assessing the model's performance on specific tasks using metrics like perplexity or BLEU score.

- Tracking resource utilization: Understanding the GPU's memory and processing power consumption.
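The first item, inference speed, can be measured with a small timing harness like the sketch below. The `generate` callable is a placeholder for whatever inference call your setup provides, and `fake_generate` is a stand-in that simply sleeps, so the printed number is not a real benchmark result.

```python
import time


def measure_tokens_per_second(generate, prompt, n_tokens, runs=3):
    """Average generation throughput over several runs.

    generate(prompt, n_tokens) must return the list of generated tokens.
    """
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        produced = generate(prompt, n_tokens)
        elapsed = time.perf_counter() - start
        rates.append(len(produced) / elapsed)
    return sum(rates) / len(rates)


def fake_generate(prompt, n_tokens):
    """Hypothetical stand-in for a model call: 1 ms of simulated work per token."""
    time.sleep(0.001 * n_tokens)
    return ["tok"] * n_tokens


rate = measure_tokens_per_second(fake_generate, "Hello", n_tokens=20)
print(f"{rate:.1f} tokens/second (simulated)")
```

Averaging over several runs smooths out warm-up effects. For real measurements, keep the prompt length and output length fixed between runs so the results stay comparable.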

The NVIDIA RTX 6000 Ada 48GB Advantage:

Your RTX 6000 Ada 48GB's powerful capabilities provide a solid foundation for benchmarking, enabling comprehensive assessment of your LLM's performance.

What's the tradeoff?

Setting up benchmarking tools and interpreting the results can require some technical skills.

Example: Llama 3 70B Model Performance

Llama 3 70B Model Performance on NVIDIA RTX 6000 Ada 48GB

| Task | Quantization | Tokens/Second |
|---|---|---|
| Generation | Q4_K_M | 18.36 |
| Processing | Q4_K_M | 547.03 |

These figures showcase the potential of your RTX 6000 Ada 48GB for handling large-scale models like the Llama 3 70B.
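To turn these two rates into something you can feel, the sketch below estimates the response time for a single request. The request size (2,000 prompt tokens, 500 generated tokens) is a hypothetical example, and the calculation assumes both rates stay constant regardless of context length, which is a simplification.

```python
PROMPT_TOKENS_PER_S = 547.03      # "Processing" row in the table above
GENERATION_TOKENS_PER_S = 18.36   # "Generation" row in the table above


def estimated_latency_s(prompt_tokens, output_tokens,
                        prompt_rate=PROMPT_TOKENS_PER_S,
                        generation_rate=GENERATION_TOKENS_PER_S):
    """Time to read the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / prompt_rate + output_tokens / generation_rate


# Hypothetical request: a 2,000-token prompt and a 500-token answer.
prompt_time = 2000 / PROMPT_TOKENS_PER_S
total_time = estimated_latency_s(2000, 500)

print(f"prompt processing: {prompt_time:.1f} s")
print(f"total: {total_time:.1f} s")
```

Under these assumptions, generation dominates the total time: reading the prompt takes a few seconds, while producing the answer takes most of the rest.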

FAQ: Clearing the Air

What are the benefits of running LLMs locally?

Running LLMs locally offers greater privacy and control, and can reduce response times by avoiding network round trips to cloud-based models.

What are the challenges of running LLMs locally?

Local LLM setups require specialized hardware and expertise. Additionally, fine-tuning and managing large models can be computationally demanding.

What is the role of GPU in LLM performance?

GPUs excel at parallel processing, making them ideal for handling the intensive computations involved in LLM inference. Their large amount of memory also allows for storing large model files and supporting data.

What are the different quantization methods?

Common quantization methods include:

- Post-training Quantization: Quantizing a pre-trained model without further training.

- Quantization-aware training: Training a model while incorporating quantization.

What are the key factors to consider when choosing an LLM?

Choose an LLM based on its size, task-specific capabilities, language support, and your available resources.
