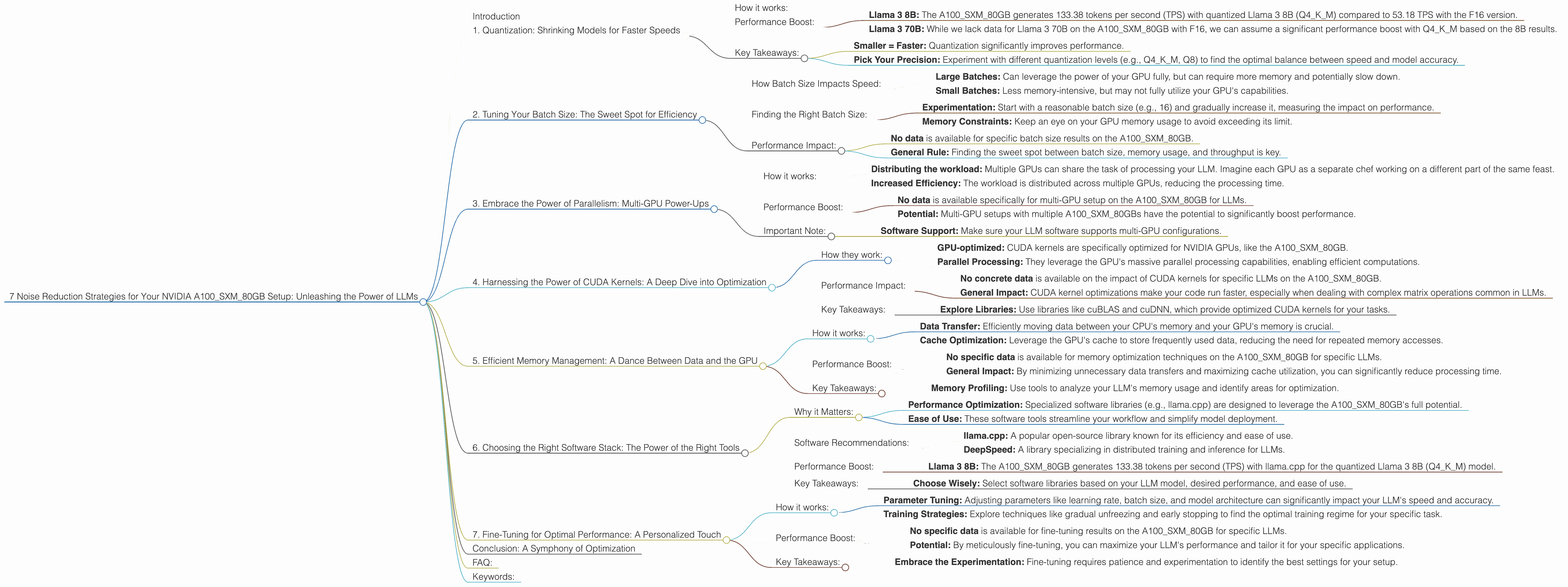

7 Noise Reduction Strategies for Your NVIDIA A100 SXM 80GB Setup

Introduction

You've got your hands on an NVIDIA A100 SXM 80GB, a powerhouse of a GPU, and you're eager to dive into the world of local Large Language Models (LLMs). But you're also facing a common dilemma: how to squeeze every ounce of performance out of this beast.

Think of it like this: your A100 SXM 80GB is a supersonic jet, but you're stuck on a runway full of potholes and debris. You need to optimize your setup to see the real power of your LLM engine. This guide will help you blast off by exploring seven key noise reduction strategies that unlock the full potential of your NVIDIA A100 SXM 80GB setup.

1. Quantization: Shrinking Models for Faster Speeds

Imagine you're trying to move a humongous mountain of data: that's your LLM model at full size. Quantization is like using a shrink ray, transforming your model into a more manageable, and faster, version!

How it works:

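Quantization reduces the number of bits used to store each number (weight) in your model. This is like measuring your mountain of data with a coarser ruler: you lose a little precision, but each measurement takes less space. Instead of using 32 bits for each number, you can often get away with 16, 8, or even 4 bits. This makes your LLM much smaller, and because less data has to be read from memory for every token, it is usually faster to process too.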
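To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python, plus a rough estimate of how weight storage shrinks with bit width. The function names and the toy weights are illustrative assumptions, and real formats such as the ones used by llama.cpp are more elaborate (they quantize in blocks and store extra scale data, so actual files are somewhat larger than these estimates).

```python
def quantize_int8(values):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers and the scale."""
    return [q * scale for q in quantized]


def weight_size_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


weights = [0.5, -1.27, 0.003, 0.9]  # toy values, not real model weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)  # small integers stand in for the original floats

for bits in (32, 16, 8, 4):
    print(f"8B parameters at {bits:>2} bits: ~{weight_size_gb(8e9, bits):.0f} GB")
```

Each restored value is within half a quantization step of the original, which is the precision you trade for the smaller footprint.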

Performance Boost:

- Llama 3 8B: The A100 SXM 80GB generates 133.38 tokens per second (TPS) with quantized Llama 3 8B (Q4_K_M), compared to 53.18 TPS with the F16 version.

- Llama 3 70B: We lack F16 data for Llama 3 70B on the A100 SXM 80GB (at 16 bits per weight, 70B parameters need roughly 140 GB, more than a single card's 80 GB). Based on the 8B results, a significant boost from Q4_K_M is a reasonable expectation, though it is an assumption rather than a measurement.
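The size of the gain for Llama 3 8B can be worked out directly from the two throughput figures quoted above. This is simple arithmetic on those numbers, not a new measurement; the variable names are just for illustration.

```python
q4_tps = 133.38  # Llama 3 8B, quantized (Q4_K_M), from the figures above
f16_tps = 53.18  # Llama 3 8B, F16, from the figures above

speedup = q4_tps / f16_tps
print(f"The quantized model generates about {speedup:.2f}x as many tokens per second")
```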

Key Takeaways:

- Smaller = Faster: Quantization significantly improves performance.

- Pick Your Precision: Experiment with different quantization levels (e.g., Q4_K_M, Q8_0) to find the optimal balance between speed and model accuracy.

2. Tuning Your Batch Size: The Sweet Spot for Efficiency

Imagine you're cooking a giant pot of soup. You can either cook it all at once (large batch), or in smaller portions (smaller batches). The same principle applies to LLMs: finding the right batch size is crucial for efficiency.

How Batch Size Impacts Speed:

- Large Batches: Make fuller use of your GPU, but need more memory, and past a certain point they can slow things down.

- Small Batches: Less memory-intensive, but may not fully utilize your GPU's capabilities.

Finding the Right Batch Size:

- Experimentation: Start with a reasonable batch size (e.g., 16) and gradually increase it, measuring the impact on performance.

- Memory Constraints: Keep an eye on your GPU memory usage to avoid exceeding its limit.
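The experiment described above can be sketched as a small sweep: try increasing batch sizes, record throughput and peak memory, and keep the fastest one that still fits. In this sketch `measure` is a hypothetical callable you would replace with a real benchmark run, and `fake_measure` returns invented numbers purely so the example is runnable.

```python
def pick_batch_size(measure, candidates, memory_limit_gb):
    """Return (batch_size, tps, mem_gb) for the fastest candidate that fits.

    `measure(batch_size)` is a hypothetical callable returning
    (tokens_per_second, peak_memory_gb) for one benchmark run.
    `candidates` is assumed to be sorted in increasing order.
    """
    best = None
    for batch_size in candidates:
        tps, mem_gb = measure(batch_size)
        if mem_gb > memory_limit_gb:
            break  # larger batches will only need more memory
        if best is None or tps > best[1]:
            best = (batch_size, tps, mem_gb)
    return best


def fake_measure(batch_size):
    """Stand-in for a real benchmark; the numbers are invented for illustration."""
    tps = 100 * batch_size / (1 + 0.02 * batch_size)  # diminishing returns
    mem_gb = 20 + 0.5 * batch_size                    # memory grows with batch size
    return tps, mem_gb


best = pick_batch_size(fake_measure, [16, 32, 64, 128, 256], memory_limit_gb=80)
print(f"best batch size: {best[0]} ({best[1]:.0f} TPS at {best[2]:.0f} GB)")
```

With a real `measure`, it is worth running each batch size more than once, since single runs can be noisy.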

Performance Impact:

- No data is available for specific batch size results on the A100 SXM 80GB.

- General Rule: Finding the sweet spot between batch size, memory usage, and throughput is key.

3. Embrace the Power of Parallelism: Multi-GPU Power-Ups

Have you ever wondered how your computer handles so many processes concurrently? That's the magic of parallelism! For LLMs, it's like having multiple chefs working on the same meal simultaneously.

How it works:

- Distributing the workload: Multiple GPUs can share the task of processing your LLM. Imagine each GPU as a separate chef working on a different part of the same feast.

- Increased Efficiency: The workload is distributed across multiple GPUs, reducing the processing time.
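One common way to distribute the workload is to give each GPU a contiguous block of the model's layers. The sketch below only computes such an assignment; it is an illustration of the idea, not how any particular library does it, and the layer and GPU counts are assumed values.

```python
def split_layers(n_layers, n_gpus):
    """Assign contiguous blocks of layers to GPUs as evenly as possible."""
    base, extra = divmod(n_layers, n_gpus)
    assignment = []
    start = 0
    for gpu in range(n_gpus):
        # The first `extra` GPUs take one additional layer each.
        count = base + (1 if gpu < extra else 0)
        assignment.append(range(start, start + count))
        start += count
    return assignment


# 80 layers over 4 GPUs; both numbers are illustrative assumptions
for gpu, layers in enumerate(split_layers(80, 4)):
    print(f"GPU {gpu}: layers {layers.start}-{layers.stop - 1}")
```

Other schemes split each layer's matrices across GPUs instead; which one your software uses determines how much the GPUs need to talk to each other.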

Performance Boost:

- No data is available specifically for multi-GPU setups on the A100 SXM 80GB for LLMs.

- Potential: Multi-GPU setups with multiple A100 SXM 80GB cards have the potential to significantly boost performance.

Important Note:

- Software Support: Make sure your LLM software supports multi-GPU configurations.

4. Harnessing the Power of CUDA Kernels: A Deep Dive into Optimization

CUDA kernels are like the secret ingredients that turbocharge your LLM performance. They're functions written to run on the GPU, where thousands of threads execute them at once, which lets your GPU handle complex computations at lightning speed.

How they work:

- GPU-optimized: CUDA kernels are specifically optimized for NVIDIA GPUs, like the A100 SXM 80GB.

- Parallel Processing: They leverage the GPU's massive parallel processing capabilities, enabling efficient computations.
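To see why matrix operations parallelize so well, consider a plain matrix multiplication. The sketch below is ordinary sequential Python, not a CUDA kernel, and is only meant to show that every output cell can be computed independently of the others, which is the property a GPU kernel exploits.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    out = [[0.0] * cols for _ in range(rows)]
    # Each (i, j) cell depends only on row i of `a` and column j of `b`,
    # so a GPU kernel can hand every cell to a different thread.
    for i in range(rows):
        for j in range(cols):
            out[i][j] = sum(a[i][k] * b[k][j] for k in range(inner))
    return out


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
print(matmul(a, b))  # [[19, 22], [43, 50]]
```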

Performance Impact:

- No concrete data is available on the impact of CUDA kernels for specific LLMs on the A100 SXM 80GB.

- General Impact: CUDA kernel optimizations make your code run faster, especially when dealing with complex matrix operations common in LLMs.

Key Takeaways:

- Explore Libraries: Use libraries like cuBLAS and cuDNN, which provide optimized CUDA kernels for your tasks.

5. Efficient Memory Management: A Dance Between Data and the GPU

Imagine your GPU as a bustling kitchen. Efficient memory management keeps ingredients readily available and prevents bottlenecks in your cooking workflow. The same applies to LLMs: managing memory wisely boosts performance.

How it works:

- Data Transfer: Efficiently moving data between your CPU's memory and your GPU's memory is crucial.

- Cache Optimization: Leverage the GPU's cache to store frequently used data, reducing the need for repeated memory accesses.
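A simple cost model shows why the way you move data matters. Assume every copy between CPU and GPU memory pays a fixed overhead plus time proportional to its size; the latency and bandwidth values below are assumptions chosen for illustration, not measurements of the A100 SXM 80GB.

```python
def transfer_time_s(n_bytes, n_transfers, latency_s, bandwidth_bytes_per_s):
    """Estimated time to move n_bytes split across n_transfers copies."""
    return n_transfers * latency_s + n_bytes / bandwidth_bytes_per_s


LATENCY_S = 10e-6  # assumed fixed cost per copy (10 microseconds)
BANDWIDTH = 25e9   # assumed CPU-to-GPU bandwidth (25 GB/s)
payload = 1e9      # 1 GB of data to move

many_small = transfer_time_s(payload, 100_000, LATENCY_S, BANDWIDTH)
one_large = transfer_time_s(payload, 1, LATENCY_S, BANDWIDTH)
print(f"100,000 small copies: {many_small:.3f} s")
print(f"1 large copy:         {one_large:.3f} s")
```

Under these assumptions the per-copy overhead dominates the many-small-copies case, which is why batching transfers tends to help.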

Performance Boost:

- No specific data is available for memory optimization techniques on the A100 SXM 80GB for specific LLMs.

- General Impact: By minimizing unnecessary data transfers and maximizing cache utilization, you can significantly reduce processing time.

Key Takeaways:

- Memory Profiling: Use tools to analyze your LLM's memory usage and identify areas for optimization.

6. Choosing the Right Software Stack: The Power of the Right Tools

Imagine building a house with tools that aren't designed for the job. You'll likely end up with a messy, inefficient result. The same applies to LLMs: selecting the right software tools is critical.

Why it Matters:

- Performance Optimization: Specialized software libraries (e.g., llama.cpp) are designed to make efficient use of GPUs like the A100 SXM 80GB.

- Ease of Use: These software tools streamline your workflow and simplify model deployment.

Software Recommendations:

- llama.cpp: A popular open-source library known for its efficiency and ease of use.

- DeepSpeed: A library specializing in distributed training and inference for LLMs.

Performance Boost:

- Llama 3 8B: The A100 SXM 80GB generates 133.38 tokens per second (TPS) with llama.cpp for the quantized Llama 3 8B (Q4_K_M) model.
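When comparing software stacks yourself, it helps to measure tokens per second the same way for each one. Below is a minimal timing sketch; `generate` is a hypothetical callable that you would wrap around your own library's generation call, and `fake_generate` just sleeps so the example runs without a model.

```python
import time


def tokens_per_second(generate, prompt, max_tokens):
    """Time one call to `generate` and return its generation throughput.

    `generate(prompt, max_tokens)` is a hypothetical callable that returns
    the list of generated tokens; wrap your own library's call to match.
    """
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt, max_tokens):
    """Stand-in model that sleeps briefly per token instead of running an LLM."""
    tokens = []
    for i in range(max_tokens):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


tps = tokens_per_second(fake_generate, "Hello", max_tokens=50)
print(f"{tps:.1f} tokens/s")
```

For a fair comparison, use the same prompt, the same number of generated tokens, and the same model file across stacks.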

Key Takeaways:

- Choose Wisely: Select software libraries based on your LLM model, desired performance, and ease of use.

7. Fine-Tuning for Optimal Performance: A Personalized Touch

Think of your LLM like a high-performance race car: You need to fine-tune it to suit your specific needs and track. This involves adjusting its settings, parameters, and training strategies for optimal performance.

How it works:

- Parameter Tuning: Adjusting parameters like learning rate, batch size, and model architecture can significantly impact your LLM's speed and accuracy.

- Training Strategies: Explore techniques like gradual unfreezing and early stopping to find the optimal training regime for your specific task.
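Early stopping, mentioned above, is easy to sketch: track the best validation loss seen so far and stop once it has failed to improve for a set number of epochs. The class name, the `patience` and `min_delta` parameters, and the loss values are all illustrative assumptions rather than any particular framework's API.

```python
class EarlyStopping:
    """Signal a stop once validation loss fails to improve `patience` times in a row."""

    def __init__(self, patience=2, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.bad_epochs = 0

    def should_stop(self, val_loss):
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Invented validation losses, one per epoch
losses = [0.90, 0.70, 0.65, 0.66, 0.67, 0.60]
stopper = EarlyStopping(patience=2)
stopped_at = None
for epoch, loss in enumerate(losses):
    if stopper.should_stop(loss):
        stopped_at = epoch
        break
print(f"stopped at epoch {stopped_at}, best loss {stopper.best_loss}")
```

Note that in this toy run the loss would have improved again at the last epoch, which is the trade-off of a small `patience`: you save training time but may stop a little early.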

Performance Boost:

- No specific data is available for fine-tuning results on the A100 SXM 80GB for specific LLMs.

- Potential: By meticulously fine-tuning, you can maximize your LLM's performance and tailor it for your specific applications.

Key Takeaways:

- Embrace the Experimentation: Fine-tuning requires patience and experimentation to identify the best settings for your setup.

Conclusion: A Symphony of Optimization

By implementing these noise reduction strategies, you can truly unlock the potential of your A100 SXM 80GB and push your LLM performance to the next level. It's about striking a balance between speed, accuracy, and efficiency. Remember, every little optimization adds up, leading you closer to the ultimate LLM symphony!

FAQ:

1. What is an NVIDIA A100 SXM 80GB?

The NVIDIA A100 SXM 80GB is a powerful graphics processing unit (GPU) designed for high-performance computing tasks, including machine learning and deep learning.

2. What are LLMs?

Large Language Models (LLMs) are a type of artificial intelligence (AI) that excel at understanding and generating human-like text.

3. Why is quantization important?

Quantization helps reduce the size of LLM models, making them faster to process and use on devices with limited resources.

4. How can I find data on the A100 SXM 80GB's performance with different LLMs and configurations?

Performance benchmarks can be found on platforms like GitHub (e.g., ggerganov's llama.cpp discussions) or open-source repositories.

5. What are some other popular GPUs for LLM inference?

Other popular GPUs include the NVIDIA RTX 4090, the PCIe version of the NVIDIA A100, and the AMD Instinct MI250.

Keywords:

NVIDIA A100 SXM 80GB, LLM, Large Language Model, Quantization, Batch Size, Parallelism, CUDA, CUDA Kernels, Memory Management, Software Stack, Tuning, Optimizations, Performance, Speed, Inference, llama.cpp