7 Must-Have Tools for AI Development on Apple M2

Introduction

The AI world is buzzing with excitement about Large Language Models (LLMs): AI systems that can generate text, translate languages, write creative content, and answer questions informatively. Running LLMs is resource-intensive, though, and often calls for powerful GPUs. Apple's M2 chip offers an energy-efficient alternative for local LLM development.

This guide explores seven essential tools for AI development on the Apple M2 chip, focusing on local LLM execution. Where benchmark data is available, we look at how these tools perform with Llama 2 7B, helping you make informed decisions about the best tools for your AI projects.

Llama.cpp: Your Open Source LLM Companion

Llama.cpp is an open-source project that allows you to run large language models locally on your computer. This means you don't need a powerful cloud server to experiment with LLMs, making it a great option for developers on a budget or those who prioritize privacy.

Why Is Llama.cpp Awesome?

- Open Source: Llama.cpp is freely available and constantly being improved by a vibrant community of developers.

- Lightweight: Unlike many LLM frameworks, Llama.cpp is relatively lightweight and doesn't require a massive GPU to run.

- Versatile: Supports a range of models, including Meta's original Llama models and the newer Llama 2 series.
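As a rough illustration of what local inference can look like from Python, the sketch below uses the llama-cpp-python bindings. Those bindings are a separate package that this guide does not otherwise cover, the model path is a hypothetical placeholder for a GGUF file you have downloaded yourself, and `tokens_per_second` is our own helper, not part of Llama.cpp.

```python
import time


def tokens_per_second(n_tokens: int, elapsed_s: float) -> float:
    """Throughput helper (our own, not part of llama.cpp)."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return n_tokens / elapsed_s


def run_local_completion(model_path: str, prompt: str, max_tokens: int = 64) -> str:
    """Generate text with a local GGUF model via llama-cpp-python.

    Assumes `pip install llama-cpp-python` and a model file at `model_path`
    (a hypothetical path; supply your own).
    """
    # Imported lazily so the helper above stays usable without the package.
    from llama_cpp import Llama

    # n_gpu_layers=-1 asks the bindings to offload every layer to the GPU
    # (Metal on Apple Silicon).
    llm = Llama(model_path=model_path, n_gpu_layers=-1, verbose=False)

    start = time.perf_counter()
    result = llm(prompt, max_tokens=max_tokens)
    elapsed = time.perf_counter() - start

    # The elapsed time covers prompt processing and generation together.
    generated = result["usage"]["completion_tokens"]
    print(f"{tokens_per_second(generated, elapsed):.2f} tokens/s overall")
    return result["choices"][0]["text"]
```

Calling `run_local_completion("path/to/model.gguf", "Hello")` would load the model and print a single combined throughput figure; it does not separate prompt processing from generation the way the benchmark table in this guide does.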

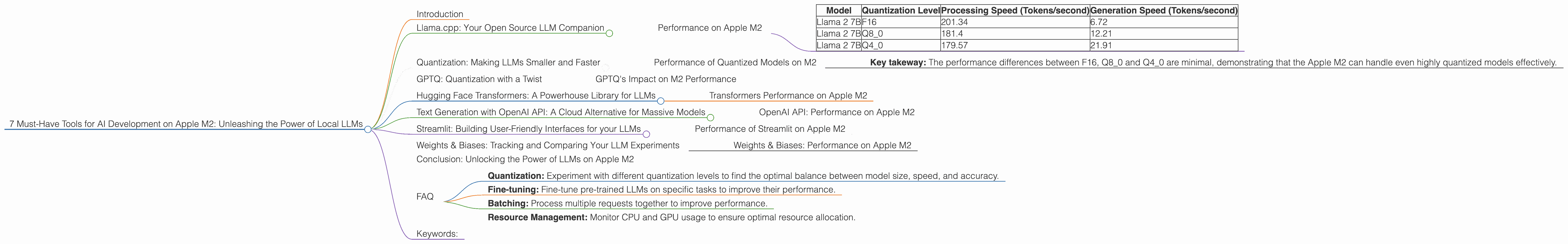

Performance on Apple M2

Let's see how Llama.cpp performs on the Apple M2 chip, specifically with the Llama 2 7B model. The results are impressive!

| Model | Quantization Level | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |
|---|---|---|---|
| Llama 2 7B | F16 | 201.34 | 6.72 |
| Llama 2 7B | Q8_0 | 181.4 | 12.21 |
| Llama 2 7B | Q4_0 | 179.57 | 21.91 |

- Key takeaway: On the M2, quantization sharply increases generation speed (from 6.72 tokens/second at F16 to 21.91 at Q4_0), while prompt processing slows only slightly (from 201.34 to 179.57 tokens/second) and the memory footprint shrinks.
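To put the table's numbers in perspective, the short sketch below computes each quantization level's speed relative to F16 and estimates how long one request would take. The figures are copied from the table; the request size (512 prompt tokens, 256 generated tokens) is an arbitrary assumption chosen for illustration.

```python
# Tokens/second for Llama 2 7B on Apple M2, copied from the table above.
RESULTS = {
    "F16": {"processing": 201.34, "generation": 6.72},
    "Q8_0": {"processing": 181.4, "generation": 12.21},
    "Q4_0": {"processing": 179.57, "generation": 21.91},
}


def relative_to_f16(metric: str) -> dict:
    """Speed of each quantization level as a multiple of the F16 speed."""
    base = RESULTS["F16"][metric]
    return {quant: round(row[metric] / base, 2) for quant, row in RESULTS.items()}


def response_time_s(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated seconds to process a prompt and then generate a reply."""
    row = RESULTS[quant]
    return prompt_tokens / row["processing"] + output_tokens / row["generation"]


print("generation vs F16:", relative_to_f16("generation"))
print("processing vs F16:", relative_to_f16("processing"))
for quant in RESULTS:
    # 512 prompt tokens and 256 output tokens are assumed values.
    print(quant, f"{response_time_s(quant, 512, 256):.1f} s")
```

Because generation is so much slower than prompt processing, the estimated response time is dominated by the generation column for any reply longer than a few tokens.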

Quantization: Making LLMs Smaller and Faster

You've probably heard the term "quantization" thrown around, but what exactly is it? Imagine you have a large photograph, but you want to send it to a friend via your phone. You could compress the image to a smaller size, reducing quality to save data. Quantization does something similar with LLMs: it reduces the precision of the model's weights, making it smaller and potentially faster.

Quantized models require less memory and processing power, making them ideal for running on devices with limited resources like the Apple M2.
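The idea of reducing weight precision can be sketched in a few lines of plain Python. This is a simplified illustration of block-wise symmetric quantization, assuming 32 weights share one scale factor; it is not the exact storage format used by Llama.cpp, and the random weights are stand-ins for real model weights.

```python
import random

BLOCK_SIZE = 32  # weights sharing one scale factor (assumed for illustration)


def quantize_block(block, bits=8):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(w) for w in block)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in block]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate float weights from the integers and the scale."""
    return [scale * q for q in quantized]


def mean_abs_error(weights, bits):
    """Average absolute difference between original and restored weights."""
    total = 0.0
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        scale, quantized = quantize_block(block, bits)
        restored = dequantize_block(scale, quantized)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(weights)


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(1024)]  # stand-in weights
for bits in (8, 4):
    print(f"{bits}-bit mean absolute error: {mean_abs_error(weights, bits):.6f}")
```

Fewer bits mean fewer representable values per block, so the 4-bit error comes out larger than the 8-bit error: that is the quality cost paid for the smaller size.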

Performance of Quantized Models on M2

The table above showcases the performance of Llama 2 7B with different quantization levels.

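- Key takeaway: Prompt processing speed changes little across F16, Q8_0, and Q4_0 (201.34, 181.4, and 179.57 tokens/second), but generation speed more than triples from F16 to Q4_0 (6.72 to 21.91 tokens/second), so the M2 benefits substantially from quantized models.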
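One plausible explanation for the generation-speed pattern in the table is that generating a token requires reading roughly all of the model's weights from memory, so smaller weights mean less data to move. The back-of-the-envelope sketch below tests that idea. Everything in it beyond the measured speeds is an assumption not stated in this guide: about 6.74 billion parameters for Llama 2 7B, 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (the integers plus a per-block scale), and roughly 100 GB/s of memory bandwidth for a base M2.

```python
PARAMS = 6.74e9  # assumed parameter count for Llama 2 7B
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # assumed storage cost
MEMORY_BANDWIDTH_GB_S = 100.0  # assumed bandwidth of a base M2

# Generation speeds (tokens/second) copied from the table above.
MEASURED = {"F16": 6.72, "Q8_0": 12.21, "Q4_0": 21.91}


def model_size_gb(quant: str) -> float:
    """Approximate size of the weights in gigabytes."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def generation_ceiling(quant: str) -> float:
    """Rough upper bound on tokens/second if every token reads all weights once."""
    return MEMORY_BANDWIDTH_GB_S / model_size_gb(quant)


for quant, measured in MEASURED.items():
    print(
        f"{quant}: ~{model_size_gb(quant):.1f} GB, "
        f"ceiling ~{generation_ceiling(quant):.1f} tok/s, measured {measured} tok/s"
    )
```

Under these assumptions each measured speed sits somewhat below its estimated ceiling, which is consistent with generation being limited mainly by memory bandwidth rather than raw compute. Treat this as a sanity check, not a benchmark.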

GPTQ: Quantization with a Twist

GPTQ is a post-training quantization technique for large language models that aims to minimize the loss of accuracy during quantization. It is designed to preserve model quality even at low bit widths, such as 4 bits per weight.

GPTQ's Impact on M2 Performance

Unfortunately, we don't have specific performance data for GPTQ on the Apple M2 with Llama 2 7B. GPTQ is widely reported to improve performance on various platforms, so it may also help on the M2, but that remains untested here.

Hugging Face Transformers: A Powerhouse Library for LLMs

Hugging Face Transformers is a powerful library that provides a wide range of pretrained models, including LLMs, and offers a user-friendly interface for working with them.

Why Choose Hugging Face Transformers?

- Vast Model Collection: Choose from a huge library of models, including LLMs, language translators, and more.

- Convenience: Hugging Face makes it easy to load, fine-tune, and use models.

- Community Supported: Benefit from a large and active community of developers contributing to the library.
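Loading and running a model with the library can be sketched as follows. This is a minimal example, assuming `transformers` and PyTorch are installed; `gpt2` is used only because it is small and freely downloadable, and it is our choice rather than a model discussed in this guide. Swapping in Llama 2 would require requesting access to the gated weights first.

```python
def pick_device() -> str:
    """Return "mps" when PyTorch's Metal backend is usable, otherwise "cpu"."""
    try:
        import torch
    except ImportError:
        return "cpu"
    return "mps" if torch.backends.mps.is_available() else "cpu"


def generate(prompt: str, model_id: str = "gpt2", max_new_tokens: int = 40) -> str:
    """Generate a continuation of `prompt` with a Hugging Face pipeline.

    `model_id` defaults to the small "gpt2" checkpoint purely for illustration.
    """
    # Imported lazily so pick_device() works even without transformers installed.
    from transformers import pipeline

    generator = pipeline("text-generation", model=model_id, device=pick_device())
    outputs = generator(prompt, max_new_tokens=max_new_tokens)
    return outputs[0]["generated_text"]
```

Calling `generate("Apple Silicon is")` downloads the checkpoint on first use and then runs on the Metal backend when it is available, falling back to the CPU otherwise.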

Transformers Performance on Apple M2

We don't have specific performance data for Hugging Face Transformers on the M2 with Llama 2 7B. However, based on its general performance on various platforms, it's reasonable to expect solid results.

Text Generation with OpenAI API: A Cloud Alternative for Massive Models

While local development is great, sometimes you need access to massive models like GPT-3 or GPT-4 that are too large to run locally. The OpenAI API provides a convenient way to access and utilize these models from your M2-powered device.

Advantages of Using OpenAI API:

- Access to Cutting-Edge Models: Leverage the power of GPT-3, GPT-4, and other advanced models from OpenAI.

- Scalability: Effortlessly handle large-scale text generation tasks.

- Easy Integration: Integrate OpenAI's powerful capabilities into your applications with ease.
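As a rough sketch of what integration involves, the example below calls the chat completions endpoint using only the Python standard library. It assumes your key is stored in the `OPENAI_API_KEY` environment variable and that your account has access to the `gpt-4` model name used as the default; both are assumptions, and OpenAI's official client library is a more convenient option in practice.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_request(prompt: str, api_key: str, model: str = "gpt-4") -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(prompt: str, model: str = "gpt-4") -> str:
    """Send the prompt and return the text of the first reply."""
    api_key = os.environ["OPENAI_API_KEY"]  # assumed to be set by the user
    request = build_request(prompt, api_key, model)
    with urllib.request.urlopen(request, timeout=60) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```

Keeping request construction separate from sending makes it easy to inspect what would be sent without spending API credits.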

OpenAI API: Performance on Apple M2

The OpenAI API relies on OpenAI's servers and resources, so performance isn't tied to the M2's capabilities. Response times depend mainly on your network connection and OpenAI's servers; the M2 only has to send requests and handle the responses.

Streamlit: Building User-Friendly Interfaces for your LLMs

Streamlit is a popular framework for building interactive web applications. It's a good choice for showcasing LLM capabilities, allowing you to create demos, web interfaces, and intuitive tools for working with your AI models.

Why Use Streamlit?

- Rapid Development: Streamlit's simple syntax makes it easy to build prototypes quickly.

- Easy Deployment: Share your LLM applications with others effortlessly.

- Interactive Components: Create user-friendly interfaces with interactive widgets like sliders, buttons, and text fields.
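A minimal Streamlit front end might look like the sketch below. The `generate_reply` function is a hypothetical placeholder that just echoes the prompt; in a real app you would replace its body with a call to your model. The import guard exists only so the placeholder can be used and tested on a machine without Streamlit installed.

```python
try:
    import streamlit as st
except ImportError:  # lets the helper below be used without Streamlit
    st = None


def generate_reply(prompt: str, temperature: float) -> str:
    """Hypothetical stand-in for a model call; replace with a real backend."""
    return f"[temperature={temperature:.1f}] You asked: {prompt.strip()}"


def render() -> None:
    """Draw the page: a prompt box, a temperature slider, and a button."""
    st.title("Local LLM demo")
    prompt = st.text_area("Prompt")
    temperature = st.slider("Temperature", min_value=0.0, max_value=1.0, value=0.7)
    if st.button("Generate") and prompt.strip():
        st.write(generate_reply(prompt, temperature))


if st is not None:
    render()
```

Saved as `app.py`, this can be started with `streamlit run app.py`, which opens the interface in your browser.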

Performance of Streamlit on Apple M2

Streamlit doesn't directly influence the performance of your LLMs. It serves the interface to your web browser, so how responsive the app feels depends mostly on how quickly the underlying model responds.

Weights & Biases: Tracking and Comparing Your LLM Experiments

Weights & Biases (W&B) is a powerful tool for tracking, comparing, and visualizing your machine learning experiments. This is especially valuable when fine-tuning and evaluating LLMs, as it helps you:

- Monitor Performance: Track the performance of your LLM models with metrics like accuracy, loss, and token generation speed.

- Compare Experiments: Easily compare the results of different training runs with different hyperparameters or model architectures.

- Visualize Results: Generate insightful charts and graphs to understand the performance of your LLM models.
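Tracking a metric such as token generation speed can be sketched as below. The `summarize_run` helper and the project name you pass in are our own illustrative choices; the logging function assumes the `wandb` package is installed and that you have already authenticated with `wandb login`.

```python
def summarize_run(token_counts, durations_s):
    """Aggregate per-request token counts and timings into loggable metrics."""
    total_tokens = sum(token_counts)
    total_time = sum(durations_s)
    if total_time <= 0:
        raise ValueError("total duration must be positive")
    return {
        "total_tokens": total_tokens,
        "tokens_per_second": total_tokens / total_time,
    }


def log_to_wandb(project: str, config: dict, metrics: dict) -> None:
    """Record one set of metrics in a new W&B run."""
    # Imported lazily so summarize_run() works without wandb installed.
    import wandb

    run = wandb.init(project=project, config=config)
    run.log(metrics)
    run.finish()
```

For example, `log_to_wandb("m2-llm-benchmarks", {"quantization": "Q4_0"}, summarize_run([64, 64], [3.0, 2.9]))` would create a run whose configuration records the quantization level, which makes runs with different settings easy to compare in the dashboard.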

Weights & Biases: Performance on Apple M2

W&B runs alongside your experiments on the M2, recording metrics as they are produced. It doesn't directly impact LLM processing speeds, but it provides essential insights that help you improve your models.

Conclusion: Unlocking the Power of LLMs on Apple M2

The Apple M2 chip, combined with the right tools, provides developers with a powerful and efficient platform for exploring local AI development. Whether you're experimenting with open-source models like Llama 2 or leveraging the power of cloud-based services like OpenAI API, these tools empower you to build innovative AI applications on your Apple M2 devices.

FAQ

Q: Can I run LLMs on Apple M1 or older Mac computers?

A: Yes, you can run smaller LLMs on Apple M1 and older Macs. However, performance may be limited compared to the M2. For larger models, the M2 or a device with a dedicated GPU is highly recommended.

Q: Are all LLMs compatible with these tools?

A: Not all LLMs are compatible with every tool. Check the documentation of each tool to see which models are supported.

Q: What are the best practices for optimizing LLM performance on the M2?

A: Some best practices include:

- Quantization: Experiment with different quantization levels to find the optimal balance between model size, speed, and accuracy.

- Fine-tuning: Fine-tune pre-trained LLMs on specific tasks to improve their performance.

- Batching: Process multiple requests together to improve performance.

- Resource Management: Monitor CPU and GPU usage to ensure optimal resource allocation.

Q: How do I get started with LLM Development on Apple M2?

A: Start by installing the necessary tools like Llama.cpp, Python, and other dependencies. Explore online resources and tutorials to learn about LLM concepts and how to use these tools.

Q: What are the future trends in local LLM development?

A: The future of local LLM development is bright! We'll see continued advancements in hardware, software, and model optimization techniques enabling even smaller, faster, and more efficient LLMs on a wide range of devices.
