7 Key Factors to Consider When Choosing Between NVIDIA RTX 6000 Ada 48GB and NVIDIA A100 PCIe 80GB for AI

Introduction

For developers diving into the world of local Large Language Models (LLMs), choosing the right hardware can make or break a project. The NVIDIA RTX 6000 Ada 48GB and NVIDIA A100 PCIe 80GB are two popular GPUs for running LLMs, but picking the better fit requires looking beyond raw speed. This article walks through benchmark results for both cards and then seven key factors to help you decide which one matches your AI workload.

Performance Analysis

Here's a breakdown of how these two GPUs perform in different scenarios, highlighting their strengths and weaknesses:

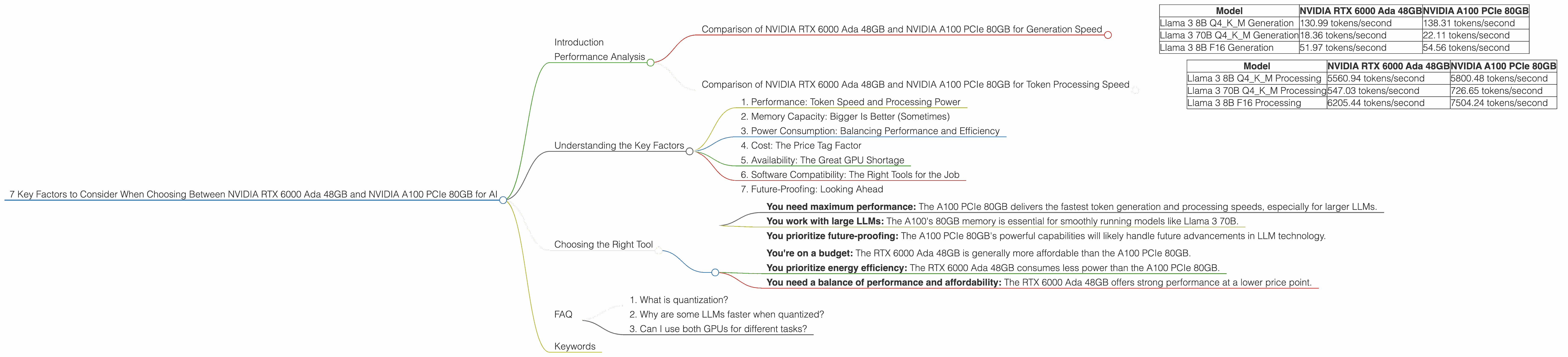

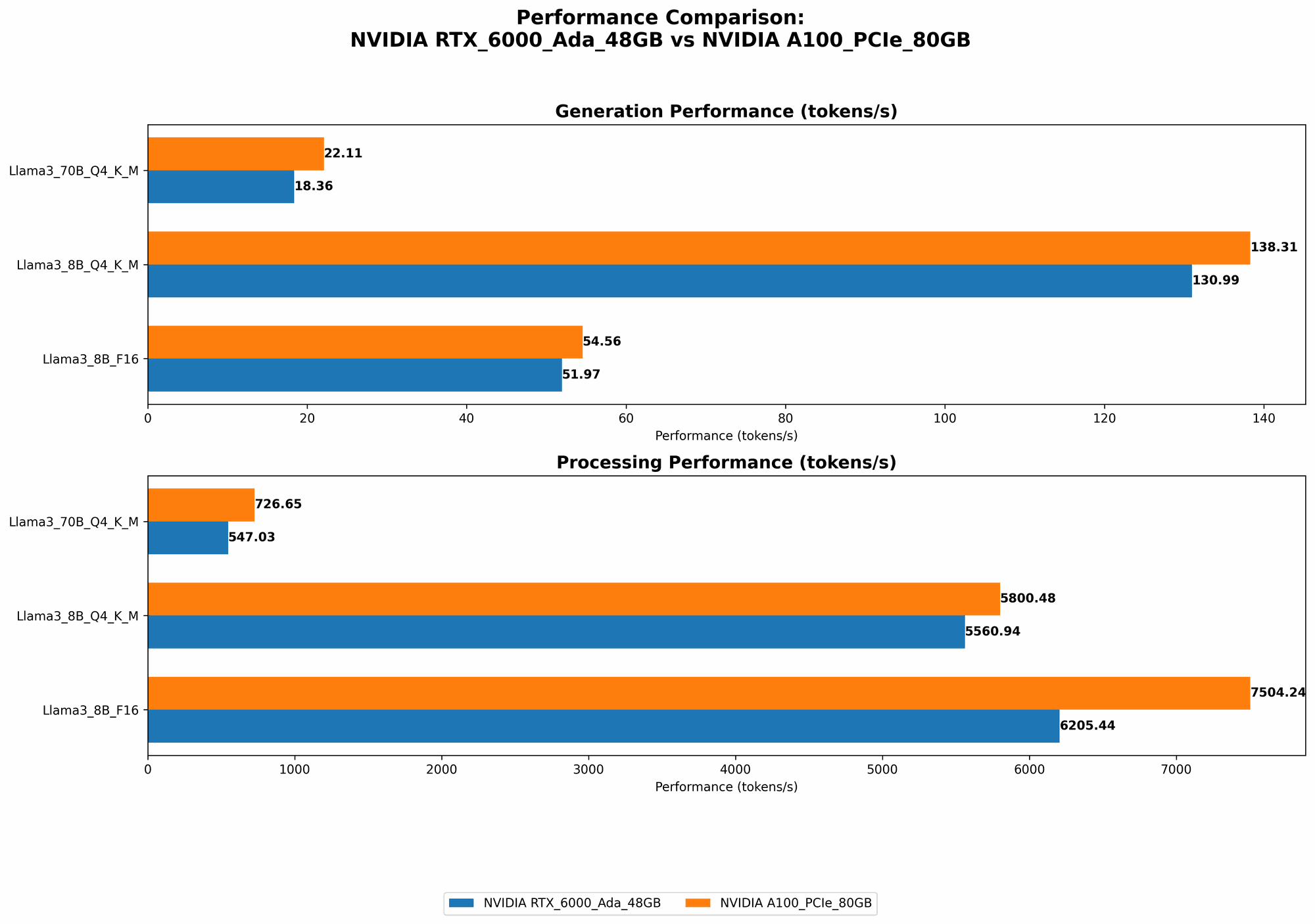

Comparison of NVIDIA RTX 6000 Ada 48GB and NVIDIA A100 PCIe 80GB for Generation Speed

The NVIDIA RTX 6000 Ada 48GB and NVIDIA A100 PCIe 80GB both deliver fast token generation, particularly when running LLMs in quantized formats like Q4_K_M (a 4-bit quantization format). However, the A100 PCIe 80GB edges out the RTX 6000 Ada 48GB in every tested scenario.

| Model (generation) | NVIDIA RTX 6000 Ada 48GB (tokens/second) | NVIDIA A100 PCIe 80GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 130.99 | 138.31 |
| Llama 3 70B Q4_K_M | 18.36 | 22.11 |
| Llama 3 8B F16 | 51.97 | 54.56 |

(Note: We don't have data for F16 (half precision) generation for Llama 3 70B on either device.)

The A100 PCIe 80GB is consistently faster: it generates about 6% more tokens per second than the RTX 6000 Ada 48GB for the 8B model in Q4_K_M, about 5% more for the 8B model in F16, and about 20% more for the 70B model in Q4_K_M. In these results, the gap widens with the larger model.
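The percentages can be reproduced directly from the generation table. The snippet below is a minimal sketch of that arithmetic; the function and variable names are illustrative, and the only data used are the figures from the table above.

```python
# Generation throughput in tokens/second, copied from the table above.
# Each tuple is (RTX 6000 Ada 48GB, A100 PCIe 80GB).
generation_tps = {
    "Llama 3 8B Q4_K_M": (130.99, 138.31),
    "Llama 3 70B Q4_K_M": (18.36, 22.11),
    "Llama 3 8B F16": (51.97, 54.56),
}


def relative_speedup_percent(baseline: float, candidate: float) -> float:
    """Return how much faster `candidate` is than `baseline`, in percent."""
    return (candidate / baseline - 1.0) * 100.0


speedups = {
    model: relative_speedup_percent(rtx, a100)
    for model, (rtx, a100) in generation_tps.items()
}

for model, pct in speedups.items():
    print(f"{model}: A100 PCIe 80GB is {pct:.1f}% faster")
```

The same function works on the processing figures if you swap in those numbers.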

Comparison of NVIDIA RTX 6000 Ada 48GB and NVIDIA A100 PCIe 80GB for Token Processing Speed

As with generation speed, the A100 PCIe 80GB outperforms the RTX 6000 Ada 48GB in token processing speed, that is, how quickly the model works through the input tokens, in every tested configuration. The gap is small for the 8B model in Q4_K_M (about 4%), larger for the 8B model in F16 (about 21%), and largest for the 70B model in Q4_K_M, where the A100 PCIe 80GB is about 33% faster.

| Model (processing) | NVIDIA RTX 6000 Ada 48GB (tokens/second) | NVIDIA A100 PCIe 80GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 5560.94 | 5800.48 |
| Llama 3 70B Q4_K_M | 547.03 | 726.65 |
| Llama 3 8B F16 | 6205.44 | 7504.24 |

(Note: We don't have data for F16 (half precision) processing for Llama 3 70B on either device.)

This difference matters most for applications that feed long inputs to the model, such as summarizing or translating long documents or working over large codebases, because more of the total response time is spent processing the input. The A100 PCIe 80GB's faster processing makes it the more attractive choice for these demanding workloads.
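To see how the two metrics combine, here is a rough sketch that estimates the time for a single request as input tokens divided by processing speed plus output tokens divided by generation speed. The workload (2,000 input tokens, 300 output tokens) is a made-up example, and the model assumes throughput stays constant regardless of context length and that requests are not batched, which real systems will not match exactly.

```python
# Llama 3 70B Q4_K_M throughput in tokens/second, from the two tables above.
THROUGHPUT = {
    "RTX 6000 Ada 48GB": {"processing": 547.03, "generation": 18.36},
    "A100 PCIe 80GB": {"processing": 726.65, "generation": 22.11},
}


def estimate_request_seconds(input_tokens: int, output_tokens: int,
                             processing_tps: float, generation_tps: float) -> float:
    """Simplified latency model: time to read the input plus time to write the output."""
    return input_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload, chosen only for illustration.
INPUT_TOKENS = 2000
OUTPUT_TOKENS = 300

estimates = {
    gpu: estimate_request_seconds(INPUT_TOKENS, OUTPUT_TOKENS,
                                  tps["processing"], tps["generation"])
    for gpu, tps in THROUGHPUT.items()
}

for gpu, seconds in estimates.items():
    print(f"{gpu}: about {seconds:.1f} s per request")
```

In this example most of the time goes to generation, so the generation gap dominates; with much longer inputs and short outputs, the processing gap would matter more.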

Understanding the Key Factors

Now, let's delve into the key factors that influence your decision:

1. Performance: Token Speed and Processing Power

As we explored in the analysis above, the NVIDIA A100 PCIe 80GB generally outperforms the RTX 6000 Ada 48GB in both generation and processing speed, particularly for larger LLMs. This difference can be significant for developers working with computationally demanding models and applications. The A100 PCIe 80GB's edge in this area makes it a strong contender for users prioritizing speed and efficiency.

2. Memory Capacity: Bigger Is Better (Sometimes)

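The NVIDIA RTX 6000 Ada 48GB comes with 48GB of GDDR6 memory, while the NVIDIA A100 PCIe 80GB has 80GB of HBM2e memory. Which one you need depends on the size and precision of the model you want to run. Llama 3 70B in Q4_K_M format ran on both cards in the benchmarks above, so 48GB is enough for that configuration, but it leaves little room for long contexts or less aggressive quantization; the A100's 80GB gives considerably more headroom. Neither card can hold Llama 3 70B in F16 on its own, since the weights alone need roughly 140GB (70 billion parameters at 2 bytes each), which is consistent with the missing F16 70B results. For smaller models like Llama 3 8B, 48GB is more than sufficient, potentially saving you some cost.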
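A back-of-the-envelope check of whether a model fits is parameters times bits per weight, divided by 8 to get bytes. The sketch below applies that formula. The 4.8 bits per weight used for Q4_K_M is an approximate average assumed for illustration, the 4GB overhead allowance for context cache and buffers is a placeholder, and the parameter counts are the nominal 8 and 70 billion.

```python
# Assumed average bits per weight. F16 is exactly 16; the Q4_K_M value is an
# approximation used for illustration, not an exact property of the format.
ASSUMED_BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

# Placeholder allowance for context cache and runtime buffers (an assumption).
ASSUMED_OVERHEAD_GB = 4.0

CARD_MEMORY_GB = {"RTX 6000 Ada 48GB": 48.0, "A100 PCIe 80GB": 80.0}


def weights_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Size of the weights in GB: billions of parameters * bits / 8 bits per byte."""
    return params_billion * bits_per_weight / 8.0


def fits(params_billion: float, fmt: str, memory_gb: float) -> bool:
    """True if the weights plus the assumed overhead fit in the given memory."""
    needed = weights_size_gb(params_billion, ASSUMED_BITS_PER_WEIGHT[fmt])
    return needed + ASSUMED_OVERHEAD_GB <= memory_gb


for params in (8, 70):
    for fmt, bits in ASSUMED_BITS_PER_WEIGHT.items():
        size = weights_size_gb(params, bits)
        verdicts = ", ".join(
            f"{card}: {'fits' if fits(params, fmt, mem) else 'does not fit'}"
            for card, mem in CARD_MEMORY_GB.items()
        )
        print(f"Llama 3 {params}B {fmt}: ~{size:.0f} GB of weights ({verdicts})")
```

Treat the result as a first filter only; actual memory use depends on context length, batch size, and the inference software.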

3. Power Consumption: Balancing Performance and Efficiency

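On paper, the two cards are closer on power than their market positioning suggests: NVIDIA's published specifications list a maximum board power of about 300 W for both the RTX 6000 Ada 48GB and the A100 PCIe 80GB. Actual draw during inference depends on the model, the batch size, and any power limit you configure, so measure it on your own workload if you are concerned about energy costs or are running in an environment with limited power. What matters for efficiency is energy per token rather than watts alone: a card that draws similar power but produces more tokens per second uses less energy for the same output.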
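One way to compare efficiency is energy per token, which is simply power draw divided by tokens per second. The sketch below converts that into kWh per million generated tokens. The throughput figures come from the generation table earlier in the article; the 300 W values are placeholders based on an assumed rated board power for each card, not measurements, and should be replaced with the draw you observe on your own workload.

```python
# Llama 3 70B Q4_K_M generation speed in tokens/second, from the table earlier.
GENERATION_TPS = {"RTX 6000 Ada 48GB": 18.36, "A100 PCIe 80GB": 22.11}

# ASSUMPTION: placeholder power draw in watts. Replace with measured values.
ASSUMED_WATTS = {"RTX 6000 Ada 48GB": 300.0, "A100 PCIe 80GB": 300.0}

JOULES_PER_KWH = 3.6e6


def kwh_per_million_tokens(watts: float, tokens_per_second: float) -> float:
    """Energy for one million tokens: (joules per token) * 1e6, converted to kWh."""
    joules_per_token = watts / tokens_per_second
    return joules_per_token * 1_000_000 / JOULES_PER_KWH


energy = {
    gpu: kwh_per_million_tokens(ASSUMED_WATTS[gpu], GENERATION_TPS[gpu])
    for gpu in GENERATION_TPS
}

for gpu, kwh in energy.items():
    print(f"{gpu}: about {kwh:.2f} kWh per million generated tokens")
```

Multiplying the result by your electricity price gives a rough energy cost per million tokens for the GPU alone, excluding the rest of the system.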

4. Cost: The Price Tag Factor

The NVIDIA RTX 6000 Ada 48GB is generally more affordable than the NVIDIA A100 PCIe 80GB. While cost shouldn't be the only factor, it's definitely something to consider if you're working on a tighter budget. The RTX 6000 Ada 48GB provides a solid balance of performance and affordability, making it an appealing choice for budget-conscious developers.

5. Availability: The Great GPU Shortage

The GPU market has been volatile in recent years, with supply chain issues impacting availability and pricing. It's crucial to research the availability of both the RTX 6000 Ada 48GB and the A100 PCIe 80GB before making your decision. It's best to check with authorized retailers and distributors to get an accurate understanding of current stock levels and potential lead times.

6. Software Compatibility: The Right Tools for the Job

While both GPUs support a wide range of software frameworks, it's essential to ensure that your chosen GPU is compatible with the specific LLM software you're using. For example, some LLM libraries and tools might have optimized performance on specific GPUs. Research the compatibility and performance aspects of your preferred software tools with both the RTX 6000 Ada 48GB and A100 PCIe 80GB to make the right choice.
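A practical first step when checking compatibility is to record exactly which GPU, memory size, and driver version a machine reports, since framework release notes usually state requirements in those terms. The sketch below wraps `nvidia-smi --query-gpu=name,memory.total,driver_version --format=csv,noheader,nounits` and parses its output. The helper names are illustrative, and the sample text at the bottom is made up so that the parser can be tried on a machine without a GPU.

```python
import csv
import io
import subprocess

QUERY_FIELDS = ["name", "memory.total", "driver_version"]


def parse_gpu_csv(text: str) -> list:
    """Parse `nvidia-smi --format=csv,noheader,nounits` output into dicts."""
    rows = csv.reader(io.StringIO(text), skipinitialspace=True)
    return [
        dict(zip(QUERY_FIELDS, (cell.strip() for cell in row)))
        for row in rows
        if row  # skip blank lines
    ]


def query_gpus() -> list:
    """Run nvidia-smi and return one dict per GPU. Requires the NVIDIA driver."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=" + ",".join(QUERY_FIELDS),
        "--format=csv,noheader,nounits",
    ]
    output = subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
    return parse_gpu_csv(output)


# Made-up sample output for illustration; memory.total is reported in MiB.
SAMPLE_OUTPUT = (
    "NVIDIA RTX 6000 Ada Generation, 49140, 535.104.05\n"
    "NVIDIA A100 80GB PCIe, 81920, 535.104.05\n"
)

sample_gpus = parse_gpu_csv(SAMPLE_OUTPUT)
for gpu in sample_gpus:
    print(f"{gpu['name']}: {gpu['memory.total']} MiB, driver {gpu['driver_version']}")
```

On a real system, call `query_gpus()` instead of parsing the sample text, then compare the reported driver version against the requirements of the inference software you plan to use.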

7. Future-Proofing: Looking Ahead

The field of AI is constantly evolving, and new LLMs and frameworks are emerging regularly. Consider how your GPU choice might affect your ability to handle future advancements. The A100 PCIe 80GB's superior memory and processing capabilities might provide more leeway for handling larger and more complex LLMs in the future. However, the RTX 6000 Ada 48GB could still offer solid performance for a range of applications, and its affordability might make it a more attractive option for users who want room to upgrade later.

Choosing the Right Tool

The decision between the NVIDIA RTX 6000 Ada 48GB and NVIDIA A100 PCIe 80GB ultimately depends on your specific needs and priorities. Here's a quick summary to help you decide:

Choose the NVIDIA A100 PCIe 80GB if:

- You need maximum performance: The A100 PCIe 80GB delivers the fastest token generation and processing speeds, especially for larger LLMs.

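- You work with large LLMs: The A100's 80GB of memory leaves far more headroom for models like Llama 3 70B, for example for longer contexts or higher-precision quantization, even though the Q4_K_M version also ran on the 48GB card in the benchmarks above.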

- You prioritize future-proofing: The A100 PCIe 80GB's powerful capabilities will likely handle future advancements in LLM technology.

Choose the NVIDIA RTX 6000 Ada 48GB if:

- You're on a budget: The RTX 6000 Ada 48GB is generally more affordable than the A100 PCIe 80GB.

- You prioritize energy efficiency: Only if your own measurements support it. Both cards are rated at about 300 W of board power, so any saving has to come from the measured draw on your workload.

- You need a balance of performance and affordability: The RTX 6000 Ada 48GB offers strong performance at a lower price point.

FAQ

1. What is quantization?

Quantization is like compressing a large language model (LLM) to make it smaller and faster. It's basically taking the numbers within the model and representing them with fewer bits, like a simplified code for the LLM. This makes the model smaller and quicker to load and run, but it can sometimes slightly reduce the quality of the LLM's responses.
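To make the idea concrete, here is a deliberately simplified sketch of 4-bit quantization: a block of weights shares one scale factor, and each weight is stored as a small integer between -7 and 7. This is not the actual Q4_K_M scheme, which is more elaborate; the function names and the example weights are made up for illustration.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to signed integer codes that share one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the shared scale and integer codes."""
    return [scale * c for c in codes]


# Made-up example weights.
block = [0.12, -0.53, 0.07, 0.91, -0.33, 0.002, 0.48, -0.76]

scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print("restored:", [round(x, 3) for x in restored])
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, instead of 16 bits, and the rounding error printed at the end is the source of the slight quality loss mentioned above.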

2. Why are some LLMs faster when quantized?

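When generating text, the GPU has to read the model's weights from memory for every new token, so generation speed is often limited by how many bytes must be moved rather than by raw compute. Quantization stores each weight in fewer bits, which means fewer bytes to move per token. The benchmarks above show the effect: on the RTX 6000 Ada 48GB, Llama 3 8B generates about 131 tokens/second in Q4_K_M versus about 52 tokens/second in F16. Token processing behaves differently because it is more compute-bound; in the same tables, F16 processing is actually faster than Q4_K_M on both cards.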

3. Can I use both GPUs for different tasks?

Yes! You can utilize both the RTX 6000 Ada 48GB and A100 PCIe 80GB simultaneously for different tasks or even for different aspects of the same task. For example, you could run a smaller LLM, like the Llama 3 8B, on the RTX 6000 Ada 48GB for generating text, while using the A100 PCIe 80GB to handle more demanding tasks like processing large datasets or training a larger LLM. This setup can optimize your workflow and leverage the strengths of your two GPUs.
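One common way to pin separate processes to separate GPUs is the `CUDA_VISIBLE_DEVICES` environment variable, which limits which devices a CUDA program can see. The sketch below builds such an environment and launches a child process with it. The helper names are illustrative, the GPU index is an assumption (check which index each card has on your system), and the child command here only prints the variable so the example runs without a GPU.

```python
import os
import subprocess
import sys


def env_for_gpu(gpu_index: int) -> dict:
    """Return a copy of the current environment restricted to one GPU."""
    env = os.environ.copy()
    # Order devices by PCI bus ID so indices match what nvidia-smi lists.
    env["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
    env["CUDA_VISIBLE_DEVICES"] = str(gpu_index)
    return env


def launch_on_gpu(command: list, gpu_index: int) -> subprocess.Popen:
    """Start `command` as a background process that only sees the chosen GPU."""
    return subprocess.Popen(command, env=env_for_gpu(gpu_index))


# Stand-in for a real inference command: the child just reports what it sees.
child = [sys.executable, "-c", "import os; print(os.environ['CUDA_VISIBLE_DEVICES'])"]
result = subprocess.run(child, env=env_for_gpu(1), capture_output=True, text=True)
print("child process sees GPU index:", result.stdout.strip())
```

In practice you would replace the stand-in command with your inference server or training script and call `launch_on_gpu` once per card.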

Keywords

LLM, Large Language Model, NVIDIA RTX 6000 Ada 48GB, NVIDIA A100 PCIe 80GB, GPU, Graphics Processing Unit, Token Generation, Token Processing, Memory Capacity, Power Consumption, Cost, Availability, Software Compatibility, Future-Proofing, Quantization, Q4_K_M, F16, Llama 3, AI, Artificial Intelligence, Inference, Deep Learning, Machine Learning, Performance, Speed, Efficiency, Developer, Data Scientist, Hardware, Software, LLM Models, llama.cpp, GPU Benchmarks, Token/Second, LLM Inference