7 Key Factors to Consider When Choosing Between NVIDIA RTX 5000 Ada 32GB and NVIDIA RTX 6000 Ada 48GB for AI

Introduction

Are you a developer looking to build and run powerful AI models, particularly large language models (LLMs)? Choosing the right hardware is crucial for maximizing performance, efficiency, and overall success. In the world of AI, NVIDIA's RTX 5000 Ada 32GB and RTX 6000 Ada 48GB are two popular choices, both offering impressive compute capabilities. But which one is right for you?

This article will delve into the crucial factors to consider when deciding between these two powerful GPUs, providing you with a comprehensive understanding of their strengths and weaknesses. We'll analyze performance data for popular LLM models to help you navigate the decision-making process and select the best GPU for your specific needs.

Performance Analysis: Comparing Token Generation and Processing

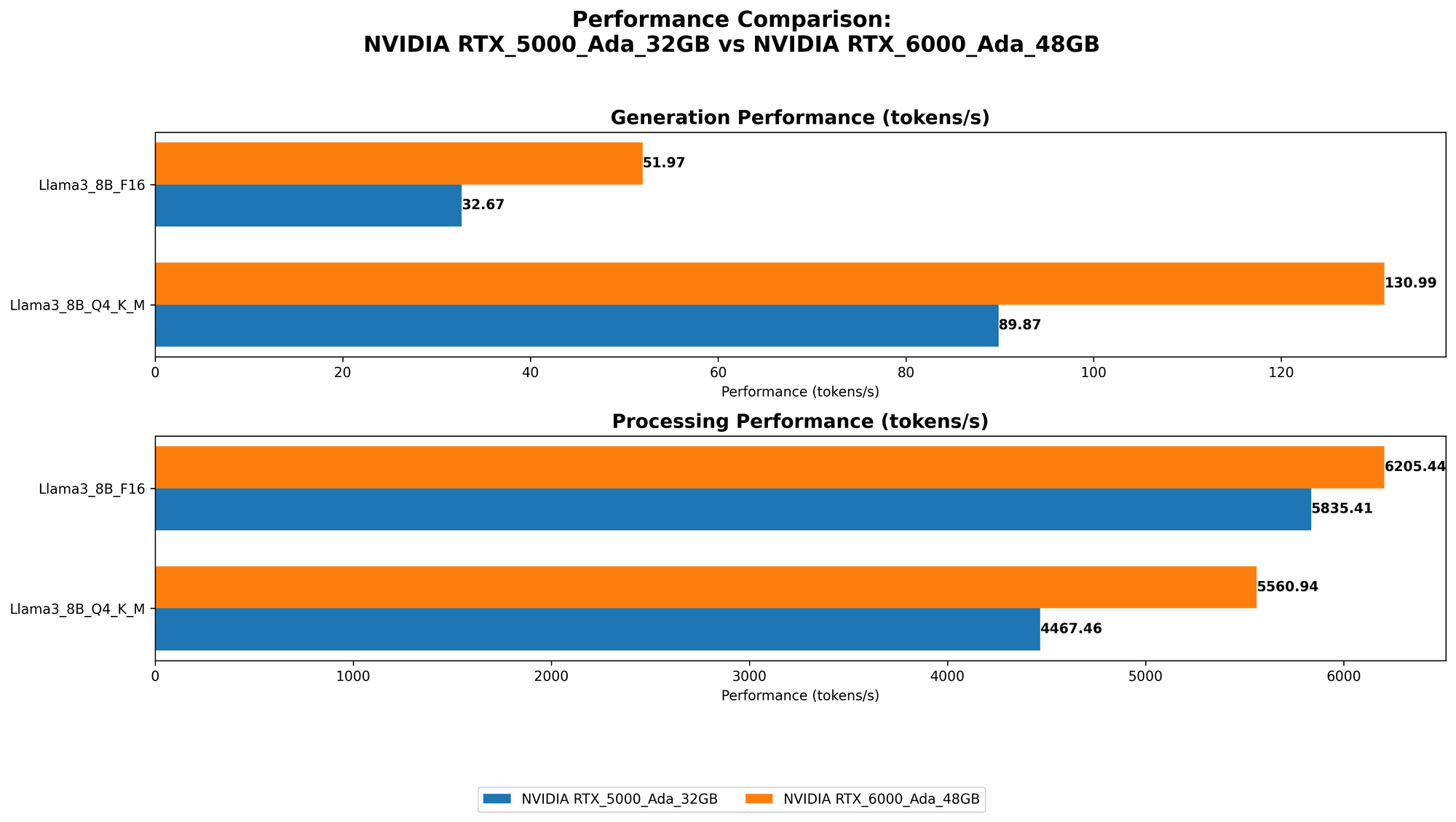

NVIDIA RTX 5000 Ada 32GB vs. NVIDIA RTX 6000 Ada 48GB: Token Generation

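In the available benchmark data, the RTX 6000 Ada 48GB generates tokens faster than the RTX 5000 Ada 32GB for Llama 3 8B. For Llama 3 70B, only the RTX 6000 Ada 48GB has a result, so no direct comparison is possible for that model. All figures below are for Q4KM mode.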

For Llama 3 8B:

- RTX 6000 Ada 48GB: 130.99 tokens per second in Q4KM.

- RTX 5000 Ada 32GB: 89.87 tokens per second in Q4KM.

This means the RTX 6000 Ada 48GB is about 46% faster than the RTX 5000 Ada 32GB in generating tokens for the Llama 3 8B model.

For Llama 3 70B:

- RTX 6000 Ada 48GB: 18.36 tokens per second in Q4KM.

- RTX 5000 Ada 32GB: Data not available.

Unfortunately, we don't have data for the RTX 5000 Ada 32GB's performance with Llama 3 70B in Q4KM mode, so the two cards cannot be compared directly here. What the data does show is that the RTX 6000 Ada 48GB can run the larger model at a usable generation speed.

NVIDIA RTX 5000 Ada 32GB vs. NVIDIA RTX 6000 Ada 48GB: Token Processing

In terms of token processing speed, the RTX 6000 Ada 48GB again shows superiority, but the difference compared to the RTX 5000 Ada 32GB is less dramatic.

For Llama 3 8B:

- RTX 6000 Ada 48GB: 5560.94 tokens per second in Q4KM.

- RTX 5000 Ada 32GB: 4467.46 tokens per second in Q4KM.

The RTX 6000 Ada 48GB is about 24% faster than the RTX 5000 Ada 32GB in processing tokens for the Llama 3 8B model.
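The percentages quoted for Llama 3 8B can be checked with a few lines of arithmetic. The sketch below only reuses the four throughput figures listed above; the helper name `percent_faster` is made up for this illustration.

```python
# Throughput figures (tokens per second, Llama 3 8B, Q4KM) copied from the lists above.
generation = {"RTX 6000 Ada 48GB": 130.99, "RTX 5000 Ada 32GB": 89.87}
processing = {"RTX 6000 Ada 48GB": 5560.94, "RTX 5000 Ada 32GB": 4467.46}


def percent_faster(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, as a percentage."""
    return (fast / slow - 1.0) * 100.0


for label, data in (("generation", generation), ("processing", processing)):
    gain = percent_faster(data["RTX 6000 Ada 48GB"], data["RTX 5000 Ada 32GB"])
    print(f"Token {label}: RTX 6000 Ada 48GB is {gain:.1f}% faster")
```

The gap is noticeably wider for generation than for processing, which matches the observation that the processing difference is less dramatic.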

For Llama 3 70B:

- RTX 6000 Ada 48GB: 547.03 tokens per second in Q4KM.

- RTX 5000 Ada 32GB: Data not available.

Again, we lack data for the RTX 5000 Ada 32GB's performance with Llama 3 70B in Q4KM mode. The RTX 6000 Ada 48GB result shows that it can handle the scale of this larger model, but it cannot be ranked against the RTX 5000 Ada 32GB without a matching measurement.

Practical Implications

The available data suggests that the RTX 6000 Ada 48GB is the better choice for larger LLMs like Llama 3 70B, the only model size here for which it alone has results, while the RTX 5000 Ada 32GB might be sufficient for smaller models like Llama 3 8B.

However, when considering the price difference, the RTX 5000 Ada 32GB might be a more cost-effective solution if you primarily work with smaller models and prioritize value for money.

7 Key Factors to Consider When Choosing Between NVIDIA RTX 5000 Ada 32GB and NVIDIA RTX 6000 Ada 48GB

1. Model Size and Complexity: Larger Models, Larger Demands

The size and complexity of the LLM you plan to run are key factors in determining the optimal hardware. Larger models with billions of parameters often require more memory and compute resources. This is where the RTX 6000 Ada 48GB shines with its greater memory capacity.

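Example: Even with 4-bit quantization, the weights of a 70B parameter model take up roughly 40 GB, which is more than the RTX 5000 Ada 32GB can hold. The model would have to be partly offloaded to system memory, which slows inference considerably, or it might not run at all.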

The RTX 6000 Ada 48GB provides the additional memory and compute power necessary to handle the demands of larger, more complex models.

2. Quantization: Balancing Performance and Resource Consumption

Quantization is a technique used to reduce the size of LLM models by representing weights using fewer bits. It's like storing data in a smaller suitcase. It reduces memory requirements and usually speeds up inference, but it can also reduce model accuracy.

- Q4KM: This is a 4-bit quantization scheme (written Q4_K_M in llama.cpp) in which most model weights are stored in roughly 4 bits each, grouped into blocks that share scaling factors. The "M" denotes the medium variant, which keeps some tensors at higher precision to limit accuracy loss.

- F16: This stores weights as 16-bit floating-point numbers. It is the unquantized half-precision baseline rather than a quantization scheme, so it preserves accuracy but needs more than three times the memory of Q4KM.

For Llama 3 8B, the RTX 6000 Ada 48GB outperforms the RTX 5000 Ada 32GB in both Q4KM mode and F16 mode, so its lead does not depend on the weight format.
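To make the idea of storing weights in fewer bits concrete, here is a minimal sketch of symmetric 4-bit quantization applied to one small block of weights. It is a toy illustration only: it is not the actual Q4KM format, and the function names and sample weights are invented for this example.

```python
def quantize_block(weights, bits=4):
    """Symmetric round-to-nearest quantization of one block of floats.

    Returns a shared scale and one small signed integer code per weight.
    """
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit codes
    scale = max(abs(w) for w in weights) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Rebuild approximate float weights from the scale and integer codes."""
    return [scale * c for c in codes]


# Made-up sample weights, chosen only to exercise the functions.
block = [0.12, -0.53, 0.98, -0.07, 0.33, -0.81, 0.45, 0.02]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("integer codes:", codes)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the round-trip error is the accuracy cost that quantization trades for the smaller size.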

Practical Implications

If you are working with a sensitive application where accuracy is paramount, you might prioritize F16 models. However, if you need to optimize for speed and resource constraints, Q4KM might be a better choice.

3. Memory Capacity: More VRAM, More Room

The RTX 6000 Ada 48GB boasts 48GB of dedicated GPU memory compared to the RTX 5000 Ada 32GB's 32GB. It's like having a bigger storage drive for your AI models. This difference is critical when working with large models.

Example: Imagine trying to run a 70B parameter LLM on a device with limited memory; it's like trying to fit a large bookcase into a small apartment. The lack of memory can seriously impact performance and might even prevent the model from running at all.

The RTX 6000 Ada 48GB offers the spaciousness needed to accommodate larger and more complex models, while the 32GB capacity of the RTX 5000 Ada 32GB might be sufficient for smaller models.
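A rough way to check whether a model fits is to multiply the parameter count by the bits stored per weight. The sketch below does that for the two model sizes discussed here. The 4.85 bits-per-weight figure for Q4KM is an assumed approximation, the parameter counts are the nominal 8B and 70B, and the estimate covers the weights only, so the KV cache and runtime buffers need extra headroom on top.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (10**9 bytes)."""
    return params_billions * bits_per_weight / 8.0


# Assumed average bits per weight; real model files vary by tool and model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}
VRAM_GB = {"RTX 5000 Ada 32GB": 32, "RTX 6000 Ada 48GB": 48}

for params in (8, 70):
    for fmt, bits in BITS_PER_WEIGHT.items():
        need = weight_memory_gb(params, bits)
        fits = [gpu for gpu, vram in VRAM_GB.items() if need < vram]
        verdict = ", ".join(fits) if fits else "neither card"
        print(f"Llama 3 {params}B {fmt}: ~{need:.1f} GB of weights, fits on {verdict}")
```

Under these assumptions, a 70B model in Q4KM lands between the two cards' capacities, which is consistent with the benchmark data above having a 70B result only for the RTX 6000 Ada 48GB.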

4. Compute Power: More Power, Faster Results

The RTX 6000 Ada 48GB has more CUDA cores and Tensor Cores than the RTX 5000 Ada 32GB, which gives it higher peak compute throughput. Tensor Cores are specialized units designed to accelerate matrix multiplications, a fundamental operation in AI models. It's like having a faster processor for your AI tasks.

Practical Implications

The RTX 6000 Ada 48GB can handle more complex and demanding computations, leading to faster training and inference times. For tasks requiring high-performance computing, the RTX 6000 Ada 48GB is the clear winner.

5. Power Consumption: More Power, More Power Consumption

With its greater compute power and memory capacity, the RTX 6000 Ada 48GB naturally consumes more power than the RTX 5000 Ada 32GB. It's like having a bigger engine that guzzles more fuel.

Practical Implications

If you are concerned about energy efficiency and operating costs, the RTX 5000 Ada 32GB might be a better choice. However, if you prioritize performance over power consumption, the RTX 6000 Ada 48GB is the way to go.

6. Price: Value for Money

The RTX 6000 Ada 48GB is significantly more expensive than the RTX 5000 Ada 32GB. It's like deciding between a luxury car and a practical, everyday vehicle.

Practical Implications

The RTX 5000 Ada 32GB can be a more cost-effective solution if you are on a budget or primarily work with smaller models. However, if you need the extra power and memory of the RTX 6000 Ada 48GB, the higher price might be justified for your specific use cases.
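One way to weigh price against speed is throughput per unit of price. The sketch below uses the Llama 3 8B generation figures from the performance section and derives the price ratio at which both cards offer the same value. The example prices are hypothetical relative units, not real quotes, and the helper names are invented for this illustration.

```python
# Llama 3 8B Q4KM generation speed (tokens per second) from the performance section.
GEN_TPS = {"RTX 5000 Ada 32GB": 89.87, "RTX 6000 Ada 48GB": 130.99}


def tokens_per_second_per_price(tps: float, price: float) -> float:
    """Generation throughput obtained per unit of purchase price."""
    if price <= 0:
        raise ValueError("price must be positive")
    return tps / price


def better_value(prices: dict) -> str:
    """Return the GPU with the highest generation throughput per unit of price."""
    return max(
        GEN_TPS,
        key=lambda gpu: tokens_per_second_per_price(GEN_TPS[gpu], prices[gpu]),
    )


# The RTX 6000 Ada 48GB is the better value only below this price ratio.
break_even = GEN_TPS["RTX 6000 Ada 48GB"] / GEN_TPS["RTX 5000 Ada 32GB"]
print(f"break-even price ratio: {break_even:.2f}")

# Hypothetical relative prices (RTX 5000 Ada = 1.0); substitute real quotes.
example_prices = {"RTX 5000 Ada 32GB": 1.0, "RTX 6000 Ada 48GB": 1.7}
print("better value at these prices:", better_value(example_prices))
```

This comparison only applies to models that fit on both cards. If your model needs more than 32GB, the extra memory matters more than throughput per unit of price.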

7. Your Specific Needs: Tailoring Your Choice

The best GPU for you ultimately depends on your specific needs and the tasks you plan to perform.

- If you are working with small to medium-sized LLM models and prioritizing cost-effectiveness and energy efficiency, the RTX 5000 Ada 32GB might be a suitable choice.

- If you are working with larger LLMs, prioritizing performance and memory capacity, the RTX 6000 Ada 48GB is likely the better option.

Conclusion

Choosing between the NVIDIA RTX 5000 Ada 32GB and RTX 6000 Ada 48GB involves a careful assessment of your requirements and priorities. A combination of factors like model size, quantization methods, memory capacity, and compute power needs to be considered to make the optimal decision.

The RTX 6000 Ada 48GB emerges as a powerful solution for handling larger, more complex LLM models, while the RTX 5000 Ada 32GB offers a more affordable option for smaller models and those prioritizing energy efficiency. Ultimately, the choice is yours, and now you have the knowledge to make an informed decision!

FAQ

What are some other popular GPUs for running AI models?

Besides the RTX 5000 Ada 32GB and RTX 6000 Ada 48GB, other popular GPUs for AI include the NVIDIA A100, A40, and H100.

How can I choose the right GPU for my specific LLM?

Consider the size of the model, the quantization method you plan to use, the memory requirements, and the compute power needed to handle the training and inference tasks.

What is the difference between token generation and token processing?

Token generation is the process of producing new output tokens (words or sub-words) one at a time, each depending on the tokens before it. Token processing, often called prompt processing, is the step where the LLM reads the input tokens, which it can handle in parallel; this is why the processing speeds are far higher than the generation speeds. Think of it as reading and writing a story: "processing" is like reading the prompt, while "generating" is like writing the continuation.
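The two speeds combine into a rough estimate of how long one request takes. The sketch below uses the Llama 3 8B Q4KM figures from the performance section. The request size of 2,000 prompt tokens and 500 output tokens is a made-up example, and the model assumes throughput stays constant regardless of context length, which is a simplification.

```python
def request_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate time to read the prompt plus time to write the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 8B Q4KM speeds (tokens per second) from the performance section.
SPEEDS = {
    "RTX 6000 Ada 48GB": {"processing": 5560.94, "generation": 130.99},
    "RTX 5000 Ada 32GB": {"processing": 4467.46, "generation": 89.87},
}

# Hypothetical request size, chosen only for illustration.
PROMPT_TOKENS, OUTPUT_TOKENS = 2000, 500

estimates = {
    gpu: request_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, s["processing"], s["generation"])
    for gpu, s in SPEEDS.items()
}
for gpu, seconds in estimates.items():
    print(f"{gpu}: about {seconds:.1f} s per request")
```

For a request like this, generation dominates the total time, so the generation speed is usually the figure to watch for interactive use.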

What is the benefit of using quantization?

Quantization can significantly reduce the size of LLMs, which lowers memory requirements and often speeds up inference, at the cost of some accuracy. It's like compressing a file to make it smaller and faster to download.

Keywords

NVIDIA RTX 5000 Ada 32GB, NVIDIA RTX 6000 Ada 48GB, LLM, AI, Large Language Model, GPU, Token Generation, Token Processing, Quantization, Q4KM, F16, Memory Capacity, Compute Power