7 Key Factors to Consider When Choosing Between NVIDIA RTX 5000 Ada 32GB and NVIDIA 4090 24GB x2 for AI

Introduction

The world of artificial intelligence (AI) is rapidly evolving, with large language models (LLMs) leading the charge. LLMs are powerful algorithms that can understand and generate human-like text, making them incredibly useful for a wide range of applications, from chatbot development to content creation and even code generation. However, running these models on your own hardware can be challenging, especially when dealing with the enormous computational resources they require.

Choosing the right hardware for your LLM projects is crucial for achieving optimal performance and keeping costs down. This article will delve into the battle of the titans: NVIDIA RTX 5000 Ada 32GB vs. NVIDIA 4090 24GB x2. We will explore their key features and benchmark results to help you decide which option best suits your needs.

Comparing NVIDIA RTX 5000 Ada 32GB and NVIDIA 4090 24GB x2 for LLM Performance

Understanding the Battleground

Let's dive into the technical details of our contestants:

- NVIDIA RTX 5000 Ada 32GB: This card boasts an impressive 32GB of GDDR6 memory, designed to handle the demands of modern AI workloads. It's a solid choice for single-GPU setups.

- NVIDIA 4090 24GB x2: This setup pairs two 4090 cards, each with 24GB of GDDR6X memory, for 48GB in total. The memory is not pooled into one block; a model is split across the two cards, which is enough to run a 4-bit 70B model in the benchmarks below.

Performance Analysis: Unveiling the Speed Demons

To compare these titans, we'll analyze their performance across different LLMs and configurations. We'll use benchmark results based on llama.cpp (a popular open-source LLM inference engine), focusing on two key metrics:

- Token Generation: The speed at which the model generates text, measured in tokens per second. Think of each token as a word or a part of a word.

- Processing: The speed at which the model reads the input prompt before it starts generating, also measured in tokens per second. This is often called prompt processing.
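
Both metrics are simply a token count divided by elapsed time for one phase of a request. A minimal sketch, assuming hypothetical timings that are not taken from the benchmarks in this article:

```python
def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Throughput for one phase (prompt processing or token generation)."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


# Hypothetical timings for a single request (illustrative values only).
processing_rate = tokens_per_second(token_count=512, elapsed_seconds=0.125)
generation_rate = tokens_per_second(token_count=128, elapsed_seconds=1.6)

print(f"processing: {processing_rate:.0f} tokens/s")
print(f"generation: {generation_rate:.0f} tokens/s")
```

Processing rates are usually far higher than generation rates because the whole prompt can be handled in parallel, while output tokens are produced one at a time.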

Benchmark Results: A Showdown of Numbers

Let's break down the benchmark results for various LLMs and configurations. N/A means no benchmark result is available for that combination.

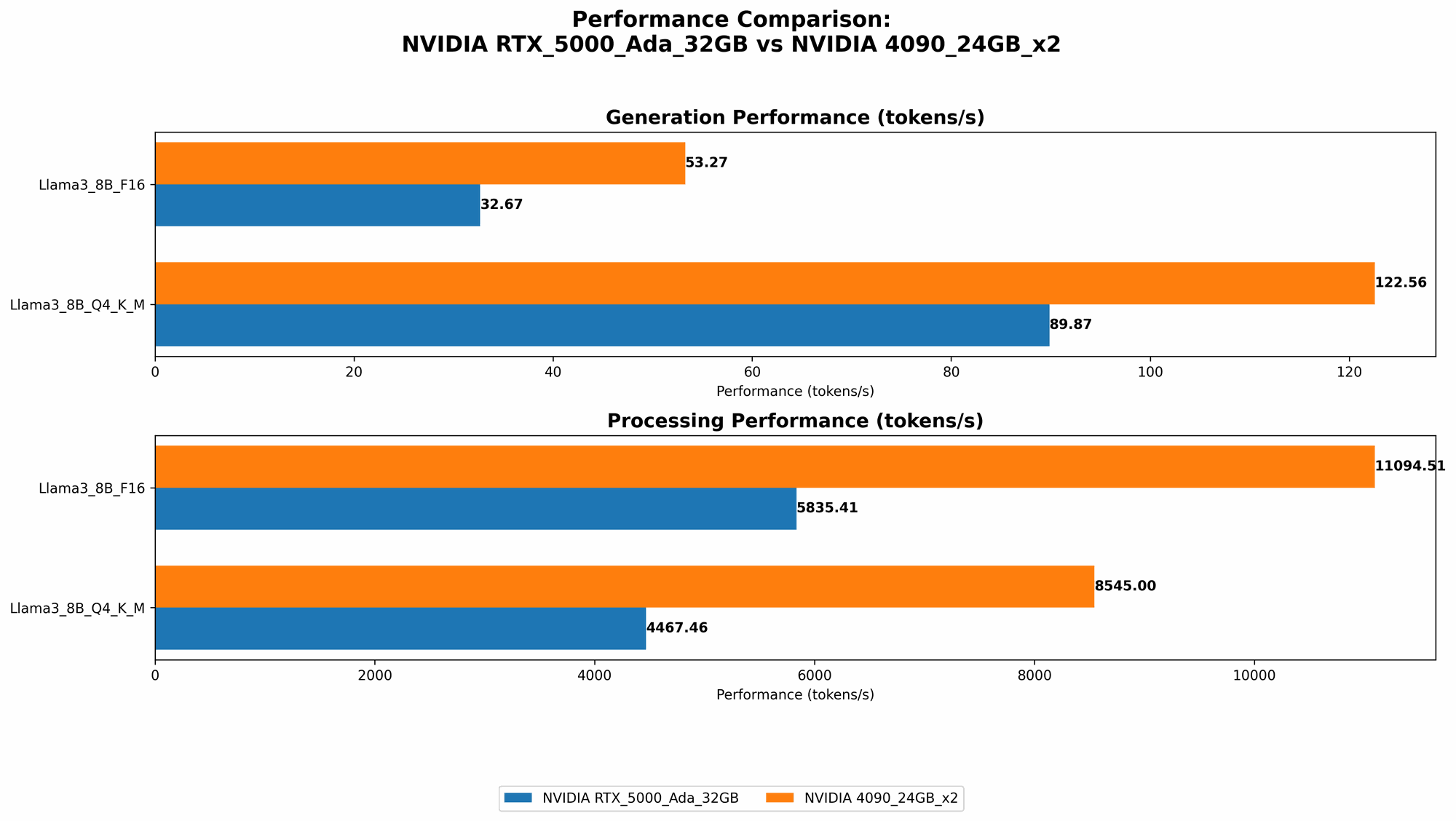

Llama 3 8B Model:

| Device | Configuration | Tokens/second (Generation) | Tokens/second (Processing) |
|---|---|---|---|
| NVIDIA RTX 5000 Ada 32GB | Q4_K_M | 89.87 | 4467.46 |
| NVIDIA RTX 5000 Ada 32GB | F16 | 32.67 | 5835.41 |
| NVIDIA 4090 24GB x2 | Q4_K_M | 122.56 | 8545.0 |
| NVIDIA 4090 24GB x2 | F16 | 53.27 | 11094.51 |

Llama 3 70B Model:

| Device | Configuration | Tokens/second (Generation) | Tokens/second (Processing) |
|---|---|---|---|
| NVIDIA RTX 5000 Ada 32GB | Q4_K_M | N/A | N/A |
| NVIDIA RTX 5000 Ada 32GB | F16 | N/A | N/A |
| NVIDIA 4090 24GB x2 | Q4_K_M | 19.06 | 905.38 |
| NVIDIA 4090 24GB x2 | F16 | N/A | N/A |

Key Insights:

- Processing Power: On Llama 3 8B, the 4090 x2 setup processes prompts about 1.9 times faster than the RTX 5000 Ada 32GB at both Q4_K_M and F16. No 70B comparison is possible, because only the 4090 x2 has a 70B result.

- Token Generation: The 4090 x2 setup also generates faster on the 8B model, by about 1.4 times at Q4_K_M and 1.6 times at F16.

- Q4_K_M vs. F16: Quantization, a technique that reduces memory requirements, helps generation the most: Q4_K_M generates tokens 2.3 to 2.8 times faster than F16 on both setups. Prompt processing goes the other way, with F16 about 30% faster than Q4_K_M. It's like compressing your model without sacrificing too much accuracy.
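
The ratios behind these insights can be recomputed directly from the Llama 3 8B table. The sketch below copies the table values into a dictionary; the helper name `speedup` is chosen here for illustration only.

```python
# (generation tokens/s, processing tokens/s) copied from the Llama 3 8B table.
llama3_8b = {
    "RTX 5000 Ada 32GB": {"Q4_K_M": (89.87, 4467.46), "F16": (32.67, 5835.41)},
    "4090 24GB x2": {"Q4_K_M": (122.56, 8545.0), "F16": (53.27, 11094.51)},
}
GENERATION, PROCESSING = 0, 1


def speedup(config: str, metric: int) -> float:
    """How many times faster the 4090 x2 is than the RTX 5000 Ada."""
    single = llama3_8b["RTX 5000 Ada 32GB"][config][metric]
    dual = llama3_8b["4090 24GB x2"][config][metric]
    return dual / single


for config in ("Q4_K_M", "F16"):
    print(
        f"{config}: generation x{speedup(config, GENERATION):.2f}, "
        f"processing x{speedup(config, PROCESSING):.2f}"
    )
```

The processing gap (about 1.9x) is wider than the generation gap (about 1.4x to 1.6x), so the second GPU pays off most on long prompts.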

Unveiling the Strengths and Weaknesses

NVIDIA RTX 5000 Ada 32GB: The Single-GPU Warrior

Strengths:

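- Cost-Effective: It can be the more affordable option compared to the dual 4090 setup once the larger power supply, cooling, and motherboard needed for two cards are counted. GPU prices shift often, so compare current pricing for both.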

- Adequate Memory: With 32GB of memory, it can handle smaller LLMs like the 8B Llama 3.

- Power Efficiency: It draws less power compared to the 4090 x2 setup.

Weaknesses:

- Limited Scalability: It's not suited to larger LLMs like the 70B Llama 3. Even at Q4_K_M, a 70B model needs roughly 40GB for its weights alone, more than the card's 32GB, and the benchmarks include no 70B result for it.

- Performance Ceiling: On the 8B model it generates tokens 27% to 39% slower than the 4090 x2 and processes prompts only about half as fast.

NVIDIA 4090 24GB x2: The Powerhouse

Strengths:

- Exceptional Performance: It delivers the highest performance for both token generation and processing across all models tested.

- Scalability for Large LLMs: Its 48GB of combined memory (24GB per card) is enough to run Llama 3 70B at Q4_K_M. It is not unlimited, though: 70B at F16 needs roughly 140GB and has no result on either setup.

- Multi-GPU Benefits: llama.cpp can spread a model's layers across both cards, so a model that is too large for one GPU can still run entirely in GPU memory.
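
To make the multi-GPU point concrete, here is a minimal sketch of dividing a model's layers between cards in proportion to their memory. It is an illustration only, not llama.cpp's actual placement logic (llama.cpp exposes options such as `--tensor-split` for controlling the proportions). The function name `split_layers` is hypothetical, and the layer count of 80 is the number of transformer layers in Llama 3 70B.

```python
def split_layers(n_layers: int, vram_gb: list[float]) -> list[int]:
    """Assign layers to GPUs in proportion to each GPU's memory."""
    total = sum(vram_gb)
    shares = [n_layers * v / total for v in vram_gb]
    counts = [int(s) for s in shares]  # round every share down first
    # Give leftover layers to the GPUs with the largest fractional remainder.
    leftover = n_layers - sum(counts)
    by_remainder = sorted(
        range(len(vram_gb)), key=lambda i: shares[i] - counts[i], reverse=True
    )
    for i in by_remainder[:leftover]:
        counts[i] += 1
    return counts


print(split_layers(80, [24, 24]))  # two identical 24GB cards
print(split_layers(80, [32, 24]))  # a hypothetical mixed pair
```

With two identical cards the split is even, which is the simplest case to configure and balance.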

Weaknesses:

- High Cost: It's significantly more expensive than the RTX 5000 Ada 32GB.

- Power Consumption: Its dual GPUs draw considerable power, which can impact energy bills.

- Complexity: Setting up a multi-GPU system requires more technical expertise compared to a single-GPU setup.
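
Power draw can be put next to throughput as a rough energy-per-token figure. The sketch below assumes nominal board power of 250 W for the RTX 5000 Ada and 450 W per 4090; these are assumptions based on the cards' published ratings, not measurements from the benchmarks, and real draw during inference is usually lower. Treat the results as upper bounds.

```python
# Assumed nominal board power in watts (not measured during the benchmarks).
BOARD_POWER_W = {"RTX 5000 Ada 32GB": 250, "4090 24GB x2": 2 * 450}

# Llama 3 8B Q4_K_M generation speed in tokens/s, from the benchmark table.
GENERATION_TPS = {"RTX 5000 Ada 32GB": 89.87, "4090 24GB x2": 122.56}


def joules_per_token(device: str) -> float:
    """Upper-bound energy per generated token: watts / (tokens per second)."""
    return BOARD_POWER_W[device] / GENERATION_TPS[device]


for device in BOARD_POWER_W:
    print(f"{device}: up to {joules_per_token(device):.2f} J per token")
```

Under these assumptions the dual 4090 setup is faster but spends more than twice the energy per generated token, which matters for always-on deployments.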

Practical Recommendations: Choosing Your Champion

- Budget-Conscious Developers: The RTX 5000 Ada 32GB is a solid choice for enthusiasts and developers with limited budgets. It offers good performance for smaller LLM models and can be a practical option for experimenting with different models and configurations.

- Large LLM Enthusiasts: The 4090 x2 setup is the way to go for those who want the best performance of the two, regardless of expense. It was the only setup here with a Llama 3 70B result (at Q4_K_M), making it a compelling choice for research, development, and production environments.

- Performance-Focused Applications: If your project emphasizes speed and efficiency, the 4090 x2 setup is the clear victor.

- Cost-Sensitive Applications: The RTX 5000 Ada 32GB presents a reasonable balance between performance and cost, particularly for projects that don't require the immense processing capabilities of the 4090 x2.

Beyond the Numbers: Understanding the Technical Nuances

Quantization: The Magic of Compression

Quantization stores a model's weights at lower numerical precision, which shrinks the model without sacrificing too much accuracy. It's like compressing your model to make it lighter and faster. Think of it as fitting a whole library's worth of books into a tiny backpack!
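
The idea can be shown with a toy example: map a small block of weights to 4-bit integers plus one shared scale, then map them back. This is a deliberately simplified symmetric scheme for illustration, not the format llama.cpp uses, and the weight values are made up.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit codes in [-8, 7] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.05, 0.64]  # made-up values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs 4 bits instead of 16, at the cost of a rounding error of at most half the scale per weight.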

Q4_K_M, as used in the benchmarks, is one of llama.cpp's 4-bit "k-quant" formats; the M marks the medium variant, which trades a little extra size for better accuracy than the small (S) variant. Storing weights in about 4 bits instead of 16 cuts the memory footprint to under a third, and since token generation is largely limited by how fast weights can be read from memory, it also speeds up generation. It's like swapping in a smaller, more efficient engine without giving up much speed.
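
A back-of-the-envelope estimate shows why quantization decides which models fit on which setup. The sketch assumes rounded parameter counts (8 and 70 billion) and about 4.85 bits per weight for Q4_K_M, which is an approximation. It counts weights only and ignores the KV cache and runtime overhead, so real requirements are higher.

```python
# Assumptions: rounded parameter counts and approximate bits per weight.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
VRAM_GB = {"RTX 5000 Ada 32GB": 32, "4090 24GB x2": 48}


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for model, n_params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(n_params, bits)
        fits = [name for name, vram in VRAM_GB.items() if size < vram]
        print(f"{model} {fmt}: ~{size:.1f} GB, fits on: {fits or 'neither'}")
```

The estimate lines up with the N/A entries in the 70B table: at Q4_K_M the 70B weights exceed 32GB but stay under 48GB, and at F16 they exceed both.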

Fine-Tuning: Tailoring Your Model for Success

Fine-tuning an LLM means training it further on a specific dataset to adapt its behavior to a particular task, like generating code, writing in a particular style, or answering domain-specific questions. It's like giving your LLM a specialized training program to become a master of its craft.

Token Generation Speed: The Words Flow Like a River

Token generation speed is crucial for real-time applications that require quick responses, like chatbots and interactive storytelling. The more tokens generated per second, the smoother and more responsive your system will be. Imagine a chatbot that can keep up with your rapid-fire questions and deliver insightful responses.
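
To see what these rates mean for a user, a response time can be estimated as prompt tokens divided by processing speed plus output tokens divided by generation speed. The sketch uses the Llama 3 8B Q4_K_M figures from the benchmark tables and a hypothetical request of 1,000 prompt tokens and 200 output tokens. It ignores model loading, sampling overhead, and network latency.

```python
def response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimated time to read the prompt and then generate the full reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 8B Q4_K_M rates (tokens/s) from the benchmark tables.
rates = {
    "RTX 5000 Ada 32GB": {"processing_tps": 4467.46, "generation_tps": 89.87},
    "4090 24GB x2": {"processing_tps": 8545.0, "generation_tps": 122.56},
}

# Hypothetical request: 1,000 prompt tokens in, 200 tokens out.
for device, r in rates.items():
    seconds = response_seconds(1000, 200, **r)
    print(f"{device}: about {seconds:.1f} s per reply")
```

For a request of this shape, generation accounts for most of the wait on both setups (roughly 2.4 s versus 1.7 s in total), which is why generation speed matters most for chat-style workloads.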

FAQ: Clearing the Air

What are the key differences between the RTX 5000 Ada 32GB and the 4090 24GB x2?

The RTX 5000 Ada 32GB is a single workstation card with 32GB of memory that is simpler to set up and draws less power, while the 4090 24GB x2 pairs two GPUs with 24GB each and was faster in every benchmark above.

Which device is better suited for larger LLMs?

The NVIDIA 4090 24GB x2 is the clear winner for larger LLMs: its 48GB of combined memory ran Llama 3 70B at Q4_K_M, for which the RTX 5000 Ada 32GB has no result.

Does quantization affect the performance of LLMs?

Yes. Quantization reduces the model's memory footprint, and in the benchmarks above Q4_K_M generated tokens 2.3 to 2.8 times faster than F16. Prompt processing was faster at F16, and quantization costs some accuracy, so it's a trade-off rather than a free win.

What are the key factors to consider when choosing an LLM device?

The key factors include your budget, the size of the LLMs you plan to run, your desired performance levels, and your technical expertise.
