7 Key Factors to Consider When Choosing Between NVIDIA RTX 4000 Ada 20GB and NVIDIA A100 SXM 80GB for AI

Introduction

The world of large language models (LLMs) is exploding, offering unprecedented capabilities for text generation, translation, and other tasks. But running these powerful models locally requires serious hardware, and choosing the right GPU can make all the difference. Two popular contenders for AI workloads are the NVIDIA RTX 4000 Ada 20GB and the NVIDIA A100 SXM 80GB.

This article dives deep into the performance and characteristics of these two GPUs to help you decide which one best suits your LLM needs. We'll analyze their strengths and weaknesses, compare their inference speed on Llama 3 8B and Llama 3 70B, and walk through the key factors to consider. No more guessing: let's break down the facts!

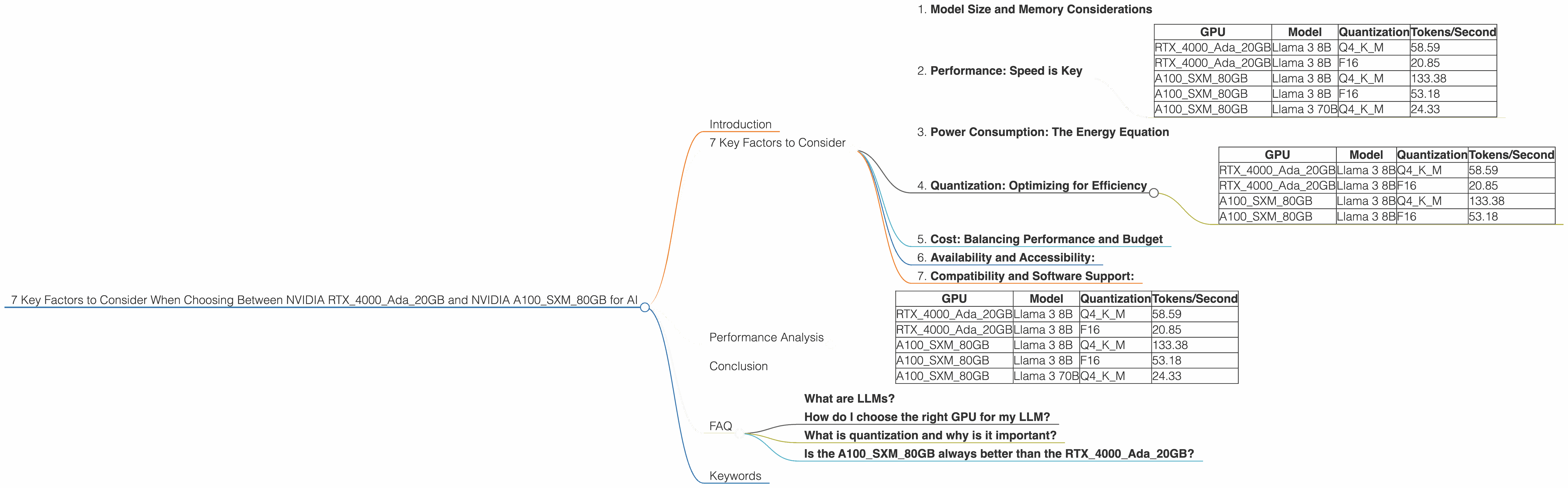

7 Key Factors to Consider

1. Model Size and Memory Considerations

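Let's be honest: LLMs are like hungry hippos, constantly gobbling up memory. Think of the NVIDIA RTX 4000 Ada 20GB as the snack-sized option, suited to smaller models. Its 20GB is enough to run a model like Llama 3 8B, even at F16 precision, where the weights alone take roughly 16GB; fine-tuning a model of that size on this card, however, is realistic only with parameter-efficient methods such as QLoRA. The A100 SXM 80GB, on the other hand, packs a whopping 80GB of memory, making it the heavyweight champion for larger, more complex models like Llama 3 70B, at least once they are quantized: at F16, a 70B model's weights alone need about 140GB.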

Example: If you're working with Llama 3 70B, the RTX 4000 Ada 20GB simply won't have enough memory, even for a 4-bit quantized version (roughly 40GB of weights), while the A100 SXM 80GB can comfortably hold that quantized model.
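To make the memory argument concrete, here is a back-of-the-envelope sketch. It estimates weight memory as parameters × bits per weight ÷ 8, using the nominal 8B and 70B parameter counts. The 4.85 bits/weight figure for Q4_K_M is an approximate average assumed for illustration, and the 10% headroom held back for the KV cache and runtime overhead is a hypothetical choice; real requirements grow with context length and batch size.

```python
# Back-of-the-envelope check: do a model's weights fit in GPU memory?
# Assumptions (illustrative, not measured):
#   - F16 stores 16 bits per weight; Q4_K_M averages roughly 4.85 bits per weight.
#   - Parameter counts are the nominal 8B / 70B.
#   - 10% of GPU memory is held back for KV cache, activations, and runtime overhead.

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
GPU_MEMORY_GB = {"RTX 4000 Ada 20GB": 20.0, "A100 SXM 80GB": 80.0}
MODELS_B_PARAMS = {"Llama 3 8B": 8.0, "Llama 3 70B": 70.0}


def weight_memory_gb(params_billions: float, quant: str) -> float:
    """Approximate weight size in GB (10^9 bytes): billions of params * bits / 8."""
    return params_billions * BITS_PER_WEIGHT[quant] / 8


def fits(params_billions: float, quant: str, gpu: str, headroom: float = 0.9) -> bool:
    """True if the weights fit in the usable fraction of the GPU's memory."""
    return weight_memory_gb(params_billions, quant) <= GPU_MEMORY_GB[gpu] * headroom


if __name__ == "__main__":
    for model, params in MODELS_B_PARAMS.items():
        for quant in BITS_PER_WEIGHT:
            size = weight_memory_gb(params, quant)
            verdicts = ", ".join(
                f"{gpu}: {'fits' if fits(params, quant, gpu) else 'too big'}"
                for gpu in GPU_MEMORY_GB
            )
            print(f"{model} {quant}: ~{size:.1f} GB -> {verdicts}")
```

Under these assumptions, Llama 3 8B fits on either card at both precisions, while Llama 3 70B fits only on the A100 SXM 80GB, and only when quantized.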

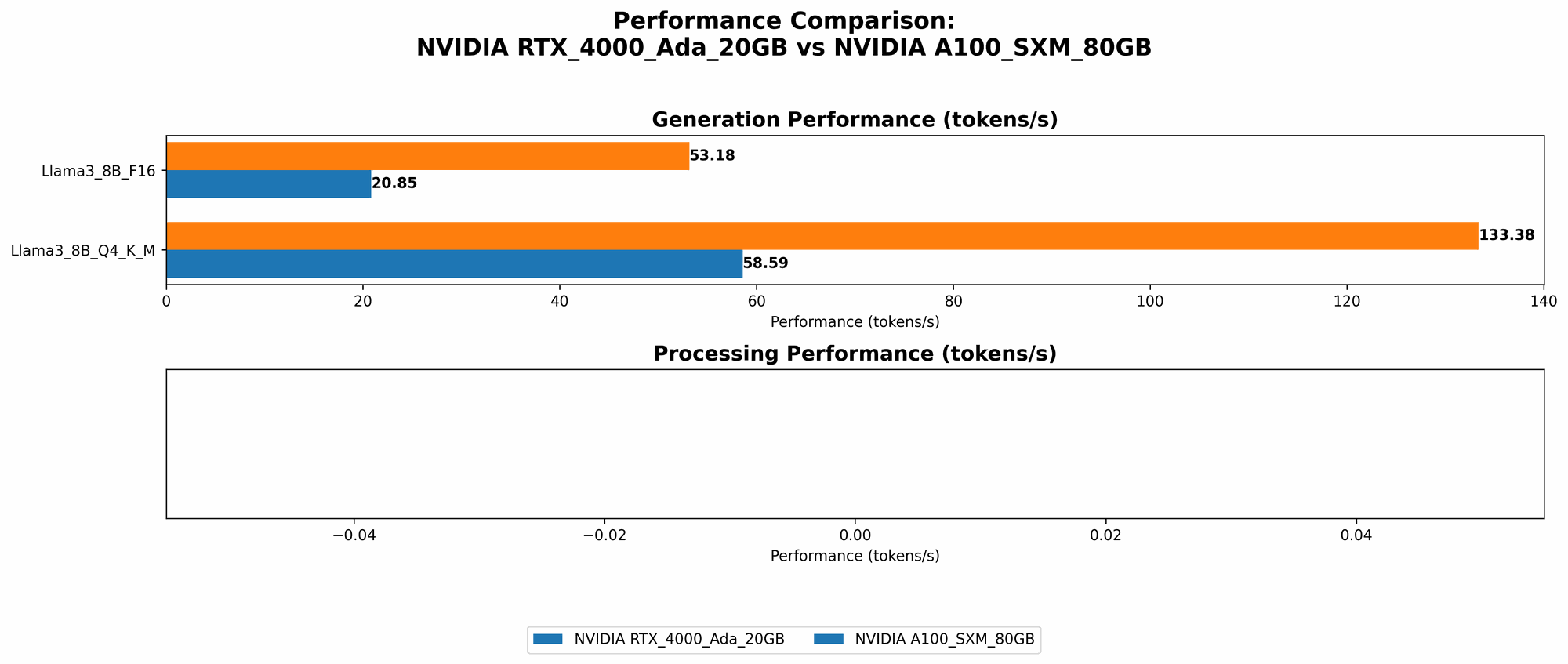

2. Performance: Speed is Key

Now let's talk about performance, the true measure of a GPU's power. In LLM inference, the RTX 4000 Ada 20GB and the A100 SXM 80GB are far apart in speed: the A100 SXM 80GB clearly outpaces the RTX 4000 Ada 20GB, and it is the only one of the two that can run the larger Llama 3 70B model at all.

Data:

| GPU | Model | Quantization | Tokens/Second |
|---|---|---|---|
| RTX 4000 Ada 20GB | Llama 3 8B | Q4_K_M | 58.59 |
| RTX 4000 Ada 20GB | Llama 3 8B | F16 | 20.85 |
| A100 SXM 80GB | Llama 3 8B | Q4_K_M | 133.38 |
| A100 SXM 80GB | Llama 3 8B | F16 | 53.18 |
| A100 SXM 80GB | Llama 3 70B | Q4_K_M | 24.33 |

Interpreting the data: The A100 SXM 80GB delivers far more tokens per second than the RTX 4000 Ada 20GB on Llama 3 8B at both quantization levels: about 2.3x faster with Q4_K_M (133.38 vs. 58.59 tokens/second) and about 2.6x faster with F16 (53.18 vs. 20.85). It is also the only one of the two that can run Llama 3 70B, where it still delivers a respectable 24.33 tokens/second.
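To put the table in everyday terms, the sketch below computes the A100's speedup over the RTX 4000 Ada 20GB and how long a reply would take at each benchmarked rate. It assumes the figures are steady generation rates and ignores prompt-processing time and warm-up; the 500-token reply length is a hypothetical example, not part of the benchmark.

```python
# Compare the benchmark figures from the table (tokens/second).
# Assumption: each figure is a steady generation rate; prompt processing is ignored.

BENCHMARKS = {
    ("RTX 4000 Ada 20GB", "Llama 3 8B", "Q4_K_M"): 58.59,
    ("RTX 4000 Ada 20GB", "Llama 3 8B", "F16"): 20.85,
    ("A100 SXM 80GB", "Llama 3 8B", "Q4_K_M"): 133.38,
    ("A100 SXM 80GB", "Llama 3 8B", "F16"): 53.18,
    ("A100 SXM 80GB", "Llama 3 70B", "Q4_K_M"): 24.33,
}


def speedup(model: str, quant: str) -> float:
    """How many times faster the A100 SXM 80GB is than the RTX 4000 Ada 20GB."""
    a100 = BENCHMARKS[("A100 SXM 80GB", model, quant)]
    rtx = BENCHMARKS[("RTX 4000 Ada 20GB", model, quant)]
    return a100 / rtx


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` tokens at a constant rate."""
    return tokens / tokens_per_second


if __name__ == "__main__":
    for quant in ("Q4_K_M", "F16"):
        print(f"Llama 3 8B {quant}: A100 is {speedup('Llama 3 8B', quant):.2f}x faster")
    for (gpu, model, quant), tps in BENCHMARKS.items():
        # 500 tokens is a hypothetical reply length chosen for illustration.
        print(f"{gpu} / {model} / {quant}: ~{seconds_for(500, tps):.1f} s for 500 tokens")
```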

Practical implications: If you prioritize speed and can afford the A100 SXM 80GB, it's a clear frontrunner. The RTX 4000 Ada 20GB is still a solid option for smaller models and projects where budget is a constraint.

3. Power Consumption: The Energy Equation

As with any powerhouse, performance comes at a price, literally. The A100 SXM 80GB consumes significantly more power than the RTX 4000 Ada 20GB (rated at up to 400 W versus 130 W), reflecting its larger chip and greater processing capabilities. This can be a crucial factor, especially if you're running your model on a tight power budget or are concerned about environmental impact.

Think of it this way: the A100 SXM 80GB is a super-powered sports car, fast but thirsty for fuel. The RTX 4000 Ada 20GB is a trusty sedan, getting you where you need to go on far less energy.

Practical implications: For most users, the RTX 4000 Ada 20GB offers a good balance between performance and energy efficiency. However, if you're running your models in a data center or have access to ample power, the A100 SXM 80GB's raw power might be worth the extra energy expenditure.
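One way to weigh speed against power is tokens generated per joule. The sketch below is a rough comparison under a loudly stated assumption: each card draws its full rated board power (130 W and 400 W) while generating, which is a worst case; actual draw during inference is often lower and should be measured (for example with nvidia-smi) before drawing firm conclusions. Throughput figures are the Llama 3 8B Q4_K_M numbers from the benchmark table.

```python
# Rough energy-efficiency comparison: tokens generated per joule.
# Assumption: each card draws its full rated board power while generating.
# Real draw is often lower; measure it before relying on these numbers.

RATED_POWER_W = {"RTX 4000 Ada 20GB": 130.0, "A100 SXM 80GB": 400.0}
LLAMA3_8B_Q4_TOKENS_PER_S = {"RTX 4000 Ada 20GB": 58.59, "A100 SXM 80GB": 133.38}


def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """(tokens / s) divided by (joules / s) gives tokens per joule."""
    return tokens_per_second / watts


def joules_for(tokens: int, tokens_per_second: float, watts: float) -> float:
    """Energy needed to generate `tokens` tokens at a constant power draw."""
    return tokens / tokens_per_joule(tokens_per_second, watts)


if __name__ == "__main__":
    for gpu, tps in LLAMA3_8B_Q4_TOKENS_PER_S.items():
        watts = RATED_POWER_W[gpu]
        print(f"{gpu}: {tokens_per_joule(tps, watts):.3f} tokens/J, "
              f"~{joules_for(1000, tps, watts):.0f} J per 1,000 tokens")
```

Under this worst-case assumption the RTX 4000 Ada 20GB comes out ahead (about 0.45 versus 0.33 tokens per joule), which matches the sedan-versus-sports-car picture; with measured power figures the gap could narrow or widen.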

4. Quantization: Optimizing for Efficiency

Quantization is like a compression technique for LLMs. It stores model weights at lower precision (for example, roughly 4 to 5 bits per weight with Q4_K_M instead of 16 bits with F16), shrinking the memory footprint and usually speeding up inference. Quantized models run on both the RTX 4000 Ada 20GB and the A100 SXM 80GB, and in the benchmarks below quantization improves throughput substantially on both.

Think of it like this: Quantization is like swapping a high-resolution image for a lower-resolution one. You might lose some visual detail, but the file size becomes much smaller, allowing it to load and display faster.
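To show what that "lower resolution" means for weights, here is a minimal sketch of block-wise 4-bit absmax quantization: each block of 32 weights shares one scale, and each weight is rounded to an integer between -7 and 7. This is a simplified scheme, closer in spirit to llama.cpp's basic Q4_0 format than to Q4_K_M, which uses a more elaborate layout; the block size and 16-bit scale are assumptions chosen for illustration.

```python
# Minimal block-wise 4-bit quantization sketch (symmetric "absmax" scaling).
# Simplified for illustration: NOT the exact Q4_K_M format used in the benchmarks.


def quantize_block(values: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to integers in [-qmax, qmax] that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    # round() picks the nearest integer; the clamp guards against float overshoot.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize_block(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the block's scale."""
    return [x * scale for x in q]


def quantize(weights: list[float], block_size: int = 32, bits: int = 4):
    """Split weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + block_size], bits)
            for i in range(0, len(weights), block_size)]


def bits_per_weight(block_size: int = 32, bits: int = 4, scale_bits: int = 16) -> float:
    """Storage cost per weight, counting the shared per-block scale."""
    return (block_size * bits + scale_bits) / block_size


if __name__ == "__main__":
    import random

    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(64)]
    restored = [w for q, s in quantize(weights) for w in dequantize_block(q, s)]
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"bits/weight: {bits_per_weight():.2f} (vs 16 for F16), max error: {max_err:.5f}")
```

Each restored weight lands within half a quantization step of the original, and storage drops to 4.5 bits per weight in this layout, which is the detail-for-size trade the image analogy describes.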

Data:

| GPU | Model | Quantization | Tokens/Second |
|---|---|---|---|
| RTX 4000 Ada 20GB | Llama 3 8B | Q4_K_M | 58.59 |
| RTX 4000 Ada 20GB | Llama 3 8B | F16 | 20.85 |
| A100 SXM 80GB | Llama 3 8B | Q4_K_M | 133.38 |
| A100 SXM 80GB | Llama 3 8B | F16 | 53.18 |

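Interpreting the data: The A100 SXM 80GB outperforms the RTX 4000 Ada 20GB at both quantization levels, and the absolute gap is widest with Q4_K_M (about 75 tokens/second, versus about 32 with F16). Quantization pays off on both cards: Q4_K_M runs about 2.8x faster than F16 on the RTX 4000 Ada 20GB (58.59 vs. 20.85 tokens/second) and about 2.5x faster on the A100 SXM 80GB (133.38 vs. 53.18).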

Practical implications: Quantization is a powerful optimization strategy, and both GPUs benefit from it. Even so, the A100 SXM 80GB stays well ahead of the RTX 4000 Ada 20GB, and only its 80GB of memory can hold a quantized Llama 3 70B.

5. Cost: Balancing Performance and Budget

The A100 SXM 80GB is the more expensive option, reflecting its advanced capabilities. The RTX 4000 Ada 20GB is a more budget-friendly alternative, particularly for smaller projects or users with limited financial resources.

Example: The A100 SXM 80GB can cost upwards of $10,000, while the RTX 4000 Ada 20GB can be purchased for a fraction of that price.

Practical implications: If budget is a major concern, the RTX 4000 Ada 20GB is a great choice. However, if performance and scalability are paramount, the higher price of the A100 SXM 80GB might be justified.
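A simple way to compare price against performance is dollars per token/second of throughput. The sketch below uses the article's $10,000 A100 figure (a lower bound, since the text says "upwards of") and the Llama 3 8B Q4_K_M benchmark numbers, then solves for the RTX 4000 Ada 20GB price at which both cards offer the same value. It considers card prices only; host systems, power, and cooling are ignored, and no RTX price is assumed.

```python
# Price-performance sketch: dollars per (token/second) of Llama 3 8B Q4_K_M throughput.
# The $10,000 A100 price is the article's "upwards of" figure, so treat it as a lower bound.
# Only card prices are considered; host systems, power, and cooling are ignored.

A100_PRICE_USD = 10_000.0
A100_TPS = 133.38  # Llama 3 8B, Q4_K_M
RTX_TPS = 58.59    # Llama 3 8B, Q4_K_M


def dollars_per_tps(price_usd: float, tokens_per_second: float) -> float:
    """Lower is better: what each token/second of throughput costs."""
    return price_usd / tokens_per_second


def break_even_price(ref_price_usd: float, ref_tps: float, other_tps: float) -> float:
    """Price at which the other card matches the reference card's dollars per token/second."""
    return ref_price_usd * other_tps / ref_tps


if __name__ == "__main__":
    limit = break_even_price(A100_PRICE_USD, A100_TPS, RTX_TPS)
    print(f"A100: ${dollars_per_tps(A100_PRICE_USD, A100_TPS):.2f} per token/s")
    print(f"The RTX 4000 Ada wins on price-performance below ~${limit:,.0f}")
```

With these inputs the break-even card price is about $4,390: below that, the RTX 4000 Ada 20GB buys more Llama 3 8B throughput per dollar, although the A100 SXM 80GB remains the only option of the two for Llama 3 70B.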

6. Availability and Accessibility

Although both cards are available, the A100 SXM 80GB is often harder to acquire due to its higher demand and limited production. The RTX 4000 Ada 20GB is more readily available, making it a more reliable option for those who need a GPU immediately.

Think of it like this: finding an A100 SXM 80GB is like trying to snag a limited-edition collectible. The RTX 4000 Ada 20GB is more like a standard-issue item, available at most major retailers.

Practical implications: If you need a GPU quickly, the RTX 4000 Ada 20GB is likely the easier option. However, if you're willing to wait, or can buy through server vendors or rent time from cloud providers, the A100 SXM 80GB becomes attainable.

7. Compatibility and Software Support

Both the RTX 4000 Ada 20GB and the A100 SXM 80GB run NVIDIA's CUDA platform and are supported by major deep learning frameworks like TensorFlow and PyTorch. Their mature software support makes for smooth integration and a seamless development experience.

Think of it like this: Both GPUs are like versatile actors, readily adapting to different roles in your AI project.

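Practical implications: On the software side, you can be confident that either card will play nicely with your existing tools, so the choice boils down to performance and budget. The one hardware caveat is form factor: the RTX 4000 Ada 20GB is a standard PCIe card, while the A100 SXM 80GB is an SXM module that requires a compatible server board (such as an NVIDIA HGX system) rather than a regular PCIe slot.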
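If you use PyTorch, a quick way to confirm what your framework actually sees is to query the CUDA device properties. The sketch below lists each visible GPU's name, memory, and compute capability (an A100 reports 8.0 and an Ada-generation card such as the RTX 4000 Ada reports 8.9); it returns an empty list when PyTorch is not installed or no CUDA device is available. The helper name `describe_gpus` is hypothetical.

```python
# Inspect the CUDA GPUs PyTorch can see: name, memory, and compute capability.
# Hypothetical helper; returns [] when PyTorch or a CUDA device is unavailable.


def describe_gpus() -> list[dict]:
    try:
        import torch
    except ImportError:
        return []  # PyTorch is not installed
    if not torch.cuda.is_available():
        return []  # no usable CUDA device or driver
    gpus = []
    for i in range(torch.cuda.device_count()):
        props = torch.cuda.get_device_properties(i)
        gpus.append({
            "index": i,
            "name": props.name,
            "memory_gb": props.total_memory / 1e9,  # bytes -> GB (10^9 bytes)
            "compute_capability": (props.major, props.minor),
        })
    return gpus


if __name__ == "__main__":
    found = describe_gpus()
    if not found:
        print("No CUDA GPU visible to PyTorch.")
    for gpu in found:
        major, minor = gpu["compute_capability"]
        print(f"[{gpu['index']}] {gpu['name']}: {gpu['memory_gb']:.1f} GB, "
              f"compute capability {major}.{minor}")
```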

Performance Analysis

Analysis:

The A100 SXM 80GB clearly outperforms the RTX 4000 Ada 20GB on Llama 3 8B, and it can also run Llama 3 70B (24.33 tokens/second at Q4_K_M), which the RTX 4000 Ada 20GB cannot fit in memory.

The RTX 4000 Ada 20GB offers respectable performance for smaller models like Llama 3 8B. Its more affordable price and lower power consumption make it an appealing option for users with budget constraints.

Quantization makes both GPUs substantially faster, but the A100 SXM 80GB stays ahead of the RTX 4000 Ada 20GB at every setting tested, with the widest absolute gap at Q4_K_M.

Practical Recommendations:

For large models (like Llama 3 70B) or users who prioritize raw speed: The A100 SXM 80GB is the clear winner, although its cost and power consumption might be a concern.

For smaller models (like Llama 3 8B) or users with limited budget and power: The RTX 4000 Ada 20GB strikes a good balance between performance and efficiency.

For data center environments or high-performance computing: The A100 SXM 80GB's exceptional performance might be worth its higher cost and power consumption.

Conclusion

Choosing between the NVIDIA RTX 4000 Ada 20GB and NVIDIA A100 SXM 80GB for your LLM needs is a decision based on factors like model size, performance requirements, budget, and power consumption. The A100 SXM 80GB is a powerhouse designed for larger models and demanding workloads, while the RTX 4000 Ada 20GB offers a more budget-friendly and energy-efficient option for smaller models.

The key is to understand your specific needs and choose the GPU that best aligns with your project's requirements. Don't let the technical jargon intimidate you; with this comprehensive guide, you can confidently select the perfect GPU for unleashing the full potential of your LLMs.

FAQ

What are LLMs?

LLMs, or large language models, are powerful AI models trained on vast amounts of text data. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

How do I choose the right GPU for my LLM?

Consider your model size, performance expectations, budget, and power consumption. Smaller models can run on the RTX 4000 Ada 20GB, while larger models might require the A100 SXM 80GB.

What is quantization and why is it important?

Quantization is a technique that reduces the size and memory footprint of a model by storing its weights at lower numerical precision. This usually makes the model faster to run and more efficient, particularly for large models.

Is the A100 SXM 80GB always better than the RTX 4000 Ada 20GB?

Not always! The A100 SXM 80GB is better for larger models and demanding workloads, but the RTX 4000 Ada 20GB offers a more affordable and energy-efficient option for beginners or users with limited resources.

Keywords

LLMs, Large Language Models, NVIDIA RTX 4000 Ada 20GB, NVIDIA A100 SXM 80GB, GPU, Performance, Memory, Tokens/Second, Quantization, Cost, Availability, Compatibility, Software Support, AI, Machine Learning, Deep Learning, Inference, Training, Llama 3 8B, Llama 3 70B, Q4_K_M, F16, TensorFlow, PyTorch, CUDA.