7 Key Factors to Consider When Choosing Between NVIDIA A40 48GB and NVIDIA A100 PCIe 80GB for AI

Introduction

The world of large language models (LLMs) is exploding, and with it comes the need for powerful hardware. This article dives into two titans of the GPU world, the NVIDIA A40 48GB and the NVIDIA A100 PCIe 80GB, comparing their performance on Llama 3 models. We'll analyze key factors like memory capacity, processing speed, and token generation rates to help you decide which GPU best suits your AI needs.

Imagine you're building a spaceship for a grand adventure. You have two options: a sleek ship with limited cargo space, or a bulkier ship boasting a massive cargo hold. Choosing the right ship depends on the length of your journey and the amount of supplies you need. The same principle applies when choosing between the A40 48GB and the A100 PCIe 80GB: each GPU has its strengths and weaknesses, and choosing wisely is crucial for your AI exploration.

Performance Comparison of A40 48GB and A100 PCIe 80GB for Llama 3 LLMs

Memory Capacity: A Crucial Factor

The first battleground is memory capacity. The A100 PCIe 80GB boasts a whopping 80 GB of HBM2e memory, while the A40 48GB offers 48 GB of GDDR6. This difference directly determines the size of LLMs you can run on each GPU.

Imagine your ship's cargo hold representing memory capacity. The A100 PCIe 80GB is like a giant freighter, capable of carrying immense payloads of data, while the A40 48GB is like a nimble cargo ship, perfect for smaller loads.

For smaller LLMs like Llama 3 8B (8 billion parameters), both GPUs are champions. At F16 the weights take about 16 GB, so they fit comfortably on either card. With Llama 3 70B (70 billion parameters), memory becomes the deciding factor. At F16 the weights alone need roughly 140 GB, more than either GPU holds, which is why the benchmarks below cover 70B only at Q4_K_M. The quantized model (roughly 40 GB) fits on both cards, but on the A40 48GB it leaves only a few gigabytes for the KV cache and working buffers, while the A100 PCIe 80GB has room to spare for longer contexts and larger batches.
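The sizing argument above comes down to simple arithmetic: parameters times bits per weight. The sketch below is a rough estimate, not a measurement. The 4.8 bits per weight used for Q4_K_M and the 4 GB overhead allowance are illustrative assumptions, and the function names are hypothetical.

```python
# Nominal parameter counts and GPU memory sizes from the article.
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
GPU_MEMORY_GB = {"A40 48GB": 48.0, "A100 PCIe 80GB": 80.0}

# ASSUMPTION: average bits stored per weight. 16 is exact for F16;
# 4.8 is an illustrative figure for a 4-bit K-quant, not a measured value.
ASSUMED_BITS = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(model: str, fmt: str, gpu: str, overhead_gb: float = 4.0) -> bool:
    """True if weights plus an assumed overhead fit in the GPU's memory.

    overhead_gb is a hypothetical allowance for the KV cache and buffers.
    """
    needed = weight_memory_gb(MODELS[model], ASSUMED_BITS[fmt]) + overhead_gb
    return needed <= GPU_MEMORY_GB[gpu]


if __name__ == "__main__":
    for model in MODELS:
        for fmt in ASSUMED_BITS:
            size = weight_memory_gb(MODELS[model], ASSUMED_BITS[fmt])
            verdicts = ", ".join(
                f"{gpu}: {'fits' if fits(model, fmt, gpu) else 'too large'}"
                for gpu in GPU_MEMORY_GB
            )
            print(f"{model} {fmt}: ~{size:.1f} GB of weights ({verdicts})")
```

Real memory use also depends on context length and batch size, so treat the overhead value as a knob to adjust rather than a fixed constant.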

Token Speed: A Deep Dive into Generation and Processing

The speed at which a GPU processes textual data is critical for real-time applications like conversational AI or text generation. This speed is measured in tokens per second - a token being a basic unit of language.

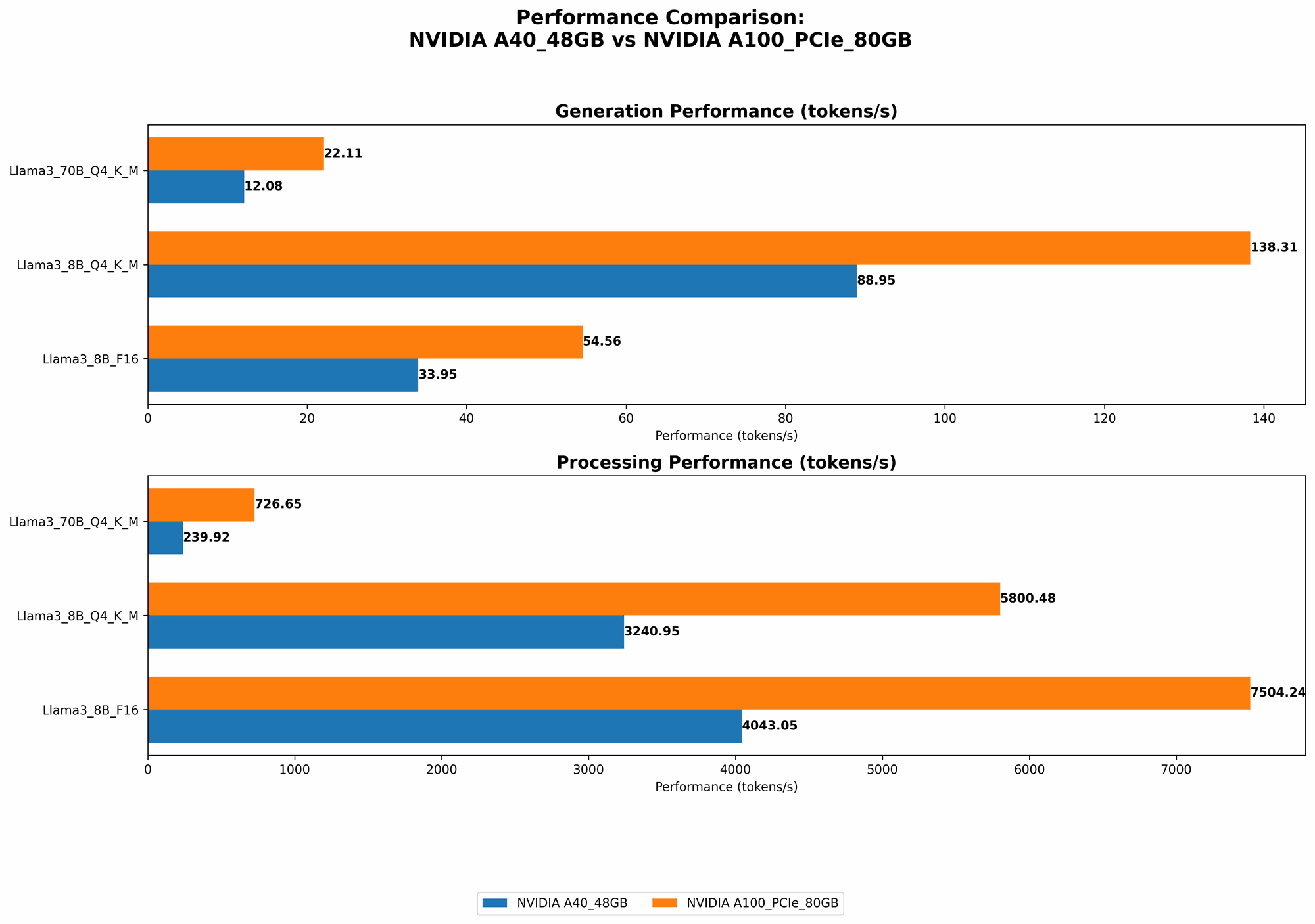

Token Generation Speed

Let's break down the token generation speed for different Llama model configurations:

| Device | Model | Quantization | Generation speed (tokens/s) |
|---|---|---|---|
| A40 48GB | Llama 3 8B | Q4_K_M | 88.95 |
| A100 PCIe 80GB | Llama 3 8B | Q4_K_M | 138.31 |
| A40 48GB | Llama 3 8B | F16 | 33.95 |
| A100 PCIe 80GB | Llama 3 8B | F16 | 54.56 |
| A40 48GB | Llama 3 70B | Q4_K_M | 12.08 |
| A100 PCIe 80GB | Llama 3 70B | Q4_K_M | 22.11 |

Here's what we see:

- The A100 PCIe 80GB leads in token generation speed for every configuration tested, by a factor of roughly 1.55x to 1.83x. Faster generation means your chatbots respond sooner and your text generation runs more smoothly, which matters most in real-time interactive scenarios.

- For smaller models like Llama 3 8B, both GPUs offer impressive token generation speeds. As we scale up to Llama 3 70B, the A100 PCIe 80GB's advantage widens, from about 1.55x at 8B Q4_K_M to about 1.83x at 70B Q4_K_M.

- Quantization impacts token generation speed. Q4_K_M, which compresses the model's weights to roughly 4 to 5 bits each, generates tokens about 2.5x to 2.6x faster than F16, the unquantized half-precision floating-point format, on both GPUs. Generation is typically limited by how fast the weights can be read from memory, so smaller weights mean faster tokens.
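To make the comparison concrete, the speedup of one GPU over the other can be computed directly from the generation table. This is a small sketch using only the figures listed above; the dictionary layout and the `speedup` helper are illustrative choices.

```python
# Token generation rates (tokens/s) copied from the generation table above.
GENERATION_TPS = {
    ("Llama 3 8B", "Q4_K_M"): {"A40": 88.95, "A100": 138.31},
    ("Llama 3 8B", "F16"): {"A40": 33.95, "A100": 54.56},
    ("Llama 3 70B", "Q4_K_M"): {"A40": 12.08, "A100": 22.11},
}


def speedup(rates: dict) -> float:
    """How many times faster the A100 generates tokens than the A40."""
    return rates["A100"] / rates["A40"]


if __name__ == "__main__":
    for (model, fmt), rates in GENERATION_TPS.items():
        print(f"{model} {fmt}: A100 is {speedup(rates):.2f}x faster")
```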

Token Processing Speed

Token processing speed (often called prompt processing or prefill) reflects how fast the GPU can ingest the input tokens before it starts generating. It determines how long a user waits for the first token of a response, which matters most for long prompts and long conversation histories.

| Device | Model | Quantization | Processing speed (tokens/s) |
|---|---|---|---|
| A40 48GB | Llama 3 8B | Q4_K_M | 3240.95 |
| A100 PCIe 80GB | Llama 3 8B | Q4_K_M | 5800.48 |
| A40 48GB | Llama 3 8B | F16 | 4043.05 |
| A100 PCIe 80GB | Llama 3 8B | F16 | 7504.24 |
| A40 48GB | Llama 3 70B | Q4_K_M | 239.92 |
| A100 PCIe 80GB | Llama 3 70B | Q4_K_M | 726.65 |

Let's analyze the numbers:

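- Again, the A100 PCIe 80GB displays superior token processing speed across all Llama 3 configurations, from about 1.8x faster on Llama 3 8B to about 3x faster on Llama 3 70B. Processing speed affects latency, not output quality: both GPUs produce the same text from the same model, but the A100 PCIe 80GB starts responding sooner.

- While the A40 48GB performs well with Llama 3 8B, its throughput falls by a factor of about 13.5 with Llama 3 70B at Q4_K_M, compared with a factor of about 8 for the A100 PCIe 80GB. The model is roughly nine times larger, so a large drop is expected on any GPU. The benchmark data alone does not explain the A40 48GB's steeper decline, though the tight memory headroom on the 48 GB card may contribute.

- F16 processes tokens about 25 to 29 percent faster than Q4_K_M for Llama 3 8B. F16 is not a quantization method but the unquantized half-precision format. A likely explanation is that prompt processing is compute-bound, and F16 weights can be used directly without first being dequantized.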
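Processing and generation speeds combine into the latency a user actually experiences. As a rough sketch, the time for one response is the prompt length divided by the processing rate plus the reply length divided by the generation rate. The rates below are the Llama 3 70B Q4_K_M figures from the two tables; the 1,000-token prompt and 200-token reply are a hypothetical workload, and real rates vary with context length and batch size.

```python
# Llama 3 70B Q4_K_M rates (tokens/s) from the two tables in this article.
RATES_70B_Q4 = {
    "A40 48GB": {"processing": 239.92, "generation": 12.08},
    "A100 PCIe 80GB": {"processing": 726.65, "generation": 22.11},
}


def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to read the prompt and then generate the reply."""
    time_to_first_token = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return time_to_first_token + generation_time


if __name__ == "__main__":
    # HYPOTHETICAL workload: 1,000-token prompt, 200-token reply.
    for gpu, r in RATES_70B_Q4.items():
        total = response_time_s(1000, 200, r["processing"], r["generation"])
        print(f"{gpu}: about {total:.1f} s per response")
```

For short prompts the generation term dominates, which is why the generation table is usually the better guide for chat-style workloads.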

Think of token processing as the speed of a spaceship's navigation system. A faster system plots the course sooner, so the journey gets underway with less waiting.

Quantization: A Trade-off of Speed and Memory

Quantization is a technique used to reduce the size of LLMs by compressing their weights, leading to lower memory requirements and faster token generation. Think of quantization as packing your spaceship's cargo more efficiently to maximize space.

Both GPUs support Q4_K_M quantization, which stores weights at roughly 4 to 5 bits each, a bit under a third of their F16 size. This speeds up token generation considerably and enables you to run larger models on devices with limited memory, like the A40 48GB.
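The idea behind 4-bit quantization can be shown with a deliberately simplified sketch. The code below quantizes one block of weights to 4-bit codes plus a per-block scale and minimum. It is not the actual Q4_K_M layout, which is more elaborate; the block size of 32 and the 16-bit scale and minimum are assumptions chosen for illustration.

```python
def quantize_block(weights, bits=4):
    """Map each weight in a block to an integer code in [0, 2**bits - 1]."""
    levels = (1 << bits) - 1
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize_block(codes, scale, lo):
    """Reconstruct approximate weights from the codes and block metadata."""
    return [lo + c * scale for c in codes]


def effective_bits_per_weight(block_size=32, bits=4, metadata_bits=32):
    """Bits per weight once the per-block scale and minimum are included.

    ASSUMPTION: scale and minimum are stored as two 16-bit values.
    """
    return (block_size * bits + metadata_bits) / block_size


if __name__ == "__main__":
    block = [0.1 * i - 1.6 for i in range(32)]  # toy weights, not real data
    codes, scale, lo = quantize_block(block)
    restored = dequantize_block(codes, scale, lo)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(f"max reconstruction error: {worst:.4f}")
    print(f"effective bits per weight: {effective_bits_per_weight()}")
```

The per-block metadata is why a "4-bit" format ends up storing somewhat more than 4 bits per weight.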

GPU Cores: The Workhorse of AI

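GPU cores are the individual processors within a GPU that handle the mathematical operations required for AI computations. More cores generally mean more raw compute, but for LLM inference, memory bandwidth and Tensor Core throughput often matter just as much.

The A40 48GB features 10,752 CUDA cores, while the A100 PCIe 80GB has 6,912. Core count alone therefore does not predict the benchmark results above. The A100 PCIe 80GB pairs its cores with more Tensor Cores (432 versus 336) and far higher memory bandwidth (about 1,935 GB/s of HBM2e versus about 696 GB/s of GDDR6), and that combination is what drives its lead in LLM workloads.

Think of GPU cores as a spaceship's engines and memory bandwidth as its fuel lines. More engines only help if fuel reaches them fast enough.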

Power Consumption and Cost: Considerations for Budget-Conscious Users

While performance reigns supreme, the real-world implications of power consumption and cost are crucial for practical application.

Both cards are rated for up to 300 W of board power, so neither has a clear advantage in peak draw. If the two draw similar power under load, the A100 PCIe 80GB uses less energy per generated token simply because it finishes the same work sooner. The A40 48GB's advantage lies in its lower purchase price.

The A100 PCIe 80GB is a powerhouse but comes with a higher price tag. This makes it best suited to high-performance applications where cost is less of a concern.

Think of power and cost as a spaceship's fuel budget. What matters is not only how much fuel the engines burn per hour, but how far each tank takes you.
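One way to weigh price against speed is cost per generated token. The sketch below assumes you pay for GPU time by the hour and run a single generation stream. The article does not quote prices, so the example works in relative price units (A40 48GB = 1.0 per hour) instead of real figures. Both function names are hypothetical.

```python
def cost_per_million_tokens(tokens_per_second: float,
                            price_per_hour: float) -> float:
    """Cost of generating one million tokens at a steady rate."""
    tokens_per_hour = tokens_per_second * 3600
    return price_per_hour / tokens_per_hour * 1_000_000


def break_even_price_ratio(slow_tps: float, fast_tps: float) -> float:
    """Price ratio (fast GPU / slow GPU) at which cost per token is equal."""
    return fast_tps / slow_tps


if __name__ == "__main__":
    # Llama 3 70B Q4_K_M generation rates from the article's table.
    a40_tps, a100_tps = 12.08, 22.11
    ratio = break_even_price_ratio(a40_tps, a100_tps)
    print(f"A100 is cheaper per token if it costs less than {ratio:.2f}x the A40")
    # PLACEHOLDER price: 1.0 relative unit per hour for the A40, not a real quote.
    print(f"A40 cost per million tokens: {cost_per_million_tokens(a40_tps, 1.0):.2f} units")
```

Batched serving changes the picture, since throughput per GPU rises with batch size, so this comparison applies most directly to single-user workloads.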

Practical Recommendations: Choosing the Right GPU for Your AI Journey

- For budget-conscious developers working with smaller LLMs like Llama 3 8B, the A40 48GB offers an excellent balance of performance and cost. It's a robust choice for projects that don't require the processing power of the A100 PCIe 80GB.

- If you're venturing into the realm of massive LLMs like Llama 3 70B or working with real-time AI applications that demand peak performance, the A100 PCIe 80GB is the king. It offers faster token processing and generation plus ample memory headroom for those who prioritize performance above all else.

Conclusion

Choosing between the NVIDIA A40 48GB and the A100 PCIe 80GB for running Llama 3 LLMs comes down to balancing your needs with your resources.

- A40 48GB: A strong performer for smaller LLMs, offering good speed at a lower price.

- A100 PCIe 80GB: The ideal choice for demanding real-time AI tasks and massive LLMs, providing higher throughput and generous memory capacity.

By carefully considering performance metrics, cost, energy efficiency, and your specific AI needs, you can chart a successful course through the world of LLMs and GPU technology.

FAQ

What are LLMs?

LLMs are large language models, sophisticated AI algorithms that can process and generate human-like text. Think of LLMs as AI that can understand and speak your language!

What is quantization?

Quantization is a technique that reduces the size of a model by compressing its weights. Think of it as packing your clothes more efficiently for a trip!

What are tokens?

Tokens are the basic units of language that LLMs use to process text. They're like the building blocks of a language!

How does token generation speed impact my AI applications?

The faster the token generation speed, the quicker your AI can respond to prompts and generate text. Think of it like fast typing – the quicker you type, the faster you can communicate your thoughts!

What should I consider when choosing between the A40 48GB and the A100 PCIe 80GB?

- LLM size: If you're working with large models like Llama 3 70B, the A100 PCIe 80GB is a better choice due to its larger memory capacity.

- Performance: If you need peak performance, the A100 PCIe 80GB is the clear winner, with faster token processing and generation in every benchmark shown here.

- Budget: The A40 48GB is more budget-friendly and offers excellent value for smaller LLMs.

Keywords

Large language models, LLM, AI, GPU, NVIDIA A40 48GB, NVIDIA A100 PCIe 80GB, Llama 3, token generation, token processing, quantization, performance, memory, cost, efficiency, speed, applications, development.