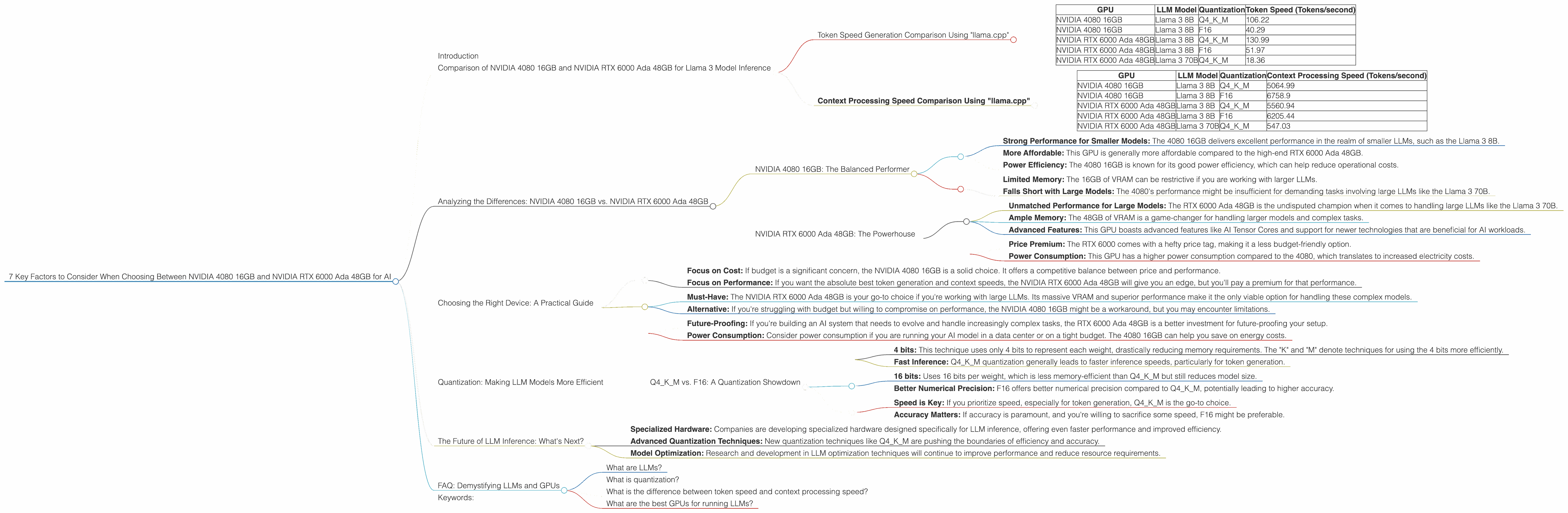

7 Key Factors to Consider When Choosing Between NVIDIA 4080 16GB and NVIDIA RTX 6000 Ada 48GB for AI

Introduction

The world of Large Language Models (LLMs) is exploding, and with it comes the demand for powerful hardware to run these models. Two popular GPUs for this task are the NVIDIA 4080 16GB and the NVIDIA RTX 6000 Ada 48GB. Both are powerhouses in their own right, offering impressive performance for AI workloads. But which one is right for you?

This article will dive deep into the key factors you should consider to make the best decision for your specific needs. We'll analyze their performance on specific LLM models, discuss their strengths and weaknesses, and provide practical recommendations for different use cases. Buckle up, this is going to be a wild ride!

Comparison of NVIDIA 4080 16GB and NVIDIA RTX 6000 Ada 48GB for Llama 3 Model Inference

Let's get down to brass tacks and see how these two GPUs stack up against each other for LLM inference using the popular Llama 3 model. We'll be looking at both the 8B and 70B models in two weight formats: 4-bit quantization (Q4KM, written Q4_K_M in llama.cpp) and 16-bit floating point (F16).

Note: Llama 3's weights are openly available, meaning you can download and run the model locally. This makes it an excellent choice for testing and experimenting with different configurations, and for exploring the inner workings of LLMs.

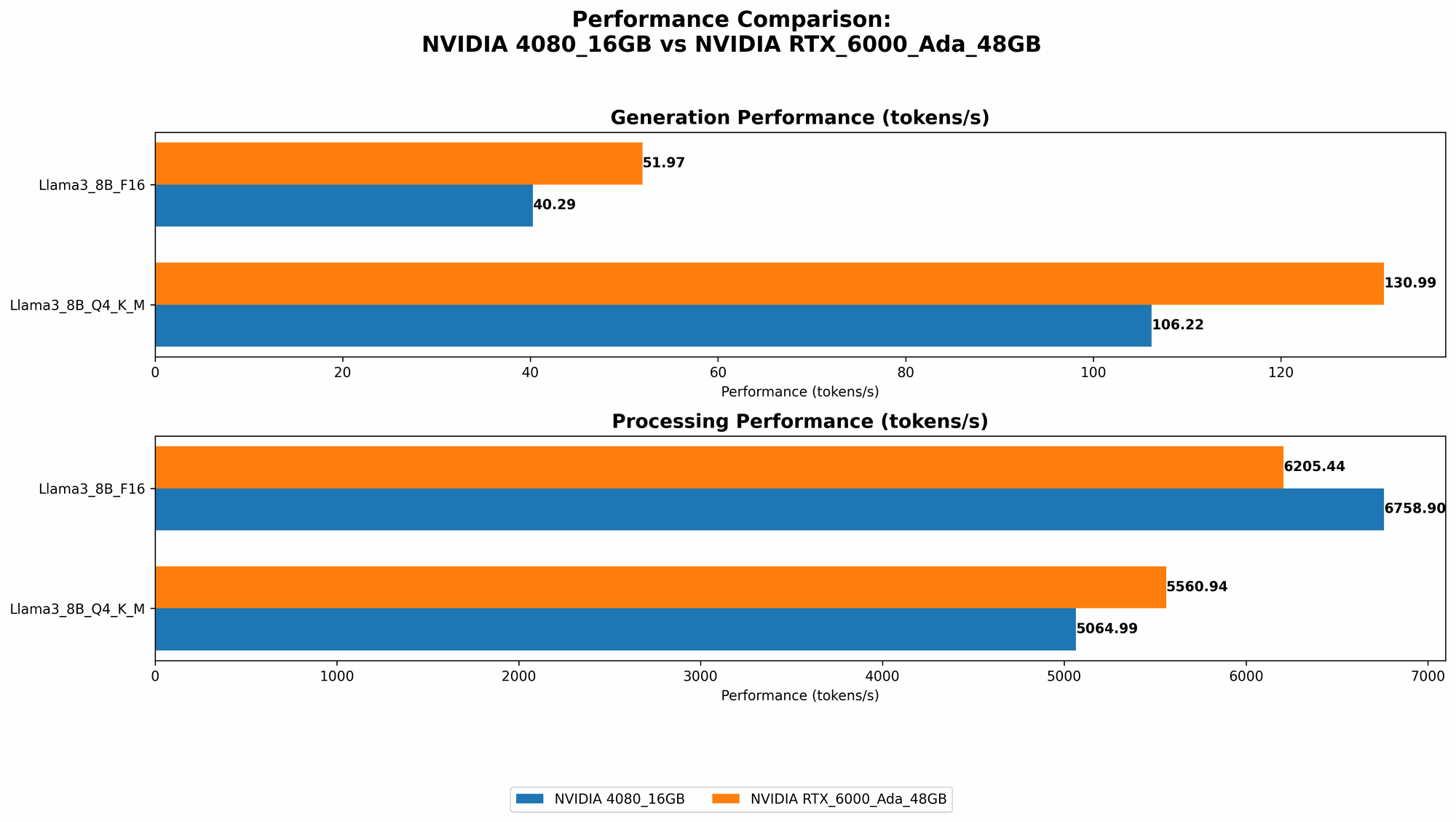

Token Speed Generation Comparison Using "llama.cpp"

Token speed refers to how fast a GPU can generate new text tokens based on a given prompt. This is a critical metric for interactive applications like chatbots.

| GPU | LLM Model | Quantization | Token Speed (Tokens/second) |
|---|---|---|---|
| NVIDIA 4080 16GB | Llama 3 8B | Q4KM | 106.22 |
| NVIDIA 4080 16GB | Llama 3 8B | F16 | 40.29 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 8B | Q4KM | 130.99 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 8B | F16 | 51.97 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 70B | Q4KM | 18.36 |

Key Observations:

- RTX 6000 Ada 48GB is the champion for token speed: For the Llama 3 8B model it outperforms the 4080 by about 23% with Q4KM and about 29% with F16.

- Larger Models Slow Things Down: Llama 3 70B with Q4KM runs at 18.36 tokens/second on the RTX 6000, roughly seven times slower than the 8B model on the same card. The table has no 70B result for the 4080, so the two GPUs cannot be compared at that size. A 70-billion-parameter model at 4 bits per weight needs at least 35GB for its weights alone, which is more than the 4080's 16GB.

- Quantization Impacts Performance: Q4KM yields roughly 2.5 times the token speed of F16 on both GPUs for the 8B model, which makes it the more efficient format for token generation here.

Real-World Implications:

- Interactive Applications: The RTX 6000 is the better choice for interactive LLM applications, especially with smaller models, because it generates text faster and so gives a more responsive user experience.

- Resource-Constrained Environments: The 4080 16GB is the more suitable option when you need to balance cost against performance, provided the model fits in 16GB of VRAM, as Llama 3 8B does.
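The percentage gaps quoted in the observations above can be reproduced directly from the table. The following is a minimal sketch; the dictionary layout and the function names are just illustrative choices, and only the four numbers come from the article.

```python
# Token generation speeds (tokens/second) for Llama 3 8B, copied from the table above.
TOKEN_SPEED = {
    ("4080", "Q4KM"): 106.22,
    ("4080", "F16"): 40.29,
    ("RTX 6000 Ada", "Q4KM"): 130.99,
    ("RTX 6000 Ada", "F16"): 51.97,
}


def relative_gain(fast: float, slow: float) -> float:
    """Return by how many percent `fast` exceeds `slow`."""
    return (fast / slow - 1.0) * 100.0


def gpu_gain(quant: str) -> float:
    """Percentage lead of the RTX 6000 Ada over the 4080 for one weight format."""
    return relative_gain(TOKEN_SPEED[("RTX 6000 Ada", quant)],
                         TOKEN_SPEED[("4080", quant)])


for quant in ("Q4KM", "F16"):
    print(f"{quant}: RTX 6000 Ada is {gpu_gain(quant):.1f}% faster than the 4080")
```

The same helper can be pointed at the Q4KM and F16 rows of a single GPU to see how much of the speed difference comes from the weight format rather than from the card.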

Context Processing Speed Comparison Using "llama.cpp"

Context processing speed refers to how quickly a GPU can process the input text (the "context") before generating output. It's a crucial factor for tasks involving long sequences, such as summarizing lengthy documents.

| GPU | LLM Model | Quantization | Context Processing Speed (Tokens/second) |
|---|---|---|---|
| NVIDIA 4080 16GB | Llama 3 8B | Q4KM | 5064.99 |
| NVIDIA 4080 16GB | Llama 3 8B | F16 | 6758.9 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 8B | Q4KM | 5560.94 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 8B | F16 | 6205.44 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 70B | Q4KM | 547.03 |

Key Observations:

- Similar Performance for Smaller Models: For the Llama 3 8B model the two GPUs are within about 10% of each other. The RTX 6000 is about 10% faster with Q4KM, while the 4080 is about 9% faster with F16.

- Only the RTX 6000 Has a 70B Result: The RTX 6000 processes Llama 3 70B (Q4KM) context at 547.03 tokens/second, about a tenth of its 8B speed. The table has no 4080 figure at this size, so there is no direct comparison.

- F16 is faster: While Q4KM is faster for token generation, F16 is faster for context processing on both GPUs. This holds for the 8B model, the only one with an F16 result.

Real-World Implications:

- Long Sequences: For applications that process large amounts of text with a large model, such as summarization or document understanding, the RTX 6000's ability to run Llama 3 70B at all is the deciding factor. Expect context processing to be about ten times slower than with the 8B model.

- Balanced Performance: The 4080 16GB strikes a balance between cost and performance, especially for smaller models, and could be a compelling choice for resource-optimized applications.
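To see how the two metrics combine, it helps to estimate the wait for a full response: the prompt is processed at the context speed, then the reply is generated at the token speed. The sketch below assumes both speeds stay constant regardless of prompt length, which is a simplification (real throughput usually drops as the context grows). The prompt and reply lengths are made-up example values, and the function names are illustrative.

```python
# Speeds in tokens/second, copied from the two tables in this article.
SPEEDS = {
    ("4080 16GB", "8B Q4KM"): {"context": 5064.99, "generate": 106.22},
    ("RTX 6000 Ada 48GB", "8B Q4KM"): {"context": 5560.94, "generate": 130.99},
    ("RTX 6000 Ada 48GB", "70B Q4KM"): {"context": 547.03, "generate": 18.36},
}


def response_time(prompt_tokens, output_tokens, context_speed, generate_speed):
    """Seconds to process the prompt plus seconds to generate the reply."""
    return prompt_tokens / context_speed + output_tokens / generate_speed


def estimate(gpu, model, prompt_tokens, output_tokens):
    """Look up the measured speeds for one GPU/model pair and estimate the wait."""
    speeds = SPEEDS[(gpu, model)]
    return response_time(prompt_tokens, output_tokens,
                         speeds["context"], speeds["generate"])


# Hypothetical workload: a 4000-token document and a 300-token summary.
for gpu, model in SPEEDS:
    print(f"{gpu}, Llama 3 {model}: {estimate(gpu, model, 4000, 300):.1f} s")
```

For this example workload, generation takes several times longer than context processing on every configuration, so token speed matters more than context speed unless the prompt is very long relative to the reply.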

Analyzing the Differences: NVIDIA 4080 16GB vs. NVIDIA RTX 6000 Ada 48GB

NVIDIA 4080 16GB: The Balanced Performer

Strengths:

- Strong Performance for Smaller Models: The 4080 16GB delivers excellent performance in the realm of smaller LLMs, such as the Llama 3 8B.

- More Affordable: This GPU is generally more affordable compared to the high-end RTX 6000 Ada 48GB.

- Power Efficiency: The 4080 16GB is known for its good power efficiency, which can help reduce operational costs.

Weaknesses:

- Limited Memory: The 16GB of VRAM can be restrictive if you are working with larger LLMs.

- Falls Short with Large Models: Llama 3 70B does not fit in 16GB of VRAM even with Q4KM quantization, which is why the tables above contain no 4080 result for that model.
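The memory limit can be made concrete with a back-of-the-envelope estimate: weight memory is roughly the parameter count times the bits per weight. The sketch below rests on several assumptions that are not from the benchmark data: nominal parameter counts of 8 and 70 billion, an effective size of about 4.8 bits per weight for Q4KM (the real average varies by model), and VRAM sizes treated as GiB. It counts weights only; the KV cache and runtime buffers need additional headroom.

```python
GIB = 1024 ** 3

# Nominal parameter counts (assumption: rounded, not exact).
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
# Effective bits per weight. 4.8 for Q4KM is a rough assumption, not a spec value.
FORMATS = {"Q4KM": 4.8, "F16": 16.0}
# VRAM sizes in GiB, taken from the card names.
GPUS = {"NVIDIA 4080 16GB": 16, "NVIDIA RTX 6000 Ada 48GB": 48}


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    return n_params * bits_per_weight / 8 / GIB


def weights_fit(n_params: float, bits_per_weight: float, vram_gib: float) -> bool:
    """True if the weights alone fit in VRAM (no KV cache or buffers counted)."""
    return weight_memory_gib(n_params, bits_per_weight) <= vram_gib


for model, n_params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_memory_gib(n_params, bits)
        fitting = [gpu for gpu, vram in GPUS.items() if weights_fit(n_params, bits, vram)]
        print(f"{model} {fmt}: ~{size:.1f} GiB, fits on: {fitting or 'neither card'}")
```

Under these assumptions the pattern matches the benchmark tables: both cards can hold Llama 3 8B in either format, only the 48GB card can hold Llama 3 70B at Q4KM, and neither can hold Llama 3 70B at F16.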

NVIDIA RTX 6000 Ada 48GB: The Powerhouse

Strengths:

- Unmatched Performance for Large Models: Of the two cards, only the RTX 6000 Ada 48GB can run Llama 3 70B at Q4KM, reaching 18.36 tokens/second in the benchmark above.

- Ample Memory: The 48GB of VRAM is a game-changer for handling larger models and complex tasks.

- Advanced Features: Both cards are built on the Ada Lovelace architecture and have Tensor Cores for AI workloads. The RTX 6000 Ada adds workstation-class features such as ECC memory.

Weaknesses:

- Price Premium: The RTX 6000 comes with a hefty price tag, making it a less budget-friendly option.

- Power Consumption: The rated board power is about 300W, in the same range as the 4080's roughly 320W, so expect comparable electricity costs under sustained load rather than any savings.

Choosing the Right Device: A Practical Guide

The decision between these two GPUs boils down to your specific needs and budget constraints. Let's break it down based on your use cases:

For Work with Smaller LLMs (e.g., Llama 3 8B):

- Focus on Cost: If budget is a significant concern, the NVIDIA 4080 16GB is a solid choice. It offers a competitive balance between price and performance.

- Focus on Performance: If you want the fastest token generation, the NVIDIA RTX 6000 Ada 48GB gives you an edge of 23% to 29% on Llama 3 8B, but you'll pay a premium for it. Context processing speeds are close enough between the two cards that they should not drive the decision.

For Work with Larger LLMs (e.g., Llama 3 70B):

- Must-Have: The NVIDIA RTX 6000 Ada 48GB is your go-to choice if you're working with large LLMs. Its 48GB of VRAM makes it the only one of these two cards that can hold a model like Llama 3 70B at Q4KM entirely in GPU memory.

- Alternative: If your budget only stretches to the NVIDIA 4080 16GB, a 70B model will not fit in its VRAM. You would have to keep part of the model in system memory, which costs a lot of speed, or switch to a smaller model.

Beyond Performance:

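- Future-Proofing: If you're building an AI system that needs to evolve and handle increasingly complex tasks, the RTX 6000 Ada 48GB is a better investment, mainly because its extra VRAM leaves room for larger models.

- Power Consumption: Consider power consumption if you are running your AI model in a data center or on a tight budget. The two cards have similar rated board power (roughly 320W for the 4080 and 300W for the RTX 6000 Ada), so measure the actual draw under your workload rather than assuming either card is cheaper to run.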

Quantization: Making LLM Models More Efficient

Quantization is the technique of reducing the size and precision of the weights used by neural networks. This has several benefits:

- Reduced Memory Footprint: Quantized models require less memory to store and process, making them more efficient.

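- Faster Token Generation: Quantization can significantly improve token generation speed, as the GPU has fewer bytes to read from memory for each generated token. Context processing does not necessarily benefit: in the tables above, F16 is faster than Q4KM at that stage.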

Think of it like a Diet for LLMs: Just like a diet can make you lighter and faster, quantization helps your LLM shed some "weight" and run more smoothly.
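One way to see why fewer bytes means faster generation is a rough memory-bandwidth bound: if every weight has to be read once per generated token, the token speed cannot exceed the memory bandwidth divided by the size of the weights. The sketch below applies that to the F16 results for Llama 3 8B. The bandwidth figures are not from this article; they are the commonly published spec-sheet values and are used here as assumptions, as is the nominal 8 billion parameter count. The model ignores KV cache reads and compute time.

```python
# Memory bandwidth in GB/s. NOT from the article: commonly published spec-sheet
# values, treated as assumptions. Verify them before relying on the result.
BANDWIDTH_GBPS = {"4080 16GB": 716.8, "RTX 6000 Ada 48GB": 960.0}

# Measured F16 token speeds for Llama 3 8B, copied from the token speed table.
MEASURED_TPS = {"4080 16GB": 40.29, "RTX 6000 Ada 48GB": 51.97}


def bandwidth_bound_tps(bandwidth_gbps, n_params, bits_per_weight):
    """Upper bound on tokens/second if every weight is read once per token."""
    weight_bytes = n_params * bits_per_weight / 8
    return bandwidth_gbps * 1e9 / weight_bytes


for gpu, bandwidth in BANDWIDTH_GBPS.items():
    bound = bandwidth_bound_tps(bandwidth, 8e9, 16)  # nominal 8B params, F16
    share = MEASURED_TPS[gpu] / bound
    print(f"{gpu}: bound {bound:.1f} tok/s, measured {MEASURED_TPS[gpu]} ({share:.0%} of bound)")
```

If these assumptions hold, the measured F16 speeds on both cards sit fairly close to the bound, which suggests that token generation is limited mostly by how fast the weights can be read. Shrinking the weights with quantization raises that ceiling.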

Q4KM vs. F16: A Quantization Showdown

Q4KM:

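- 4 bits: This format stores each weight in roughly 4 bits (slightly more on average once the per-block scaling data is counted), drastically reducing memory requirements. In llama.cpp the name is written Q4_K_M: the "K" refers to the k-quant family of block-wise formats, and the "M" marks the medium variant within that family.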

- Fast Inference: Q4KM quantization generally leads to faster inference speeds, particularly for token generation.

F16:

- 16 bits: Uses 16 bits per weight, which takes roughly four times the memory of Q4KM. It is a half-precision floating-point format rather than a quantized one, and is half the size of 32-bit floats.

- Better Numerical Precision: F16 offers better numerical precision compared to Q4KM, potentially leading to higher accuracy.
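The precision trade-off between the two formats can be illustrated with a toy example. The sketch below is a simplified block quantizer, not the actual Q4_K_M layout used by llama.cpp: it stores one scale per block and rounds each weight to a signed 4-bit integer, then compares the round-trip error with that of a 16-bit float. The weight values are made up for illustration.

```python
import struct


def quantize_block(weights, bits=4):
    """Symmetric absmax quantization: one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1                  # 7 for 4-bit codes
    peak = max(abs(w) for w in weights)
    scale = peak / qmax if peak > 0 else 1.0    # avoid dividing by zero
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Rebuild approximate weights from the scale and the integer codes."""
    return [scale * c for c in codes]


def to_f16(w):
    """Round-trip a value through IEEE half precision (struct format 'e')."""
    return struct.unpack("e", struct.pack("e", w))[0]


def max_error(original, restored):
    """Largest absolute difference between original and restored values."""
    return max(abs(a - b) for a, b in zip(original, restored))


weights = [0.12, -0.53, 0.70, 0.01, -0.33, 0.26, -0.07, 0.44]  # made-up block
scale, codes = quantize_block(weights)
err_q4 = max_error(weights, dequantize_block(scale, codes))
err_f16 = max_error(weights, [to_f16(w) for w in weights])

print("4-bit codes:", codes)
print(f"max error, 4-bit: {err_q4:.4f}   max error, F16: {err_f16:.6f}")
```

In this toy block the 4-bit round trip loses far more precision than F16 does, which is the accuracy cost paid for the smaller memory footprint. Production formats reduce that cost with more elaborate per-block scaling.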

Choosing the Right Quantization Technique:

- Speed is Key: If you prioritize speed, especially for token generation, Q4KM is the go-to choice.

- Accuracy Matters: If accuracy is paramount, and you're willing to sacrifice some speed, F16 might be preferable.

Note: The best quantization technique depends on the specific model, and you might need to experiment to find the sweet spot.

The Future of LLM Inference: What's Next?

The world of LLM inference is constantly evolving, and new advancements are emerging rapidly. Here are some key trends to watch:

- Specialized Hardware: Companies are developing specialized hardware designed specifically for LLM inference, offering even faster performance and improved efficiency.

- Advanced Quantization Techniques: New quantization techniques like Q4KM are pushing the boundaries of efficiency and accuracy.

- Model Optimization: Research and development in LLM optimization techniques will continue to improve performance and reduce resource requirements.

FAQ: Demystifying LLMs and GPUs

What are LLMs?

LLMs are large neural networks trained on massive datasets of text and code. This allows them to generate human-like text, translate languages, summarize information, and perform other complex linguistic tasks.

What is quantization?

Quantization is a technique for reducing the size of the weights used by neural networks. It involves mapping the original, high-precision weights to a lower precision scale, while minimizing the loss of information. This results in smaller models that require less memory and can process data faster.

What is the difference between token speed and context processing speed?

Token speed is how quickly a GPU can generate new text tokens based on a given prompt. Context processing speed refers to how fast a GPU can process the input text before generating output.

What are the best GPUs for running LLMs?

The best GPU for running LLMs depends on your specific needs, budget, and model size. For smaller models, the NVIDIA 4080 16GB offers good performance at a reasonable price. For larger models, the NVIDIA RTX 6000 Ada 48GB is the ultimate powerhouse, but comes with a higher price tag.
