7 Key Factors to Consider When Choosing Between NVIDIA 4070 Ti 12GB and NVIDIA A100 SXM 80GB for AI

Introduction

The world of artificial intelligence (AI), particularly large language models (LLMs), is exploding with exciting possibilities. However, running these models locally requires powerful hardware, which can be a significant investment. This article delves into the key factors you should consider when deciding between two popular GPU choices for LLM inference: the NVIDIA 4070 Ti 12GB and the NVIDIA A100 SXM 80GB.

Imagine you're a developer building an LLM-powered chatbot, a personalized AI assistant, or even a creative writing tool. Choosing the right hardware is crucial for performance, efficiency, and ultimately, the success of your project. Let's dive in and see which GPU best suits your needs!

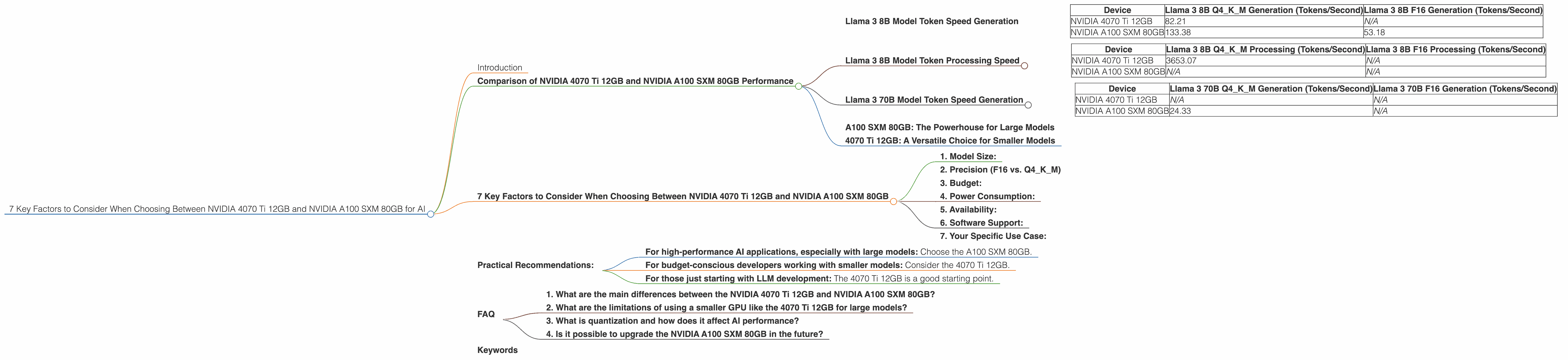

Comparison of NVIDIA 4070 Ti 12GB and NVIDIA A100 SXM 80GB Performance

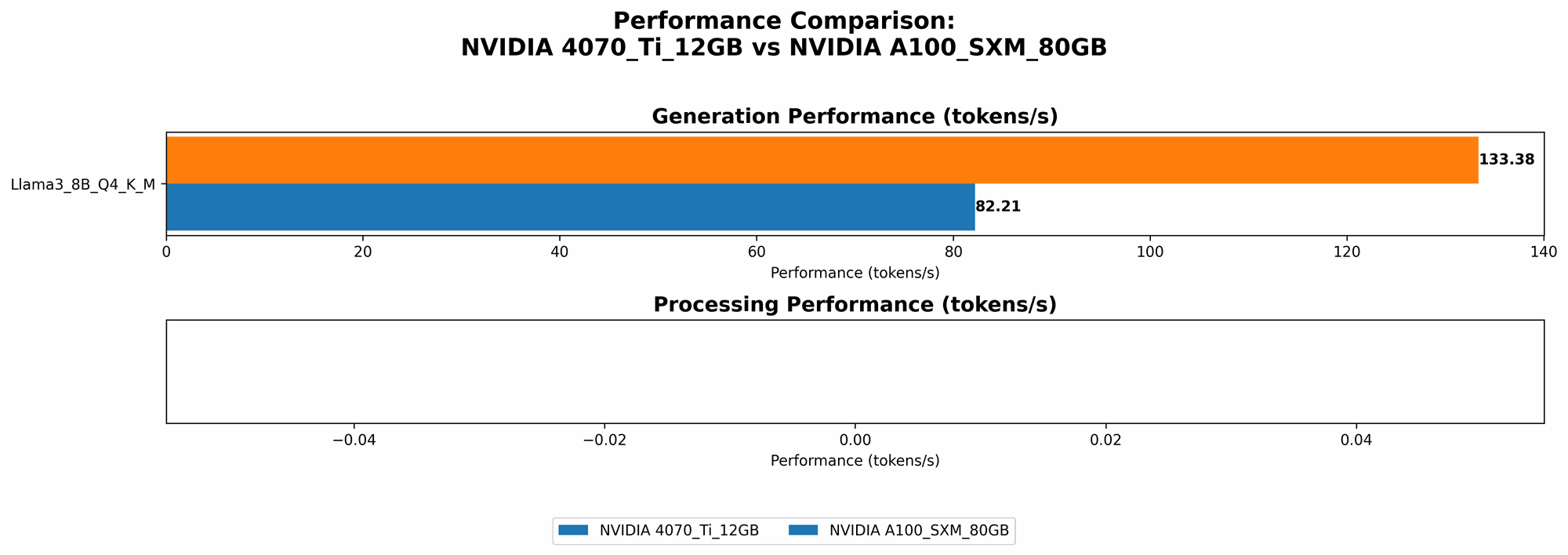

Llama 3 8B Model Token Generation Speed

Let's start with the headline metric: token generation speed! It measures how quickly your GPU can produce output text, in tokens per second, which directly affects how responsive your AI applications feel.

| Device | Llama 3 8B Q4KM Generation (Tokens/Second) | Llama 3 8B F16 Generation (Tokens/Second) |
|---|---|---|
| NVIDIA 4070 Ti 12GB | 82.21 | N/A |
| NVIDIA A100 SXM 80GB | 133.38 | 53.18 |

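The A100 SXM 80GB clearly wins this round, generating tokens about 62% faster than the 4070 Ti 12GB on the quantized Llama 3 8B model (Q4KM). No F16 comparison is possible, because the table has no F16 result for the 4070 Ti. That is most likely a memory limit: at 2 bytes per weight, an 8B model needs about 16 GB for its weights alone, more than the card's 12 GB. Note also that on the A100 itself, Q4KM runs about 2.5 times as fast as F16 (133.38 vs. 53.18 tokens/second), so F16 costs speed rather than adding it. If you're building applications that demand swift text generation, like real-time chatbots or interactive stories, the A100 is the faster option, and it is the only one of the two with an F16 result in this data.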
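As a quick check of the relative speeds, here is a minimal sketch that uses only the figures from the table above. The helper name `percent_faster` is just an illustration. The second comparison is between the two precisions on the A100, since the 4070 Ti has no F16 result to compare against.

```python
def percent_faster(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, as a percentage."""
    return (fast / slow - 1.0) * 100.0


# Generation speeds (tokens/second) copied from the table above.
a100_q4km = 133.38
rtx_4070_ti_q4km = 82.21
a100_f16 = 53.18

# A100 vs. 4070 Ti, both running Llama 3 8B Q4KM.
gpu_gap = percent_faster(a100_q4km, rtx_4070_ti_q4km)

# Q4KM vs. F16, both on the A100.
precision_gap = percent_faster(a100_q4km, a100_f16)

print(f"A100 vs 4070 Ti (Q4KM): {gpu_gap:.0f}% faster")
print(f"A100 Q4KM vs A100 F16:  {precision_gap:.0f}% faster")
```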

Llama 3 8B Model Token Processing Speed

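What about processing those tokens? Token processing speed measures how quickly the GPU reads the input text (the prompt) before it starts generating. It matters most for tasks with long inputs, like translation, summarizing text, or analyzing large datasets.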

| Device | Llama 3 8B Q4KM Processing (Tokens/Second) | Llama 3 8B F16 Processing (Tokens/Second) |
|---|---|---|
| NVIDIA 4070 Ti 12GB | 3653.07 | N/A |
| NVIDIA A100 SXM 80GB | N/A | N/A |

The 4070 Ti 12GB posts an impressive 3,653 tokens/second when processing prompts for the Q4KM Llama 3 8B model. Unfortunately, no processing data is available for the A100 SXM 80GB, so the two cards cannot be compared on this metric.
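To see how processing and generation speed combine, here is a rough sketch that estimates end-to-end response time for the 4070 Ti 12GB using the two Q4KM figures from the tables above. The workload (a 1,000-token prompt and a 200-token reply) is hypothetical, and the estimate assumes both rates stay constant and ignores model loading and other overheads.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimate seconds to read a prompt and then generate a reply."""
    prompt_time = prompt_tokens / processing_tps   # reading the input
    reply_time = output_tokens / generation_tps    # producing the output
    return prompt_time + reply_time


# 4070 Ti 12GB, Llama 3 8B Q4KM (tokens/second), from the tables above.
PROCESSING_TPS = 3653.07
GENERATION_TPS = 82.21

# Hypothetical workload: 1,000-token prompt, 200-token reply.
total = response_time_s(1000, 200, PROCESSING_TPS, GENERATION_TPS)
print(f"Estimated response time: {total:.2f} s")
```

Under these assumptions, generation accounts for most of the wait, which is why generation speed tends to matter more for chat-style applications with short prompts.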

Llama 3 70B Model Token Generation Speed

Let's ramp things up! If you need to run larger models like Llama 3 70B, things get a bit more complex.

| Device | Llama 3 70B Q4KM Generation (Tokens/Second) | Llama 3 70B F16 Generation (Tokens/Second) |
|---|---|---|
| NVIDIA 4070 Ti 12GB | N/A | N/A |
| NVIDIA A100 SXM 80GB | 24.33 | N/A |

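The 4070 Ti 12GB has no result for the larger Llama 3 70B model at either precision, most likely because the model does not fit in 12 GB of memory. F16 data is missing for the A100 as well, and processing speeds are not reported for this model at all. The A100 SXM 80GB is the clear winner by default, but it's important to note that even on this powerful GPU, generation for the 70B model (24.33 tokens/second) is roughly 5.5 times slower than for the 8B model (133.38 tokens/second) at the same Q4KM quantization.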
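One plausible explanation for the N/A entries is memory. The sketch below estimates the size of the weights alone and compares it to each card's memory. Everything in it is an assumption rather than benchmark data: nominal parameter counts of 8 and 70 billion, 16 bits per weight for F16, and an approximate average of 4.85 bits per weight for Q4KM. It ignores the KV cache and activations, which need additional memory.

```python
# Assumed average bits per weight; the Q4KM value is an approximation.
BITS_PER_WEIGHT = {"Q4KM": 4.85, "F16": 16.0}
# Nominal parameter counts, in billions (assumed).
MODEL_PARAMS_BILLION = {"Llama 3 8B": 8.0, "Llama 3 70B": 70.0}
# Memory capacity from the product names.
GPU_MEMORY_GB = {"4070 Ti 12GB": 12.0, "A100 SXM 80GB": 80.0}


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


def fits(params_billion: float, bits_per_weight: float, memory_gb: float) -> bool:
    """True if the weights alone are no larger than the card's memory."""
    return weights_gb(params_billion, bits_per_weight) <= memory_gb


for gpu, memory_gb in GPU_MEMORY_GB.items():
    for model, params in MODEL_PARAMS_BILLION.items():
        for precision, bits in BITS_PER_WEIGHT.items():
            size = weights_gb(params, bits)
            verdict = "fits" if fits(params, bits, memory_gb) else "too large"
            print(f"{gpu:14} {model:12} {precision:5} {size:6.1f} GB  {verdict}")
```

Under these assumptions, the combinations marked "too large" are the same ones shown as N/A in the generation tables, although the benchmark data itself does not state why those entries are missing.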

A100 SXM 80GB: The Powerhouse for Large Models

From the data above, it's clear that the NVIDIA A100 SXM 80GB shines when it comes to large language models. Its powerful architecture and substantial memory allow it to handle larger models and more complex workloads with greater efficiency. For users who need to run LLMs like Llama 3 70B and beyond, the A100 is the go-to choice.

4070 Ti 12GB: A Versatile Choice for Smaller Models

While the A100 SXM 80GB is a powerhouse, it's not the only option! The NVIDIA 4070 Ti 12GB offers a fantastic blend of performance and affordability. Its performance is more than adequate for handling smaller LLMs like Llama 3 8B, and its lower cost makes it a compelling option for budget-conscious users or those just starting with LLM development.

7 Key Factors to Consider When Choosing Between NVIDIA 4070 Ti 12GB and NVIDIA A100 SXM 80GB

Now, let's break down the key factors that should guide your decision:

1. Model Size:

As we saw with the Llama 3 models, bigger models usually require more processing power and memory. If you plan to run large LLMs like Llama 3 70B or larger, the A100 SXM 80GB is the clear winner. However, if you're working with smaller models (e.g., Llama 3 8B) the 4070 Ti 12GB might be sufficient.

2. Precision (F16 vs. Q4KM):

LLMs can run at different levels of numerical precision, which affects speed, memory footprint, and output quality. F16 (half precision) stores each weight in 16 bits. Q4KM (a 4-bit quantization, written Q4_K_M in llama.cpp) stores each weight in roughly 4 to 5 bits, making models smaller and faster but potentially sacrificing some quality. In the data above, the A100 SXM 80GB is the only card with an F16 result for Llama 3 8B, and its F16 generation is slower than its Q4KM generation (53.18 vs. 133.38 tokens/second). F16 is therefore a choice you make for quality, and only when you have the memory for it. The 4070 Ti 12GB is sufficient for applications where Q4KM precision is acceptable.

3. Budget:

The A100 SXM 80GB is considerably more expensive than the 4070 Ti 12GB. If budget is a significant constraint, the 4070 Ti 12GB is a more practical choice.

4. Power Consumption:

The A100 SXM 80GB is a power-hungry beast, rated at 400 W. Consider your power supply and cooling capabilities before choosing this option. The 4070 Ti 12GB is rated at 285 W and runs comfortably in an ordinary desktop PC.

5. Availability:

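The A100 is a server-grade GPU that might be harder to acquire for individual developers. The SXM variant also mounts on a dedicated server baseboard rather than in a standard PCIe slot, so it cannot simply be dropped into a desktop PC. The 4070 Ti 12GB is a consumer-grade GPU that's more readily available.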

6. Software Support:

Both NVIDIA GPUs are well-supported by popular AI frameworks like PyTorch and TensorFlow. However, specialized libraries and optimized code for certain models might be more readily available for the A100 due to its popularity in high-performance computing.

7. Your Specific Use Case:

Ultimately, the best GPU for you depends on your specific use case. If you're building a real-time chatbot that needs to handle large language models efficiently, the A100 will be a better choice. For developers working with smaller LLMs or on a tighter budget, the 4070 Ti 12GB is a versatile and cost-effective option.
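For the power consumption factor, total draw is only half the picture; throughput matters too. The sketch below divides power by generation speed to get a rough energy cost per token. The wattages are assumptions (the rated board power of each card, 400 W and 285 W, not measured draw during inference), and the speeds are the Llama 3 8B Q4KM generation figures from the first table.

```python
def joules_per_token(power_w: float, tokens_per_s: float) -> float:
    """Energy per generated token: watts divided by tokens per second."""
    return power_w / tokens_per_s


# Assumed power: rated board power, not measured draw during inference.
A100_POWER_W = 400.0
RTX_4070_TI_POWER_W = 285.0

# Llama 3 8B Q4KM generation speeds (tokens/second) from the first table.
A100_TPS = 133.38
RTX_4070_TI_TPS = 82.21

a100_energy = joules_per_token(A100_POWER_W, A100_TPS)
rtx_energy = joules_per_token(RTX_4070_TI_POWER_W, RTX_4070_TI_TPS)

print(f"A100 SXM 80GB: {a100_energy:.2f} J/token")
print(f"4070 Ti 12GB:  {rtx_energy:.2f} J/token")
```

Under these assumptions the two cards land in a similar range per generated token, so the A100's higher draw is mainly a question of whether your power supply and cooling can handle it.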

Practical Recommendations:

- For high-performance AI applications, especially with large models: Choose the A100 SXM 80GB.

- For budget-conscious developers working with smaller models: Consider the 4070 Ti 12GB.

- For those just starting with LLM development: The 4070 Ti 12GB is a good starting point.

FAQ

1. What are the main differences between the NVIDIA 4070 Ti 12GB and NVIDIA A100 SXM 80GB?

The main differences are memory capacity and raw throughput. The A100 SXM 80GB is a data-center GPU designed for high-performance computing and large-scale AI tasks. Its 80 GB of memory lets it run models such as Llama 3 70B that do not fit on the 4070 Ti, and it generates tokens about 62% faster on Llama 3 8B Q4KM. The 4070 Ti 12GB is a more affordable, lower-power consumer card that delivers excellent performance for smaller LLMs.

2. What are the limitations of using a smaller GPU like the 4070 Ti 12GB for large models?

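The main limitation is memory. If a model's weights do not fit in the 4070 Ti's 12 GB, the model either fails to load or has to be partly offloaded to system memory and the CPU, which is much slower. Even when a model fits, long prompts and large context windows need additional memory on top of the weights.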

3. What is quantization and how does it affect AI performance?

Quantization is a technique that reduces the precision of model weights and activations, making them smaller and faster to process. While quantization can significantly improve speed, it can also result in a slight decrease in accuracy.

4. Is it possible to upgrade the NVIDIA A100 SXM 80GB in the future?

NVIDIA GPUs are not upgradeable in the traditional sense. However, new driver updates and software optimizations are often released, enhancing performance and support for new models.
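To make the quantization answer (question 3) more concrete, here is a minimal sketch of the general idea: floating-point weights are mapped to small integers that share one scale factor, then mapped back with a small rounding error. This is a deliberately simplified scheme with hypothetical weight values, not the Q4KM format itself, which works block by block and is more elaborate.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] that share one scale factor."""
    # Fall back to a scale of 1.0 if every weight is zero.
    scale = (max(abs(w) for w in weights) / 7.0) or 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weight values, for illustration only.
weights = [0.82, -0.41, 0.05, -1.40, 0.63]

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 2) for r in restored])
print(f"max error: {max_error:.3f}")
```

Each code fits in 4 bits instead of 16, which is where the memory savings come from; the rounding error is the accuracy cost mentioned in the answer.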
