7 Key Factors to Consider When Choosing Between NVIDIA 3090 24GB and NVIDIA L40S 48GB for AI

Introduction

The world of large language models (LLMs) is exploding, and with it, the demand for powerful hardware to run these AI behemoths. Choosing the right GPU for your LLM needs can be a daunting task. Two of the most popular contenders in the GPU arena are the NVIDIA 3090 24GB and the NVIDIA L40S 48GB.

This article will guide you through a comprehensive comparison of the two, highlighting their strengths, weaknesses, and suitability for LLMs of different sizes. By the end, you'll be equipped to make an informed decision for your specific AI endeavors.

Comparison of NVIDIA 3090 24GB and NVIDIA L40S 48GB for Llama Model Performance

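Let's dive into the performance numbers! We'll be focusing on the Llama 3 models (8B and 70B), which are known for their impressive capabilities and are commonly used for research and development. Each model appears in two formats: Q4_K_M, a 4-bit quantized version, and F16, the unquantized 16-bit floating-point version.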

Our analysis will use two primary metrics:

- Token Generation Speed: This measures how many tokens per second the GPU can generate, directly impacting how quickly your LLM can respond to prompts. Think of it like the typing speed of your AI assistant - the higher the number, the faster it can generate text.

- Token Processing Speed: This measures how many tokens per second the GPU can read in from your prompt before it starts generating (often called prompt processing). It determines how long you wait before the first word of the answer appears, especially with long prompts. It's like the reading speed of your AI assistant: the higher the number, the faster it can take in information.

Token Generation Speed: Picking the Right GPU for Your LLM Size

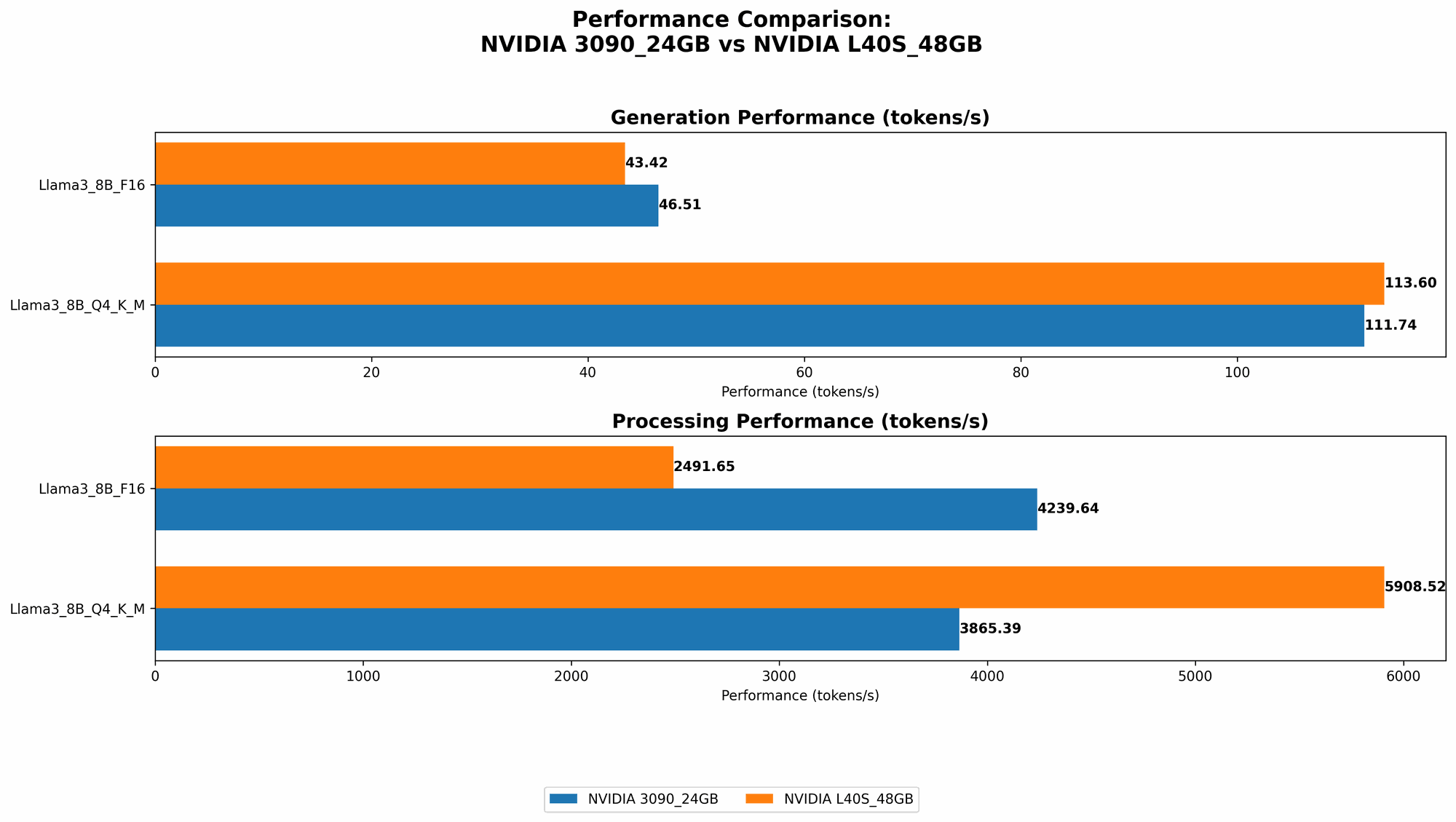

Let's start with Llama 3 8B. Check out the table below to see how the GPUs stack up:

| GPU | Llama 3 8B Q4_K_M Generation (tokens/second) | Llama 3 8B F16 Generation (tokens/second) |
|---|---|---|
| NVIDIA 3090 24GB | 111.74 | 46.51 |
| NVIDIA L40S 48GB | 113.6 | 43.42 |

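So, for Llama 3 8B, the two cards are nearly tied on token generation speed. With Q4_K_M the L40S 48GB is marginally faster (113.6 vs. 111.74 tokens/second), while with F16 the 3090 24GB comes out ahead (46.51 vs. 43.42 tokens/second). Neither gap is large.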
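Expressing the gaps as percentages makes them easier to compare. The sketch below does that for the 8B generation numbers; the dictionary layout and the `relative_gap` helper are illustrative choices, not part of any benchmark tool.

```python
# Llama 3 8B token-generation speeds (tokens/second), copied from the table above.
generation = {
    "Q4_K_M": {"3090 24GB": 111.74, "L40S 48GB": 113.6},
    "F16": {"3090 24GB": 46.51, "L40S 48GB": 43.42},
}


def relative_gap(faster: float, slower: float) -> float:
    """How much faster `faster` is than `slower`, as a percentage of `slower`."""
    return (faster - slower) / slower * 100.0


for fmt, speeds in generation.items():
    leader = max(speeds, key=speeds.get)   # GPU with the higher tokens/second
    trailer = min(speeds, key=speeds.get)  # GPU with the lower tokens/second
    gap = relative_gap(speeds[leader], speeds[trailer])
    print(f"{fmt}: {leader} leads by {gap:.1f}%")
```

Running it reports a lead of about 1.7% for the L40S 48GB at Q4_K_M and about 7.1% for the 3090 24GB at F16.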

Now let's look at the Llama 3 70B model:

| GPU | Llama 3 70B Q4_K_M Generation (tokens/second) | Llama 3 70B F16 Generation (tokens/second) |
|---|---|---|
| NVIDIA 3090 24GB | N/A | N/A |
| NVIDIA L40S 48GB | 15.31 | N/A |

As you can see, the 3090 24GB has no results for Llama 3 70B, which is what you'd expect: even at Q4_K_M, the 70B model's weights take roughly 40 GB, far more than the card's 24 GB. The L40S 48GB can run the Q4_K_M version at 15.31 tokens/second. It has no F16 result either, because a 70B model in F16 needs about 140 GB for its weights alone, well beyond 48 GB.

Verdict:

If you're working with smaller LLMs like Llama 3 8B, the NVIDIA 3090 24GB is perfectly capable. However, if you intend to work with larger models like Llama 3 70B (or models with even more parameters), the L40S 48GB offers a clear advantage: its 48 GB of memory lets it run a quantized 70B model that the 3090 24GB cannot load at all.

Token Processing Speed: Delving Deeper into AI Power

Now let's move on to the token processing speed, which is just as crucial for efficient LLM operation.

Llama 3 8B:

| GPU | Llama 3 8B Q4_K_M Processing (tokens/second) | Llama 3 8B F16 Processing (tokens/second) |
|---|---|---|
| NVIDIA 3090 24GB | 3865.39 | 4239.64 |
| NVIDIA L40S 48GB | 5908.52 | 2491.65 |

The L40S 48GB significantly outperforms the 3090 24GB on the Q4_K_M-quantized Llama 3 8B model, processing about 53% more tokens per second (5908.52 vs. 3865.39). The picture reverses with F16: here the 3090 24GB is about 70% faster (4239.64 vs. 2491.65).

Llama 3 70B:

| GPU | Llama 3 70B Q4_K_M Processing (tokens/second) | Llama 3 70B F16 Processing (tokens/second) |
|---|---|---|
| NVIDIA 3090 24GB | N/A | N/A |
| NVIDIA L40S 48GB | 649.08 | N/A |

The 3090 24GB again has no results, because the 70B model doesn't fit in its memory. The L40S 48GB still processes 649.08 tokens/second with Q4_K_M, which is decent, though far below its 8B figure.

Verdict:

While the L40S 48GB offers faster processing speeds for smaller LLMs (like Llama 3 8B) when using Q4_K_M quantization, it falls well behind the 3090 24GB with F16. That makes the 3090 24GB the better choice if you plan to run smaller models at F16. For larger LLMs (like Llama 3 70B), the L40S 48GB is the only one of the two that can run them at all, thanks to its larger memory.
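The two metrics combine into the response time a user actually sees: first the prompt is processed, then the reply is generated token by token. Here is a back-of-the-envelope sketch, assuming each phase runs at the measured Llama 3 8B Q4_K_M speeds; the 1,000-token prompt, the 500-token reply, and the `estimate_latency` helper are hypothetical.

```python
# Llama 3 8B Q4_K_M speeds (tokens/second), copied from the tables above.
SPEEDS = {
    "3090 24GB": {"processing": 3865.39, "generation": 111.74},
    "L40S 48GB": {"processing": 5908.52, "generation": 113.6},
}


def estimate_latency(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Rough end-to-end time in seconds: read the prompt, then generate the reply.

    Ignores model loading, sampling overhead, and any slowdown of generation
    as the context grows.
    """
    prompt_s = prompt_tokens / processing_tps   # time to read the prompt
    reply_s = output_tokens / generation_tps    # time to write the answer
    return prompt_s + reply_s


# Hypothetical request: a 1,000-token prompt and a 500-token answer.
for gpu, s in SPEEDS.items():
    t = estimate_latency(1000, 500, s["processing"], s["generation"])
    print(f"{gpu}: ~{t:.2f} s")
```

Under these assumptions the totals come to about 4.73 s for the 3090 24GB and 4.57 s for the L40S 48GB. Generating the 500-token reply dominates both, so the L40S's much faster prompt processing saves only about 0.16 s here; it matters more for long prompts with short answers.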

Quantization: Simplifying the AI Brain

If you haven't heard of quantization before, think of it as simplifying a complex recipe by using fewer ingredients. It reduces the size of an AI model by representing its data with fewer bits. This makes the model smaller and faster, but it can slightly reduce its accuracy.
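To make the idea concrete, here is a deliberately simplified sketch: one block of weights is stored as 4-bit integers plus a single shared scale factor. This is not the actual Q4_K_M format used by llama.cpp, which as we understand it groups weights into super-blocks with extra per-block scales and minimums and keeps some tensors at higher precision; the block size and weight values below are made up.

```python
# Simplified 4-bit block quantization: one scale per block, weights stored as
# signed 4-bit integers in the range -8..7. Not the real Q4_K_M layout.


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Quantize a block of floats to 4-bit integers plus a shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude to 7; avoid dividing by zero for an all-zero block.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [scale * v for v in q]


# Made-up example weights for one block of eight values.
weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0, 0.48, -0.88]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)                               # 4-bit codes, one per weight
print(f"max error: {max_error:.3f}")   # precision lost to rounding
```

Each weight now takes 4 bits instead of 16, plus one shared scale per block, and the rounding error (up to about 0.06 in this example) is the accuracy cost described above.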

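Q4_K_M stores weights in roughly 4 to 5 bits instead of 16, so far less data has to be read from memory for each generated token. That is why Q4_K_M generation runs more than twice as fast as F16 on both GPUs. F16, with its higher precision, generally delivers slightly more accurate results. Precision alone does not explain why the 3090 24GB leads at F16 processing, though; that is simply how the two cards measured in these benchmarks.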

The takeaway:

Quantization is a powerful tool for optimizing LLM performance, but you need to consider the trade-offs between speed and accuracy.

Factors to Consider When Choosing Between NVIDIA 3090 24GB and NVIDIA L40S 48GB

1. Memory Capacity: The AI's Storage Closet

The L40S 48GB has a clear advantage in memory capacity with its whopping 48 GB of GDDR6 memory, twice the 3090's 24 GB. This lets it hold larger models like Llama 3 70B (in quantized form), which need significant memory to store their massive parameter sets. The 3090 24GB cannot fit such models in its memory, which is why it has no 70B results in the benchmarks above.

Imagine your AI as a chef. The memory capacity of the GPU is like the chef's pantry. The larger the pantry, the more ingredients (parameters) the chef can store, allowing them to create more complex and intricate dishes (models).
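A rough rule of thumb: the weights alone need about (number of parameters × bits per weight ÷ 8) bytes. The sketch below applies it to both Llama 3 sizes. The rounded parameter counts and the ~4.8 bits/weight average for Q4_K_M are assumptions for illustration, and real memory use is higher because of the KV cache and runtime overhead.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (1 GB = 1e9 bytes).

    Billions of parameters times bytes per parameter gives gigabytes directly.
    The KV cache, activations, and framework overhead need room on top of this.
    """
    return params_billion * bits_per_weight / 8


# Assumption: ~4.8 bits/weight is a rough average for Q4_K_M, not an exact figure.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}
GPUS = {"3090 24GB": 24, "L40S 48GB": 48}
# Rounded parameter counts for the two Llama 3 sizes.
MODELS = {"Llama 3 8B": 8.0, "Llama 3 70B": 70.0}

for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        need = weight_memory_gb(params, bits)
        # Rough check on weights only; a real deployment needs extra headroom.
        fits = [gpu for gpu, vram in GPUS.items() if need < vram]
        print(f"{model} {fmt}: ~{need:.0f} GB, fits on: {', '.join(fits) or 'neither'}")
```

Under these assumptions the 70B model needs roughly 42 GB at Q4_K_M, which fits in 48 GB but not in 24 GB, and about 140 GB at F16, more than either card holds. That lines up with the N/A entries in the benchmark tables, while the 8B model fits on both cards in either format.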

2. Power Consumption: The AI's Energy Appetite

Both GPUs are power-hungry beasts, each rated for up to 350 W of board power. Despite its larger memory, the L40S 48GB does not carry a higher power rating than the 3090 24GB, so the difference in running cost comes down to how efficiently each card handles your workload. Over long runs, electricity is still a real expense for budget-conscious users.
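One way to compare energy use is energy per generated token: power draw divided by generation speed. Here is a rough sketch, assuming the card draws its full 350 W rating the whole time (real draw during inference is often lower) and a hypothetical electricity price; the helper names are illustrative.

```python
def joules_per_token(power_watts: float, tokens_per_second: float) -> float:
    """Energy per generated token: a watt is one joule per second."""
    return power_watts / tokens_per_second


def energy_cost(power_watts: float, hours: float, price_per_kwh: float) -> float:
    """Electricity cost of running at a constant power draw for a number of hours."""
    kwh = power_watts / 1000 * hours
    return kwh * price_per_kwh


# Assumption: full 350 W rated draw while generating. This overstates typical
# usage; measure the real figure (for example with nvidia-smi) if you can.
POWER_W = 350

# Llama 3 8B generation speeds on the 3090 24GB, from the tables above.
print(f"Q4_K_M: {joules_per_token(POWER_W, 111.74):.2f} J/token")
print(f"F16:    {joules_per_token(POWER_W, 46.51):.2f} J/token")

# Hypothetical usage: 8 hours a day for 30 days at 0.15 per kWh.
print(f"Monthly cost: {energy_cost(POWER_W, 8 * 30, 0.15):.2f}")
```

With these inputs, Q4_K_M works out to about 3.13 J/token versus about 7.53 J/token for F16, so on the same card the quantized format uses less than half the energy per generated token.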

3. Cost: The Price Tag of AI Expertise

The L40S 48GB comes at a premium price compared to the 3090 24GB, reflecting its positioning as a data-center card with twice the memory. The 3090 24GB, while still expensive, offers a more affordable option for those seeking a balance between cost and performance.

4. Availability: The AI Hardware Lottery

Both GPUs can be challenging to acquire due to their high demand and limited supply. However, the L40S 48GB might be even more difficult to find, as it is a newer and more specialized product.

5. Cooling and Noise: The AI's Cooling System

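Both GPUs generate significant heat under load, and the cooling they need can be noisy. You'll need a robust cooling system to prevent overheating and maintain optimal performance. The L40S 48GB needs extra care: it is a passively cooled data-center card with no fans of its own, so it relies on strong airflow from a server chassis and is poorly suited to a typical desktop case without added cooling.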

6. Software Compatibility: The AI's Programming Language

Both GPUs are fully compatible with popular machine learning frameworks like TensorFlow and PyTorch. The L40S 48GB is built on the newer Ada Lovelace architecture, whose tensor cores support FP8, a format the 3090's Ampere architecture lacks, so some newer tools and libraries can use features the 3090 24GB cannot. You should always check software compatibility before making your final decision.

7. Use Case: Tailoring Your AI to Its Task

The best choice between the NVIDIA 3090 24GB and NVIDIA L40S 48GB depends entirely on your specific needs and the size of your LLM.

- For smaller LLMs (like Llama 3 8B) or those prioritizing F16 precision, the 3090 24GB can be a cost-effective and powerful option.

- For larger LLMs (like Llama 3 70B) or those needing the fastest Q4_K_M prompt processing, the L40S 48GB is the superior choice, despite its higher cost.

Conclusion: Finding the Right GPU for Your AI Journey

Selecting the right GPU for your LLM is crucial for maximizing performance, accuracy, and efficiency. The NVIDIA 3090 24GB and NVIDIA L40S 48GB offer distinct advantages and disadvantages.

The 3090 24GB excels at F16 and affordability, making it suitable for smaller LLMs and cost-conscious developers. The L40S 48GB, with its larger memory and faster Q4_K_M prompt processing, reigns supreme for larger LLMs and for those who prioritize capability over cost.

Ultimately, the best GPU for your AI endeavors depends on your specific needs, budget, and model size. By carefully considering the factors discussed in this article, you can make an informed decision and embark on your AI journey with confidence.

FAQ: Unveiling the Mysteries of LLMs and GPUs

1. What is a large language model (LLM)?

LLMs are sophisticated AI models trained on massive amounts of text data. They can understand, generate, and translate human language with impressive accuracy, making them ideal for tasks like text summarization, chatbot development, and writing creative content.

2. What is quantization?

Quantization is a process of compressing the size of an LLM by representing its data using fewer bits. This can dramatically speed up inference time, but it can also slightly reduce accuracy.

3. What is the difference between Q4_K_M and F16?

F16 stores each weight as a 16-bit floating-point number, while Q4_K_M is a quantized format that uses roughly 4 to 5 bits per weight. Q4_K_M therefore takes much less memory and usually generates tokens faster, at the cost of a small loss in accuracy. The choice between them depends on your specific needs, balancing accuracy and performance.

4. Why is memory capacity so important for LLMs?

LLMs require large amounts of memory to store their vast parameter sets. A GPU with insufficient memory can struggle to handle larger models effectively.

5. How do I know which GPU is right for me?

The best GPU depends on your specific LLM, the type of tasks you're performing, and your tolerance for trade-offs between cost, performance, and energy consumption. This guide provides a comprehensive framework to help you make an informed decision.
