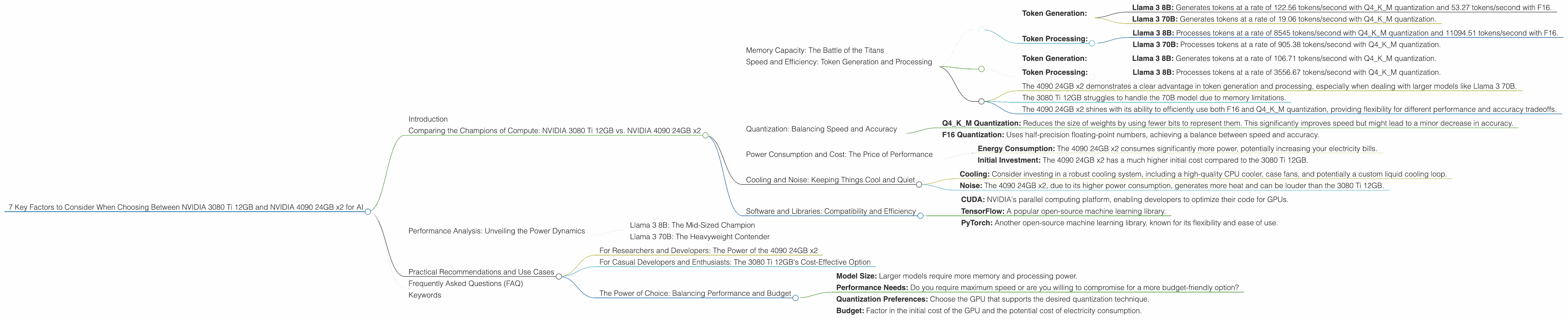

7 Key Factors to Consider When Choosing Between NVIDIA 3080 Ti 12GB and NVIDIA 4090 24GB x2 for AI

Introduction

In the rapidly evolving world of artificial intelligence, Large Language Models (LLMs) are transforming how we interact with technology. These powerful models, capable of generating human-like text, translating languages, and answering questions, require significant computational resources to run effectively. Choosing the right hardware for your LLM project can be a daunting task, especially with the plethora of GPUs available in the market.

This article dives into the critical factors to consider when deciding between the NVIDIA 3080 Ti 12GB and the NVIDIA 4090 24GB x2 setups for running LLMs, specifically for developers who want to explore local model deployment. We'll analyze performance differences, discuss strengths and weaknesses, and provide practical recommendations based on your specific needs.

Comparing the Champions of Compute: NVIDIA 3080 Ti 12GB vs. NVIDIA 4090 24GB x2

Memory Capacity: The Battle of the Titans

Let's start with the obvious: the NVIDIA 4090 24GB x2 setup offers a combined 48 GB of VRAM, while the 3080 Ti 12GB provides a respectable 12 GB. This difference matters most for large LLMs such as the 70B-parameter Llama 3. The GPU has to hold the model's weights plus the working memory used during inference (activations and the KV cache that stores the context). When VRAM runs out, layers must be offloaded to much slower system RAM, or the model fails to load at all. Note that the 48 GB is spread across two cards, so the inference software has to split the model between them.

Imagine your LLM as a hungry giant: it needs a lot of "food" (model weights and context) close at hand to function. The 4090 24GB x2 has a massive "pantry" (VRAM) to accommodate its appetite, while the 3080 Ti 12GB's "pantry" is more modest.
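As a rough check on these memory claims, the space needed for a model's weights is its parameter count times the bits stored per weight, divided by 8. The sketch below assumes nominal parameter counts (8 and 70 billion) and roughly 4.8 bits per weight for Q4_K_M (an approximate average that varies by model). It ignores the KV cache and runtime overhead, which add several more gigabytes in practice.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 = bytes.
# Assumptions: nominal parameter counts (8B and 70B) and ~4.8 bits/weight for
# Q4_K_M (an approximate average). KV cache, activations and framework
# overhead are NOT included, so real usage is several GB higher.

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}
SETUPS_VRAM_GB = {"3080 Ti 12GB": 12, "4090 24GB x2": 48}  # x2 = pooled across two cards


def weight_gb(params_billions: float, fmt: str) -> float:
    """Approximate size of the weights in GB (10^9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8


for model, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for fmt in BITS_PER_WEIGHT:
        need = weight_gb(params, fmt)
        fits = [name for name, vram in SETUPS_VRAM_GB.items() if need < vram]
        print(f"{model} {fmt}: ~{need:.1f} GB of weights; fits in: {', '.join(fits) or 'neither'}")
```

Under these assumptions, Llama 3 8B fits on either setup at Q4_K_M (about 4.8 GB) but needs the 4090 24GB x2 in F16 (about 16 GB). Llama 3 70B at Q4_K_M (about 42 GB) fits only in the pooled 48 GB, with little room left for context.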

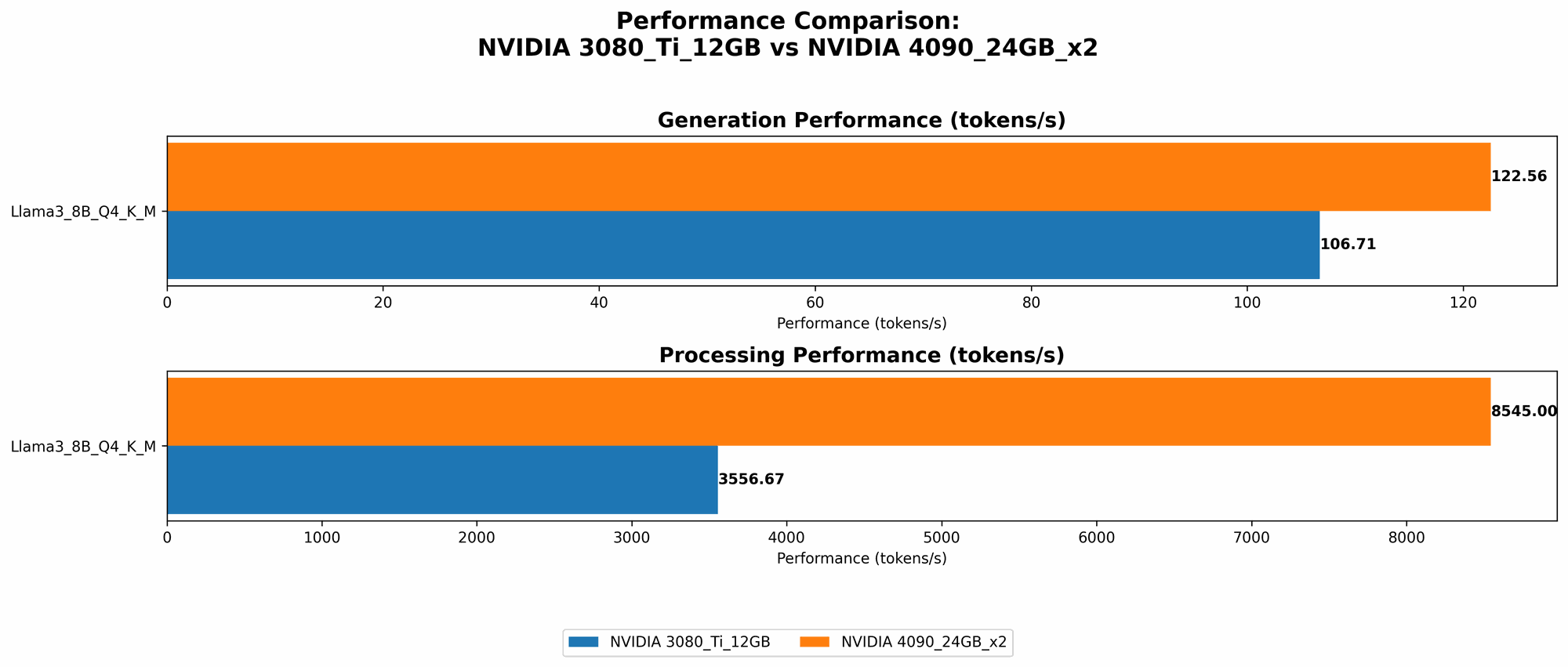

Speed and Efficiency: Token Generation and Processing

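Two speeds matter for LLM inference. Token generation is the rate at which the model produces new output tokens, one at a time, and it determines how quickly a reply appears. Token processing (often called prompt processing) is the rate at which the model ingests the input tokens; because the whole prompt can be evaluated in parallel, it is much faster than generation.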

NVIDIA 4090 24GB x2:

- Token generation:
  - Llama 3 8B: Generates tokens at a rate of 122.56 tokens/second with Q4_K_M quantization and 53.27 tokens/second with F16.
  - Llama 3 70B: Generates tokens at a rate of 19.06 tokens/second with Q4_K_M quantization.
- Token processing:
  - Llama 3 8B: Processes tokens at a rate of 8545 tokens/second with Q4_K_M quantization and 11094.51 tokens/second with F16.
  - Llama 3 70B: Processes tokens at a rate of 905.38 tokens/second with Q4_K_M quantization.

NVIDIA 3080 Ti 12GB:

- Token generation:
  - Llama 3 8B: Generates tokens at a rate of 106.71 tokens/second with Q4_K_M quantization.
- Token processing:
  - Llama 3 8B: Processes tokens at a rate of 3556.67 tokens/second with Q4_K_M quantization.

Key Observations:

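- On Llama 3 8B with Q4_K_M, the 4090 24GB x2 is faster across the board: about 15% faster at token generation (122.56 vs. 106.71 tokens/second) and about 2.4 times as fast at token processing (8545 vs. 3556.67 tokens/second).

- The 3080 Ti 12GB has no Llama 3 70B result: even at Q4_K_M, the 70B model's weights alone take roughly 40 GB, far more than its 12 GB of VRAM.

- With 48 GB, the 4090 24GB x2 can also run Llama 3 8B in F16, which the 3080 Ti 12GB cannot hold. Interestingly, F16 processes prompts faster than Q4_K_M on this setup (11094.51 vs. 8545 tokens/second) but generates tokens less than half as fast (53.27 vs. 122.56). A likely explanation is that generation is limited by memory bandwidth, where smaller quantized weights help, while prompt processing is limited by compute.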
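The two rates combine into a rough response time: the prompt is processed first, then the reply is generated one token at a time. The sketch below uses the Q4_K_M figures quoted above and assumes a hypothetical 500-token prompt and 200-token reply; it ignores model loading, sampling and other overhead, so treat the results as approximate.

```python
# Rough time for one request: prompt processing + token generation.
# Rates are the Q4_K_M figures quoted in this article (tokens/second).
# Assumption: overhead (model loading, sampling, framework) is ignored.

RATES = {
    # (setup, model): (token processing tok/s, token generation tok/s)
    ("4090 24GB x2", "Llama 3 8B"): (8545.0, 122.56),
    ("3080 Ti 12GB", "Llama 3 8B"): (3556.67, 106.71),
    ("4090 24GB x2", "Llama 3 70B"): (905.38, 19.06),
}


def response_seconds(prompt_tokens: int, output_tokens: int,
                     pp_rate: float, tg_rate: float) -> float:
    """Time to ingest the prompt plus time to generate the reply."""
    return prompt_tokens / pp_rate + output_tokens / tg_rate


for (setup, model), (pp, tg) in RATES.items():
    t = response_seconds(500, 200, pp, tg)
    print(f"{setup}, {model}: ~{t:.1f} s for a 500-token prompt and a 200-token reply")
```

With these assumptions the estimates come out to roughly 1.7 s, 2.0 s and 11 s. For short prompts, generation dominates the total, which is why the two setups are much closer on Llama 3 8B than the token-processing figures alone suggest.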

Quantization: Balancing Speed and Accuracy

Quantization is a technique used to reduce the size of LLM weights, enabling faster inference without sacrificing too much accuracy. It's like compressing a large file to make it easier to share and load quickly.

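- Q4_K_M Quantization: A 4-bit quantization format from llama.cpp (GGUF) that stores weights in just under 5 bits each on average, about 30% of their F16 size. This substantially reduces memory use and speeds up token generation, at the cost of a small loss in accuracy.

- F16: Stores each weight as a 16-bit (half-precision) floating-point number. Strictly speaking this is not quantization but the higher-precision baseline; it preserves the most accuracy but needs roughly three times the memory of Q4_K_M.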
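To make the idea concrete, here is a deliberately simplified 4-bit block quantizer in plain Python: each block of 32 weights is stored as one scale plus 32 small integers. This is an illustrative assumption, closer in spirit to llama.cpp's simple Q4_0 format than to Q4_K_M, which uses larger super-blocks with quantized per-sub-block scales and minimums. The Gaussian "weights" are synthetic.

```python
import random

BLOCK_SIZE = 32  # weights per block (an illustrative choice)


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization: one float scale, integer codes in [-7, 7]."""
    max_abs = max(abs(w) for w in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in block]  # |w / scale| <= 7, so codes fit in 4 bits
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Reconstruct approximate weights from the scale and codes."""
    return [scale * c for c in codes]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # synthetic weights
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_err = max(abs(w - r) for w, r in zip(weights, restored))

# Storage: 32 codes x 4 bits + one 16-bit scale = 144 bits, i.e. 4.5 bits/weight vs 16 for F16.
bits_per_weight = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE
print(f"max abs error {max_err:.5f}, scale {scale:.5f}, {bits_per_weight} bits/weight")
```

Because each code is rounded to the nearest step, the reconstruction error per weight is at most half a step (`scale / 2`); that bounded error is the "small loss in accuracy" traded for a roughly 3.5x smaller footprint.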

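Both GPUs can run either format; the real constraint is memory. In F16, Llama 3 8B's weights alone take about 16 GB, which fits in the 4090 24GB x2's 48 GB but not in the 3080 Ti 12GB's 12 GB, so on the 3080 Ti the 8B model has to be run quantized (for example, with Q4_K_M). This limits the precision options available on the smaller card.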

Power Consumption and Cost: The Price of Performance

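The NVIDIA 4090 24GB x2 setup is power-hungry and comes at a premium price: each RTX 4090 is rated at 450 W of board power, so the pair can draw around 900 W, versus 350 W for a single 3080 Ti. The 3080 Ti 12GB, while still a powerful card, draws far less power overall and has a much lower price tag.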

Consider the following:

- Energy Consumption: The 4090 24GB x2 can draw more than twice as much power under load, which raises electricity bills and calls for a larger power supply.

- Initial Investment: The 4090 24GB x2 has a much higher initial cost compared to the 3080 Ti 12GB.
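To put the power difference in money terms, the sketch below estimates the monthly electricity cost of the GPUs alone. The usage pattern (4 hours a day at full board power, 30 days) and the $0.15/kWh price are hypothetical placeholders; substitute your own. Real LLM inference often draws less than the rated board power, and the rest of the PC is ignored.

```python
# Rough monthly electricity cost of the GPUs alone at full board power.
# Assumptions (hypothetical): 450 W per RTX 4090, 350 W for the RTX 3080 Ti,
# 4 hours/day at full load, 30 days, $0.15 per kWh. Real draw is often lower.

BOARD_POWER_W = {"3080 Ti 12GB": 350, "4090 24GB x2": 2 * 450}


def monthly_cost(watts: float, hours_per_day: float = 4, days: int = 30,
                 price_per_kwh: float = 0.15) -> float:
    """Energy used over the period (kWh) times the electricity price."""
    kwh = watts * hours_per_day * days / 1000
    return kwh * price_per_kwh


for setup, watts in BOARD_POWER_W.items():
    print(f"{setup}: {watts} W -> about ${monthly_cost(watts):.2f}/month")
```

With these placeholder numbers the gap is about $6.30 versus $16.20 a month; heavier daily use or pricier electricity scales both figures proportionally.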

The choice between the two depends on the specific needs of your project and your budget. If you're doing research or serving LLMs in production and need maximum speed and the ability to run 70B-class models, the 4090 24GB x2 may be justified. However, if you have budget constraints or are experimenting with smaller LLMs for personal use, the 3080 Ti 12GB could be a more practical and cost-effective option.

Cooling and Noise: Keeping Things Cool and Quiet

With high-performance GPUs comes heat generation. Both the 3080 Ti 12GB and the 4090 24GB x2 require adequate cooling solutions to prevent overheating and maintain optimal performance.

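- Cooling: Prioritize strong case airflow (enough intake and exhaust fans), and in a dual-4090 build leave space between the cards, since many RTX 4090 cards occupy three or more slots. A custom liquid cooling loop is an option for the hottest builds.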

- Noise: Because two 4090s can draw roughly 900 W combined, the 4090 24GB x2 setup produces more heat and is typically louder than the 3080 Ti 12GB.

Software and Libraries: Compatibility and Efficiency

Both the 3080 Ti 12GB and the 4090 24GB x2 support a wide range of AI software and libraries. These include:

- CUDA: NVIDIA's parallel computing platform, enabling developers to optimize their code for GPUs.

- TensorFlow: A popular open-source machine learning library.

- PyTorch: Another open-source machine learning library, known for its flexibility and ease of use.

Both cards are CUDA GPUs, so mainstream tools run on either. Still, confirm that your chosen model, inference engine, and libraries support your GPU and, for the dual-4090 setup, splitting a model across multiple GPUs.

Performance Analysis: Unveiling the Power Dynamics

Llama 3 8B: The Mid-Sized Champion

The NVIDIA 4090 24GB x2 outperforms the 3080 Ti 12GB in both token generation and processing for the Llama 3 8B model. A direct comparison is only possible at Q4_K_M, because the 3080 Ti 12GB cannot hold the roughly 16 GB of F16 weights; the 4090 24GB x2 can run both formats, offering flexibility for different performance-accuracy tradeoffs.

For example, at Q4_K_M the 4090 24GB x2 generates tokens about 15% faster (122.56 vs. 106.71 tokens/second) and processes tokens about 2.4 times as fast (8545 vs. 3556.67 tokens/second) as the 3080 Ti 12GB.
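One likely reason the token-generation gap is so much smaller than the token-processing gap: producing each new token requires reading essentially all of the model's weights from VRAM, so generation is bounded by memory bandwidth rather than raw compute. The sketch below computes that rough ceiling from NVIDIA's published bandwidth figures (912 GB/s for the RTX 3080 Ti, 1,008 GB/s per RTX 4090) and an assumed ~4.8 GB of Q4_K_M weights for Llama 3 8B. It also assumes that a single request is served at roughly one card's bandwidth at a time, even when layers are split across two 4090s. Treat it as an upper bound, not a prediction.

```python
# Bandwidth-bound ceiling for token generation:
#   tokens/s <= memory bandwidth / bytes of weights read per token.
# Assumptions: every weight is read once per generated token; KV cache reads and
# compute time are ignored; ~4.8 GB of Q4_K_M weights for Llama 3 8B.

BANDWIDTH_GB_S = {"3080 Ti 12GB": 912, "4090 24GB x2": 1008}  # per card
MEASURED_TG = {"3080 Ti 12GB": 106.71, "4090 24GB x2": 122.56}  # tokens/s, from this article
WEIGHTS_GB = 4.8

for setup, bw in BANDWIDTH_GB_S.items():
    ceiling = bw / WEIGHTS_GB
    share = MEASURED_TG[setup] / ceiling
    print(f"{setup}: ceiling ~{ceiling:.0f} tok/s, measured {MEASURED_TG[setup]} "
          f"({share:.0%} of ceiling)")

ratio = BANDWIDTH_GB_S["4090 24GB x2"] / BANDWIDTH_GB_S["3080 Ti 12GB"]
measured_ratio = MEASURED_TG["4090 24GB x2"] / MEASURED_TG["3080 Ti 12GB"]
print(f"bandwidth ratio {ratio:.2f}x vs measured generation ratio {measured_ratio:.2f}x")
```

Under these assumptions the bandwidth ratio (about 1.11x) sits close to the measured 1.15x generation speedup, while token processing, which leans on compute, shows the much larger 2.4x gap.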

Llama 3 70B: The Heavyweight Contender

The NVIDIA 4090 24GB x2 is the clear winner in this category by default: the 3080 Ti 12GB cannot fit the 70B model in its limited VRAM, while the 4090 24GB x2's combined 48 GB can hold the Q4_K_M weights split across both cards.

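The 4090 24GB x2 processes Llama 3 70B tokens at 905.38 tokens/second and generates 19.06 tokens/second with Q4_K_M quantization. The 3080 Ti 12GB could only run this model by offloading most of its layers to system RAM, which is far slower, so no comparable figure exists.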

Practical Recommendations and Use Cases

For Researchers and Developers: The Power of the 4090 24GB x2

If you're pushing the boundaries of LLM research or running models in production, the NVIDIA 4090 24GB x2 offers the power and flexibility you need. Its 48 GB of VRAM lets it run 70B-class models at Q4_K_M and smaller models such as Llama 3 8B in F16, making it a powerful tool for exploring cutting-edge AI applications.

For Casual Developers and Enthusiasts: The 3080 Ti 12GB's Cost-Effective Option

For developers who are just starting out with LLMs or those who are working on smaller-scale projects, the NVIDIA 3080 Ti 12GB provides a more affordable and accessible option. It runs quantized models like Llama 3 8B (Q4_K_M) at over 100 tokens/second, within about 15% of the dual-4090 setup, and offers good value for money.

The Power of Choice: Balancing Performance and Budget

Ultimately, the best device for you depends on your specific use case, project requirements, and budget constraints. Consider the following factors:

- Model Size: Larger models require more memory and processing power.

- Performance Needs: Do you require maximum speed or are you willing to compromise for a more budget-friendly option?

- Precision and Quantization: Make sure the GPU has enough VRAM for the model at the precision you want; F16 weights need roughly three times the memory of Q4_K_M.

- Budget: Factor in the initial cost of the GPU and the potential cost of electricity consumption.

Frequently Asked Questions (FAQ)

Q: What is an LLM and why are they important? A: A Large Language Model (LLM) is a type of artificial intelligence model trained on massive amounts of text data. LLMs can generate human-like text, translate languages, answer questions, and much more, revolutionizing fields like natural language processing, content creation, and customer service.

Q: What is quantization and how does it help? A: Quantization is a technique used to reduce the size of LLM weights, enabling faster inference without sacrificing too much accuracy. It's like compressing a large file to make it easier to share and load quickly. By reducing the number of bits used to represent weights, quantization cuts memory usage and usually speeds up token generation.

Q: Should I choose a multi-GPU setup for my LLM? A: The main benefit of a multi-GPU setup is pooled VRAM: it lets you run models, such as Llama 3 70B, that do not fit on a single card. When a model is split across GPUs by layers, the common approach, the cards largely work in turn, so generation speed does not simply double. If you need to run large models and are comfortable with the added complexity and cost, multiple GPUs are worthwhile; for smaller models and budget-conscious projects, a single GPU is usually sufficient.
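For a sense of how a multi-GPU setup accommodates a model too big for one card, the sketch below divides a model's layers across GPUs in proportion to their VRAM, similar in spirit to how llama.cpp spreads layers over several GPUs. The helper `split_layers` and its proportional rule are hypothetical illustrations, not any library's API. It assumes Llama 3 70B's 80 transformer layers are roughly equal in size and total about 42 GB at Q4_K_M, and it ignores the KV cache and overhead.

```python
# Illustrative layer split across GPUs, proportional to each card's VRAM.
# Assumptions: 80 roughly equal transformer layers, ~42 GB of Q4_K_M weights
# in total; KV cache and runtime overhead are ignored.


def split_layers(n_layers: int, vram_gb: list[float]) -> list[int]:
    """Hypothetical helper: give each GPU a share of layers proportional to its VRAM."""
    total = sum(vram_gb)
    counts = [int(n_layers * v / total) for v in vram_gb]
    # Rounding down can leave a few layers unassigned; hand them out one per GPU in order.
    for i in range(n_layers - sum(counts)):
        counts[i % len(counts)] += 1
    return counts


N_LAYERS, WEIGHTS_GB = 80, 42.0
gb_per_layer = WEIGHTS_GB / N_LAYERS

for name, cards in [("4090 24GB x2", [24, 24]), ("3080 Ti 12GB", [12])]:
    counts = split_layers(N_LAYERS, cards)
    per_card = [round(c * gb_per_layer, 1) for c in counts]
    fits = all(need <= vram for need, vram in zip(per_card, cards))
    print(f"{name}: layers {counts}, ~GB of weights per card {per_card}, fits: {fits}")
```

Under these assumptions each 4090 holds 40 layers, about 21 GB of weights, leaving a few gigabytes per card for context, while the single 12 GB card would need all 42 GB on its own.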
