7 Key Factors to Consider When Choosing Between NVIDIA 3080 10GB and NVIDIA A40 48GB for AI

Introduction

Large Language Models (LLMs) are booming, and developers are constantly looking for hardware that runs them quickly and efficiently. With so many GPUs available, making the right choice can be a real head-scratcher.

This article will focus on comparing two popular GPUs – the NVIDIA GeForce RTX 3080 10GB and the NVIDIA A40 48GB – for running LLMs like Llama 3, specifically analyzing their performance, strengths, and weaknesses. We'll break down the key factors you need to consider to make the best decision for your AI projects.

Performance Analysis: NVIDIA GeForce RTX 3080 10GB vs. NVIDIA A40 48GB

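Let's dive into the numbers and see how these GPUs perform when running LLMs like Llama 3. The data below comes from benchmark runs, so keep in mind that performance can vary depending on the specific LLM and task. An N/A entry means no result is available for that combination, typically because the model does not fit in the GPU's memory.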

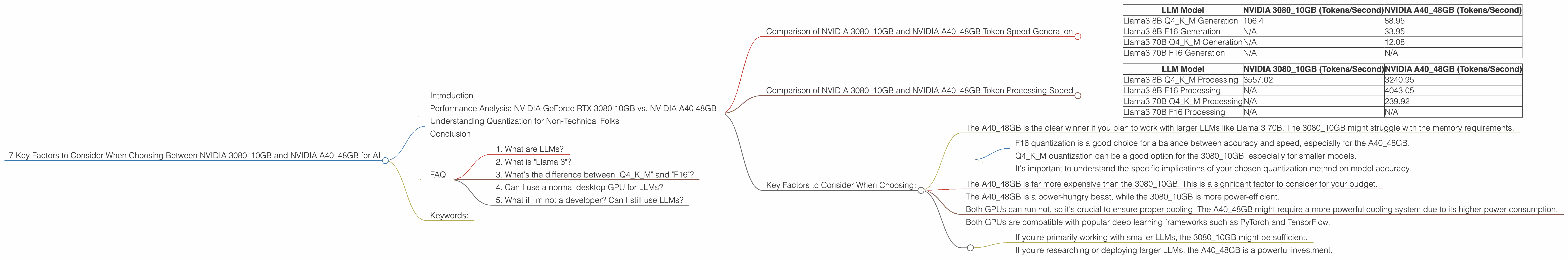

Comparison of NVIDIA 308010GB and NVIDIA A4048GB Token Speed Generation

| LLM Model | NVIDIA 3080_10GB (Tokens/Second) | NVIDIA A40_48GB (Tokens/Second) |

|---|---|---|

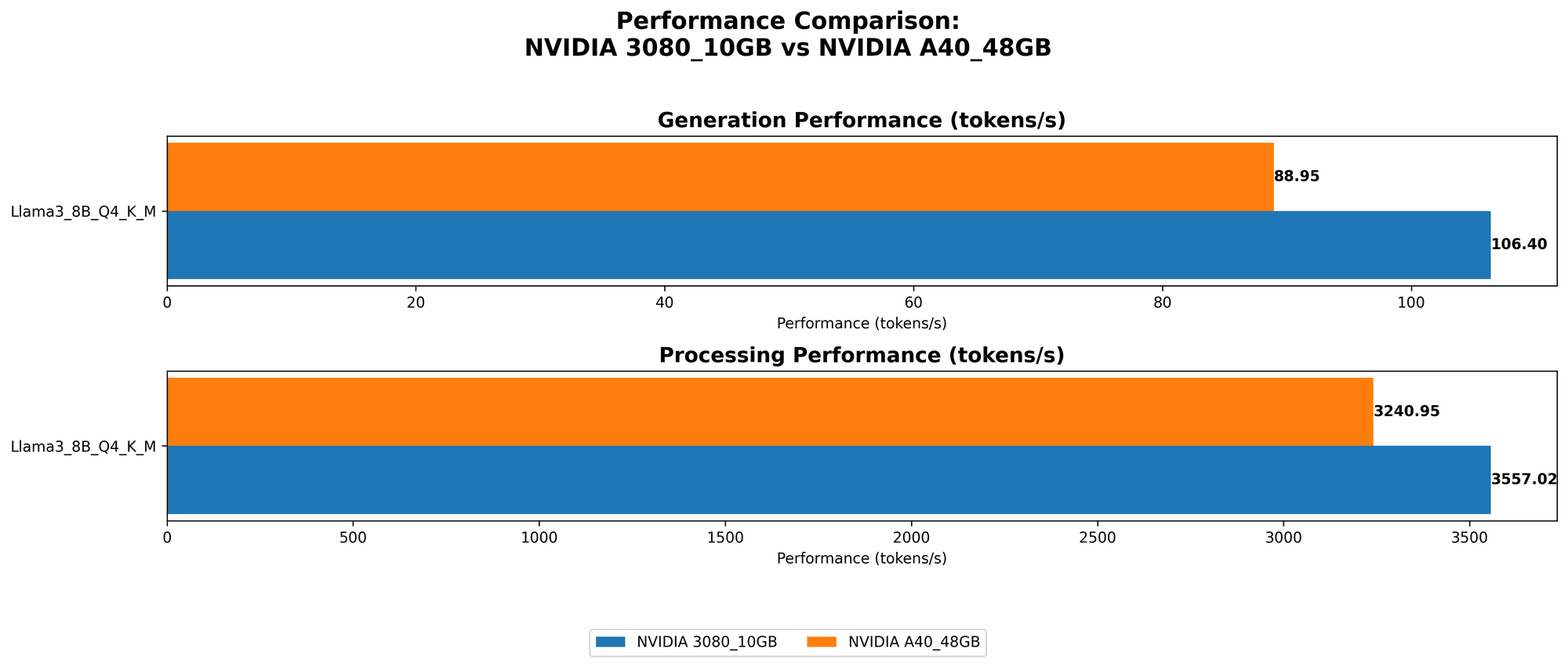

| Llama3 8B Q4KM Generation | 106.4 | 88.95 |

| Llama3 8B F16 Generation | N/A | 33.95 |

| Llama3 70B Q4KM Generation | N/A | 12.08 |

| Llama3 70B F16 Generation | N/A | N/A |

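- Observation: For the smaller Llama 3 8B model with Q4_K_M quantization, the NVIDIA 3080_10GB generates tokens about 20% faster than the A40_48GB (106.4 vs. 88.95 tokens/second). Only the A40_48GB has an F16 result, because the unquantized 8B weights need roughly 16 GB and the 3080_10GB has 10 GB. F16 is also much slower to generate with on the A40_48GB (33.95 tokens/second) than Q4_K_M, so it trades speed for full-precision weights. Notably, the A40_48GB can handle the much larger Llama 3 70B model at Q4_K_M, which is where its greater memory capacity truly shines.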
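The N/A entries in the table line up with a simple memory estimate: parameter count times bits per weight, divided by 8. The sketch below is a rough illustration, not part of the benchmark. The bits-per-weight figures are assumptions (16 for F16, an average of about 4.8 for Q4_K_M), and it counts weights only, ignoring the KV cache and runtime overhead.

```python
# Rough estimate of weight memory: parameters x bits per weight / 8.
# Assumed values (not from the benchmark data): 16 bits for F16 and an
# average of about 4.8 bits per weight for Q4_K_M.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}
PARAMS_BILLIONS = {"Llama3 8B": 8.0, "Llama3 70B": 70.0}
VRAM_GB = {"3080_10GB": 10.0, "A40_48GB": 48.0}


def weight_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits(model: str, fmt: str, gpu: str) -> bool:
    """True if the weights alone are smaller than the GPU's memory."""
    return weight_gb(PARAMS_BILLIONS[model], BITS_PER_WEIGHT[fmt]) < VRAM_GB[gpu]


for model in PARAMS_BILLIONS:
    for fmt in BITS_PER_WEIGHT:
        size = weight_gb(PARAMS_BILLIONS[model], BITS_PER_WEIGHT[fmt])
        verdict = {gpu: fits(model, fmt, gpu) for gpu in VRAM_GB}
        print(f"{model} {fmt}: ~{size:.1f} GB, fits: {verdict}")
```

Under these assumptions, the combinations that fail the check are the same ones marked N/A: 8B F16 and both 70B variants on the 3080_10GB, and 70B F16 on the A40_48GB.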

Comparison of NVIDIA 3080_10GB and NVIDIA A40_48GB Token Processing Speed

| LLM Model | NVIDIA 3080_10GB (Tokens/Second) | NVIDIA A40_48GB (Tokens/Second) |
|---|---|---|
| Llama3 8B Q4_K_M Processing | 3557.02 | 3240.95 |
| Llama3 8B F16 Processing | N/A | 4043.05 |
| Llama3 70B Q4_K_M Processing | N/A | 239.92 |
| Llama3 70B F16 Processing | N/A | N/A |

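- Observation: The NVIDIA 3080_10GB has an edge of about 10% in prompt processing speed for the smaller Llama 3 8B model at Q4_K_M (3557.02 vs. 3240.95 tokens/second). Only the A40_48GB has an F16 result, and its F16 processing (4043.05 tokens/second) is faster than its own Q4_K_M processing. Its processing speed for the larger 70B model (239.92 tokens/second) is far lower, as expected for a model of that size.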
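The two tables can be combined into a rough response-time estimate: prompt tokens divided by processing speed, plus output tokens divided by generation speed. The sketch below uses the benchmark figures above. The function name and the 1000-token prompt and 200-token reply are hypothetical, and it assumes both rates stay constant regardless of context length, which is a simplification.

```python
# Tokens/second copied from the two benchmark tables above.
PROCESSING_TPS = {
    ("3080_10GB", "Llama3 8B Q4_K_M"): 3557.02,
    ("A40_48GB", "Llama3 8B Q4_K_M"): 3240.95,
    ("A40_48GB", "Llama3 8B F16"): 4043.05,
    ("A40_48GB", "Llama3 70B Q4_K_M"): 239.92,
}
GENERATION_TPS = {
    ("3080_10GB", "Llama3 8B Q4_K_M"): 106.4,
    ("A40_48GB", "Llama3 8B Q4_K_M"): 88.95,
    ("A40_48GB", "Llama3 8B F16"): 33.95,
    ("A40_48GB", "Llama3 70B Q4_K_M"): 12.08,
}


def estimate_seconds(gpu: str, model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read the prompt and then generate the reply."""
    key = (gpu, model)
    prompt_time = prompt_tokens / PROCESSING_TPS[key]
    generation_time = output_tokens / GENERATION_TPS[key]
    return prompt_time + generation_time


# Hypothetical workload: a 1000-token prompt and a 200-token reply.
for gpu, model in PROCESSING_TPS:
    seconds = estimate_seconds(gpu, model, prompt_tokens=1000, output_tokens=200)
    print(f"{gpu} {model}: ~{seconds:.1f} s")
```

For a workload like this, generation time dominates the total, so the generation table matters more than the processing table unless prompts are very long.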

Key Factors to Consider When Choosing:

1. Size of the LLM:

- The A40_48GB is the clear winner if you plan to work with larger LLMs like Llama 3 70B. The 3080_10GB's 10 GB of memory is far too small to hold a 70B model's weights, even at 4-bit quantization.

2. Quantization:

- F16 stores weights as unquantized 16-bit floats. It preserves the most accuracy but needs the most memory, so of these two cards only the A40_48GB can run it, and only for the 8B model.

- Q4_K_M quantization can be a good option for the 3080_10GB, especially for smaller models.

- It's important to understand the specific implications of your chosen quantization method on model accuracy.

3. Your Budget:

- The A40_48GB is far more expensive than the 3080_10GB, which can rule it out for smaller budgets.

4. Power Consumption:

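- The two cards have similar rated board power: about 300 W for the A40_48GB and about 320 W for the 3080_10GB, per NVIDIA's specifications. Power draw alone is unlikely to decide the choice.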

5. Cooling Considerations:

- Both GPUs can run hot, so it's crucial to ensure proper cooling. The 3080_10GB has its own fans, while the A40_48GB is a passively cooled data-center card that relies on chassis airflow, so it needs a server-style case or added fans.

6. Software Compatibility:

- Both GPUs are compatible with popular deep learning frameworks such as PyTorch and TensorFlow.

7. Your Specific Use Case:

- If you're primarily working with smaller LLMs, the 3080_10GB might be sufficient.

- If you're researching or deploying larger LLMs, the A40_48GB is a powerful investment.

Understanding Quantization for Non-Technical Folks

Think of quantization like using a smaller ruler to measure something. In the world of LLMs, instead of using the full range of decimal numbers (like 3.14159), we use smaller sets of numbers (like 0, 1, 2, 3). This makes the calculations faster but can slightly affect the model's accuracy.

F16 is not really quantization: it stores each weight as a half-precision (16-bit) floating-point number, which keeps accuracy high but uses the most memory. Q4_K_M is a more aggressive scheme that stores weights in roughly 4 bits each, making the model much smaller and faster to generate with, but potentially reducing accuracy.
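To make the ruler analogy concrete, here is a minimal sketch of 4-bit quantization on a handful of made-up weights. It is a simplified symmetric scheme with one scale per block, chosen for illustration; it is not the actual Q4_K_M format, and the function names and sample values are hypothetical.

```python
def quantize_4bit(values):
    """Map floats to integers in [-7, 7] using one shared scale per block."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


# Made-up example weights, not taken from any real model.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", quantized)
print("restored: ", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each weight now needs only a small integer plus a shared scale, and the rounding error per weight is at most half the scale. That error is the accuracy cost the ruler analogy describes.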

Conclusion

Both the NVIDIA 3080_10GB and NVIDIA A40_48GB are capable GPUs for running LLMs. The choice depends on your specific needs and budget. The A40_48GB is ideal for larger models: its 48 GB of memory runs Llama 3 8B at F16 and Llama 3 70B at Q4_K_M, neither of which fits on the 3080_10GB, but it comes with a hefty price tag. The 3080_10GB is more affordable and, in these benchmarks, faster on Llama 3 8B at Q4_K_M, making it a good option for smaller quantized models.

FAQ

1. What are LLMs?

LLMs are "Large Language Models," sophisticated AI systems trained on massive amounts of text data. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

2. What is "Llama 3"?

Llama 3 is one of the most popular and widely used open-source LLMs. It's known for its versatility and ability to perform a wide range of language-based tasks.

3. What's the difference between "Q4_K_M" and "F16"?

Both describe how a model's weights are stored. F16 keeps every weight as a 16-bit floating-point number, so it is not quantized; it preserves the most accuracy but uses the most memory. Q4_K_M is a roughly 4-bit quantization format that makes the model much smaller and faster to generate with, at some cost in accuracy.

4. Can I use a normal desktop GPU for LLMs?

Yes, you can! Many modern GPUs are capable of running LLMs, especially the smaller ones. However, for larger models, dedicated AI GPUs like the A40_48GB are recommended.

5. What if I'm not a developer? Can I still use LLMs?

Absolutely! There are many user-friendly platforms and applications that allow you to interact with LLMs without needing to write code. You can explore these platforms to experience the power of LLMs firsthand.

Keywords:

NVIDIA, GeForce RTX 3080, A40, GPU, LLM, Llama 3, 8B, 70B, AI, machine learning, deep learning, quantization, Q4_K_M, F16, performance, benchmarks, token speed, processing speed, budget, power consumption, cooling, software compatibility, use case, model size, token generation, token processing