7 Key Factors to Consider When Choosing Between NVIDIA 3070 8GB and NVIDIA RTX A6000 48GB for AI

Introduction

The world of AI is rapidly evolving, fueled by the power of Large Language Models (LLMs). These models generate human-like text, translate languages, and answer questions, and they need substantial compute and GPU memory to run well. If you're diving into local LLMs, choosing the right hardware is crucial, and two popular choices are the NVIDIA 3070 8GB and the NVIDIA RTX A6000 48GB.

This article will guide you through the key factors to consider when deciding between these two GPUs, exploring the nuances of their performance with Llama model variants (Llama 3 8B and 70B) and helping you make an informed choice based on your needs and budget.

Understanding the Basics: 3070 8GB vs. RTX A6000 48GB

Let's break down the key differences between these two titans of the GPU world:

NVIDIA 3070 8GB: The Budget-Friendly Option

- Memory: 8GB GDDR6 - This might seem adequate for smaller LLMs, but it can be a bottleneck for larger models.

- Cores: 5888 CUDA cores - Enough power to handle smaller models smoothly.

- Price: More affordable than the RTX A6000.

NVIDIA RTX A6000 48GB: The Heavyweight Champion

- Memory: 48GB GDDR6 - Six times the memory of the 3070, enough to hold models that the smaller card cannot load at all.

- Cores: 10752 CUDA cores - Raw power unleashed!

- Price: Hefty price tag, reflecting its top-tier performance.

Performance Analysis: Llama 3 8B and 70B

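The real test comes when we put these GPUs to work with specific LLMs. Let's examine how they perform with Llama 3 8B and 70B for both Generation (producing output tokens one at a time) and Processing (ingesting the input prompt before the first output token appears). A null entry in the tables means no result was recorded for that combination.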

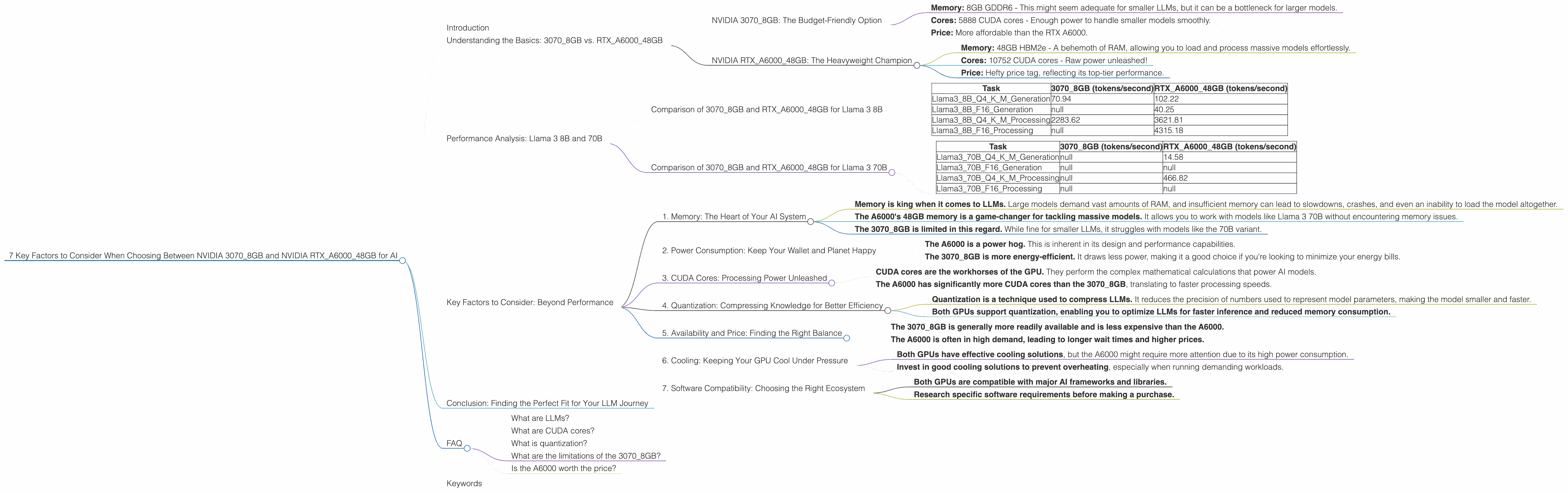

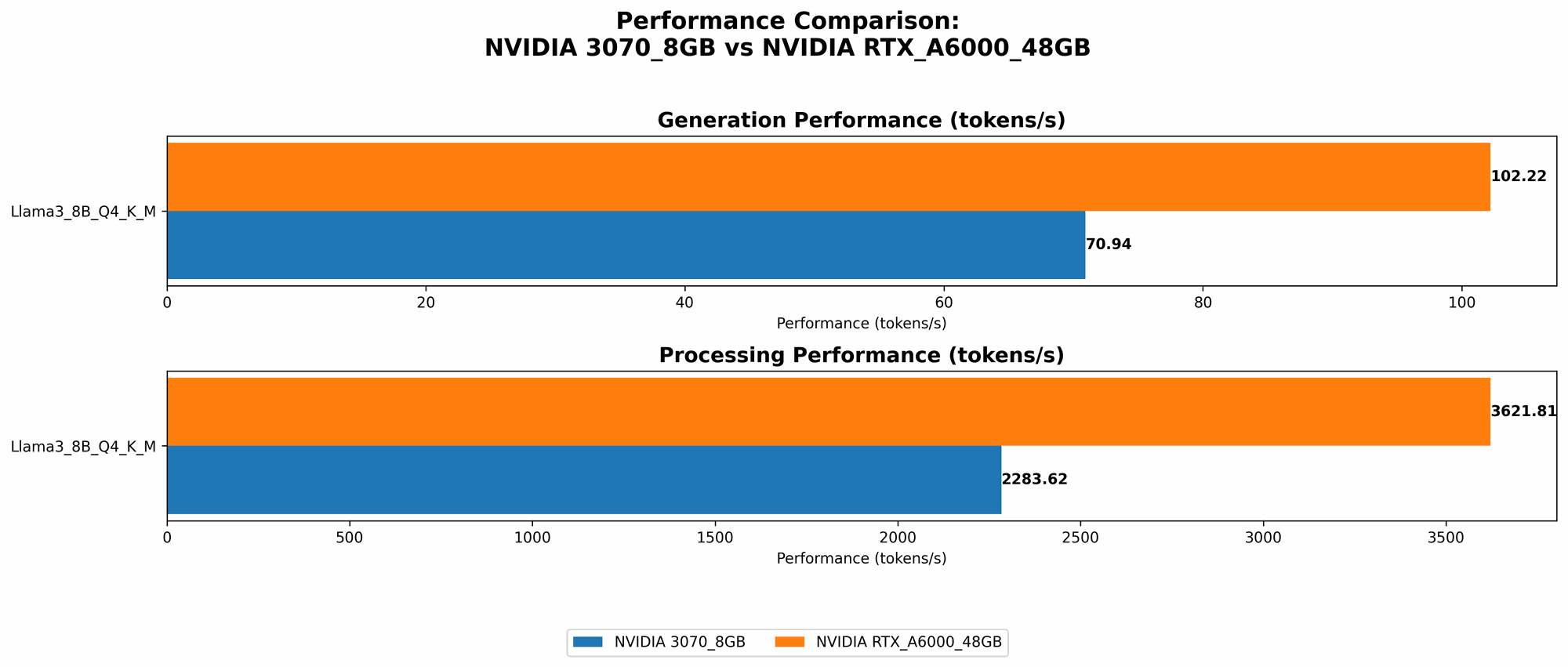

Comparison of 3070 8GB and RTX A6000 48GB for Llama 3 8B

| Task | 3070 8GB (tokens/second) | RTX A6000 48GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M, generation | 70.94 | 102.22 |
| Llama 3 8B F16, generation | null | 40.25 |
| Llama 3 8B Q4_K_M, processing | 2283.62 | 3621.81 |
| Llama 3 8B F16, processing | null | 4315.18 |

Key Observations:

- The RTX A6000 48GB outperforms the 3070 8GB in both generation and processing with the Q4_K_M (4-bit quantized) build of Llama 3 8B. The F16 (half-precision floating point) build has no 3070 8GB result to compare against: at 2 bytes per parameter, the F16 weights alone need about 16GB, twice that card's memory.

- The A6000 generates about 44% faster (102.22 vs. 70.94 tokens per second). This means faster responses and more fluid interaction with the LLM.

- For processing, the RTX A6000 again takes the lead at 3621.81 tokens per second against 2283.62. This is roughly 59% faster than the 3070 8GB.
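The percentages above follow directly from the table. As a minimal sketch, the snippet below recomputes them and also estimates how long a reply would take at each generation speed. The 500-token reply length is a hypothetical value chosen for illustration, not something taken from the benchmark.

```python
# Throughput figures (tokens/second) copied from the Llama 3 8B table.
RESULTS = {
    "Q4_K_M generation": {"3070 8GB": 70.94, "RTX A6000 48GB": 102.22},
    "Q4_K_M processing": {"3070 8GB": 2283.62, "RTX A6000 48GB": 3621.81},
}


def speedup_percent(baseline: float, candidate: float) -> float:
    """Return how much faster `candidate` is than `baseline`, in percent."""
    return (candidate / baseline - 1.0) * 100.0


def seconds_for_reply(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` output tokens at a steady rate."""
    return tokens / tokens_per_second


if __name__ == "__main__":
    for task, r in RESULTS.items():
        pct = speedup_percent(r["3070 8GB"], r["RTX A6000 48GB"])
        print(f"{task}: A6000 is {pct:.1f}% faster")

    reply_tokens = 500  # hypothetical reply length, for illustration only
    for gpu, rate in RESULTS["Q4_K_M generation"].items():
        print(f"{gpu}: {seconds_for_reply(reply_tokens, rate):.1f} s per reply")
```

The absolute gap per reply is a couple of seconds at this length, which may or may not matter depending on how interactive your workload is.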

Practical Recommendations:

- For developers working with Llama 3 8B, the RTX A6000 is the clear winner. It is faster with Q4_K_M and is the only one of the two cards with F16 results, which matters if you want to run the model without quantization.

- If budget is your primary concern, the 3070 8GB is a decent option for the Q4_K_M build of Llama 3 8B. At 70.94 tokens per second it still generates text quickly, but its 8GB of memory rules out larger models.

Comparison of 3070 8GB and RTX A6000 48GB for Llama 3 70B

| Task | 3070 8GB (tokens/second) | RTX A6000 48GB (tokens/second) |
|---|---|---|
| Llama 3 70B Q4_K_M, generation | null | 14.58 |
| Llama 3 70B F16, generation | null | null |
| Llama 3 70B Q4_K_M, processing | null | 466.82 |
| Llama 3 70B F16, processing | null | null |

Key Observations:

- The 3070 8GB doesn't have enough memory to handle the Llama 3 70B model. Even the 4-bit Q4_K_M build needs far more than the 8GB available on the card.

- The RTX A6000 48GB can run the Q4_K_M build of Llama 3 70B, which fits within its 48GB of memory. The F16 build has no result on either card: at 2 bytes per parameter, 70 billion parameters need about 140GB for the weights alone.

- The RTX A6000 is noticeably slower with Llama 3 70B than with Llama 3 8B: about 7x slower at generation (14.58 vs. 102.22 tokens per second with Q4_K_M). Each generated token requires a pass over all of the model's weights, and the 70B model has roughly 9x as many.
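One way to see why the larger model generates more slowly is a back-of-the-envelope ceiling: if generating a token means reading every weight once from GPU memory, then tokens per second cannot exceed memory bandwidth divided by model size. The sketch below applies that idea. The bandwidth figure (768 GB/s, the A6000's published spec) and the approximate model sizes are assumptions brought in for illustration; they are not part of the benchmark data, and real throughput also depends on factors this estimate ignores.

```python
# Assumed inputs (not from the benchmark tables):
A6000_BANDWIDTH_GB_S = 768.0  # published memory bandwidth, assumption
APPROX_MODEL_SIZE_GB = {      # approximate Q4_K_M weight sizes, assumption
    "Llama 3 8B Q4_K_M": 4.9,
    "Llama 3 70B Q4_K_M": 42.5,
}

# Measured generation speeds on the A6000, copied from the tables.
MEASURED_TOK_S = {
    "Llama 3 8B Q4_K_M": 102.22,
    "Llama 3 70B Q4_K_M": 14.58,
}


def ceiling_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on generation speed if each token reads all weights once."""
    return bandwidth_gb_s / model_size_gb


if __name__ == "__main__":
    for model, size_gb in APPROX_MODEL_SIZE_GB.items():
        ceiling = ceiling_tokens_per_second(A6000_BANDWIDTH_GB_S, size_gb)
        measured = MEASURED_TOK_S[model]
        print(f"{model}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s")
```

Under these assumptions the measured numbers sit below the ceiling for both models, which is consistent with generation speed being limited mainly by how many bytes of weights must be read per token.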

Practical Recommendations:

- For anyone aiming to work with the Llama 3 70B model, the RTX A6000 48GB is the only viable option of the two, and only with a quantized build such as Q4_K_M. The 3070 8GB simply lacks the memory capacity to handle this large model.

Key Factors to Consider: Beyond Performance

While performance is crucial, other factors deserve consideration.

1. Memory: The Heart of Your AI System

- Memory is king when it comes to LLMs. Large models demand vast amounts of GPU memory (VRAM), and insufficient memory can lead to slowdowns, crashes, or an inability to load the model at all.

- The A6000's 48GB of memory is a game-changer for tackling massive models. It lets you run the Q4_K_M build of Llama 3 70B on a single card.

- The 3070 8GB is limited in this regard. It is fine for smaller quantized LLMs, but the tables above show no results for it with Llama 3 70B or with Llama 3 8B at F16.

Think of it this way: if your model is a hungry dragon, then the A6000 is a giant feast, while the 3070 8GB is just a snack.
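To make the memory argument concrete, here is a rough sketch that estimates how much memory the weights alone would need and checks that against each card. It assumes nominal parameter counts of 8 and 70 billion, about 4.5 bits per weight as a stand-in for a 4-bit quantized format, and 1 GiB of headroom for everything else. All three are illustrative assumptions; real model files, the KV cache, and runtime overhead will differ.

```python
GIB = 1024 ** 3


def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    return n_params * bits_per_weight / 8 / GIB


def fits(n_params: float, bits_per_weight: float, vram_gib: float,
         headroom_gib: float = 1.0) -> bool:
    """True if weights plus an assumed headroom fit in `vram_gib`."""
    return weight_gib(n_params, bits_per_weight) + headroom_gib <= vram_gib


if __name__ == "__main__":
    models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}   # nominal counts, assumption
    formats = {"Q4 (~4.5 bits, assumed)": 4.5, "F16": 16}
    cards = {"3070 8GB": 8, "RTX A6000 48GB": 48}

    for model, n_params in models.items():
        for fmt, bits in formats.items():
            size = weight_gib(n_params, bits)
            verdicts = ", ".join(
                f"{card}: {'fits' if fits(n_params, bits, vram) else 'too large'}"
                for card, vram in cards.items()
            )
            print(f"{model} {fmt}: ~{size:.1f} GiB -> {verdicts}")
```

With these assumptions, the combinations flagged as too large line up with the null entries in the benchmark tables.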

2. Power Consumption: Keep Your Wallet and Planet Happy

- The A6000 draws more power. NVIDIA rates it at 300W maximum board power, compared with 220W for the 3070.

- The 3070 8GB draws less power in absolute terms, making it a good choice if you're looking to minimize your energy bills.

While the A6000 can be a bit of an energy vampire, its performance might be worth the extra cost.
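Whether the extra power is "worth it" can be framed as tokens generated per unit of energy. The sketch below does that arithmetic under a strong assumption: it uses each card's rated board power (220W and 300W from NVIDIA's published specs) as if it were the actual draw during inference, which was not measured here. The electricity price and hours of use are hypothetical parameters.

```python
# Rated board power in watts: an assumption standing in for measured draw.
RATED_WATTS = {"3070 8GB": 220.0, "RTX A6000 48GB": 300.0}

# Llama 3 8B Q4_K_M generation speeds, copied from the benchmark table.
GEN_TOK_S = {"3070 8GB": 70.94, "RTX A6000 48GB": 102.22}


def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Tokens generated per joule (1 W = 1 J/s)."""
    return tokens_per_second / watts


def energy_cost(watts: float, hours: float, price_per_kwh: float) -> float:
    """Electricity cost of running at `watts` for `hours`."""
    return watts / 1000.0 * hours * price_per_kwh


if __name__ == "__main__":
    hours, price = 8.0, 0.20  # hypothetical daily use and price per kWh
    for gpu, watts in RATED_WATTS.items():
        tpj = tokens_per_joule(GEN_TOK_S[gpu], watts)
        cost = energy_cost(watts, hours, price)
        print(f"{gpu}: {tpj:.3f} tokens/J, ~{cost:.2f} per {hours:.0f} h at full load")
```

Under these assumptions the A6000 costs more per hour but is not less efficient per generated token, so the answer depends on whether you care about the bill or the work done.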

3. CUDA Cores: Processing Power Unleashed

- CUDA cores are the workhorses of the GPU. They perform the complex mathematical calculations that power AI models.

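- The A6000 has about 1.8x the CUDA cores of the 3070 8GB (10752 vs. 5888), which contributes to its higher throughput. Speed does not scale linearly with core count, though: in the Llama 3 8B table the measured advantage is about 1.4x for generation and 1.6x for processing.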

Think of CUDA cores like a team of workers. The A6000 has a larger team, allowing it to complete tasks more efficiently.
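The "larger team" analogy holds only up to a point. As a quick check using the core counts and Llama 3 8B Q4_K_M throughput figures given earlier in the article, the following sketch compares the ratio of CUDA cores with the ratio of measured speeds.

```python
# Core counts and throughput figures as stated earlier in the article.
CUDA_CORES = {"3070 8GB": 5888, "RTX A6000 48GB": 10752}
THROUGHPUT_TOK_S = {
    "generation": {"3070 8GB": 70.94, "RTX A6000 48GB": 102.22},
    "processing": {"3070 8GB": 2283.62, "RTX A6000 48GB": 3621.81},
}


def ratio(a6000_value: float, rtx3070_value: float) -> float:
    """How many times larger the A6000 figure is than the 3070 figure."""
    return a6000_value / rtx3070_value


if __name__ == "__main__":
    cores = ratio(CUDA_CORES["RTX A6000 48GB"], CUDA_CORES["3070 8GB"])
    print(f"CUDA cores: {cores:.2f}x")
    for task, r in THROUGHPUT_TOK_S.items():
        print(f"{task}: {ratio(r['RTX A6000 48GB'], r['3070 8GB']):.2f}x")
```

The throughput ratios come out smaller than the core ratio, which suggests that core count alone is not a reliable predictor of LLM speed.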

4. Quantization: Compressing Knowledge for Better Efficiency

- Quantization is a technique used to compress LLMs. It reduces the precision of numbers used to represent model parameters, making the model smaller and faster.

- Both GPUs support quantization, enabling you to optimize LLMs for faster inference and reduced memory consumption.

Quantization is like condensing a huge textbook into a smaller, more manageable summary. You lose a little detail, but you gain efficiency in the process.
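To show what "reducing the precision" means in practice, here is a minimal sketch of symmetric 4-bit quantization applied to one small block of weights. It is a simplified illustration of the general idea, not the actual Q4_K_M scheme used in the benchmarks, and the sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to signed integers sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1       # largest representable integer (7 for 4-bit)
    qmin = -(2 ** (bits - 1))        # smallest representable integer (-8 for 4-bit)
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(qmin, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.42, -0.17, 0.03, -0.88, 0.61, 0.0, -0.29, 0.75]  # made-up values
    q, scale = quantize_block(weights)
    restored = dequantize_block(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("integers:", q)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight is stored as a small integer plus one shared scale per block, which is where the memory savings come from. The rounding error, at most half the scale in this sketch, is the "little detail" that gets lost.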

5. Availability and Price: Finding the Right Balance

- The 3070 8GB is generally more readily available and is less expensive than the A6000.

- The A6000 is often in high demand, leading to longer wait times and higher prices.

The choice often boils down to a trade-off between performance and your budget.

6. Cooling: Keeping Your GPU Cool Under Pressure

- Both GPUs have effective cooling solutions, but the A6000 might require more attention due to its high power consumption.

- Invest in good cooling solutions to prevent overheating, especially when running demanding workloads.

A hot GPU can be a performance killer, so make sure you have adequate cooling in place.

7. Software Compatibility: Choosing the Right Ecosystem

- Both GPUs are compatible with major AI frameworks and libraries.

- Research specific software requirements before making a purchase.

Make sure your chosen GPU plays nicely with the AI tools you plan to use.
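A practical first compatibility check is to confirm that the NVIDIA driver sees the card and reports the memory you expect. The sketch below shells out to `nvidia-smi` with its `--query-gpu` option and parses the result. It assumes `nvidia-smi` is installed and on your PATH, and returns an empty list otherwise. The function names are illustrative, and this does not replace checking the requirements of your specific framework.

```python
import shutil
import subprocess

# Ask nvidia-smi for each GPU's name and total memory (MiB), one CSV line per GPU.
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_lines(text):
    """Parse 'name, memory_mib' lines into (name, memory_mib) tuples."""
    gpus = []
    for line in text.strip().splitlines():
        name, memory_mib = line.rsplit(",", 1)
        gpus.append((name.strip(), int(memory_mib.strip())))
    return gpus


def list_gpus():
    """Return detected GPUs, or an empty list if nvidia-smi is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    except subprocess.CalledProcessError:
        return []
    return parse_gpu_lines(result.stdout)


if __name__ == "__main__":
    detected = list_gpus()
    if not detected:
        print("No NVIDIA GPU detected (or nvidia-smi is not available).")
    for name, memory_mib in detected:
        print(f"{name}: {memory_mib / 1024:.1f} GiB")
```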

Conclusion: Finding the Perfect Fit for Your LLM Journey

The choice between the NVIDIA 3070 8GB and the NVIDIA RTX A6000 48GB depends on your needs and priorities. If you're working with smaller LLMs like Llama 3 8B and budget is a major factor, the 3070 8GB offers adequate performance and affordability. However, if you're tackling larger models like Llama 3 70B or demand the absolute highest performance, the RTX A6000 48GB is the clear winner.

Remember, the key is to choose a GPU that meets your specific needs and fits within your budget.

FAQ

What are LLMs?

Large Language Models (LLMs) are AI systems that can understand and generate human-like text. They are trained on vast amounts of data and excel at tasks such as text generation, translation, summarization, and answering questions.

What are CUDA cores?

CUDA cores are specialized processors on NVIDIA GPUs that accelerate computations for tasks like AI training and inference. More CUDA cores mean more processing power.

What is quantization?

Quantization is a technique for compressing LLMs by reducing the precision of numbers representing model parameters. This makes models smaller and faster, but with a slight loss of accuracy.

What are the limitations of the 3070 8GB?

The 3070 8GB's primary limitation is its limited memory (8GB), which can be insufficient for large LLMs.

Is the A6000 worth the price?

The A6000 comes with a premium price tag, but its high performance, large memory, and capability to handle demanding workloads make it a worthwhile investment for serious AI development.

Keywords

LLMs, NVIDIA 3070 8GB, NVIDIA RTX A6000 48GB, GPU, AI, Llama 3 8B, Llama 3 70B, Performance, Memory, Power Consumption, CUDA cores, Quantization, Availability, Price, Cooling, Software Compatibility