7 Key Factors to Consider When Choosing Between Apple M3 100GB 10Cores and NVIDIA 4090 24GB x2 for AI

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the need for powerful hardware to run them efficiently. Whether you're a developer building the next groundbreaking AI application, a researcher exploring the frontiers of natural language processing, or just someone fascinated by the capabilities of these amazing models, you'll likely need a powerful machine to bring your ideas to life.

Two popular choices stand out: the Apple M3 100GB 10Cores and the NVIDIA 4090 24GB x2. Both are strong contenders for running LLMs, each with its own strengths and weaknesses. This article compares the two devices to help you make an informed decision based on your specific needs and budget.

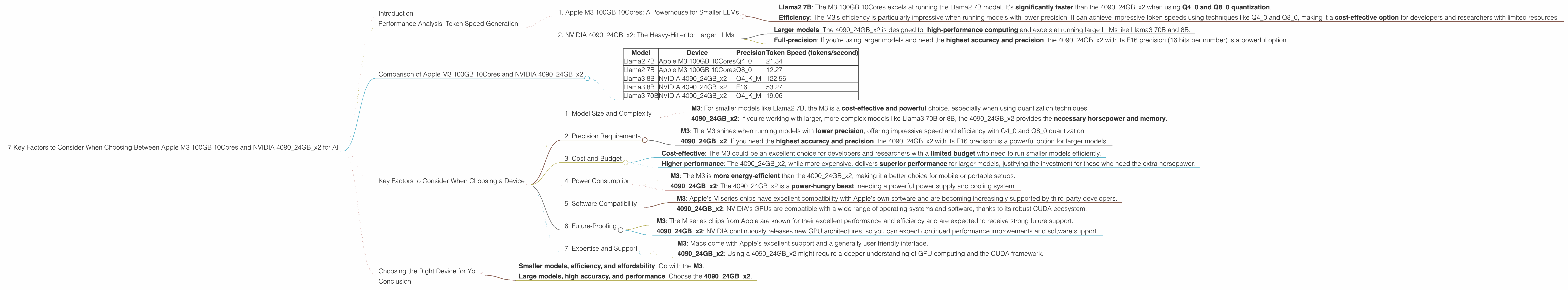

Performance Analysis: Token Speed Generation

1. Apple M3 100GB 10Cores: A Powerhouse for Smaller LLMs

The Apple M3 100GB 10Cores is a marvel of engineering, known for its speed and efficiency. It's particularly good at running smaller LLMs, especially with quantization formats like Q4_0 and Q8_0. Quantization shrinks a model by storing each weight with fewer bits (roughly 4 or 8 instead of 16), which reduces memory use and usually speeds up generation.
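To make the idea concrete, here is a minimal sketch of block quantization in plain Python. It uses a simplified symmetric scheme (one scale per block, integers in a signed range) and is not the exact Q4_0 or Q8_0 layout; the function names and the sample weights are made up for illustration.

```python
def quantize_block(values, bits):
    """Map floats to signed integers that fit in `bits` bits, plus one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate floats from the stored integers and the scale."""
    return [scale * q for q in quantized]


def max_abs_error(values, bits):
    """Largest round-trip error for one block at the given bit width."""
    scale, quantized = quantize_block(values, bits)
    restored = dequantize_block(scale, quantized)
    return max(abs(a - b) for a, b in zip(values, restored))


# Illustrative weights only; real model blocks are larger.
weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0, -0.81, 0.47]
for bits in (4, 8):
    print(f"{bits}-bit max round-trip error: {max_abs_error(weights, bits):.4f}")
```

The 4-bit version stores less data per weight but reconstructs the original values less exactly, which is the size versus accuracy trade-off the rest of this article refers to.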

Here are some key takeaways:

- Llama2 7B: The M3 100GB 10Cores handles the Llama2 7B model well, reaching 21.34 tokens per second with Q4_0 and 12.27 with Q8_0. The benchmark data has no 4090 24GB x2 result for this model, so the two devices can't be compared directly here.

- Efficiency: The M3's efficiency is most evident with lower-precision models. It achieves usable token speeds with Q4_0 and Q8_0, making it a cost-effective option for developers and researchers with limited resources.

Example: Imagine generating text with Llama2 7B (Q4_0). At 21.34 tokens per second, the M3 produces about 1,280 tokens per minute, or roughly 77,000 tokens per hour, which is on the order of a short novel's worth of text.
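The arithmetic behind a throughput figure is easy to check. The sketch below converts a tokens-per-second rate into tokens per minute and per hour, and into the wait time for a response of a given length; the helper names and the 500-token response length are illustrative.

```python
def tokens_in(seconds, tokens_per_second):
    """Tokens generated in a time window at a steady rate."""
    return tokens_per_second * seconds


def seconds_to_generate(n_tokens, tokens_per_second):
    """Time needed to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


M3_Q4_0_TPS = 21.34  # Llama2 7B, Q4_0, from the benchmark table

print(round(tokens_in(60, M3_Q4_0_TPS)))                 # 1280 tokens per minute
print(round(tokens_in(3600, M3_Q4_0_TPS)))               # 76824 tokens per hour
print(round(seconds_to_generate(500, M3_Q4_0_TPS), 1))   # 23.4 seconds for 500 tokens
```

These numbers assume a steady generation rate and ignore prompt processing time.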

2. NVIDIA 4090 24GB x2: The Heavy-Hitter for Larger LLMs

The NVIDIA 4090 24GB x2, a pair of GPUs with 48 GB of combined memory, is the stronger choice for larger language models. It shines when running models like Llama3 70B and 8B.

Key highlights:

- Larger models: With two GPUs, the 4090 24GB x2 handles larger LLMs such as Llama3 70B, as well as smaller ones like Llama3 8B.

- F16 precision: If you want to avoid quantization loss, the 4090 24GB x2 can run models at F16 (16 bits per weight). The benchmark data includes an F16 result for Llama3 8B.

Example: Generating text with Llama3 70B (Q4_K_M) on the 4090 24GB x2 reaches 19.06 tokens per second. The benchmark data has no M3 100GB 10Cores result for this model, so a direct speed comparison is not available.

Comparison of Apple M3 100GB 10Cores and NVIDIA 4090 24GB x2

Here's a table summarizing the available benchmark results for the Apple M3 100GB 10Cores and the NVIDIA 4090 24GB x2 running various LLMs:

| Model | Device | Precision | Token Speed (tokens/second) |
|---|---|---|---|
| Llama2 7B | Apple M3 100GB 10Cores | Q4_0 | 21.34 |
| Llama2 7B | Apple M3 100GB 10Cores | Q8_0 | 12.27 |
| Llama3 8B | NVIDIA 4090 24GB x2 | Q4_K_M | 122.56 |
| Llama3 8B | NVIDIA 4090 24GB x2 | F16 | 53.27 |
| Llama3 70B | NVIDIA 4090 24GB x2 | Q4_K_M | 19.06 |
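One way to read the table is to compare precision levels on the same device and model. The sketch below copies the rows into a dictionary and computes those ratios; the dictionary layout and the `speedup` helper are illustrative, and cross-device ratios are deliberately left out because no model appears on both devices.

```python
# Rows copied from the table above: (model, device, precision) -> tokens/second
BENCHMARKS = {
    ("Llama2 7B", "Apple M3 100GB 10Cores", "Q4_0"): 21.34,
    ("Llama2 7B", "Apple M3 100GB 10Cores", "Q8_0"): 12.27,
    ("Llama3 8B", "NVIDIA 4090 24GB x2", "Q4_K_M"): 122.56,
    ("Llama3 8B", "NVIDIA 4090 24GB x2", "F16"): 53.27,
    ("Llama3 70B", "NVIDIA 4090 24GB x2", "Q4_K_M"): 19.06,
}


def speedup(model, device, fast_precision, slow_precision):
    """Ratio of token speeds for two precisions on the same model and device."""
    fast = BENCHMARKS[(model, device, fast_precision)]
    slow = BENCHMARKS[(model, device, slow_precision)]
    return fast / slow


m3_ratio = speedup("Llama2 7B", "Apple M3 100GB 10Cores", "Q4_0", "Q8_0")
rtx_ratio = speedup("Llama3 8B", "NVIDIA 4090 24GB x2", "Q4_K_M", "F16")

print(f"M3, Llama2 7B: Q4_0 is {m3_ratio:.2f}x the speed of Q8_0")
print(f"4090 x2, Llama3 8B: Q4_K_M is {rtx_ratio:.2f}x the speed of F16")
```

On both devices, the lower-precision format generates tokens noticeably faster than the higher-precision one.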

Important Considerations:

- Data Availability: Benchmark data is missing for some combinations, such as the M3 with Llama2 7B using F16. In addition, no model in the table was benchmarked on both devices, so the rows are not a like-for-like comparison.

- Focus on performance: This analysis primarily focuses on token speed generation for different models and precision levels. Other factors, such as memory usage, power consumption, and cost, are not covered in detail.

Key Factors to Consider When Choosing a Device

1. Model Size and Complexity

The choice between an M3 and a 4090 24GB x2 heavily depends on the size and complexity of the LLM you plan to run.

- M3: For smaller models like Llama2 7B, the M3 is a cost-effective and powerful choice, especially when using quantization techniques.

- 4090 24GB x2: If you're working with larger, more complex models like Llama3 70B or 8B, the 4090 24GB x2 provides the necessary horsepower and memory.
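A rough way to judge whether a model fits a device is to estimate the size of its weights. The sketch below is a weights-only estimate under stated assumptions: nominal parameter counts (7B, 8B, 70B), bits per weight of 4.5 for Q4_0 and 8.5 for Q8_0 (32 weights per block plus a 16-bit scale), a hypothetical 2 GB headroom for everything else, and a model that can be split across both GPUs. It ignores the KV cache and activations, so treat the result as a lower bound.

```python
# Effective bits per weight; Q4_0 and Q8_0 include the per-block 16-bit scale.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params_billion, fmt):
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billion, fmt, memory_gb, headroom_gb=2.0):
    """True if weights plus a (hypothetical) headroom fit in memory_gb."""
    return weights_gb(params_billion, fmt) + headroom_gb <= memory_gb


DUAL_4090_GB = 2 * 24  # combined memory of two 24 GB cards

for params, fmt in [(8, "F16"), (70, "Q4_0"), (70, "F16")]:
    size = weights_gb(params, fmt)
    verdict = "fits" if fits(params, fmt, DUAL_4090_GB) else "does not fit"
    print(f"{params}B {fmt}: ~{size:.1f} GB of weights, {verdict} in {DUAL_4090_GB} GB")
```

Under these assumptions, a 70B model fits on the two cards only when quantized, which is consistent with the table listing the 70B result at 4-bit precision rather than F16.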

2. Precision Requirements

LLMs can be run with different levels of precision, affecting accuracy and performance.

- M3: The M3 shines when running models with lower precision, offering good speed and efficiency with Q4_0 and Q8_0 quantization.

- 4090 24GB x2: If you want to avoid quantization error, the 4090 24GB x2 can run unquantized F16 weights for models that fit in its memory, such as Llama3 8B.

3. Cost and Budget

The M3 100GB 10Cores is generally more affordable than the 4090 24GB x2.

- Cost-effective: The M3 could be an excellent choice for developers and researchers with a limited budget who need to run smaller models efficiently.

- Higher performance: The 4090 24GB x2, while more expensive, delivers superior performance for larger models, justifying the investment for those who need the extra horsepower.

4. Power Consumption

Consider power consumption, especially if you're running your models on a laptop or a machine with limited power resources.

- M3: The M3 is more energy-efficient than the 4090 24GB x2, making it a better choice for mobile or portable setups.

- 4090 24GB x2: The 4090 24GB x2 is a power-hungry beast, needing a powerful power supply and cooling system.

5. Software Compatibility

Ensure the operating system and software you use are compatible with the chosen device.

- M3: Apple's M series chips have excellent compatibility with Apple's own software and are becoming increasingly supported by third-party developers.

- 4090 24GB x2: NVIDIA's GPUs are compatible with a wide range of operating systems and software, thanks to the robust CUDA ecosystem.
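A quick compatibility check is to ask your framework which accelerator it can see. The sketch below assumes PyTorch, which this article does not otherwise cover; `detect_backend` is a hypothetical helper name, and it falls back to `"cpu"` when PyTorch is not installed or no accelerator is found.

```python
def detect_backend():
    """Return "cuda", "mps", or "cpu" depending on what PyTorch can use."""
    try:
        import torch  # assumption: PyTorch is the framework in use
    except ImportError:
        return "cpu"

    if torch.cuda.is_available():  # NVIDIA GPUs via CUDA
        return "cuda"

    # Apple silicon GPUs via Metal; guard for older PyTorch builds without MPS.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"

    return "cpu"


print(f"Detected backend: {detect_backend()}")
```

On a machine with NVIDIA cards and working drivers this should report `cuda`, and on an M-series Mac with a recent PyTorch build it should report `mps`. Other runtimes have their own ways of selecting a backend.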

6. Future-Proofing

Consider how your needs might evolve in the future.

- M3: The M series chips from Apple are known for their excellent performance and efficiency and are expected to receive strong future support.

- 4090 24GB x2: NVIDIA continuously releases new GPU architectures, so you can expect continued performance improvements and software support.

7. Expertise and Support

Consider your expertise and the level of support you need.

- M3: Macs come with Apple's excellent support and a generally user-friendly interface.

- 4090 24GB x2: Using a 4090 24GB x2 might require a deeper understanding of GPU computing and the CUDA framework.

Choosing the Right Device for You

The decision between the Apple M3 100GB 10Cores and the NVIDIA 4090 24GB x2 depends on your specific needs and priorities. Here's a quick guide:

- Smaller models, efficiency, and affordability: Go with the M3.

- Large models, high accuracy, and performance: Choose the 4090 24GB x2.

Conclusion

The world of LLM development is exciting and complex. Choosing the right hardware is crucial to ensure smooth and efficient execution of your models. Both the Apple M3 100GB 10Cores and the NVIDIA 4090 24GB x2 are powerful options, each with its strengths and weaknesses. By carefully considering the factors outlined in this article, you can select the device that best aligns with your individual needs and budget.