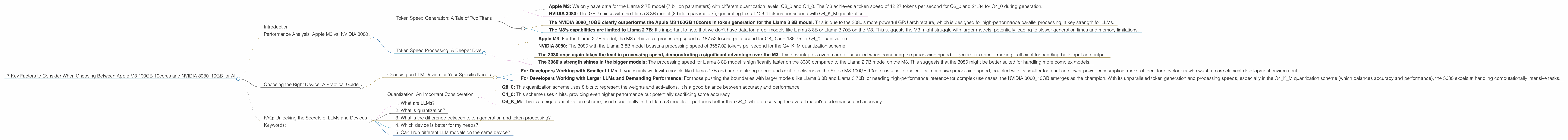

7 Key Factors to Consider When Choosing Between Apple M3 (100 GB/s, 10-Core GPU) and NVIDIA RTX 3080 10GB for AI

Introduction

The world of Large Language Models (LLMs) is exploding, and with it comes a growing need for powerful hardware to run these models efficiently. As a developer diving into local LLM deployment, you're likely facing a crucial question: which device best fits your needs? This article compares the performance of two popular options, the Apple M3 (100 GB/s memory bandwidth, 10-core GPU) and the NVIDIA RTX 3080 10GB, for running LLMs like Llama 2 and Llama 3, to help you make an informed decision.

Performance Analysis: Apple M3 vs. NVIDIA 3080

Token Generation Speed: A Tale of Two Titans

Token speed is a crucial metric in LLM performance, measuring how fast the model can generate text. Let's see how the M3 and 3080 stack up in this key area.

Apple M3: We only have data for the Llama 2 7B model (7 billion parameters) at two quantization levels, Q8_0 and Q4_0. The M3 generates 12.27 tokens per second with Q8_0 and 21.34 tokens per second with Q4_0.

NVIDIA 3080: The available data for this GPU covers the Llama 3 8B model (8 billion parameters), which it runs at 106.4 tokens per second with Q4_K_M quantization.

Key Takeaways:

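- The NVIDIA RTX 3080 10GB clearly outperforms the Apple M3 in token generation: 106.4 tokens per second on Llama 3 8B at Q4_K_M versus 21.34 on Llama 2 7B at Q4_0, roughly 5x faster, even though the 3080 is running the slightly larger model. Because the models and quantization schemes differ, treat this as an indicative rather than a like-for-like comparison. A major reason is memory bandwidth: generating each token requires reading the model's weights from memory, and the 3080's GDDR6X memory (about 760 GB/s) is much faster than the M3's unified memory (100 GB/s).

- The M3 data covers only Llama 2 7B: We don't have figures for larger models like Llama 3 8B or Llama 3 70B on the M3. The missing data doesn't mean the M3 can't run them; how large a model fits depends mainly on how much unified memory the Mac is configured with, and larger models will generate tokens more slowly.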
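To put the generation figures in more familiar terms, the short Python sketch below converts tokens per second into milliseconds per token and computes the speedup. It only reuses the numbers quoted above; the dictionary labels and helper names are illustrative, and because the M3 and 3080 figures come from different models, the ratio is indicative only.

```python
# Generation speeds quoted in this article, in tokens per second.
# The M3 rows are Llama 2 7B and the RTX 3080 row is Llama 3 8B,
# so cross-device ratios are indicative, not like-for-like.
GENERATION_TPS = {
    "M3, Llama 2 7B, Q8_0": 12.27,
    "M3, Llama 2 7B, Q4_0": 21.34,
    "RTX 3080, Llama 3 8B, Q4_K_M": 106.4,
}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to emit one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times higher `fast_tps` is than `slow_tps`."""
    return fast_tps / slow_tps


if __name__ == "__main__":
    for label, tps in GENERATION_TPS.items():
        print(f"{label}: {tps:.2f} tok/s = {ms_per_token(tps):.1f} ms/token")
    gpu = GENERATION_TPS["RTX 3080, Llama 3 8B, Q4_K_M"]
    m3 = GENERATION_TPS["M3, Llama 2 7B, Q4_0"]
    print(f"RTX 3080 vs M3 at 4-bit: {speedup(gpu, m3):.1f}x")
```

With these figures the 3080 spends about 9.4 ms per token versus about 46.9 ms for the M3 at Q4_0, a gap of roughly 5x.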

Token Processing Speed: A Deeper Dive

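Token processing speed (often called prompt processing or prefill) refers to how quickly the model can read the input tokens of your prompt before it starts generating. It determines how long you wait for the first output token, which matters most with long prompts.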

Apple M3: For the Llama 2 7B model, the M3 processes 187.52 tokens per second with Q8_0 and 186.75 with Q4_0 quantization.

NVIDIA 3080: With the Llama 3 8B model, the 3080 processes 3557.02 tokens per second using the Q4_K_M quantization scheme.

Key Takeaways:

- The 3080 once again takes the lead, and here the gap is much wider: about 19x for prompt processing (3557.02 versus 186.75 tokens per second at 4-bit) compared with about 5x for generation. Prompt processing handles many tokens in parallel and is limited largely by raw compute, where a discrete GPU like the 3080 has a big advantage, so it handles long inputs as efficiently as it produces output.

- The 3080 achieves this on the larger model: Its processing speed on Llama 3 8B is far higher than the M3's on Llama 2 7B, which suggests it can handle somewhat larger models quickly, as long as they fit in its 10 GB of VRAM.
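A quick calculation makes the size of this gap concrete. This Python sketch divides the 3080's figures by the M3's Q4_0 figures (the closest quantization to Q4_K_M in the data); the tuple layout and names are illustrative, and the models still differ between devices.

```python
# (prompt processing, generation) speeds in tokens per second, as quoted above.
# Different models (Llama 2 7B vs Llama 3 8B), so ratios are indicative only.
M3_Q4_0 = (186.75, 21.34)          # Apple M3, Llama 2 7B, Q4_0
RTX3080_Q4_K_M = (3557.02, 106.4)  # RTX 3080 10GB, Llama 3 8B, Q4_K_M


def gap(gpu: tuple, m3: tuple) -> tuple:
    """Return (processing ratio, generation ratio) of gpu over m3."""
    return gpu[0] / m3[0], gpu[1] / m3[1]


pp_gap, tg_gap = gap(RTX3080_Q4_K_M, M3_Q4_0)
print(f"prompt processing: {pp_gap:.1f}x faster on the RTX 3080")
print(f"generation:        {tg_gap:.1f}x faster on the RTX 3080")
```

The processing ratio comes out near 19x and the generation ratio near 5x, which is why the 3080's lead looks much larger for long prompts than for long answers.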

Choosing the Right Device: A Practical Guide

Choosing an LLM Device for Your Specific Needs:

For Developers Working with Smaller LLMs: If you mainly work with models like Llama 2 7B and value low power consumption and a compact machine over raw speed, the Apple M3 (100 GB/s, 10-core GPU) is a solid choice. Its generation speed of about 12 to 21 tokens per second on Llama 2 7B is usable for interactive work, and its smaller footprint and lower power consumption make for an efficient development environment.

For Developers Working with Larger LLMs and Demanding Performance: If you need high-performance inference on models that fit in 10 GB of VRAM, such as Llama 3 8B, the NVIDIA RTX 3080 10GB is the clear choice. Its token generation and processing speeds are several times higher than the M3's in this data, especially with the Q4_K_M quantization scheme, which balances accuracy and performance. Keep its memory limit in mind, though: Llama 3 70B needs roughly 40 GB even at 4-bit quantization, far more than the 3080's 10 GB, so it cannot run entirely on this GPU.
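Whether a model fits on the 3080 can be estimated from its parameter count and bits per weight. The Python sketch below is a back-of-the-envelope check under loudly stated assumptions: parameter counts rounded to 8 billion and 70 billion, roughly 4.8 bits per weight for Q4_K_M, and no allowance for the KV cache or runtime overhead, so real memory use is higher. The helper names are hypothetical.

```python
# Back-of-the-envelope check: do the quantized weights fit in VRAM?
# Assumptions (approximations, not measurements):
#   * parameter counts rounded to 8e9 (Llama 3 8B) and 70e9 (Llama 3 70B)
#   * Q4_K_M averages roughly 4.8 bits per weight
#   * KV cache, activations, and runtime overhead are ignored,
#     so real memory use is higher than this estimate
Q4_K_M_BITS = 4.8
RTX3080_VRAM_GB = 10.0


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit in the given amount of GPU memory."""
    return weight_gb(n_params, bits_per_weight) <= vram_gb


for name, n_params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    size = weight_gb(n_params, Q4_K_M_BITS)
    ok = fits_in_vram(n_params, Q4_K_M_BITS, RTX3080_VRAM_GB)
    print(f"{name}: ~{size:.1f} GB of weights, fits in 10 GB: {ok}")
```

Under these assumptions Llama 3 8B needs about 4.8 GB and leaves headroom for the KV cache, while Llama 3 70B needs about 42 GB, more than four times the 3080's VRAM.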

Quantization: An Important Consideration

Quantization is a technique that reduces the memory footprint and computational requirements of LLMs by representing the model's weights (and, in some implementations, its activations) with lower-precision numbers. This can significantly improve performance, especially on devices with limited memory or computational power.

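Imagine packing for a trip: if each item takes up less space, everything fits in a smaller suitcase that is easier to carry. Quantization works similarly: it makes each weight smaller, so the model needs less memory and less data has to be moved for every generated token, which makes it run faster. The M3 numbers show this effect: moving from Q8_0 to Q4_0 raises Llama 2 7B generation from 12.27 to 21.34 tokens per second, about 1.7x. This is especially useful on devices like the M3, whose memory bandwidth limits generation speed.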
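The link between smaller weights and faster generation can be sketched with a common rule of thumb: each new token requires reading roughly all of the model's weights from memory once, so memory bandwidth divided by model size gives an upper bound on tokens per second. The Python sketch below assumes 100 GB/s for the M3 and about 760 GB/s for the RTX 3080, about 6.74 billion parameters for Llama 2 7B and 8.03 billion for Llama 3 8B, and approximate bits per weight including scale factors (8.5 for Q8_0, 4.5 for Q4_0, roughly 4.8 for Q4_K_M). It ignores the KV cache and compute limits, so real speeds should land below these ceilings.

```python
# Rule-of-thumb ceiling for generation speed on a memory-bound device.
# All inputs except the measured speeds are approximate assumptions.

def max_tokens_per_second(bandwidth_gb_s: float, n_params: float,
                          bits_per_weight: float) -> float:
    """Upper bound: each generated token reads every weight once."""
    model_bytes = n_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / model_bytes


CASES = [
    # (label, bandwidth GB/s, parameters, bits per weight, measured tok/s)
    ("M3, Llama 2 7B, Q8_0", 100, 6.74e9, 8.5, 12.27),
    ("M3, Llama 2 7B, Q4_0", 100, 6.74e9, 4.5, 21.34),
    ("RTX 3080, Llama 3 8B, Q4_K_M", 760, 8.03e9, 4.8, 106.4),
]

for label, bw, n, bpw, measured in CASES:
    ceiling = max_tokens_per_second(bw, n, bpw)
    print(f"{label}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s")
```

In this rough model both M3 measurements sit below their ceilings (about 14 and 26 tokens per second), and the predicted gain from Q8_0 to Q4_0 (about 1.9x) is in the same range as the measured 1.7x.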

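Q8_0: This llama.cpp format stores each weight in 8 bits, with each block of 32 weights sharing one scale factor. It is a good balance between accuracy and performance, with very little quality loss.

Q4_0: This format uses 4 bits per weight (again with one shared scale per block of 32), roughly halving the size of Q8_0 and increasing speed, at the cost of some accuracy.

Q4_K_M: This is one of llama.cpp's "k-quant" formats. It is not specific to Llama 3 and can be applied to any model llama.cpp supports. It stores most tensors at about 4 bits and keeps a few sensitive ones at higher precision, which typically gives better accuracy than Q4_0 at a slightly larger size.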
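The block-plus-scale idea behind Q8_0 and Q4_0 can be illustrated with a simplified Python sketch: each block of 32 weights is scaled by its largest absolute value, rounded to small signed integers, then multiplied back out. This is plain symmetric absmax quantization written for clarity, not llama.cpp's exact implementation of Q8_0, Q4_0, or Q4_K_M, and the function names are hypothetical.

```python
import random

BLOCK = 32  # weights per block; each block shares one scale factor


def quantize_block(block, bits):
    """Symmetric absmax quantization of one block to signed `bits`-bit ints."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 1.0
    q = [max(-qmax, min(qmax, round(x / scale))) for x in block]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate float weights from integers and the block scale."""
    return [v * scale for v in q]


def max_abs_error(weights, bits):
    """Largest reconstruction error over all blocks."""
    worst = 0.0
    for i in range(0, len(weights), BLOCK):
        block = weights[i:i + BLOCK]
        q, scale = quantize_block(block, bits)
        restored = dequantize_block(q, scale)
        worst = max(worst, max(abs(a - b) for a, b in zip(block, restored)))
    return worst


def bits_per_weight(bits, scale_bits=16):
    """Storage cost per weight, counting one 16-bit scale per block."""
    return (BLOCK * bits + scale_bits) / BLOCK


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(1024)]
    for bits in (8, 4):
        print(f"{bits}-bit: {bits_per_weight(bits)} bits/weight, "
              f"max error {max_abs_error(weights, bits):.6f}")
```

Counting the shared 16-bit scale, the 8-bit variant costs 8.5 bits per weight and the 4-bit variant 4.5, and the 4-bit reconstruction error is noticeably larger, which is the accuracy trade-off described above.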

FAQ: Unlocking the Secrets of LLMs and Devices

1. What are LLMs?

LLMs are powerful AI models that are trained on massive amounts of text data. They are capable of generating text, translating languages, writing different kinds of creative content, and answering your questions in an informative way.

2. What is quantization?

Quantization is like compressing a file: it reduces the size of a model by storing its weights in smaller, lower-precision data types. This makes the model faster and lets it run in less memory, usually at the cost of a small loss in accuracy.

3. What is the difference between token generation and token processing?

Token generation refers to the output of an LLM, the actual text it creates. Token processing is the input stage, where the model receives and processes the tokens you provide. It's like the difference between writing a letter and reading a letter.
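The two phases add up when you use a model. As a rough sketch (the 1,000-token prompt and 200-token reply are hypothetical sizes, and the function name is illustrative), total response time is prompt length divided by processing speed plus reply length divided by generation speed.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated wall time: read the prompt, then generate the reply.

    Ignores model loading, sampling overhead, and the slowdown that
    comes with longer contexts, so it is a rough lower bound.
    """
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Speeds quoted in this article (different models, so indicative only).
m3 = response_seconds(1000, 200, processing_tps=186.75, generation_tps=21.34)
rtx = response_seconds(1000, 200, processing_tps=3557.02, generation_tps=106.4)
print(f"M3 (Llama 2 7B, Q4_0):         {m3:.1f} s")
print(f"RTX 3080 (Llama 3 8B, Q4_K_M): {rtx:.1f} s")
```

With these inputs the M3 needs about 5.4 s to read the prompt and 9.4 s to write the reply, roughly 14.7 s in total, versus about 2.2 s on the 3080.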

4. Which device is better for my needs?

It depends. If you're working with smaller models like Llama 2 7B and prioritize power efficiency and a compact setup, the M3 is a great choice. If you need top-tier speed and your models fit in 10 GB of VRAM, the 3080 is the winner.

5. Can I run different LLM models on the same device?

Definitely! Both the M3 and the 3080 can run many different LLMs, but performance, and whether a model fits in memory at all, depends on the model's size and quantization.

Keywords:

Apple M3, NVIDIA RTX 3080, LLM, Llama 2, Llama 3, Token Speed, Token Processing, Quantization, Q8_0, Q4_0, Q4_K_M, AI, Machine Learning, Deep Learning, GPU, Performance, Speed, Memory, Development, Inference, Model Size, Use Cases, CPU, Developer Tools, Data Science, NLP, Natural Language Processing.