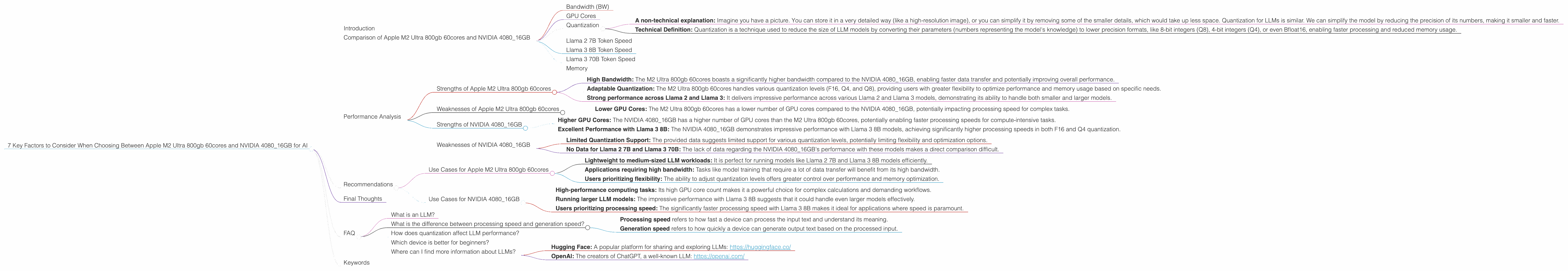

7 Key Factors to Consider When Choosing Between the Apple M2 Ultra (800 GB/s, 60-core GPU) and the NVIDIA RTX 4080 16GB for AI

Introduction

The world of artificial intelligence (AI) is taking off, and with it the demand for hardware powerful enough to run large language models (LLMs) is exploding. Two contenders vying for the top spot are the Apple M2 Ultra, here in its 60-core GPU configuration with 800 GB/s of memory bandwidth, and the NVIDIA GeForce RTX 4080 with 16 GB of VRAM. Both boast impressive specs, but they are strong in different ways.

This article walks through the key differences between these two heavyweights to help you decide which device best suits your AI needs. We'll analyze their benchmark results on popular models such as Llama 2 and Llama 3, breaking down the numbers and explaining the technical nuances in a way that developers and geeks can easily follow.

Buckle up, as we dive into the exciting world of AI hardware!

Comparison of the Apple M2 Ultra (60-core GPU) and the NVIDIA RTX 4080 16GB

Bandwidth (BW)

Memory bandwidth, the amount of data that can move between memory and the processor each second, is a crucial factor in LLM speed. When generating text, the model must read essentially all of its weights from memory for every new token, so bandwidth sets a hard ceiling on generation speed. The M2 Ultra shines here: the "800gb" in its name refers to its 800 GB/s of unified memory bandwidth.

The RTX 4080 16GB is not far behind, with 716.8 GB/s of GDDR6X memory bandwidth, about 10% less than the M2 Ultra.
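Since generating each token means reading (roughly) every weight once, dividing bandwidth by the model's size in bytes gives an upper bound on generation speed. The sketch below is a simplification under stated assumptions: it ignores KV-cache reads and compute time, uses a nominal 8 billion parameters for Llama 3 8B, and assumes about 4.5 bits per weight for a 4-bit format with per-block scales (the exact figure depends on the quantization variant, which the benchmark does not state).

```python
# Bandwidth-limited ceiling on token generation (decode) speed.
# Simplifying assumption: each generated token streams every model weight from
# memory exactly once; KV-cache reads and compute time are ignored.

def decode_ceiling_tokens_per_s(bandwidth_gb_s: float, n_params: float,
                                bits_per_weight: float) -> float:
    """Upper bound on tokens/s when generation is purely bandwidth-bound."""
    bytes_per_token = n_params * bits_per_weight / 8  # weight bytes read per token
    return bandwidth_gb_s * 1e9 / bytes_per_token


if __name__ == "__main__":
    devices = {"M2 Ultra": 800.0, "RTX 4080": 716.8}  # memory bandwidth, GB/s
    formats = {"F16": 16.0, "4-bit (~4.5 bpw, assumed)": 4.5}
    for device, bandwidth in devices.items():
        for fmt, bits in formats.items():
            ceiling = decode_ceiling_tokens_per_s(bandwidth, 8e9, bits)  # ~8B params
            print(f"{device} {fmt}: at most ~{ceiling:.0f} tokens/s")
```

For example, the M2 Ultra's F16 ceiling works out to 50 tokens/second, and the measured Llama 3 8B generation speeds reported later in this article all fall below these ceilings, as a bandwidth-bound workload should.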

GPU Cores

GPU cores are the processing units that do the heavy lifting of AI computations. The M2 Ultra tested here has 60 GPU cores; Apple also sells the M2 Ultra with a 76-core GPU, so check which configuration you are buying.

The RTX 4080 16GB has 76 streaming multiprocessors (SMs), containing 9,728 CUDA cores in total. An Apple GPU core and an NVIDIA SM are not equivalent units, so comparing the two counts directly says little. The prompt-processing results below are a better measure of raw compute, and there the RTX 4080 is several times faster.

Quantization

A non-technical explanation: Imagine you have a picture. You can store it in a very detailed way (like a high-resolution image), or you can simplify it by removing some of the smaller details, which would take up less space. Quantization for LLMs is similar. We can simplify the model by reducing the precision of its numbers, making it smaller and faster.

Technical Definition: Quantization reduces the size of an LLM by converting its parameters (the numbers that encode what the model has learned) from 16-bit floating point (F16) to lower-precision formats such as 8-bit (Q8_0) or 4-bit (Q4) integers, usually with a shared scale factor for each small block of weights. This cuts memory usage and, because less data has to be read for every generated token, speeds up generation.
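The core idea of block quantization fits in a few lines. This sketch is loosely modeled on llama.cpp's Q8_0 format, where each block of 32 weights shares one scale; it is a simplification for illustration only: the scale stays a Python float instead of being stored as F16, and nothing is bit-packed. In the real format, 32 one-byte values plus a 16-bit scale work out to 8.5 bits per weight.

```python
# Minimal sketch of 8-bit block quantization in the spirit of Q8_0.
# Simplifications: the per-block scale is a Python float (not F16), no packing.

BLOCK_SIZE = 32  # weights per block, matching Q8_0's block size


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to integers in [-127, 127] with one shared scale."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 1.0  # avoid dividing by zero for all-zero blocks
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, q


def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Split weights into blocks and quantize each one independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: list[tuple[float, list[int]]]) -> list[float]:
    """Recover approximate floats: value = scale * integer."""
    out: list[float] = []
    for scale, q in blocks:
        out.extend(scale * v for v in q)
    return out


if __name__ == "__main__":
    weights = [((i * 37) % 101 - 50) / 500 for i in range(64)]  # made-up weights
    restored = dequantize(quantize(weights))
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max reconstruction error: {worst:.6f}")
```

Each weight is recovered to within half a quantization step of its block's scale, which is why 8-bit formats usually lose very little accuracy while halving memory compared with F16.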

The benchmark data for the M2 Ultra covers F16, Q8_0, and Q4 across the models tested, showing how each format trades memory for speed.

The RTX 4080 data covers only Q4 and F16 for Llama 3 8B. This reflects which configurations were benchmarked rather than a hardware limitation: popular inference runtimes such as llama.cpp also run Q8_0 models on NVIDIA GPUs. The real constraint on the RTX 4080 is its 16 GB of VRAM, which limits how large a model it can hold at a given precision.

Llama 2 7B Token Speed

The Apple M2 Ultra performs well with Llama 2 7B. It processes prompts at 1401.85 tokens/second in F16 and 1248.59 tokens/second in Q8_0, and it generates 41.02 tokens/second in F16 and 66.64 tokens/second in Q8_0.
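These Llama 2 7B numbers also show how quantization affects each phase differently. A quick calculation compares the speed change against the reduction in weight size, assuming the 8.5 bits per weight of llama.cpp's Q8_0 format (32 one-byte values plus a 16-bit scale per block):

```python
# M2 Ultra, Llama 2 7B figures quoted above (tokens/second).
F16 = {"prompt": 1401.85, "generation": 41.02, "bits_per_weight": 16.0}
Q8_0 = {"prompt": 1248.59, "generation": 66.64, "bits_per_weight": 8.5}  # assumed Q8_0 layout


def ratio(new: dict, old: dict, key: str) -> float:
    """How many times larger new[key] is than old[key]."""
    return new[key] / old[key]


size_reduction = F16["bits_per_weight"] / Q8_0["bits_per_weight"]  # bytes read per token shrink by this
generation_speedup = ratio(Q8_0, F16, "generation")
prompt_speedup = ratio(Q8_0, F16, "prompt")

print(f"weights are {size_reduction:.2f}x smaller in Q8_0")
print(f"generation is {generation_speedup:.2f}x as fast")
print(f"prompt processing is {prompt_speedup:.2f}x as fast")
```

Generation speeds up by about 1.62×, close to the 1.88× reduction in bytes read per token, which fits the picture of generation as bandwidth-bound. Prompt processing gets slightly slower (about 0.89×), consistent with that phase being limited by compute rather than memory traffic.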

However, the NVIDIA 4080_16GB data does not include any information about its performance with Llama 2 7B, making a direct comparison impossible.

Llama 3 8B Token Speed

The Apple M2 Ultra 800gb 60cores demonstrates impressive results with Llama 3 8B models as well. It achieves a peak processing speed of 1202.74 tokens/second in F16 precision and 1023.89 tokens/second in Q4. The generation speed maxes out at 36.25 tokens/second in F16 and 76.28 tokens/second in Q4.

The RTX 4080 16GB, on the other hand, is far faster at prompt processing for Llama 3 8B, reaching 6758.9 tokens/second in F16 and 5064.99 tokens/second in Q4, roughly 5.6× and 4.9× the M2 Ultra's speed. It is also faster at generation, reaching 40.29 tokens/second in F16 (about 11% faster) and 106.22 tokens/second in Q4 (about 39% faster).
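Because a real request involves both phases, the overall gap depends on prompt and answer lengths. This rough sketch combines the Q4 speeds above for a hypothetical 1,000-token prompt and 200-token answer. It assumes both speeds stay constant as the context grows (in practice generation typically slows somewhat with longer contexts) and ignores model loading and sampling overhead.

```python
# Rough end-to-end time for one request: prompt processing, then generation.
# Assumes constant per-phase speeds; ignores load time and sampling overhead.

def request_seconds(prompt_tokens: int, output_tokens: int,
                    prompt_tps: float, gen_tps: float) -> float:
    """Seconds to process the prompt plus seconds to generate the answer."""
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


# Llama 3 8B Q4 figures quoted above: (prompt tokens/s, generation tokens/s).
SPEEDS = {
    "M2 Ultra": (1023.89, 76.28),
    "RTX 4080": (5064.99, 106.22),
}

if __name__ == "__main__":
    for device, (pp, tg) in SPEEDS.items():
        total = request_seconds(1000, 200, pp, tg)  # hypothetical request size
        print(f"{device}: ~{total:.2f} s")
```

With these inputs the estimate comes to about 3.6 s on the M2 Ultra versus about 2.1 s on the RTX 4080, and generation accounts for most of the time on both devices.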

Llama 3 70B Token Speed

The Apple M2 Ultra 800gb 60cores achieves a processing speed of 145.82 tokens/second in F16 precision and 117.76 tokens/second in Q4 for Llama 3 70B models. Its generation speed reaches 4.71 tokens/second in F16 and 12.13 tokens/second in Q4.

There is no RTX 4080 16GB data for Llama 3 70B. This is expected: even at 4-bit precision, a 70B model's weights take roughly 40 GB, far more than the card's 16 GB of VRAM.

Memory

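The "800gb" in the M2 Ultra's name refers to its 800 GB/s memory bandwidth, not to storage. The M2 Ultra uses unified memory shared by its CPU and GPU and is available with 64 GB, 128 GB, or 192 GB (the benchmark data does not say which configuration was tested). The RTX 4080 has 16 GB of dedicated VRAM, and a model's weights plus its working memory must fit there to run entirely on the GPU. This capacity gap is arguably the biggest practical difference between the two devices, and it is why only the M2 Ultra has Llama 3 70B results.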
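A back-of-the-envelope estimate makes the capacity gap concrete. This sketch assumes llama.cpp-style block formats (about 8.5 bits per weight for Q8_0 and 4.5 for Q4_0; the benchmark's exact 4-bit variant is not stated), nominal parameter counts, and a hypothetical 2 GB allowance for the KV cache and runtime buffers. Real overhead grows with context length.

```python
# Rough weight-memory footprint and a "does it fit?" check.
# Bits per weight assume llama.cpp block layouts (32 weights + one 16-bit scale).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gb(n_params: float, fmt: str) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(n_params: float, fmt: str, memory_gb: float, overhead_gb: float = 2.0) -> bool:
    """True if the weights plus an assumed overhead allowance fit in memory_gb."""
    # overhead_gb is a hypothetical allowance for the KV cache, activations and buffers.
    return weight_gb(n_params, fmt) + overhead_gb <= memory_gb


if __name__ == "__main__":
    cases = [("Llama 3 8B", 8e9, "Q4_0"),
             ("Llama 3 70B", 70e9, "Q4_0"),
             ("Llama 3 70B", 70e9, "F16")]
    for name, n_params, fmt in cases:
        print(f"{name} {fmt}: ~{weight_gb(n_params, fmt):.1f} GB of weights, "
              f"fits in 16 GB: {fits(n_params, fmt, 16.0)}, "
              f"fits in 192 GB: {fits(n_params, fmt, 192.0)}")
```

Under these assumptions a 70B model needs about 39 GB at Q4_0 and about 140 GB at F16, so neither fits in 16 GB of VRAM, while both fit within a 192 GB M2 Ultra.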

Performance Analysis

Strengths of Apple M2 Ultra 800gb 60cores

- High Bandwidth: The M2 Ultra's 800 GB/s is about 12% more than the RTX 4080's 716.8 GB/s, which helps bandwidth-bound token generation.

- Memory Capacity and Quantization Flexibility: With up to 192 GB of unified memory, the M2 Ultra can run models in F16, Q8_0, or Q4 without a 16 GB ceiling, giving users more freedom to trade memory for quality and speed.

- Strong performance across Llama 2 and Llama 3: It delivers solid results from Llama 2 7B up to Llama 3 70B, and it is the only device in this comparison with 70B results.

Weaknesses of Apple M2 Ultra 800gb 60cores

- Less GPU Compute: The M2 Ultra processes Llama 3 8B prompts roughly 5× more slowly than the RTX 4080 and also generates tokens somewhat more slowly, reflecting the NVIDIA card's greater compute throughput.

Strengths of NVIDIA 4080_16GB

- Higher GPU Compute: The RTX 4080's 76 SMs (9,728 CUDA cores) give it far more raw compute, which shows up most clearly in prompt processing.

- Excellent Performance with Llama 3 8B: It processes Llama 3 8B prompts roughly 5× faster than the M2 Ultra and generates tokens 11% (F16) to 39% (Q4) faster.

Weaknesses of NVIDIA 4080_16GB

- Limited VRAM: 16 GB of VRAM caps the size and precision of models that can run entirely on the GPU; Llama 3 70B does not fit even at 4-bit precision.

- No Data for Llama 2 7B and Llama 3 70B: The benchmark includes no RTX 4080 results for these models, so a direct comparison is not possible.

Recommendations

Use Cases for Apple M2 Ultra 800gb 60cores

The Apple M2 Ultra is an excellent choice for:

- Large LLM workloads: With enough unified memory, it runs models such as Llama 3 70B (12.13 tokens/second generation in Q4) that do not fit in 16 GB of VRAM.

- Applications needing both bandwidth and capacity: Token generation with large models is bandwidth-bound, and the M2 Ultra pairs 800 GB/s with enough memory to hold those models.

- Users prioritizing flexibility: More memory headroom means more freedom to choose model size and quantization level, including higher-precision Q8_0 or F16 variants.

Use Cases for NVIDIA 4080_16GB

The NVIDIA RTX 4080 16GB is ideal for:

- Compute-heavy workloads: Its much higher compute throughput makes it a powerful choice for demanding calculations, such as processing long prompts.

- Small to mid-sized LLMs: Models that fit in 16 GB of VRAM, such as Llama 3 8B in Q4, run faster on it than on the M2 Ultra in both prompt processing and generation.

- Users prioritizing speed: If your models fit in VRAM, it delivers the faster results of the two.

Final Thoughts

Choosing between the Apple M2 Ultra 800gb 60cores and the NVIDIA 4080_16GB depends on your specific needs and priorities.

The M2 Ultra excels in memory bandwidth and, above all, memory capacity, which lets it run a wider range of LLM sizes, up to Llama 3 70B. It is a versatile option for various AI workflows.

The RTX 4080 16GB stands out with much higher compute and the best results for Llama 3 8B in both prompt processing and generation. It is a powerful choice when speed matters and your models fit within 16 GB of VRAM.

Ultimately, it's best to weigh your specific requirements and carefully consider the strengths and weaknesses of each device before making your decision.

FAQ

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that excels in understanding and generating human-like text. These models are trained on massive datasets of text and code, enabling them to perform tasks like translation, writing different kinds of creative content, and answering your questions in an informative way.

What is the difference between processing speed and generation speed?

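- Processing speed (prompt processing, or prefill) measures how many input tokens per second the device can run through the model before producing any output. All prompt tokens are processed together, so this phase is mostly limited by compute, and it determines how long you wait for the first output token.

- Generation speed (token generation, or decode) measures how many new tokens per second the device produces. Tokens are generated one at a time, and each one requires reading the model's weights from memory, so this phase is mostly limited by memory bandwidth.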
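A toy calculation shows why the two phases stress different resources. During prompt processing, all prompt tokens share a single read of each weight matrix; during generation, each step reads the weights for just one token. The sketch counts FLOPs per byte of weights read (arithmetic intensity) for one square weight matrix, assuming an illustrative hidden size and F16 weights (2 bytes each); it ignores activations and the KV cache.

```python
# Toy arithmetic-intensity model for one d x d weight matrix.
# Activations and the KV cache are ignored; weights are read once per pass.

def arithmetic_intensity(n_tokens: int, d: int, bytes_per_weight: float = 2.0) -> float:
    """FLOPs performed per byte of weights read when n_tokens share one weight read."""
    flops = 2 * n_tokens * d * d             # one multiply and one add per weight per token
    weight_bytes = d * d * bytes_per_weight  # the weights are streamed from memory once
    return flops / weight_bytes


if __name__ == "__main__":
    d = 4096  # illustrative hidden size
    print("prefill, 512-token prompt:", arithmetic_intensity(512, d), "FLOPs/byte")
    print("decode, 1 token per step:", arithmetic_intensity(1, d), "FLOPs/byte")
```

With a 512-token prompt the ratio is 512 FLOPs per byte, versus 1 for a single generated token. High-intensity work is limited by compute, where the RTX 4080 leads by roughly 5× on Llama 3 8B; low-intensity work is limited by memory bandwidth, where the two devices are much closer.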

How does quantization affect LLM performance?

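Quantization can significantly affect LLM performance. Lower-precision formats such as Q4 or Q8_0 make the model smaller and speed up generation, but they may reduce accuracy somewhat, more so at 4-bit. F16 keeps higher precision but needs roughly twice the memory of Q8_0 and more than three times that of Q4, and it generates tokens more slowly. Quantization helps prompt processing much less: on the M2 Ultra, Llama 3 8B processes prompts slightly faster in F16 (1202.74 tokens/second) than in Q4 (1023.89 tokens/second).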

Which device is better for beginners?

If you're new to LLMs, the M2 Ultra is a forgiving starting point: its large unified memory lets you experiment with bigger models and higher-precision formats that would not fit on a 16 GB GPU. If you mainly plan to run models of around 8B parameters, the RTX 4080 is faster.

Where can I find more information about LLMs?

- Hugging Face: A popular platform for sharing and exploring LLMs: https://huggingface.co/

- OpenAI: The company behind ChatGPT, a well-known LLM-based assistant: https://openai.com/

Keywords

Apple M2 Ultra 800gb 60cores, NVIDIA 4080_16GB, LLM, Large Language Model, Llama 2, Llama 3, Token Speed, Bandwidth, GPU Cores, Quantization, F16, Q4, Q8, Processing Speed, Generation Speed, AI Hardware, Performance Comparison