7 Key Factors to Consider When Choosing Between Apple M2 Pro 200GB 16-cores and NVIDIA A100 PCIe 80GB for AI

Introduction

The world of artificial intelligence (AI) is rapidly evolving, with large language models (LLMs) like Llama 2 and Llama 3 pushing the boundaries of what's possible. But running these behemoths locally requires serious hardware. This article delves into the choice between two popular contenders: the Apple M2 Pro 200GB 16-core and the NVIDIA A100 PCIe 80GB. A note on the labels: for the M2 Pro, "200GB" refers to its 200 GB/s memory bandwidth and "16-core" to its GPU core count, while the A100's "80GB" is its memory capacity.

We'll dissect seven key factors to help you decide which device best suits your AI projects, focusing on their performance with Llama 2 and Llama 3 models. This isn't a simple "winner takes all" scenario; both have strengths and weaknesses, and understanding these nuances is crucial for making the right choice.

Think of it this way: you're choosing a car. One is a sleek, efficient hybrid – the Apple M2 Pro – perfect for everyday driving. The other is a muscular, high-performance beast – the NVIDIA A100 – ideal for hauling heavy loads and conquering challenging terrain.

Let's explore which one is the right fit for your AI journey!

Apple M2 Pro 200GB 16-cores vs NVIDIA A100 PCIe 80GB: A Comprehensive Comparison

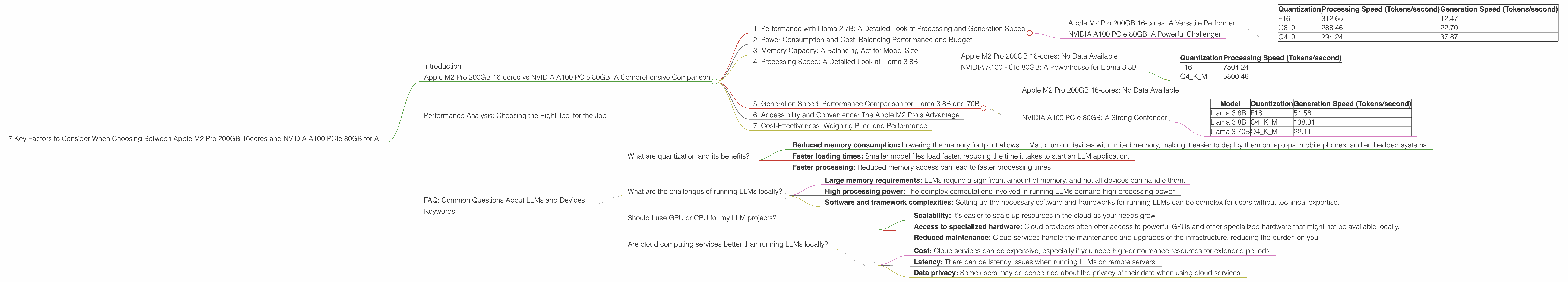

1. Performance with Llama 2 7B: A Detailed Look at Processing and Generation Speed

Apple M2 Pro 200GB 16-cores: A Versatile Performer

The Apple M2 Pro shines with its performance on Llama 2 7B. Whether you opt for F16, Q8_0, or Q4_0 quantization, the M2 Pro delivers impressive results.

Let's dive into the numbers:

| Quantization | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |
|---|---|---|
| F16 | 312.65 | 12.47 |
| Q8_0 | 288.46 | 22.70 |
| Q4_0 | 294.24 | 37.87 |

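The M2 Pro's prompt-processing speed is consistent across quantization levels, staying between roughly 288 and 313 tokens/second. Generation speed, by contrast, improves markedly with more aggressive quantization, roughly tripling from 12.47 tokens/second at F16 to 37.87 at Q4_0. Either way, the M2 Pro is a reliable choice for Llama 2 7B projects.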
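To see what these figures mean for a single request, here is a minimal sketch that turns the two throughput columns into an end-to-end time estimate. It assumes the prompt is processed first at the processing speed and the reply is then generated at the generation speed; the function names and the 512/256 token counts are illustrative choices, not part of the benchmark.

```python
# Throughput figures copied from the M2 Pro Llama 2 7B table (tokens/second).
M2_PRO_LLAMA2_7B = {
    "F16": {"processing": 312.65, "generation": 12.47},
    "Q8_0": {"processing": 288.46, "generation": 22.70},
    "Q4_0": {"processing": 294.24, "generation": 37.87},
}


def estimate_latency_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate total time: prompt processing followed by token generation."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


def latency_table(prompt_tokens, output_tokens):
    """Return estimated seconds per quantization level for one request."""
    return {
        quant: estimate_latency_seconds(
            prompt_tokens, output_tokens, speeds["processing"], speeds["generation"]
        )
        for quant, speeds in M2_PRO_LLAMA2_7B.items()
    }


if __name__ == "__main__":
    # Hypothetical request: a 512-token prompt and a 256-token reply.
    for quant, seconds in latency_table(512, 256).items():
        print(f"{quant}: about {seconds:.1f} s")
```

For a request like this, generation time dominates, which is why the quantization level matters more for response time than the processing column suggests.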

NVIDIA A100 PCIe 80GB: A Powerful Challenger

Unfortunately, we don't have data for the NVIDIA A100 PCIe 80GB's performance on Llama 2 7B. So, we can't directly compare these two devices for Llama 2 7B.

2. Power Consumption and Cost: Balancing Performance and Budget

The Apple M2 Pro is known for its energy efficiency. It is a laptop-class system-on-chip that draws a small fraction of the power of a data-center GPU, which translates to lower operating costs. This makes it an attractive option for those seeking a balance between performance and affordability.

On the other hand, the NVIDIA A100 PCIe 80GB is a power-hungry beast: the card alone is rated at up to 300 W, and it must be installed in a server or workstation with adequate power delivery and cooling. While its raw performance is undeniable, be prepared for a heftier electricity bill.
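A rough way to put numbers on the electricity bill is to compute energy per million generated tokens. The sketch below is only an illustration: the wattages and the electricity price are hypothetical placeholders (sustained draw depends on the whole system and the workload), and the two throughput figures come from different models, so the result is not a like-for-like comparison.

```python
def energy_per_million_tokens_kwh(power_watts, tokens_per_second):
    """Energy in kWh needed to generate one million tokens at a steady power draw."""
    seconds = 1_000_000 / tokens_per_second
    return power_watts * seconds / 3_600_000  # watt-seconds (joules) to kWh


def electricity_cost(power_watts, tokens_per_second, price_per_kwh):
    """Electricity cost of one million generated tokens."""
    return energy_per_million_tokens_kwh(power_watts, tokens_per_second) * price_per_kwh


if __name__ == "__main__":
    PRICE_PER_KWH = 0.30  # hypothetical electricity price

    # Assumed power draws; measure your own system before relying on these.
    scenarios = {
        "A100 (assumed 300 W, Llama 3 8B Q4_K_M at 138.31 tok/s)": (300, 138.31),
        "M2 Pro (assumed 40 W, Llama 2 7B Q4_0 at 37.87 tok/s)": (40, 37.87),
    }
    for name, (watts, tps) in scenarios.items():
        cost = electricity_cost(watts, tps, PRICE_PER_KWH)
        print(f"{name}: {cost:.3f} per million tokens")
```

Note that a faster device finishes sooner, so a higher wattage does not automatically mean a higher cost per token.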

3. Memory Capacity: A Balancing Act for Model Size

The "200GB" in the M2 Pro's label is memory bandwidth (200 GB/s), not capacity. The M2 Pro ships with 16 GB or 32 GB of unified memory, shared between the CPU and GPU. That is enough for quantized 7B and 8B models, but not for Llama 3 70B, whose weights exceed 32 GB even at 4-bit quantization.

The NVIDIA A100 PCIe 80GB has 80 GB of dedicated high-bandwidth memory, more than twice the M2 Pro's maximum. That is enough to hold Llama 3 70B at Q4_K_M, the largest configuration benchmarked in this article, although a 70B model at F16 (roughly 140 GB of weights) would not fit on a single card.
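Whether a model fits comes down to simple arithmetic: parameter count times bits per weight. The sketch below estimates the size of the weights and checks it against a memory budget. The `overhead_fraction` default is a hypothetical allowance for the KV cache and runtime buffers, and the bits-per-weight values in the example are nominal assumptions (4-bit formats usually store a little more than 4 bits per weight because of per-block scales).

```python
def weights_size_gb(params_billions, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_memory(params_billions, bits_per_weight, capacity_gb, overhead_fraction=0.2):
    """Check whether weights plus an assumed overhead fit in the given capacity."""
    needed_gb = weights_size_gb(params_billions, bits_per_weight) * (1 + overhead_fraction)
    return needed_gb <= capacity_gb


if __name__ == "__main__":
    CAPACITY_GB = 80  # the A100 PCIe 80GB
    for name, params, bits in [
        ("7B at F16", 7, 16),
        ("70B at about 4.5 bits", 70, 4.5),
        ("70B at F16", 70, 16),
    ]:
        size = weights_size_gb(params, bits)
        verdict = "fits" if fits_in_memory(params, bits, CAPACITY_GB) else "does not fit"
        print(f"{name}: about {size:.1f} GB of weights, {verdict} in {CAPACITY_GB} GB")
```

Swap in your own device's capacity to run the same check for other hardware.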

4. Processing Speed: A Detailed Look at Llama 3 8B

Apple M2 Pro 200GB 16-cores: No Data Available

Unfortunately, no data is currently available for the Apple M2 Pro's performance with Llama 3 8B. This limits our ability to compare the two devices in this specific context.

NVIDIA A100 PCIe 80GB: A Powerhouse for Llama 3 8B

The NVIDIA A100 PCIe 80GB excels with Llama 3 8B, showcasing impressive processing speed at both the F16 and Q4_K_M quantization levels, as shown below:

| Quantization | Processing Speed (Tokens/second) |
|---|---|
| F16 | 7504.24 |
| Q4_K_M | 5800.48 |

The A100's performance with Llama 3 8B is phenomenal. If you prioritize raw processing power, the A100 is a clear winner.

5. Generation Speed: Performance Comparison for Llama 3 8B and 70B

Apple M2 Pro 200GB 16-cores: No Data Available

As with processing speed, we lack generation data for the Apple M2 Pro with Llama 3 8B and 70B.

NVIDIA A100 PCIe 80GB: A Strong Contender

The NVIDIA A100 PCIe 80GB demonstrates its strength with Llama 3 8B and 70B, delivering commendable generation speeds.

| Model | Quantization | Generation Speed (Tokens/second) |
|---|---|---|
| Llama 3 8B | F16 | 54.56 |
| Llama 3 8B | Q4_K_M | 138.31 |
| Llama 3 70B | Q4_K_M | 22.11 |

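Generation is far slower than prompt processing in every configuration, which is typical: prompt tokens are processed in parallel, while output tokens are produced one at a time. Even so, 22.11 tokens/second on a 70B model is respectable given its size, and Q4_K_M more than doubles the 8B model's generation speed relative to F16 (138.31 vs. 54.56 tokens/second).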
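A common rule of thumb helps explain why bigger models generate more slowly: each new token requires reading roughly all of the weights from memory, so generation speed is capped near memory bandwidth divided by model size. The sketch below applies that rule. The bandwidth figure (about 1,935 GB/s, taken from NVIDIA's published A100 80GB PCIe specification) and the 16 GB size for Llama 3 8B at F16 are assumptions, and real runs fall short of the ceiling for many reasons.

```python
def generation_ceiling_tps(bandwidth_gb_s, model_size_gb):
    """Rough upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / model_size_gb


def bandwidth_efficiency(measured_tps, bandwidth_gb_s, model_size_gb):
    """Fraction of the bandwidth-bound ceiling that a measured speed achieves."""
    return measured_tps / generation_ceiling_tps(bandwidth_gb_s, model_size_gb)


if __name__ == "__main__":
    A100_BANDWIDTH_GB_S = 1935  # assumed from NVIDIA's spec sheet
    LLAMA3_8B_F16_GB = 16       # assumed: about 8 billion weights at 2 bytes each
    MEASURED_TPS = 54.56        # Llama 3 8B F16 generation speed from the table

    ceiling = generation_ceiling_tps(A100_BANDWIDTH_GB_S, LLAMA3_8B_F16_GB)
    share = bandwidth_efficiency(MEASURED_TPS, A100_BANDWIDTH_GB_S, LLAMA3_8B_F16_GB)
    print(f"ceiling: about {ceiling:.0f} tok/s, measured share: {share:.0%}")
```

The same rule explains why quantization speeds up generation: smaller weights mean fewer bytes to read per token.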

6. Accessibility and Convenience: The Apple M2 Pro's Advantage

The Apple M2 Pro, being part of the Mac ecosystem, benefits from its seamless integration with Apple products. This makes it incredibly convenient for developers already invested in the Apple world.

The NVIDIA A100 PCIe 80GB, on the other hand, requires a more involved setup, often necessitating the use of specialized software and frameworks. While this doesn't make it inaccessible, it does demand more technical expertise and may not be as user-friendly for beginners.

7. Cost-Effectiveness: Weighing Price and Performance

The Apple M2 Pro, despite its lower performance, offers better value for its price. For developers on a budget who prioritize affordable performance, the M2 Pro is an attractive choice.

The NVIDIA A100 PCIe 80GB is a premium piece of hardware, commanding a high price tag. It only makes financial sense if you need the peak performance it offers.

Performance Analysis: Choosing the Right Tool for the Job

Both the Apple M2 Pro 200GB 16-cores and the NVIDIA A100 PCIe 80GB have their strengths and weaknesses. Deciding which one is "better" depends entirely on your specific needs and priorities.

For researchers and developers who work with larger models such as Llama 3 70B, or who need high throughput on Llama 3 8B, the NVIDIA A100 PCIe 80GB is the clear choice. It is a data-center GPU rather than a consumer device, and the benchmarks above show processing speeds in the thousands of tokens per second, far beyond anything measured here on the M2 Pro.

However, for those who want a more affordable and accessible option with reasonable performance for smaller models like Llama 2 7B, the Apple M2 Pro 200GB 16-cores is a solid contender. Its cost-effectiveness, energy efficiency, and seamless integration with the Apple ecosystem make it an attractive option for many developers.

Remember that the "best" device is relative to your specific needs. Do you prioritize raw processing power or accessible performance? Are you working with large models or smaller ones? By carefully considering these factors, you can select the perfect tool for your AI projects.

FAQ: Common Questions About LLMs and Devices

What is quantization, and what are its benefits?

Quantization is a technique used to reduce the size of large language models (LLMs) without significantly degrading the quality of their output. It involves converting the model's weights (the parameters that define the model's behavior) from 32-bit floating-point numbers (F32) to smaller data types such as 16-bit floating-point (F16), 8-bit integer (Q8), or 4-bit integer (Q4).

This "compression" offers several benefits:

- Reduced memory consumption: Lowering the memory footprint allows LLMs to run on devices with limited memory, making it easier to deploy them on laptops, mobile phones, and embedded systems.

- Faster loading times: Smaller model files load faster, reducing the time it takes to start an LLM application.

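- Faster generation: Each generated token requires reading the model's weights from memory, so smaller weights mean less data to move and, typically, more tokens per second. In the Llama 2 7B table above, generation speed rises from 12.47 tokens/second at F16 to 37.87 at Q4_0.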
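To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization applied to one small block of weights. It is a simplified illustration, not the exact on-disk format used by llama.cpp or any other library: each block stores one floating-point scale plus one small integer per weight, and the function names are made up for this example.

```python
def quantize_block(values):
    """Quantize one block of floats to integers in [-127, 127] plus a shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the scale and integer codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.0, 0.49]  # toy values, not real model weights
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("codes:", codes)
    print(f"largest round-trip error: {worst:.5f}")
```

Each weight now needs 8 bits instead of 16 or 32, at the price of a small rounding error bounded by half the scale. Lower-bit formats follow the same idea with a coarser grid.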

What are the challenges of running LLMs locally?

Running LLMs locally can be challenging for several reasons:

- Large memory requirements: LLMs require a significant amount of memory, and not all devices can handle them.

- High processing power: The complex computations involved in running LLMs demand high processing power.

- Software and framework complexities: Setting up the necessary software and frameworks for running LLMs can be complex for users without technical expertise.

Should I use GPU or CPU for my LLM projects?

GPUs (Graphics Processing Units) are generally better suited for running LLMs than CPUs (Central Processing Units). GPUs are designed to efficiently handle parallel computations, making them ideal for the large matrix operations involved in LLM inference. CPUs have far fewer cores and are optimized for sequential work, so they are typically much slower at these operations.

Are cloud computing services better than running LLMs locally?

Cloud computing services offer several benefits over running LLMs locally:

- Scalability: It's easier to scale up resources in the cloud as your needs grow.

- Access to specialized hardware: Cloud providers often offer access to powerful GPUs and other specialized hardware that might not be available locally.

- Reduced maintenance: Cloud services handle the maintenance and upgrades of the infrastructure, reducing the burden on you.

However, there are also downsides to cloud services:

- Cost: Cloud services can be expensive, especially if you need high-performance resources for extended periods.

- Latency: There can be latency issues when running LLMs on remote servers.

- Data privacy: Some users may be concerned about the privacy of their data when using cloud services.
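The cost trade-off can be framed as a break-even calculation: how many hours of use before buying hardware becomes cheaper than renting it? The sketch below shows the arithmetic. Every price in it is a hypothetical placeholder, so substitute real quotes for your hardware, cloud provider, and electricity rate.

```python
def break_even_hours(hardware_price, cloud_hourly_rate, local_hourly_running_cost=0.0):
    """Hours of use after which buying hardware costs less than renting."""
    saving_per_hour = cloud_hourly_rate - local_hourly_running_cost
    if saving_per_hour <= 0:
        # Renting is never more expensive per hour, so buying never pays off.
        return float("inf")
    return hardware_price / saving_per_hour


if __name__ == "__main__":
    # All figures are made-up examples, not real prices.
    hours = break_even_hours(
        hardware_price=2000.0,
        cloud_hourly_rate=2.0,
        local_hourly_running_cost=0.5,
    )
    print(f"break-even after about {hours:.0f} hours of use")
```

If you only need a powerful GPU for occasional experiments, the cloud may stay cheaper for a long time; for sustained daily use, local hardware tends to pay for itself sooner.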

Keywords

Apple M2 Pro, NVIDIA A100 PCIe, LLMs, Llama 2, Llama 3, processing speed, generation speed, quantization, F16, Q8_0, Q4_0, Q4_K_M, memory capacity, power consumption, cost-effectiveness, AI, machine learning, deep learning, GPU, CPU, cloud computing