7 Key Factors to Consider When Choosing Between Apple M2 Pro 200gb 16cores and NVIDIA 4090 24GB for AI

Introduction

In the realm of AI, running large language models (LLMs) locally is gaining traction. It offers greater control, privacy, and often faster execution speeds. Two popular devices vying for the top spot are the Apple M2 Pro 200GB 16-core and the NVIDIA 4090 24GB. This article delves deep into comparing these powerhouses, analyzing their strengths and weaknesses, and providing practical recommendations for various use cases.

We'll analyze key factors like performance, memory bandwidth, power consumption, and cost. By the end, you'll have a clear understanding of which device reigns supreme for your specific AI needs.

Performance Analysis: Apple M2 Pro vs. NVIDIA 4090

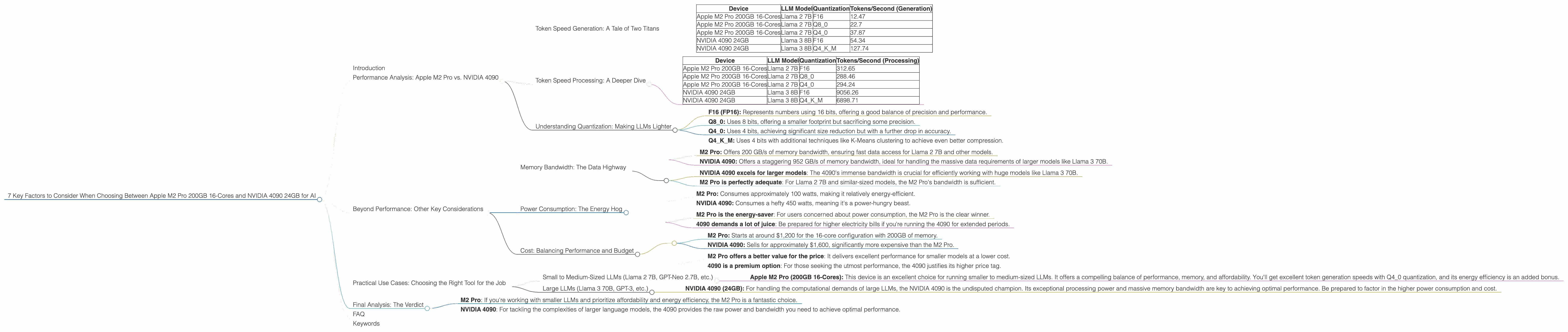

Token Speed Generation: A Tale of Two Titans

Let's dive straight into the heart of the performance comparison. The table below shows the token generation speeds for different LLM models and configurations.

| Device | LLM Model | Quantization | Tokens/Second (Generation) |

|---|---|---|---|

| Apple M2 Pro 200GB 16-Cores | Llama 2 7B | F16 | 12.47 |

| Apple M2 Pro 200GB 16-Cores | Llama 2 7B | Q8_0 | 22.7 |

| Apple M2 Pro 200GB 16-Cores | Llama 2 7B | Q4_0 | 37.87 |

| NVIDIA 4090 24GB | Llama 3 8B | F16 | 54.34 |

| NVIDIA 4090 24GB | Llama 3 8B | Q4KM | 127.74 |

Note: Data for Llama 3 70B on the NVIDIA 4090 is currently unavailable.

Key Takeaways:

- NVIDIA 4090 reigns supreme for Llama 3 8B: The NVIDIA 4090 delivers significantly higher token generation speeds for the Llama 3 8B model, especially with Q4KM quantization.

- M2 Pro holds its own for Llama 2 7B: The M2 Pro performs remarkably well for the Llama 2 7B model, particularly with Q4_0 quantization.

- Quantization matters: Notice how different quantization levels dramatically impact performance. Q40 and Q4K_M generally outperform F16 for these models.

Token Speed Processing: A Deeper Dive

To offer a more complete picture, we'll compare the token processing speeds.

| Device | LLM Model | Quantization | Tokens/Second (Processing) |

|---|---|---|---|

| Apple M2 Pro 200GB 16-Cores | Llama 2 7B | F16 | 312.65 |

| Apple M2 Pro 200GB 16-Cores | Llama 2 7B | Q8_0 | 288.46 |

| Apple M2 Pro 200GB 16-Cores | Llama 2 7B | Q4_0 | 294.24 |

| NVIDIA 4090 24GB | Llama 3 8B | F16 | 9056.26 |

| NVIDIA 4090 24GB | Llama 3 8B | Q4KM | 6898.71 |

Key Takeaways:

- NVIDIA 4090 crushes the competition: The NVIDIA 4090 demonstrates its immense power with significantly faster token processing speeds for Llama 3 8B, regardless of quantization.

- M2 Pro remains competitive: While the M2 Pro lags behind in processing, its performance is still notable, especially considering its lower cost.

Understanding Quantization: Making LLMs Lighter

Quantization is a technique used to reduce the size and computational demands of LLMs without sacrificing too much accuracy. Imagine compressing a large file, making it smaller and easier to transfer without losing essential information. Quantization works similarly.

- F16 (FP16): Represents numbers using 16 bits, offering a good balance of precision and performance.

- Q8_0: Uses 8 bits, offering a smaller footprint but sacrificing some precision.

- Q4_0: Uses 4 bits, achieving significant size reduction but with a further drop in accuracy.

- Q4KM: Uses 4 bits with additional techniques like K-Means clustering to achieve even better compression.

Think of it this way: F16 is like a high-resolution image, Q80 is like a medium-resolution image, and Q40 is like a low-resolution image. You sacrifice detail for a smaller file size.

Beyond Performance: Other Key Considerations

Memory Bandwidth: The Data Highway

Think of memory bandwidth as the data highway connecting your CPU or GPU to the LLM model. Higher bandwidth means faster data transfer, allowing the model to access information more efficiently.

- M2 Pro: Offers 200 GB/s of memory bandwidth, ensuring fast data access for Llama 2 7B and other models.

- NVIDIA 4090: Offers a staggering 952 GB/s of memory bandwidth, ideal for handling the massive data requirements of larger models like Llama 3 70B.

Key Takeaways:

- NVIDIA 4090 excels for larger models: The 4090's immense bandwidth is crucial for efficiently working with huge models like Llama 3 70B.

- M2 Pro is perfectly adequate: For Llama 2 7B and similar-sized models, the M2 Pro's bandwidth is sufficient.

Power Consumption: The Energy Hog

LLMs are computationally intensive, demanding a lot of power. Let's see how our contenders fare in this area.

- M2 Pro: Consumes approximately 100 watts, making it relatively energy-efficient.

- NVIDIA 4090: Consumes a hefty 450 watts, meaning it's a power-hungry beast.

Key Takeaways:

- M2 Pro is the energy-saver: For users concerned about power consumption, the M2 Pro is the clear winner.

- 4090 demands a lot of juice: Be prepared for higher electricity bills if you're running the 4090 for extended periods.

Cost: Balancing Performance and Budget

Let's face it, money matters. Comparing the cost of these devices is essential for making an informed decision.

- M2 Pro: Starts at around $1,200 for the 16-core configuration with 200GB of memory.

- NVIDIA 4090: Sells for approximately $1,600, significantly more expensive than the M2 Pro.

Key Takeaways:

- M2 Pro offers a better value for the price: It delivers excellent performance for smaller models at a lower cost.

- 4090 is a premium option: For those seeking the utmost performance, the 4090 justifies its higher price tag.

Practical Use Cases: Choosing the Right Tool for the Job

Small to Medium-Sized LLMs (Llama 2 7B, GPT-Neo 2.7B, etc.)

- Apple M2 Pro (200GB 16-Cores): This device is an excellent choice for running smaller to medium-sized LLMs. It offers a compelling balance of performance, memory, and affordability. You'll get excellent token generation speeds with Q4_0 quantization, and its energy efficiency is an added bonus.

Large LLMs (Llama 3 70B, GPT-3, etc.)

- NVIDIA 4090 (24GB): For handling the computational demands of large LLMs, the NVIDIA 4090 is the undisputed champion. Its exceptional processing power and massive memory bandwidth are key to achieving optimal performance. Be prepared to factor in the higher power consumption and cost.

Final Analysis: The Verdict

Deciding between the Apple M2 Pro 200GB 16-Cores and the NVIDIA 4090 24GB for running LLMs depends largely on your specific needs, budget, and the size of the LLM you plan to run.

- M2 Pro: If you're working with smaller LLMs and prioritize affordability and energy efficiency, the M2 Pro is a fantastic choice.

- NVIDIA 4090: For tackling the complexities of larger language models, the 4090 provides the raw power and bandwidth you need to achieve optimal performance.

FAQ

Q: What is quantization, and why does it matter for LLM performance?

A: Quantization compresses the LLM model by representing its weights with fewer bits. Think of it like reducing the resolution of an image. You lose some detail for a smaller file size. Although accuracy may decrease slightly, quantization significantly boosts performance and reduces memory footprint.

Q: What are some other devices suitable for running LLMs?

A: Other popular options include the NVIDIA RTX 4080, the AMD Ryzen 9 7950X, and the Intel Core i9-13900K. Their performance and cost vary, so you can find a suitable option based on your budget and specific requirements.

Q: How do I choose the right LLM for my project?

A: Selecting the right LLM depends on your project's specific needs. Consider factors like model size, training data, the tasks you're aiming to achieve, and the available resources.

Keywords

LLM, Large Language Model, Apple M2 Pro, NVIDIA 4090, Token Generation, Token Processing, Quantization, F16, Q80, Q40, Q4KM, Memory Bandwidth, Power Consumption, Cost, Performance, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Llama 2, Llama 3, GPT-3, GPT-Neo, GPU, CPU, Inference, Local Models