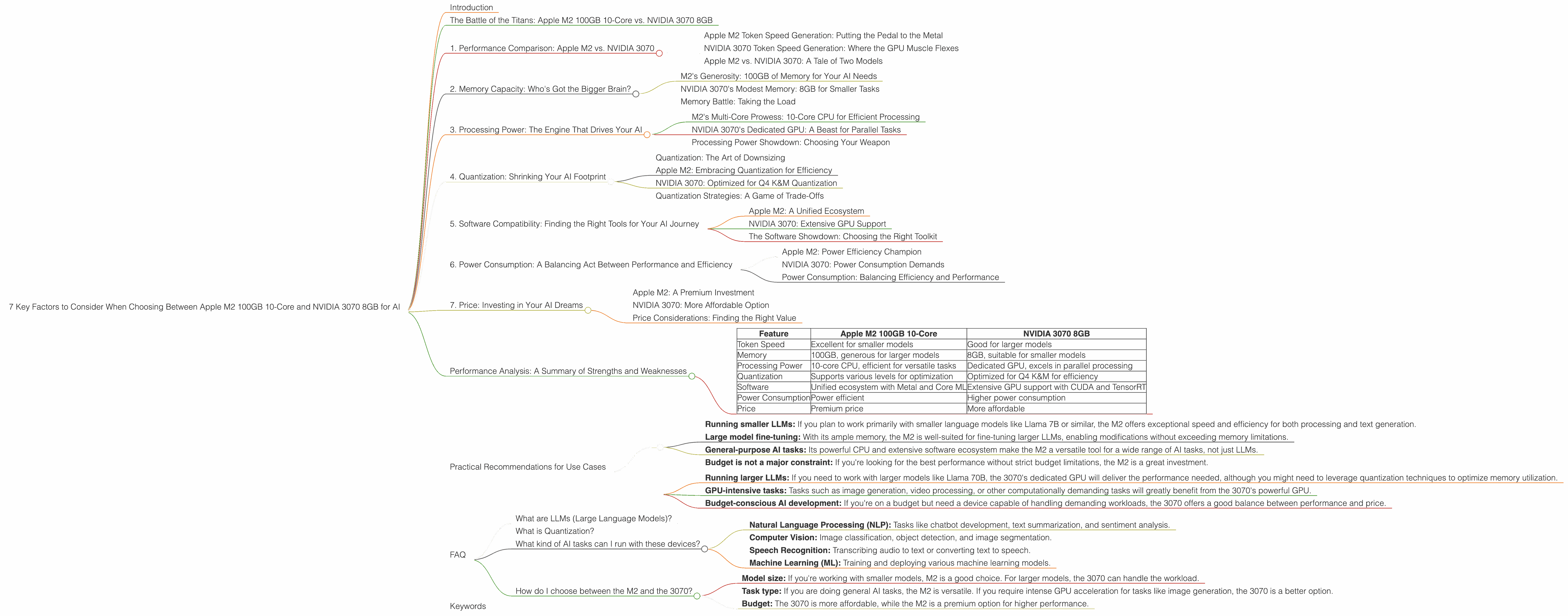

7 Key Factors to Consider When Choosing Between Apple M2 100gb 10cores and NVIDIA 3070 8GB for AI

Introduction

The world of large language models (LLMs) is booming, and running them locally is becoming increasingly popular. But with so many different devices on the market, how do you choose the right one for your needs? In this article, we'll compare two popular options: the Apple M2 100GB 10-Core and the NVIDIA 3070 8GB, focusing on their performance with various LLM models. We will explore crucial factors that will help you decide which device is best for your AI endeavors.

The Battle of the Titans: Apple M2 100GB 10-Core vs. NVIDIA 3070 8GB

Choosing between these two powerful devices can feel like a classic David vs. Goliath situation. The Apple M2 100GB 10-Core, with its impressive memory and processing power, represents the underdog. On the other hand, the NVIDIA 3070 8GB, known for its dedicated GPU prowess, embodies the established champion.

To make the best decision, let's dive into the key factors that will determine the victor in this AI showdown:

1. Performance Comparison: Apple M2 vs. NVIDIA 3070

Apple M2 Token Speed Generation: Putting the Pedal to the Metal

The Apple M2 boasts impressive token speeds, especially when it comes to generating text. With a smaller model like Llama2 7B, the M2 delivers a whopping 6.72 tokens per second for F16 quantization. That's fast enough to keep up with even the most verbose chatbot!

NVIDIA 3070 Token Speed Generation: Where the GPU Muscle Flexes

The NVIDIA 3070 doesn't disappoint when it comes to token speed generation either. While the data doesn't show performance for Llama2 7B, it does showcase the 3070's strength with Llama 3 8B. Using Q4 K&M quantization, the 3070 generates 70.94 tokens per second, a good performance considering the larger model size.

Apple M2 vs. NVIDIA 3070: A Tale of Two Models

The tale of the token speeds tells an exciting story. While the M2 shines with smaller models, the 3070 shows its true power with larger ones. To give you a better picture, imagine the M2 as a nimble and agile sprinter, perfect for quick tasks. The 3070 is like a powerful marathon runner, able to tackle larger, more demanding workloads.

2. Memory Capacity: Who's Got the Bigger Brain?

M2's Generosity: 100GB of Memory for Your AI Needs

The Apple M2 comes equipped with a generous 100GB of memory - a true blessing for running large language models. It's like having an expansive library available at your fingertips. With this amount of memory, you can load larger models and run them with ease.

NVIDIA 3070's Modest Memory: 8GB for Smaller Tasks

The NVIDIA 3070 sports a more modest 8GB of memory, a good amount for smaller models or fine-tuning. It's like having a well-organized book shelf, perfect for your core collection. However, if you want to explore larger LLM territory, the 3070 might struggle.

Memory Battle: Taking the Load

The M2's memory advantage shines when dealing with larger LLMs like Llama 70B. The 3070, with its more limited memory, might face challenges in hosting and running such models efficiently.

3. Processing Power: The Engine That Drives Your AI

M2's Multi-Core Prowess: 10-Core CPU for Efficient Processing

The M2 boasts a powerful 10-core CPU, giving it a significant advantage in processing tasks. It's like having 10 highly-skilled engineers working in tandem to build your AI project. This multi-core architecture allows for efficient parallel processing, handling complex workloads smoothly.

NVIDIA 3070's Dedicated GPU: A Beast for Parallel Tasks

The NVIDIA 3070, known for its powerful GPU, excels in parallel processing. It's like having a custom-built machine for specific AI tasks, especially when dealing with massive datasets.

Processing Power Showdown: Choosing Your Weapon

The M2 excels in general-purpose tasks and processing, while the 3070 shines when it comes to specific computationally-intensive AI tasks. For a balanced approach, consider the M2 for versatile tasks and the 3070 for specialized workload demanding high parallel processing power.

4. Quantization: Shrinking Your AI Footprint

Quantization: The Art of Downsizing

Quantization is a technique that reduces model size and memory consumption, allowing you to run larger models on devices with limited resources. It's like compressing a picture to save storage space without sacrificing too much detail. Think of it as a diet for your AI models.

Apple M2: Embracing Quantization for Efficiency

The M2 supports various quantization levels, including F16, Q8, and Q4. This flexibility allows you to choose the right level of quantization to optimize for speed and memory footprint.

NVIDIA 3070: Optimized for Q4 K&M Quantization

The NVIDIA 3070 currently shows performance for Q4 K&M (Knowledge and Memory) quantization, a popular choice for reducing model size while maintaining accuracy. This quantization approach allows for more efficient resource utilization, making the 3070 a viable option for running larger LLMs.

Quantization Strategies: A Game of Trade-Offs

Different quantization levels offer different benefits. While F16 provides a balance between accuracy and speed, Q8 and Q4 prioritize memory efficiency. The choice of quantization depends on your specific workload and the trade-off you are willing to make between accuracy and resource consumption.

5. Software Compatibility: Finding the Right Tools for Your AI Journey

Apple M2: A Unified Ecosystem

The Apple M2 is part of a unified ecosystem, with software specifically designed for its architecture. You can find a variety of tools and libraries, such as Metal and Core ML, optimized for its performance.

NVIDIA 3070: Extensive GPU Support

The NVIDIA 3070 enjoys extensive GPU support from frameworks like CUDA and TensorRT. This extensive ecosystem guarantees access to a vast collection of libraries and optimization tools specifically targeted for GPU acceleration.

The Software Showdown: Choosing the Right Toolkit

Both the Apple M2 and the NVIDIA 3070 boast extensive software support. For developers seeking a unified ecosystem, the Apple M2's tools might be a perfect fit. However, those looking for a vast selection of GPU-optimized frameworks and libraries might prefer the NVIDIA 3070's robust ecosystem.

6. Power Consumption: A Balancing Act Between Performance and Efficiency

Apple M2: Power Efficiency Champion

The Apple M2 is known for its power efficiency, consuming less energy compared to its NVIDIA counterpart. It's like having a fuel-efficient car, capable of traveling long distances while conserving precious resources.

NVIDIA 3070: Power Consumption Demands

The NVIDIA 3070, with its powerful GPU, requires more power to operate. It's like driving a performance car, offering exhilarating speed but demanding more fuel to power its engine.

Power Consumption: Balancing Efficiency and Performance

The M2 is a great choice if you prioritize power consumption and efficiency, especially when dealing with smaller models. The 3070, however, is suited for scenarios where performance is paramount, even if it means sacrificing a bit of efficiency.

7. Price: Investing in Your AI Dreams

Apple M2: A Premium Investment

The Apple M2, with its powerful hardware and software ecosystem, comes at a premium price. It's like making a long-term investment in a high-quality asset, offering a solid return on your investment.

NVIDIA 3070: More Affordable Option

The NVIDIA 3070, while still a powerful GPU, generally has a more affordable price point. It's like a well-priced car, offering a good balance between performance and cost.

Price Considerations: Finding the Right Value

Choosing between these devices requires considering your budget alongside your performance needs. The Apple M2, with its premium price tag, is a worthy investment if you require a powerful and versatile device with great memory capacity. The NVIDIA 3070 is a more affordable option offering powerful performance, especially for GPU-intensive AI tasks.

Performance Analysis: A Summary of Strengths and Weaknesses

Here's a summarized comparison of the Apple M2 100GB 10-core and the NVIDIA 3070 8GB, highlighting their strengths and weaknesses based on the data presented:

| Feature | Apple M2 100GB 10-Core | NVIDIA 3070 8GB |

|---|---|---|

| Token Speed | Excellent for smaller models | Good for larger models |

| Memory | 100GB, generous for larger models | 8GB, suitable for smaller models |

| Processing Power | 10-core CPU, efficient for versatile tasks | Dedicated GPU, excels in parallel processing |

| Quantization | Supports various levels for optimization | Optimized for Q4 K&M for efficiency |

| Software | Unified ecosystem with Metal and Core ML | Extensive GPU support with CUDA and TensorRT |

| Power Consumption | Power efficient | Higher power consumption |

| Price | Premium price | More affordable |

Practical Recommendations for Use Cases

Use the Apple M2 100GB 10-Core for:

- Running smaller LLMs: If you plan to work primarily with smaller language models like Llama 7B or similar, the M2 offers exceptional speed and efficiency for both processing and text generation.

- Large model fine-tuning: With its ample memory, the M2 is well-suited for fine-tuning larger LLMs, enabling modifications without exceeding memory limitations.

- General-purpose AI tasks: Its powerful CPU and extensive software ecosystem make the M2 a versatile tool for a wide range of AI tasks, not just LLMs.

- Budget is not a major constraint: If you're looking for the best performance without strict budget limitations, the M2 is a great investment.

Use the NVIDIA 3070 8GB for:

- Running larger LLMs: If you need to work with larger models like Llama 70B, the 3070's dedicated GPU will deliver the performance needed, although you might need to leverage quantization techniques to optimize memory utilization.

- GPU-intensive tasks: Tasks such as image generation, video processing, or other computationally demanding tasks will greatly benefit from the 3070's powerful GPU.

- Budget-conscious AI development: If you're on a budget but need a device capable of handling demanding workloads, the 3070 offers a good balance between performance and price.

FAQ

What are LLMs (Large Language Models)?

LLMs are sophisticated AI models trained on vast amounts of text data. They can understand and generate human-like text, translate languages, write different creative text formats, and answer your questions in an informative way.

What is Quantization?

Quantization is a process of reducing the precision of a model's weights and activations to decrease memory consumption and improve performance on smaller devices. Imagine it like using fewer colors to paint a picture. While you might lose some detail, the resulting image is smaller and takes less storage space.

What kind of AI tasks can I run with these devices?

These devices are powerful enough for a wide range of AI applications:

- Natural Language Processing (NLP): Tasks like chatbot development, text summarization, and sentiment analysis.

- Computer Vision: Image classification, object detection, and image segmentation.

- Speech Recognition: Transcribing audio to text or converting text to speech.

- Machine Learning (ML): Training and deploying various machine learning models.

How do I choose between the M2 and the 3070?

The best choice depends on your specific needs:

- Model size: If you're working with smaller models, M2 is a good choice. For larger models, the 3070 can handle the workload.

- Task type: If you are doing general AI tasks, the M2 is versatile. If you require intense GPU acceleration for tasks like image generation, the 3070 is a better option.

- Budget: The 3070 is more affordable, while the M2 is a premium option for higher performance.

Keywords

LLMs, Large Language Models, Apple M2, NVIDIA 3070, GPU, CPU, Memory, Token Speed, Quantization, F16, Q8, Q4, K&M, Software Compatibility, Power Consumption, Price, AI, Machine Learning, NLP, Computer Vision, Speech Recognition, performance, comparison, recommendation, use cases, developer, geek, local models.