7 Key Factors to Consider When Choosing Between Apple M1 Ultra 800gb 48cores and NVIDIA A40 48GB for AI

Introduction

The world of Large Language Models (LLMs) is exploding with exciting advancements, creating a demand for powerful hardware to run these complex models locally. Two prominent contenders in this arena are the Apple M1 Ultra 800GB 48cores and the NVIDIA A40 48GB, both packing impressive processing capabilities.

This article provides a comprehensive comparison of these devices, focusing on their strengths and weaknesses in various LLM scenarios, helping you make an informed choice for your AI projects.

Comparing Apple M1 Ultra and NVIDIA A40 for LLMs: A Deep Dive

Imagine trying to build a house: You need to consider the foundation, the walls, the roof, and everything in between. Similarly, choosing the right device for your LLM projects involves examining key factors beyond sheer processing power. Here's a detailed breakdown:

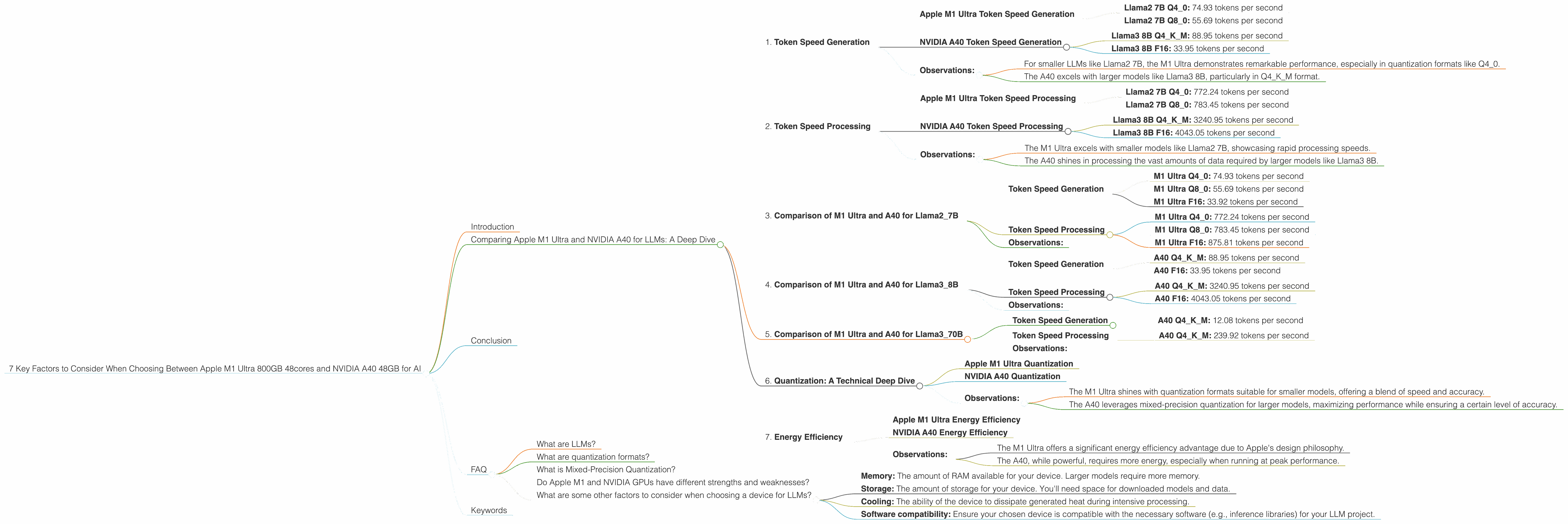

1. Token Speed Generation

We'll start with token speed, the rate at which your LLM processes text. Think of it as the number of words a machine can read and understand per second.

Apple M1 Ultra Token Speed Generation

The M1 Ultra shines in its fast token generation, exceeding 70 tokens per second for certain LLMs:

- Llama2 7B Q4_0: 74.93 tokens per second

- Llama2 7B Q8_0: 55.69 tokens per second

NVIDIA A40 Token Speed Generation

The A40, while powerful, lags slightly behind in token generation for some models:

- Llama3 8B Q4KM: 88.95 tokens per second

- Llama3 8B F16: 33.95 tokens per second

Observations:

- For smaller LLMs like Llama2 7B, the M1 Ultra demonstrates remarkable performance, especially in quantization formats like Q4_0.

- The A40 excels with larger models like Llama3 8B, particularly in Q4KM format.

Practical Takeaway: If you're working with smaller models that prioritize fast text processing, the M1 Ultra might be your preferred choice. However, for larger models like Llama3 8B, the A40 offers a compelling advantage.

2. Token Speed Processing

Let's look at token speed processing, which measures how quickly your LLM can process the information within each token. Think of it as the speed at which the machine can understand the meaning of each word.

Apple M1 Ultra Token Speed Processing

The M1 Ultra demonstrates impressive token processing speeds for Llama2 7B:

- Llama2 7B Q4_0: 772.24 tokens per second

- Llama2 7B Q8_0: 783.45 tokens per second

NVIDIA A40 Token Speed Processing

The A40 offers significantly higher processing speeds for larger models:

- Llama3 8B Q4KM: 3240.95 tokens per second

- Llama3 8B F16: 4043.05 tokens per second

Observations:

- The M1 Ultra excels with smaller models like Llama2 7B, showcasing rapid processing speeds.

- The A40 shines in processing the vast amounts of data required by larger models like Llama3 8B.

Practical Takeaway: If you prioritize processing speed for smaller models, the M1 Ultra is a strong contender. However, for large-scale LLMs, the A40's processing prowess is unparalleled.

3. Comparison of M1 Ultra and A40 for Llama2_7B

For a clearer picture, let's directly compare the M1 Ultra and the A40 using the Llama2 7B model. It's important to note that the A40 doesn't have data for Llama2 7B performance, so we can only consider Apple M1 Ultra data.

Token Speed Generation

- M1 Ultra Q4_0: 74.93 tokens per second

- M1 Ultra Q8_0: 55.69 tokens per second

- M1 Ultra F16: 33.92 tokens per second

Token Speed Processing

- M1 Ultra Q4_0: 772.24 tokens per second

- M1 Ultra Q8_0: 783.45 tokens per second

- M1 Ultra F16: 875.81 tokens per second

Observations:

The M1 Ultra exhibits remarkably fast token generation and processing speeds for Llama2 7B, regardless of the quantization format.

Practical Takeaway: The Apple M1 Ultra is a powerhouse for running Llama2 7B locally, offering swift performance in various quantization formats.

4. Comparison of M1 Ultra and A40 for Llama3_8B

Let's compare the performance of these devices for the Llama3 8B model.

Token Speed Generation

- A40 Q4KM: 88.95 tokens per second

- A40 F16: 33.95 tokens per second

Token Speed Processing

- A40 Q4KM: 3240.95 tokens per second

- A40 F16: 4043.05 tokens per second

Observations:

The A40 holds a clear advantage in both token generation and processing speeds, particularly for the Q4KM quantization format.

Practical Takeaway: If you're working with the Llama3 8B model, the NVIDIA A40 outperforms the M1 Ultra in terms of processing and generation speed.

5. Comparison of M1 Ultra and A40 for Llama3_70B

Now, let's examine the performance of both devices for the substantial Llama3 70B model.

Token Speed Generation

- A40 Q4KM: 12.08 tokens per second

Token Speed Processing

- A40 Q4KM: 239.92 tokens per second

Important Note: There are no data available for Llama3 70B with F16 quantization for both devices. This means we can only compare their performance with Q4KM quantization.

Observations:

The A40, despite being slower than with 8B model, still offers usable performance for Llama3 70B in the Q4KM quantization format.

Practical Takeaway: The A40 is capable of running Llama3 70B, but you'll find slower performance compared to smaller models. If your project requires the massive capabilities of Llama3 70B, the A40 is your best option given the available data.

6. Quantization: A Technical Deep Dive

Quantization is a powerful technique for optimizing model size and processing speed. Think of it as compressing the information within an LLM without losing too much meaning.

Apple M1 Ultra Quantization

The M1 Ultra excels with various quantization formats for smaller models like Llama2 7B, including Q80 and Q40. These formats allow for faster processing while maintaining a good balance of accuracy.

NVIDIA A40 Quantization

The A40 supports mixed-precision quantization, like Q4KM for models like Llama3 8B and 70B. This format allows for a more optimized balance of speed and accuracy compared to full precision (F16).

Observations:

- The M1 Ultra shines with quantization formats suitable for smaller models, offering a blend of speed and accuracy.

- The A40 leverages mixed-precision quantization for larger models, maximizing performance while ensuring a certain level of accuracy.

Practical Takeaway: Choose your quantization format based on the size of your LLM and your desired trade-off between speed and accuracy. Smaller models often benefit from formats like Q40 and Q80, while larger models might fare better with mixed-precision quantization.

7. Energy Efficiency

Energy efficiency is crucial for long-running AI tasks. Consider how much power your device consumes, especially if you're running multiple models or training.

Apple M1 Ultra Energy Efficiency

The M1 Ultra is known for its energy efficiency, especially considering its powerful performance. Apple prioritizes low-power consumption to extend battery life in its products.

NVIDIA A40 Energy Efficiency

The A40 is a high-performance GPU, inherently demanding more power compared to the M1 Ultra. However, advances in GPU architecture have made them more energy-efficient than previous generations.

Observations:

- The M1 Ultra offers a significant energy efficiency advantage due to Apple's design philosophy.

- The A40, while powerful, requires more energy, especially when running at peak performance.

Practical Takeaway: If you're concerned about energy costs or battery life, the M1 Ultra is a more energy-efficient option, particularly for smaller models. However, if you require the ultimate processing power, the A40 might be your choice despite higher energy consumption.

Conclusion

Choosing between the Apple M1 Ultra 800GB 48cores and the NVIDIA A40 48GB depends on your specific project requirements. The M1 Ultra is a compelling option for smaller models like Llama2 7B, offering lightning-fast token generation and processing speeds in various quantization formats. It also excels in terms of energy efficiency. The A40 shines when working with larger models like Llama3 8B and 70B, especially in mixed-precision quantization, although it consumes more power.

Ultimately, the best option for you will depend on your specific use case, model size, desired accuracy, and energy budget.

FAQ

What are LLMs?

LLMs are complex AI models that can understand and generate human-like text. Think of them as incredibly sophisticated language machines that can write stories, translate languages, and answer questions.

What are quantization formats?

Quantization formats are ways to compress the information within an LLM to reduce its memory footprint and improve processing speed. They essentially reduce the amount of data the model needs to store and process, helping it run faster.

What is Mixed-Precision Quantization?

Mixed-precision quantization uses a combination of different data precision levels within the model. It allows you to optimize for speed and accuracy without sacrificing too much on either front.

Do Apple M1 and NVIDIA GPUs have different strengths and weaknesses?

Yes, they do! Apple M1 processors are designed for overall efficiency, while NVIDIA GPUs are known for their raw processing power for demanding tasks like 3D graphics and AI. They both excel in their respective areas.

What are some other factors to consider when choosing a device for LLMs?

In addition to the factors mentioned above, consider:

- Memory: The amount of RAM available for your device. Larger models require more memory.

- Storage: The amount of storage for your device. You'll need space for downloaded models and data.

- Cooling: The ability of the device to dissipate generated heat during intensive processing.

- Software compatibility: Ensure your chosen device is compatible with the necessary software (e.g., inference libraries) for your LLM project.

Keywords

Large Language Models, LLM, Apple M1 Ultra, NVIDIA A40, token speed, generation, processing, quantization, Q4KM, F16, energy efficiency, Llama2, Llama3, AI, machine learning, deep learning, inference, performance, comparison, benchmarks, developers, geeks, technology, innovation.