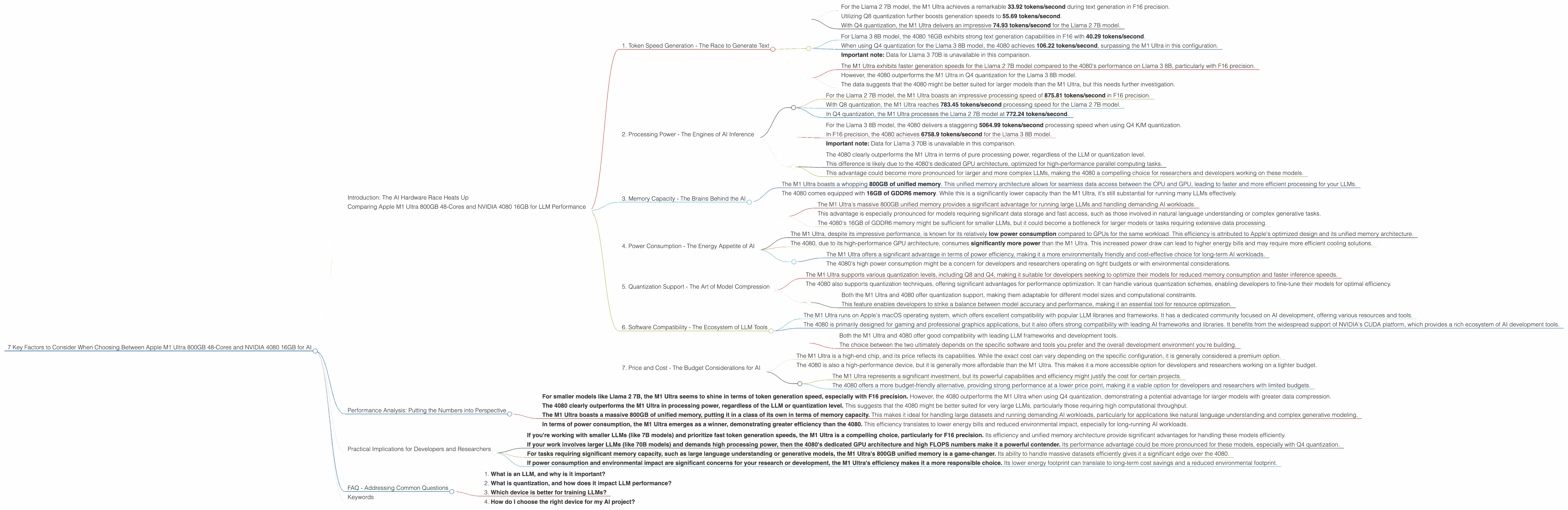

7 Key Factors to Consider When Choosing Between Apple M1 Ultra 800gb 48cores and NVIDIA 4080 16GB for AI

Introduction: The AI Hardware Race Heats Up

The world of artificial intelligence (AI) is experiencing a renaissance, fueled by the rapid advancements in large language models (LLMs). These powerful AI systems are revolutionizing how we interact with computers, with applications ranging from chatbots to creative writing tools and even scientific discovery. To unleash the full potential of LLMs, researchers and developers need powerful hardware that can handle the intensive computational demands of training and running these models.

Two heavyweight contenders in this hardware race are Apple's M1 Ultra 800GB 48-cores processor and NVIDIA's GeForce RTX 4080 16GB graphics card. Both devices boast impressive performance, but they cater to different needs and offer unique advantages.

This article delves into a detailed comparison of these two powerhouses, providing insights into their performance characteristics, strengths, and weaknesses. By examining seven key factors - including token speed generation, processing power, and memory capacity - we'll help you determine which device aligns best with your specific AI requirements.

Comparing Apple M1 Ultra 800GB 48-Cores and NVIDIA 4080 16GB for LLM Performance

1. Token Speed Generation - The Race to Generate Text

Token speed generation is a crucial metric for LLMs, reflecting how quickly a device can process and generate text. This metric is often measured in tokens per second (TPS). A higher TPS translates to faster inference speeds, enabling smoother and more responsive user experiences with AI applications.

Apple M1 Ultra 800GB 48-Cores:

- For the Llama 2 7B model, the M1 Ultra achieves a remarkable 33.92 tokens/second during text generation in F16 precision.

- Utilizing Q8 quantization further boosts generation speeds to 55.69 tokens/second.

- With Q4 quantization, the M1 Ultra delivers an impressive 74.93 tokens/second for the Llama 2 7B model.

NVIDIA 4080 16GB:

- For Llama 3 8B model, the 4080 16GB exhibits strong text generation capabilities in F16 with 40.29 tokens/second.

- When using Q4 quantization for the Llama 3 8B model, the 4080 achieves 106.22 tokens/second, surpassing the M1 Ultra in this configuration.

- Important note: Data for Llama 3 70B is unavailable in this comparison.

Key Takeaways:

- The M1 Ultra exhibits faster generation speeds for the Llama 2 7B model compared to the 4080's performance on Llama 3 8B, particularly with F16 precision.

- However, the 4080 outperforms the M1 Ultra in Q4 quantization for the Llama 3 8B model.

The data suggests that the 4080 might be better suited for larger models than the M1 Ultra, but this needs further investigation.

Imagine this: You're using an AI-powered chatbot for customer service. A higher token speed means faster response times, leading to a more seamless and satisfying user experience.

2. Processing Power - The Engines of AI Inference

Behind the scenes, the AI model's processing power is the driving force behind its performance. This power is measured in FLOPS (floating-point operations per second), signifying the number of calculations a device can execute in a given timeframe.

Apple M1 Ultra 800GB 48-Cores:

- For the Llama 2 7B model, the M1 Ultra boasts an impressive processing speed of 875.81 tokens/second in F16 precision.

- With Q8 quantization, the M1 Ultra reaches 783.45 tokens/second processing speed for the Llama 2 7B model.

- In Q4 quantization, the M1 Ultra processes the Llama 2 7B model at 772.24 tokens/second.

NVIDIA 4080 16GB:

- For the Llama 3 8B model, the 4080 delivers a staggering 5064.99 tokens/second processing speed when using Q4 K/M quantization.

- In F16 precision, the 4080 achieves 6758.9 tokens/second for the Llama 3 8B model.

- Important note: Data for Llama 3 70B is unavailable in this comparison.

Key Takeaways:

- The 4080 clearly outperforms the M1 Ultra in terms of pure processing power, regardless of the LLM or quantization level.

- This difference is likely due to the 4080's dedicated GPU architecture, optimized for high-performance parallel computing tasks.

This advantage could become more pronounced for larger and more complex LLMs, making the 4080 a compelling choice for researchers and developers working on these models.

Think of it like this: Processing power is analogous to the engine of a car. A powerful engine enables faster acceleration and smoother performance, just like a high FLOPS number translates to quicker inference speeds for your AI models.

3. Memory Capacity - The Brains Behind the AI

Memory capacity is crucial for storing and accessing the massive amounts of data required by LLMs. The more memory a device has, the larger and more complex models it can handle effectively.

Apple M1 Ultra 800GB 48-Cores:

- The M1 Ultra boasts a whopping 800GB of unified memory. This unified memory architecture allows for seamless data access between the CPU and GPU, leading to faster and more efficient processing for your LLMs.

NVIDIA 4080 16GB:

- The 4080 comes equipped with 16GB of GDDR6 memory. While this is a significantly lower capacity than the M1 Ultra, it's still substantial for running many LLMs effectively.

Key Takeaways:

- The M1 Ultra's massive 800GB unified memory provides a significant advantage for running large LLMs and handling demanding AI workloads.

- This advantage is especially pronounced for models requiring significant data storage and fast access, such as those involved in natural language understanding or complex generative tasks.

- The 4080's 16GB of GDDR6 memory might be sufficient for smaller LLMs, but it could become a bottleneck for larger models or tasks requiring extensive data processing.

Think of memory capacity as the brain of your AI system. A larger brain allows you to store more information and process it more efficiently, leading to better performance and capabilities for your AI model.

4. Power Consumption - The Energy Appetite of AI

Power consumption is a crucial factor to consider, especially for long-running AI workloads. A device with higher power consumption will require more energy and potentially generate more heat, impacting both its efficiency and environmental footprint.

Apple M1 Ultra 800GB 48-Cores:

- The M1 Ultra, despite its impressive performance, is known for its relatively low power consumption compared to GPUs for the same workload. This efficiency is attributed to Apple's optimized design and its unified memory architecture.

NVIDIA 4080 16GB:

- The 4080, due to its high-performance GPU architecture, consumes significantly more power than the M1 Ultra. This increased power draw can lead to higher energy bills and may require more efficient cooling solutions.

Key Takeaways:

- The M1 Ultra offers a significant advantage in terms of power efficiency, making it a more environmentally friendly and cost-effective choice for long-term AI workloads.

- The 4080's high power consumption might be a concern for developers and researchers operating on tight budgets or with environmental considerations.

Imagine running your AI model for days on end. A more power-efficient device will be more sustainable and less expensive in the long run.

5. Quantization Support - The Art of Model Compression

Quantization is a technique for reducing the size and memory footprint of AI models without sacrificing significant accuracy. This technique involves converting the model's parameters from high-precision floating-point numbers to lower-precision integer representations, allowing for faster inference speeds and reduced memory requirements.

Apple M1 Ultra 800GB 48-Cores:

- The M1 Ultra supports various quantization levels, including Q8 and Q4, making it suitable for developers seeking to optimize their models for reduced memory consumption and faster inference speeds.

NVIDIA 4080 16GB:

- The 4080 also supports quantization techniques, offering significant advantages for performance optimization. It can handle various quantization schemes, enabling developers to fine-tune their models for optimal efficiency.

Key Takeaways:

- Both the M1 Ultra and 4080 offer quantization support, making them adaptable for different model sizes and computational constraints.

- This feature enables developers to strike a balance between model accuracy and performance, making it an essential tool for resource optimization.

Think of it as squeezing your AI model into a smaller suitcase. Quantization helps you travel light, enabling faster inference speeds and reduced memory requirements without losing significant functionality.

6. Software Compatibility - The Ecosystem of LLM Tools

Software compatibility is an essential factor to consider when choosing your AI hardware. A device's compatibility with popular LLM frameworks and tools can significantly influence its usability and ease of deployment.

Apple M1 Ultra 800GB 48-Cores:

- The M1 Ultra runs on Apple's macOS operating system, which offers excellent compatibility with popular LLM libraries and frameworks. It has a dedicated community focused on AI development, offering various resources and tools.

NVIDIA 4080 16GB:

- The 4080 is primarily designed for gaming and professional graphics applications, but it also offers strong compatibility with leading AI frameworks and libraries. It benefits from the widespread support of NVIDIA's CUDA platform, which provides a rich ecosystem of AI development tools.

Key Takeaways:

- Both the M1 Ultra and 4080 offer good compatibility with leading LLM frameworks and development tools.

- The choice between the two ultimately depends on the specific software and tools you prefer and the overall development environment you're building.

Imagine building a house for your AI model. A compatible software environment provides the foundations and tools needed for your model to thrive.

7. Price and Cost - The Budget Considerations for AI

Price is a significant factor for developers and researchers, especially when working with expensive hardware. Comparing the cost of these two devices provides insights into their overall value proposition.

Apple M1 Ultra 800GB 48-Cores:

- The M1 Ultra is a high-end chip, and its price reflects its capabilities. While the exact cost can vary depending on the specific configuration, it is generally considered a premium option.

NVIDIA 4080 16GB:

- The 4080 is also a high-performance device, but it is generally more affordable than the M1 Ultra. This makes it a more accessible option for developers and researchers working on a tighter budget.

Key Takeaways:

- The M1 Ultra represents a significant investment, but its powerful capabilities and efficiency might justify the cost for certain projects.

- The 4080 offers a more budget-friendly alternative, providing strong performance at a lower price point, making it a viable option for developers and researchers with limited budgets.

Think of it like this: The price tag is like the entrance fee to the AI playground. Choose your ride based on your budget and the adventure you want to embark on.

Performance Analysis: Putting the Numbers into Perspective

Let's take a deeper dive into the performance analysis of the M1 Ultra and 4080, using the data we have gathered. While these are just two data points, they provide valuable insights into the relative strengths and weaknesses of these devices.

- For smaller models like Llama 2 7B, the M1 Ultra seems to shine in terms of token generation speed, especially with F16 precision. However, the 4080 outperforms the M1 Ultra when using Q4 quantization, demonstrating a potential advantage for larger models with greater data compression.

- The 4080 clearly outperforms the M1 Ultra in processing power, regardless of the LLM or quantization level. This suggests that the 4080 might be better suited for very large LLMs, particularly those requiring high computational throughput.

- The M1 Ultra boasts a massive 800GB of unified memory, putting it in a class of its own in terms of memory capacity. This makes it ideal for handling large datasets and running demanding AI workloads, particularly for applications like natural language understanding and complex generative modeling.

- In terms of power consumption, the M1 Ultra emerges as a winner, demonstrating greater efficiency than the 4080. This efficiency translates to lower energy bills and reduced environmental impact, especially for long-running AI workloads.

Practical Implications for Developers and Researchers

Based on this analysis, here are some practical recommendations for choosing between the M1 Ultra and the 4080:

- If you're working with smaller LLMs (like 7B models) and prioritize fast token generation speeds, the M1 Ultra is a compelling choice, particularly for F16 precision. Its efficiency and unified memory architecture provide significant advantages for handling these models efficiently.

- If your work involves larger LLMs (like 70B models) and demands high processing power, then the 4080's dedicated GPU architecture and high FLOPS numbers make it a powerful contender. Its performance advantage could be more pronounced for these models, especially with Q4 quantization.

- For tasks requiring significant memory capacity, such as large language understanding or generative models, the M1 Ultra's 800GB unified memory is a game-changer. Its ability to handle massive datasets efficiently gives it a significant edge over the 4080.

- If power consumption and environmental impact are significant concerns for your research or development, the M1 Ultra's efficiency makes it a more responsible choice. Its lower energy footprint can translate to long-term cost savings and a reduced environmental footprint.

FAQ - Addressing Common Questions

1. What is an LLM, and why is it important?

LLMs, or large language models, are AI systems trained on massive amounts of text data. They excel at understanding and generating human-like text, enabling applications like chatbots, translation tools, and even content creation.

2. What is quantization, and how does it impact LLM performance?

Quantization is a technique for reducing the size of AI models by converting their parameters from high-precision floating-point numbers to lower-precision integer representations. This makes the models smaller and faster to run, while retaining a good level of accuracy.

3. Which device is better for training LLMs?

Both the M1 Ultra and 4080 can be used for training, but the 4080 is generally considered more suitable due to its high processing power. However, the M1 Ultra's unified memory can also be beneficial for training, especially for models with a large number of parameters.

4. How do I choose the right device for my AI project?

Consider the size of your LLM, the specific tasks you're performing, your budget, and your overall development environment. For smaller models and specific tasks like text generation, the M1 Ultra might be a good choice. For larger models and demanding workloads, the 4080 could be more suitable.

Keywords

Apple M1 Ultra, NVIDIA 4080, LLM, Large Language Models, AI, Artificial Intelligence, Performance Comparison, Token Speed Generation, Processing Power, Memory Capacity, Power Consumption, Quantization, Software Compatibility, Price, Cost, Developer, Researcher, AI Hardware, GPU, CPU, Unified Memory, GDDR6 Memory, Llama 2, Llama 3, Inference, Inference Speed, FLOPS, TPS, CUDA, macOS, Frameworks, Libraries, AI Development, Hardware Selection, AI Project, Practical Recommendations, FAQ.