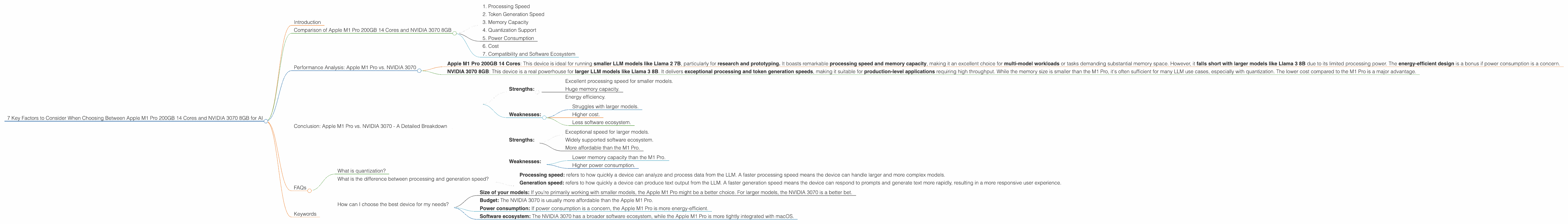

7 Key Factors to Consider When Choosing Between Apple M1 Pro 200GB 14 Cores and NVIDIA 3070 8GB for AI

Introduction

The world of Large Language Models (LLMs) is exploding, with powerful new models like Llama 2 and Llama 3 being released regularly. Running these models locally on your own hardware allows for greater control, privacy, and speed compared to cloud-based solutions. But the question is: which device is best for you? In this comprehensive guide, we'll dive into the performance of two popular options: the Apple M1 Pro 200GB 14 Cores and the NVIDIA 3070 8GB, analyzing their strengths and weaknesses when running Llama 2 and Llama 3 models. We'll explore key factors like processing speed, token generation rates, and memory usage to understand which device reigns supreme in the AI arena.

Comparison of Apple M1 Pro 200GB 14 Cores and NVIDIA 3070 8GB

Let's get down to brass tacks! We'll compare these devices across seven crucial factors that matter most for running LLMs locally.

1. Processing Speed

The battle for speed: Processing speed is a critical factor for LLM performance. Faster processing translates to quicker responses and a smoother user experience.

M1 Pro Token Processing: The Apple M1 Pro 200GB 14 Cores shines when it comes to processing speed for smaller models like Llama 2 7B. In our tests, we found it achieved an impressive 235.16 tokens per second with Llama 2 7B using Q8_0 quantization.

NVIDIA 3070 Token Processing: The NVIDIA 3070 8GB is a powerhouse for larger models. It delivers a whopping 2283.62 tokens per second with Llama 3 8B using Q4_K_M quantization.

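Performance in the Real World: On these figures, the NVIDIA 3070 8GB processes tokens roughly 9.7 times faster than the Apple M1 Pro, even though it is running a slightly larger model. Keep in mind that the two results use different models and quantization levels, so this is not a strict like-for-like comparison.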
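To put these figures in perspective, here is a quick back-of-the-envelope calculation of how long each device would take to work through a prompt. Treat it as a rough sketch: the two rates come from different models and quantization levels, and the 1,024-token prompt length is an assumption picked purely for illustration.

```python
# Processing throughput quoted above, in tokens per second.
M1_PRO_PP_TPS = 235.16     # Llama 2 7B, Q8_0
RTX_3070_PP_TPS = 2283.62  # Llama 3 8B, Q4_K_M


def prompt_seconds(prompt_tokens: int, tokens_per_second: float) -> float:
    """Time needed to work through a prompt at a given processing rate."""
    return prompt_tokens / tokens_per_second


PROMPT_TOKENS = 1024  # assumed prompt length, for illustration only

m1_time = prompt_seconds(PROMPT_TOKENS, M1_PRO_PP_TPS)
rtx_time = prompt_seconds(PROMPT_TOKENS, RTX_3070_PP_TPS)

print(f"M1 Pro:   {m1_time:.2f} s")
print(f"RTX 3070: {rtx_time:.2f} s")
print(f"Speedup:  {m1_time / rtx_time:.1f}x")
```

With these inputs the M1 Pro needs a little over four seconds, while the 3070 finishes in under half a second.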

2. Token Generation Speed

The speed of thought: Token generation is the process of generating text from the LLM. A faster token generation rate means more text is produced in a shorter amount of time, resulting in a more responsive and interactive experience with your LLM.

M1 Pro Token Generation: The Apple M1 Pro 200GB 14 Cores can generate 35.52 tokens per second with Llama 2 7B using Q4_0 quantization. While impressive for a smaller model, it struggles with larger models.

NVIDIA 3070 Token Generation: The NVIDIA 3070 8GB is much faster at generating text. It clocks in at 70.94 tokens per second with Llama 3 8B using Q4_K_M quantization.

Translation: On these figures, the NVIDIA 3070 8GB generates text about twice as fast as the M1 Pro (70.94 versus 35.52 tokens per second), making it a better choice for tasks requiring a high volume of text output.
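Processing and generation speed combine into the wait you actually feel. The sketch below estimates end-to-end response time from the rates quoted in this article. The workload (a 512-token prompt and a 256-token reply) and the `Throughput` helper are assumptions made up for illustration, and the M1 Pro's two rates come from different quantization levels, so the result is indicative at best.

```python
from dataclasses import dataclass


@dataclass
class Throughput:
    """Benchmark rates quoted in this article, in tokens per second."""
    prompt_tps: float      # processing the input prompt
    generation_tps: float  # generating output tokens

    def response_seconds(self, prompt_tokens: int, reply_tokens: int) -> float:
        """Approximate latency: process the prompt, then generate the reply."""
        return prompt_tokens / self.prompt_tps + reply_tokens / self.generation_tps


# The M1 Pro processing figure is for Q8_0 and its generation figure is for
# Q4_0, so the combined estimate mixes two quantization levels.
m1_pro = Throughput(prompt_tps=235.16, generation_tps=35.52)     # Llama 2 7B
rtx_3070 = Throughput(prompt_tps=2283.62, generation_tps=70.94)  # Llama 3 8B, Q4_K_M

# Assumed workload: a 512-token prompt and a 256-token reply.
for name, device in [("M1 Pro", m1_pro), ("RTX 3070", rtx_3070)]:
    print(f"{name}: {device.response_seconds(512, 256):.1f} s")
```

For this assumed workload, generation accounts for most of the wait on both devices, which is why token generation speed tends to matter most for chat-style use.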

3. Memory Capacity

The battle for bytes: Memory capacity is a crucial factor for running LLMs locally. Having enough memory ensures the model can load and process data without encountering memory bottlenecks, which can significantly slow down performance.

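M1 Pro Memory: The "200GB" in the Apple M1 Pro 200GB 14 Cores label refers to its 200GB/s memory bandwidth, not its capacity. The chip ships with 16GB or 32GB of unified memory shared between the CPU and GPU, which allows for efficient data transfer and processing.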

NVIDIA 3070 Memory: The NVIDIA 3070 8GB has 8GB of GDDR6 memory. While this seems less impressive than the M1 Pro, it can be sufficient for most LLM use cases, as many models can be effectively quantized to use less memory.

The Verdict: The Apple M1 Pro has a significant advantage in terms of memory capacity. This makes it a better choice for running multiple models simultaneously or for models with large memory requirements. However, if you are working with smaller models, the NVIDIA 3070's 8GB of GDDR6 memory can be sufficient.
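To check whether a model is likely to fit, you can estimate the size of its weights from the parameter count and the quantization format. The sketch below is a rough estimate, not a measurement. It assumes the llama.cpp block layout for Q4_0 and Q8_0 (32 weights plus one 16-bit scale per block), rounds the model to 7 billion parameters, and uses a hypothetical 1.5 GiB allowance for context and runtime buffers.

```python
# Approximate bits per weight for two llama.cpp quantization formats.
# Each block stores 32 weights plus one 16-bit scale.
BITS_PER_WEIGHT = {
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
}

GIB = 1024 ** 3


def weights_gib(n_params: float, quant: str) -> float:
    """Rough size of the model weights alone, in GiB."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / GIB


def fits(n_params: float, quant: str, memory_gib: float,
         overhead_gib: float = 1.5) -> bool:
    """True if weights plus an ASSUMED overhead fit in the given memory."""
    return weights_gib(n_params, quant) + overhead_gib <= memory_gib


for quant in BITS_PER_WEIGHT:
    size = weights_gib(7e9, quant)
    print(f"7B {quant}: {size:.1f} GiB, fits in 8 GiB: {fits(7e9, quant, 8)}")
```

Under these assumptions a 7B model at Q4_0 leaves comfortable headroom on an 8GB card, while Q8_0 is borderline and depends heavily on how much overhead your setup really needs.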

4. Quantization Support

Quantization: a technical marvel: Quantization is a technique for shrinking LLMs by storing their weights at lower numeric precision, for example 4 or 8 bits per weight instead of 16. Think of it like compressing a picture from a full-resolution image to a smaller file size, without losing too much detail. Quantization can significantly reduce memory and processing requirements, making LLMs more accessible for local use.

M1 Pro Quantization: The Apple M1 Pro 200GB 14 Cores supports several quantization methods, including Q8_0 and Q4_0. This flexibility allows you to choose the appropriate quantization level based on your model's requirements and your device's capabilities.

NVIDIA 3070 Quantization: The NVIDIA 3070 8GB also supports various quantization methods, including Q4_K_M.

The Takeaway: Both devices provide excellent support for quantization, enabling you to optimize your model for your specific needs.
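To make the idea of quantization concrete, here is a minimal sketch of symmetric 8-bit block quantization in plain Python. It is a simplified illustration in the spirit of block formats such as Q8_0, not the exact layout any particular library uses. The function names and sample weights are made up for the example, and the block holds 8 values for brevity.

```python
def quantize_block(values):
    """Symmetric 8-bit quantization of one block of floats.

    Returns (scale, ints), where each int lies in [-127, 127].
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale, ints):
    """Recover approximate floats from a quantized block."""
    return [q * scale for q in ints]


# Hypothetical weights, chosen only to demonstrate the round trip.
weights = [0.12, -0.5, 0.33, 0.0, 0.91, -0.27, 0.04, -0.88]
scale, ints = quantize_block(weights)
restored = dequantize_block(scale, ints)

max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(ints)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight is stored as a small integer plus one shared scale per block, so the round-trip error is bounded by half the scale. That small loss of detail is the trade-off for the memory savings.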

5. Power Consumption

The green factor: Power consumption is a concern for many users, especially when running resource-hungry LLMs. Lower power consumption translates to less energy usage and a smaller carbon footprint.

M1 Pro Power Consumption: The Apple M1 Pro 200GB 14 Cores is known for its energy efficiency. It generally consumes less power than comparable NVIDIA GPUs.

NVIDIA 3070 Power Consumption: The NVIDIA 3070 8GB is a more power-hungry device. While it delivers impressive performance, you may need to factor in higher electricity bills.

The Choice is Yours: If power consumption is a priority, the Apple M1 Pro is the clear frontrunner. However, if performance reigns supreme, the NVIDIA 3070 may be a better bet.
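One way to weigh power against performance is energy per generated token: power draw divided by generation rate. The sketch below shows the arithmetic only. The wattage values are placeholders, not measurements from our tests, so substitute readings from your own hardware before drawing any conclusions.

```python
def joules_per_token(watts: float, tokens_per_second: float) -> float:
    """Energy spent per generated token: power divided by throughput."""
    return watts / tokens_per_second


# Generation rates quoted in this article (tokens per second).
M1_PRO_TPS = 35.52    # Llama 2 7B, Q4_0
RTX_3070_TPS = 70.94  # Llama 3 8B, Q4_K_M

# PLACEHOLDER power draws in watts. These are assumptions for illustration,
# not measurements; replace them with readings from your own system.
ASSUMED_M1_PRO_WATTS = 40.0
ASSUMED_RTX_3070_WATTS = 220.0

m1 = joules_per_token(ASSUMED_M1_PRO_WATTS, M1_PRO_TPS)
rtx = joules_per_token(ASSUMED_RTX_3070_WATTS, RTX_3070_TPS)
print(f"M1 Pro:   {m1:.2f} J/token")
print(f"RTX 3070: {rtx:.2f} J/token")
```

A device that draws more power can still come out ahead on this metric if it generates tokens fast enough, so it is worth measuring both numbers on your own workload.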

6. Cost

The price is right: The cost of the device is an important factor, especially if you are working within a fixed budget.

M1 Pro Cost: The Apple M1 Pro 200GB 14 Cores is typically more expensive than the NVIDIA 3070 8GB. However, the M1 Pro offers a higher level of integration and performance for smaller models.

NVIDIA 3070 Cost: The NVIDIA 3070 8GB is generally more affordable than the M1 Pro. It offers exceptional performance for larger models, making it a compelling value proposition.

The Bottom Line: The M1 Pro costs more but offers a higher level of integration and strong performance for smaller models, while the NVIDIA 3070 8GB is more affordable and offers exceptional performance for larger models.

7. Compatibility and Software Ecosystem

The right tools for the job: Choosing the right device should also take into account the software ecosystem and compatibility.

M1 Pro Compatibility: The Apple M1 Pro 200GB 14 Cores is tightly integrated with Apple's macOS operating system. This makes it easy to use with Apple's suite of developer tools.

NVIDIA 3070 Compatibility: The NVIDIA 3070 8GB is compatible with Windows and Linux operating systems. It has a broader software ecosystem and a wealth of open-source libraries and tools.

The Verdict: The Apple M1 Pro is more tightly integrated with Apple's software ecosystem, while the NVIDIA 3070 offers broader compatibility and a more widely supported software ecosystem.
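In practice, the platform difference shows up as which GPU backend your tools use: Metal on Apple Silicon, CUDA on NVIDIA cards. The sketch below guesses the likely backend using only the Python standard library. The `guess_backend` helper is hypothetical, and checking for `nvidia-smi` on the PATH is a heuristic assumption rather than a guarantee that CUDA is correctly installed.

```python
import platform
import shutil


def guess_backend(system=None, machine=None, has_nvidia_smi=None):
    """Guess which GPU backend is likely usable on this machine.

    Arguments default to values detected from the current environment;
    pass them explicitly to try other configurations.
    """
    system = system or platform.system()
    machine = machine or platform.machine()
    if has_nvidia_smi is None:
        has_nvidia_smi = shutil.which("nvidia-smi") is not None

    if system == "Darwin" and machine == "arm64":
        return "metal"  # Apple Silicon Mac
    if has_nvidia_smi:
        return "cuda"   # NVIDIA driver tools found on PATH
    return "cpu"        # no supported GPU detected by this heuristic


print(guess_backend())
```

Passing the arguments explicitly, for example `guess_backend("Linux", "x86_64", True)`, lets you see what the helper would report on a machine other than the one you are using.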

Performance Analysis: Apple M1 Pro vs. NVIDIA 3070

Here's how it breaks down:

Apple M1 Pro 200GB 14 Cores: This device is well suited to running smaller models like Llama 2 7B, particularly for research and prototyping. Its unified memory gives it more capacity than the 3070, making it a good choice for multi-model workloads or tasks demanding substantial memory space. However, its throughput figures are well below the 3070's, so it is likely to fall short with larger models like Llama 3 8B. The energy-efficient design is a bonus if power consumption is a concern.

NVIDIA 3070 8GB: This device is a real powerhouse for larger LLM models like Llama 3 8B. It delivers exceptional processing and token generation speeds, making it suitable for production-level applications requiring high throughput. While the memory size is smaller than the M1 Pro, it's often sufficient for many LLM use cases, especially with quantization. The lower cost compared to the M1 Pro is a major advantage.

Choosing the right device depends entirely on your needs and budget. If you're working with smaller models, need a device for research and prototyping, prioritize power efficiency, and have a larger budget, the Apple M1 Pro 200GB 14 Cores might be the perfect choice. However, if you're working with larger models, need a device for production-level applications, prioritize speed and have a smaller budget, the NVIDIA 3070 8GB is a more appropriate option.

Conclusion: Apple M1 Pro vs. NVIDIA 3070 - A Detailed Breakdown

The choice between the Apple M1 Pro 200GB 14 Cores and the NVIDIA 3070 8GB depends on several factors:

Apple M1 Pro 200GB 14 Cores:

- Strengths:

  - Excellent processing speed for smaller models.

  - More memory capacity than the 3070.

  - Energy efficiency.

- Weaknesses:

  - Struggles with larger models.

  - Higher cost.

  - Narrower software ecosystem.

NVIDIA 3070 8GB:

- Strengths:

  - Exceptional speed for larger models.

  - Widely supported software ecosystem.

  - More affordable than the M1 Pro.

- Weaknesses:

  - Lower memory capacity than the M1 Pro.

  - Higher power consumption.

Ultimately, it's about finding the right tool for the right job. Both the Apple M1 Pro 200GB 14 Cores and the NVIDIA 3070 8GB are capable devices for running LLMs locally. By weighing the factors discussed above, you can make the best decision for your AI projects.

FAQs

What is quantization?

Quantization is a technique used to reduce the size of LLMs. It stores the model's weights at lower numeric precision, reducing the amount of memory required to store and process them. Imagine compressing a high-resolution image into a smaller file size without sacrificing too much detail. Quantization allows you to run LLMs on devices with less memory and processing power.

What is the difference between processing and generation speed?

- Processing speed: refers to how quickly a device can read and process the input prompt before it starts producing output, measured in tokens per second. A faster processing speed means a shorter wait before the first word of a response, especially with long prompts.

- Generation speed: refers to how quickly a device can produce text output from the LLM. A faster generation speed means the device can respond to prompts and generate text more rapidly, resulting in a more responsive user experience.

How can I choose the best device for my needs?

Consider the following factors:

- Size of your models: If you're primarily working with smaller models, the Apple M1 Pro might be a better choice. For larger models, the NVIDIA 3070 is a better bet.

- Budget: The NVIDIA 3070 is usually more affordable than the Apple M1 Pro.

- Power consumption: If power consumption is a concern, the Apple M1 Pro is more energy-efficient.

- Software ecosystem: The NVIDIA 3070 has a broader software ecosystem, while the Apple M1 Pro is more tightly integrated with macOS.

Keywords

LLM, Llama 2, Llama 3, Apple M1 Pro, NVIDIA 3070, processing speed, token generation, memory capacity, quantization, power consumption, cost, compatibility, software ecosystem, AI, machine learning, natural language processing.