7 Key Factors to Consider When Choosing Between Apple M1 (68 GB/s, 7 GPU Cores) and NVIDIA 3070 8GB for AI

Introduction

Large language models (LLMs) are changing the way we interact with computers, opening new possibilities in natural language processing, code generation, and creative writing. But running them locally takes significant compute and memory. This article walks through the crucial factors to consider when choosing between an Apple M1 (68 GB/s memory bandwidth, 7 GPU cores) and an NVIDIA 3070 8GB for running LLMs, focusing on Llama 2 and Llama 3 models.

We'll analyze their performance, strengths, and weaknesses, helping you decide which device best suits your specific needs.

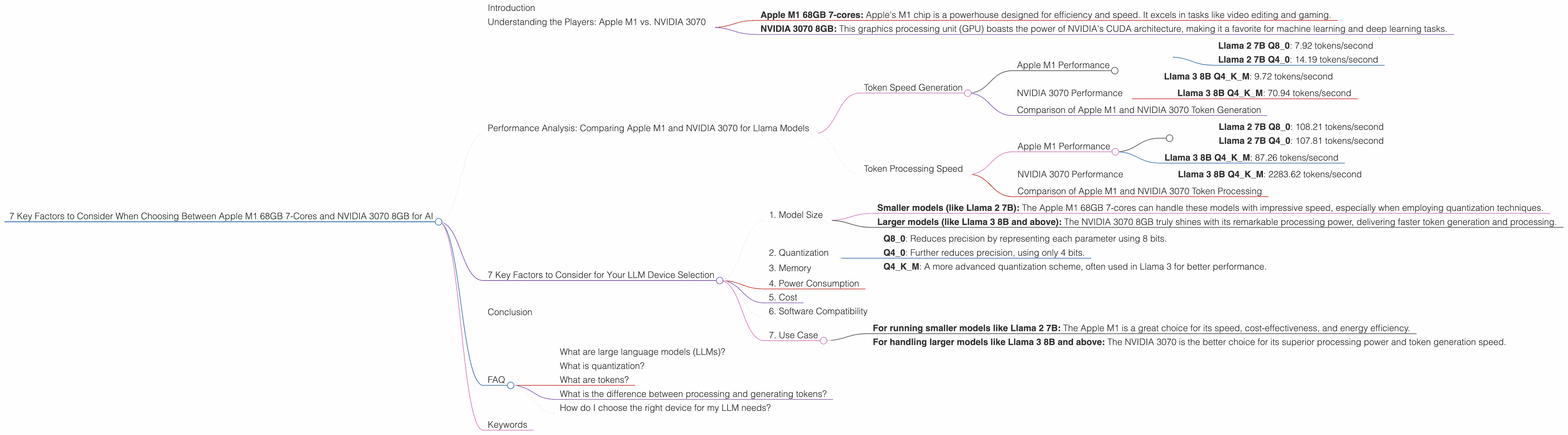

Understanding the Players: Apple M1 vs. NVIDIA 3070

Before diving into the analysis, let's briefly introduce the two contenders:

- Apple M1 (68 GB/s, 7 GPU cores): Apple's M1 chip is designed for efficiency and speed, and it excels in tasks like video editing. Its CPU and 7-core GPU share one pool of unified memory with roughly 68 GB/s of bandwidth.

- NVIDIA 3070 8GB: This graphics processing unit (GPU) boasts the power of NVIDIA's CUDA architecture, making it a favorite for machine learning and deep learning tasks.

Performance Analysis: Comparing Apple M1 and NVIDIA 3070 for Llama Models

Picking the right device depends on your specific needs and the LLM you intend to run. Let's compare how the Apple M1 (68 GB/s, 7 GPU cores) and the NVIDIA 3070 8GB perform at processing and generating tokens for different Llama models.

Token Speed Generation

Apple M1 Performance

The Apple M1 (68 GB/s, 7 GPU cores) delivers usable token generation speeds for Llama 2 7B with quantized models, and the 4-bit variant is clearly the faster of the two:

- Llama 2 7B Q8_0: 7.92 tokens/second

- Llama 2 7B Q4_0: 14.19 tokens/second

For the larger Llama 3 8B, the performance remains respectable:

- Llama 3 8B Q4_K_M: 9.72 tokens/second

However, data for larger models like Llama 2 70B and Llama 3 70B isn't available for the Apple M1.
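One way to sanity-check the generation figures above is a rough memory-bandwidth bound: generating a token requires reading roughly all of the weights once, so tokens/second cannot exceed bandwidth divided by model size. The sketch below assumes about 68 GB/s of bandwidth for this M1, about 6.74 billion parameters for Llama 2 7B, and approximate bits-per-weight values of 8.5 for Q8_0 and 4.5 for Q4_0. These inputs are assumptions for illustration, not measurements from this article.

```python
# Rough upper bound on generation speed when decoding is memory-bandwidth bound.
# All constants in this first group are assumptions, not benchmark data.
M1_BANDWIDTH_GB_S = 68.0        # assumed memory bandwidth of this M1
LLAMA2_7B_PARAMS_B = 6.74       # assumed parameter count, in billions
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}  # approximate, incl. block scales

# Measured generation speeds quoted in this article (tokens/second).
MEASURED = {"Q8_0": 7.92, "Q4_0": 14.19}


def bandwidth_bound(bandwidth_gb_s, params_billion, bits_per_weight):
    """Tokens/second if every token required one full read of the weights."""
    model_gb = params_billion * bits_per_weight / 8  # billions of bytes
    return bandwidth_gb_s / model_gb


for quant, bits in BITS_PER_WEIGHT.items():
    bound = bandwidth_bound(M1_BANDWIDTH_GB_S, LLAMA2_7B_PARAMS_B, bits)
    print(f"{quant}: bound ~{bound:.1f} tok/s, measured {MEASURED[quant]} tok/s")
```

Under these assumptions the measured speeds sit below the bound for both formats, which is consistent with generation on the M1 being limited mainly by memory bandwidth.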

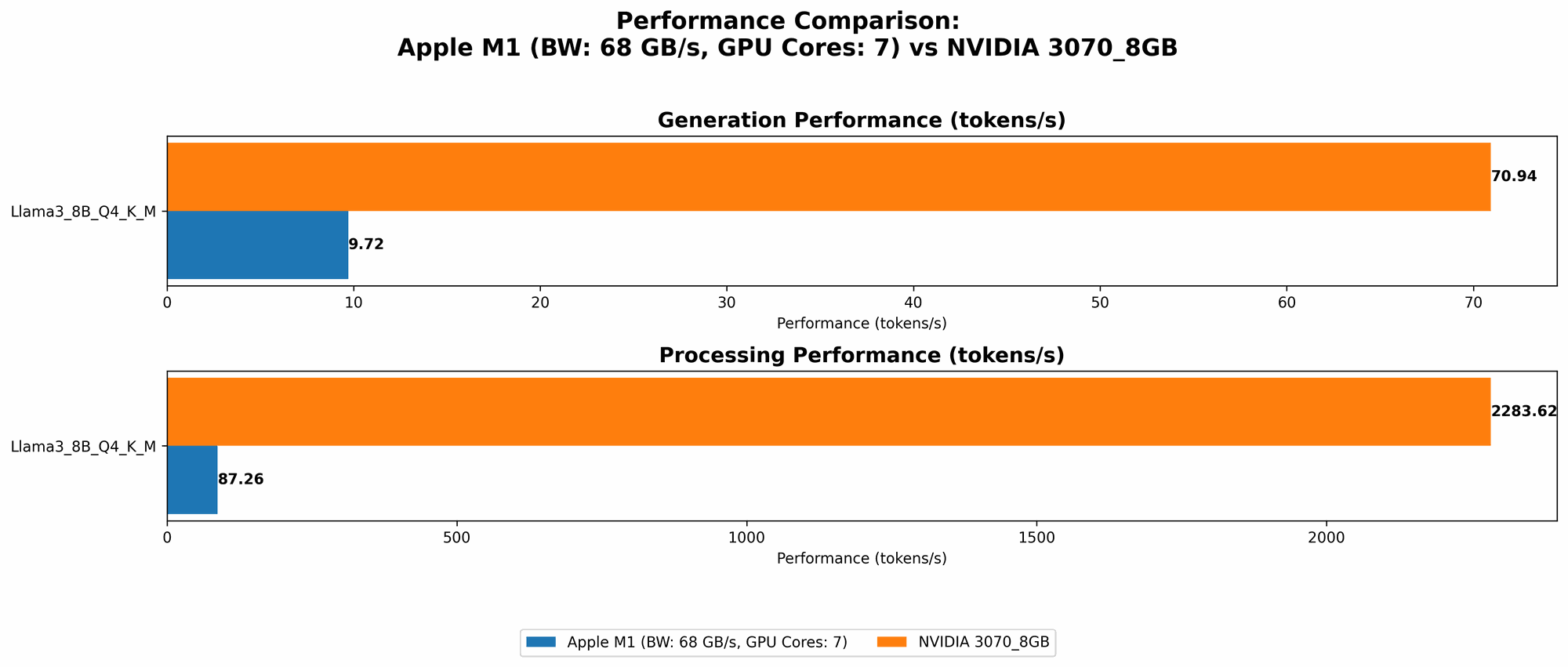

NVIDIA 3070 Performance

The NVIDIA 3070 demonstrates superior token generation for Llama 3 8B:

- Llama 3 8B Q4_K_M: 70.94 tokens/second

Again, data for larger models like Llama 2 70B and Llama 3 70B isn't available for the NVIDIA 3070.

Comparison of Apple M1 and NVIDIA 3070 Token Generation

On the one model measured on both devices, Llama 3 8B Q4_K_M, the NVIDIA 3070 generates about 7.3 times as many tokens per second as the Apple M1 (70.94 vs. 9.72). No Llama 2 7B figures are available for the 3070, so the M1's Llama 2 results can't be compared directly. They do show that heavier quantization helps the M1: Q4_0 generates roughly 1.8 times faster than Q8_0.

Token Processing Speed

Apple M1 Performance

The Apple M1 excels in processing tokens for Llama 2 7B, achieving excellent speeds with quantized models:

- Llama 2 7B Q8_0: 108.21 tokens/second

- Llama 2 7B Q4_0: 107.81 tokens/second

For Llama 3 8B, the performance remains strong:

- Llama 3 8B Q4_K_M: 87.26 tokens/second

NVIDIA 3070 Performance

The NVIDIA 3070 showcases its power in token processing for Llama 3 8B, demonstrating exceptional speeds:

- Llama 3 8B Q4_K_M: 2283.62 tokens/second

Comparison of Apple M1 and NVIDIA 3070 Token Processing

The NVIDIA 3070 leaves the Apple M1 in the dust when processing tokens for Llama 3 8B: 2283.62 vs. 87.26 tokens/second, roughly a 26-fold difference. The M1's Llama 2 7B results have no 3070 counterpart in this data, so no direct comparison is possible for that model.
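To make the gap concrete, the ratios can be computed directly from the Llama 3 8B figures quoted in this article. This is a minimal sketch; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Benchmark figures quoted in this article (tokens/second), Llama 3 8B, 4-bit.
BENCHMARKS = {
    "Apple M1": {"processing": 87.26, "generation": 9.72},
    "NVIDIA 3070": {"processing": 2283.62, "generation": 70.94},
}


def speedup(metric, fast="NVIDIA 3070", slow="Apple M1"):
    """How many times faster `fast` is than `slow` on the given metric."""
    return BENCHMARKS[fast][metric] / BENCHMARKS[slow][metric]


for metric in ("processing", "generation"):
    print(f"{metric}: 3070 is {speedup(metric):.1f}x faster than M1")
```

The processing gap is much wider than the generation gap, so the 3070's advantage is largest for workloads with long prompts.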

7 Key Factors to Consider for Your LLM Device Selection

1. Model Size

The size of your LLM is crucial.

- Smaller models (like Llama 2 7B): The Apple M1 runs these at usable speeds, especially with 4-bit quantization.

- Larger models (like Llama 3 8B): The NVIDIA 3070 8GB is far faster at both token generation and processing, as long as the quantized model fits in its 8 GB of VRAM.

Neither device has benchmark data here for 70B-class models, whose weights far exceed the memory of either one.

2. Quantization

Quantization is a technique that reduces the number of bits used to represent model parameters.

- Q8_0: Reduces precision by representing each parameter using 8 bits.

- Q4_0: Further reduces precision, using only 4 bits.

- Q4_K_M: A 4-bit "k-quant" scheme from llama.cpp that mixes precision levels across tensors. It is the format used for the Llama 3 8B results in this article.

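Quantization shrinks the model, so less data has to be read from memory for every generated token. On the Apple M1, going from Q8_0 to Q4_0 on Llama 2 7B raises generation speed from 7.92 to 14.19 tokens/second, while prompt processing stays essentially unchanged (108.21 vs. 107.81). The trade-off is some loss of model accuracy. Think of it as compressing a video: you save space but might lose some visual quality.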
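The core idea can be sketched in a few lines of plain Python: store one floating-point scale per block of weights plus a small integer per weight. This is a simplified symmetric scheme for illustration only, not the exact on-disk layout of Q8_0 or Q4_0; the function names and sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization of one block: one float scale + small integers."""
    qmax = 2 ** (bits - 1) - 1            # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest step and clamp to the integer range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored scale and integers."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.05, 0.29]  # made-up sample
for bits in (8, 4):
    print(f"{bits}-bit: max reconstruction error = {max_error(weights, bits):.4f}")
```

Fewer bits mean coarser steps between representable values, which is where the accuracy loss comes from.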

3. Memory

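Memory is crucial because the model weights must fit in it. The base Apple M1 ships with 8 GB or 16 GB of unified memory shared by the CPU and GPU; the "68" in its label is memory bandwidth in GB/s, not capacity. The 16 GB configuration comfortably holds quantized 7B and 8B models. The NVIDIA 3070's 8 GB of VRAM also fits 4-bit versions of those models, but it is too small for an 8B model at F16 precision, which needs about 16 GB for the weights alone.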
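A back-of-the-envelope check of what fits can be written as parameters times bits per weight. The sketch below is only an estimate: the parameter counts, the bits-per-weight values, and the 1 GB headroom are assumptions chosen for illustration, and real inference also needs memory for the KV cache and activations.

```python
# Approximate weight sizes. All constants are assumptions for illustration.
PARAMS_BILLION = {"Llama 2 7B": 6.74, "Llama 3 8B": 8.03}
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params_billion, bits_per_weight):
    """Approximate size of the weights in GB (billions of bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, memory_gb, headroom_gb=1.0):
    """True if the weights fit while leaving a hypothetical safety margin."""
    return weights_gb(params_billion, bits_per_weight) + headroom_gb <= memory_gb


VRAM_GB = 8.0  # NVIDIA 3070
for model, params in PARAMS_BILLION.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bits)
        verdict = "fits" if fits(params, bits, VRAM_GB) else "does not fit"
        print(f"{model} {quant}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Changing `VRAM_GB` to 16.0 gives the same estimate for a 16 GB unified-memory M1, keeping in mind that the operating system shares that memory.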

4. Power Consumption

The Apple M1 is known for its energy efficiency. If you prioritize power consumption, the Apple M1 is a great choice. The NVIDIA 3070, however, is known for its higher power consumption.

5. Cost

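An entry-level M1 Mac can be a budget-friendly option, but the comparison is not like for like: the Mac is a complete computer, while the NVIDIA 3070 is a graphics card that still needs a desktop PC around it. Compare total system prices rather than the chip alone.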

6. Software Compatibility

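The two devices live in different software ecosystems. The NVIDIA 3070 runs CUDA, which most machine learning frameworks target first. The Apple M1 relies on Apple's Metal API, which tools such as llama.cpp support. Check that your LLM framework and tools support the backend of the device you choose.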

7. Use Case

Consider your specific use case.

- For running smaller models like Llama 2 7B: The Apple M1 is a great choice for its speed, cost-effectiveness, and energy efficiency.

- For Llama 3 8B and other models that fit in 8 GB of VRAM once quantized: The NVIDIA 3070 is the better choice for its superior processing power and token generation speed.

Conclusion

Choosing between the Apple M1 (68 GB/s, 7 GPU cores) and the NVIDIA 3070 8GB for running LLMs depends on your specific needs, the model size you plan to work with, and your priorities. If cost, energy efficiency, and smaller models are your priorities, the Apple M1 is a great choice. If you prioritize raw speed and your quantized model fits in 8 GB of VRAM, the NVIDIA 3070 is your champion.

FAQ

What are large language models (LLMs)?

LLMs are AI models trained on massive datasets of text and code, allowing them to understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization?

Quantization is a technique used to reduce the number of bits used to represent model parameters. It can significantly boost performance but may introduce limitations in model accuracy.

What are tokens?

Tokens are the basic units of text in an LLM. A token can be a whole word, a piece of a word, or a punctuation mark, so one word often maps to more than one token. Tokenization is the process of breaking text into tokens.

What is the difference between processing and generating tokens?

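Processing tokens (also called prompt evaluation or prefill) means reading the input prompt, which the model can do for many tokens in parallel. Generating tokens means producing new text one token at a time, which is why generation speeds are much lower than processing speeds on both devices.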
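The two speeds combine into a simple end-to-end estimate: prompt tokens divided by processing speed, plus output tokens divided by generation speed. The sketch below uses the Llama 3 8B figures from this article; the 512-token prompt and 128-token reply are hypothetical workload sizes, and real runs add model loading and other overhead.

```python
# Llama 3 8B speeds quoted in this article (tokens/second).
DEVICES = {
    "Apple M1": {"processing": 87.26, "generation": 9.72},
    "NVIDIA 3070": {"processing": 2283.62, "generation": 70.94},
}


def estimate_seconds(processing_tps, generation_tps, prompt_tokens, output_tokens):
    """Time to read the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


PROMPT_TOKENS = 512   # hypothetical workload
OUTPUT_TOKENS = 128   # hypothetical workload

for name, speeds in DEVICES.items():
    total = estimate_seconds(
        speeds["processing"], speeds["generation"], PROMPT_TOKENS, OUTPUT_TOKENS
    )
    print(f"{name}: ~{total:.1f} s for {PROMPT_TOKENS} in / {OUTPUT_TOKENS} out")
```

Adjusting the two workload constants shows how the balance shifts between prompt-heavy tasks, such as summarizing long documents, and generation-heavy ones.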

How do I choose the right device for my LLM needs?

Consider the factors discussed in the article, including model size, memory requirements, and your budget. It's a good idea to experiment with different devices and compare their performance for your specific use case.

Keywords

Apple M1, NVIDIA 3070, LLM, Llama 2, Llama 3, token speed, processing speed, quantization, memory, power consumption, cost, software compatibility, use case, AI, deep learning, natural language processing.