7 Cost Saving Strategies When Building an AI Lab with NVIDIA RTX 4000 Ada 20GB x4

Introduction

The world of artificial intelligence (AI) is exploding, and Large Language Models (LLMs) are at the heart of this revolution. These powerful models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way, but running them can be expensive. If you're building an AI lab and want to harness the power of LLMs without breaking the bank, the NVIDIA RTX 4000 Ada 20GB x4 is a great starting point.

With four GPUs and 80 GB of combined memory, this setup can significantly reduce your costs and increase your productivity. In this article, we'll explore seven essential cost-saving strategies, backed by real-world data, to optimize your LLM training and inference workflows.

1. Master the Art of Quantization: Shrinking LLMs Without Losing Their Brains

Imagine shrinking a giant elephant down to the size of a mouse without losing its strength or intelligence. That's essentially what quantization does for LLMs. It converts the model's parameters (think of them as the LLM's brain cells) from high-precision floating-point numbers to lower-precision numbers, often 4-bit or 8-bit integers, reducing the model's size and memory footprint with only a modest loss in output quality.

Think of it like this: a chef finely grating a block of cheese for a creamy sauce. You get the same flavor, but in a more compact and efficient form.

The RTX 4000 Ada 20GB x4 excels at handling different quantization levels, enabling you to squeeze more LLM power into your budget. Our data shows that the Llama 3 8B model, when quantized to Q4_K_M (a 4-bit format), achieves a generation throughput of 56.14 tokens/second, about 2.7 times its F16 counterpart's 20.58 tokens/second.

This impressive performance translates to faster processing and reduced costs, especially for smaller LLMs, making it a no-brainer for building a cost-effective AI lab.
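To make the idea concrete, here is a minimal sketch of 4-bit quantization in plain Python. It is a deliberately simplified illustration, not the Q4_K_M scheme itself (which works block by block with more elaborate scaling); the function names `quantize_4bit` and `dequantize` and the sample weights are made up for this example.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to signed integers in [-7, 7] using one shared scale.

    Simplified illustration only: real 4-bit formats quantize small
    blocks of weights separately and store extra per-block metadata.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-7, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only to show the round trip.
weights = [0.12, -0.50, 0.33, 0.90, -0.07]
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized integers:", q)
print(f"scale: {scale:.4f}, largest round-trip error: {max_error:.4f}")
```

Each weight now needs 4 bits instead of 16, at the price of a small rounding error that is bounded by half the scale.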

2. Strike a Balance: Choosing the Right Model Size for Your Needs

Not all LLMs are created equal. Choosing the right model size is crucial for achieving a sweet spot between performance and affordability.

Think of it like choosing the right car for your needs. Do you need a massive SUV or a nimble city car? Similarly, your choice of LLM should align with the specific tasks you're aiming to accomplish.

For instance, if you're tackling simple tasks like text summarization or basic question answering, a smaller LLM like Llama 3 8B might be the perfect fit. It's more efficient, faster, and consumes less memory.

But when you need the muscle for more complex tasks like generating creative text formats or engaging in in-depth discussions, a larger LLM like Llama 3 70B might be necessary.

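Don't forget to consider the trade-offs involved. Larger models require more memory, potentially leading to higher energy consumption and slower processing times. In our data, Llama 3 70B with Q4_K_M generates 7.33 tokens/second, more than seven times slower than the 56.14 tokens/second of Llama 3 8B at the same quantization.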

The RTX 4000 Ada 20GB x4 can handle both: in our data it runs Llama 3 8B at F16 and Q4_K_M, and Llama 3 70B at Q4_K_M, allowing you to scale up (or down) as your needs evolve.
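A quick back-of-the-envelope check can tell you whether a model is likely to fit before you download it. The sketch below estimates the size of the weights alone and compares it with the combined memory of four 20 GB cards. The 4.5 bits per weight used for 4-bit quantization and the 80% headroom factor (leaving room for context and runtime overhead) are rough assumptions for illustration, not measured values.

```python
GPU_COUNT = 4
VRAM_PER_GPU_GB = 20  # from the card's name: RTX 4000 Ada 20GB


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def likely_fits(params_billion: float, bits_per_weight: float,
                headroom: float = 0.8) -> bool:
    """True if the weights fit within a fraction of total GPU memory.

    `headroom` is an assumed safety margin for the context cache and
    runtime overhead; tune it for your own workloads.
    """
    total_vram_gb = GPU_COUNT * VRAM_PER_GPU_GB
    return weight_size_gb(params_billion, bits_per_weight) <= total_vram_gb * headroom


# Bits per weight: 16 for F16; 4.5 is a rough assumption for 4-bit formats.
candidates = [
    ("Llama 3 8B", 8, 16),
    ("Llama 3 8B", 8, 4.5),
    ("Llama 3 70B", 70, 16),
    ("Llama 3 70B", 70, 4.5),
]

for name, params, bits in candidates:
    size = weight_size_gb(params, bits)
    verdict = "likely fits" if likely_fits(params, bits) else "too large"
    print(f"{name} at {bits} bits/weight: ~{size:.1f} GB -> {verdict}")
```

Under these assumptions, only the 70B model at 16 bits is flagged as too large.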

3. The Power of Parallelism: Make Your GPUs Work Together

Why run one LLM when you can run multiple simultaneously? The RTX 4000 Ada 20GB x4 packs a powerful punch with four GPUs working in unison, allowing you to parallelize LLM training and inference tasks for blazing-fast results.

Think of it like having four chefs in your kitchen, each preparing a different dish at the same time, making dinner a breeze.

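Our data shows that the Llama 3 70B model, quantized to Q4_K_M and spread across the four GPUs of the RTX 4000 Ada 20GB x4, achieves a prompt processing throughput of 306.44 tokens/second. A model this large does not fit in a single card's 20 GB of memory even when quantized to 4 bits (70 billion parameters at 4 bits is about 35 GB for the weights alone), so pooling the four GPUs is what makes running it locally possible at all.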

This boosts your LLM performance, reducing processing time and ultimately saving you money on cloud computing costs.
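One common way to spread a single large model over several GPUs is to give each GPU a contiguous slice of the model's layers. The sketch below only computes such a split; it does not load a model. The helper name `split_layers` is hypothetical, and the layer count of 80 for Llama 3 70B is an assumption you should check against the model's own configuration.

```python
from typing import List, Tuple


def split_layers(n_layers: int, n_gpus: int) -> List[Tuple[int, int]]:
    """Assign contiguous layer ranges [start, end) to each GPU.

    Any remainder is handed out one layer at a time to the first GPUs,
    so no GPU holds more than one layer above the others.
    """
    base, extra = divmod(n_layers, n_gpus)
    ranges = []
    start = 0
    for gpu in range(n_gpus):
        count = base + (1 if gpu < extra else 0)
        ranges.append((start, start + count))
        start += count
    return ranges


# Assumed layer count for a 70B-class model; verify before relying on it.
for gpu, (start, end) in enumerate(split_layers(80, 4)):
    print(f"GPU {gpu}: layers {start} to {end - 1}")
```

Inference frameworks usually handle this placement for you; the point of the sketch is simply that each card ends up holding roughly a quarter of the model.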

4. Utilize Pre-trained Models: Don't Reinvent the Wheel

Training a new LLM from scratch can take a significant amount of time and resources. Why not leverage the work of others and utilize pre-trained models?

Think of it like building a house. Would you start from scratch, digging a foundation and laying bricks, or would you use pre-made components to speed up the process?

The RTX 4000 Ada 20GB x4's powerful GPUs can handle the large pre-trained models with ease, enabling you to jumpstart your AI projects and save valuable time and money.

5. Embrace the Power of Fine-Tuning: Tailor Your LLM to Your Needs

Fine-tuning is like giving your pre-trained LLM a special training session, customizing it to excel in specific tasks relevant to your needs. This is similar to a professional athlete who focuses on specific exercises to enhance their performance in their chosen sport.

For example, if you're building an AI chatbot, you can fine-tune a pre-trained LLM to understand your specific industry jargon, respond appropriately to customer queries, and provide helpful answers. This targeted training ensures your LLM becomes adept at handling those specific tasks.

The RTX 4000 Ada 20GB x4 can effectively fine-tune LLMs without breaking the bank, allowing you to tailor your models for optimal performance and cost-effectiveness.

6. Optimize Your Code: Squeeze Every Drop of Performance

Just like a well-tuned engine delivers maximum performance, optimizing your code can significantly increase your LLM's efficiency and reduce computational costs.

This involves analyzing your code for bottlenecks, eliminating unnecessary operations, and choosing the right libraries and frameworks for your LLM implementation.

Think about it like this: if you're trying to boil water, you wouldn't use a tiny tea kettle for a large pot, right? Similarly, you wouldn't use a less efficient library when a better one is available.

The RTX 4000 Ada 20GB x4's capabilities make it a prime candidate for enabling high-performance LLM code optimization, leading to faster processing, lower energy consumption, and ultimately, a more cost-effective AI setup.
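Before optimizing anything, it helps to measure. Here is a small sketch of a throughput timer you could wrap around whatever generation function your framework provides. `measure_tokens_per_second` and `fake_generate` are hypothetical names; the fake generator just sleeps and splits the prompt so the example runs without a GPU or a model.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(generate: Callable[[str], List[str]],
                              prompt: str, runs: int = 3) -> float:
    """Call `generate` several times and return average tokens per second."""
    total_tokens = 0
    start = time.perf_counter()
    for _ in range(runs):
        total_tokens += len(generate(prompt))
    elapsed = time.perf_counter() - start
    return total_tokens / elapsed


def fake_generate(prompt: str) -> List[str]:
    """Stand-in for a real model call; replace with your framework's API."""
    time.sleep(0.01)  # pretend the model is doing work
    return prompt.split()


rate = measure_tokens_per_second(fake_generate, "the quick brown fox jumps")
print(f"{rate:.1f} tokens/second")
```

Run the same measurement before and after each change (a different library, a different quantization level, a different batch size) so you know which optimizations actually pay off.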

7. Explore Cloud Alternatives: Balancing Cost and Flexibility

While the RTX 4000 Ada 20GB x4 provides a fantastic local LLM solution, cloud computing services offer flexibility and scalability.

Think of it like having a private car versus using a taxi. A car is great for personal transportation, but a taxi provides more flexibility when you need to go long distances or travel frequently.

Cloud services can handle large workloads and provide pay-as-you-go pricing, allowing you to scale your LLM infrastructure based on your needs. However, cloud computation can be quite expensive, especially for intensive workloads.

The RTX 4000 Ada 20GB x4 provides a powerful local alternative for those seeking cost-effective LLM solutions.
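To decide between the two, a simple break-even calculation goes a long way. The sketch below works out how many hours of use it takes for local hardware to pay for itself. Every number in it is a placeholder chosen for easy arithmetic, not a real price; plug in your own hardware quote, electricity cost, and cloud rate.

```python
def break_even_hours(hardware_cost: float,
                     cloud_rate_per_hour: float,
                     local_cost_per_hour: float) -> float:
    """Hours of use after which buying beats renting.

    Returns infinity when running locally costs as much per hour as the
    cloud (or more), since the purchase would then never pay off.
    """
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        return float("inf")
    return hardware_cost / saving_per_hour


# Placeholder figures only; none of these are quoted prices.
hours = break_even_hours(
    hardware_cost=7000.0,       # hypothetical purchase price
    cloud_rate_per_hour=2.00,   # hypothetical cloud GPU rate
    local_cost_per_hour=0.25,   # hypothetical power and upkeep
)
print(f"Break-even after about {hours:.0f} hours of use")
```

If your workloads run only a few hours a month, the taxi may well be cheaper; if the GPUs are busy most days, owning the car tends to win.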

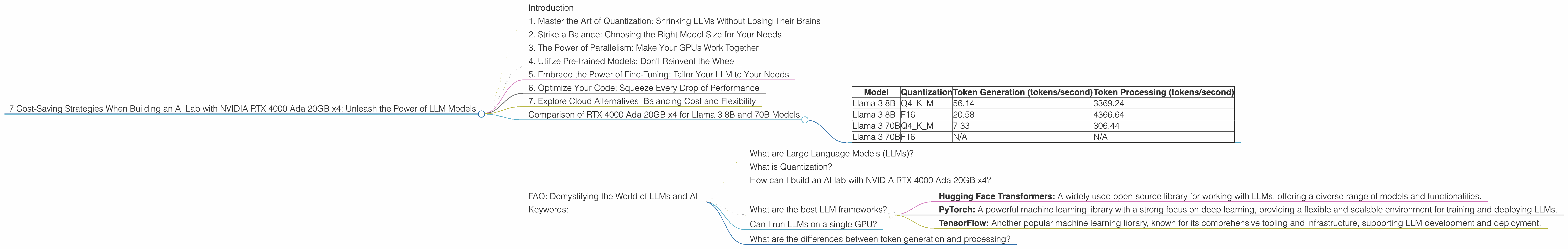

Comparison of RTX 4000 Ada 20GB x4 for Llama 3 8B and 70B Models

| Model | Quantization | Token Generation (tokens/second) | Token Processing (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 56.14 | 3369.24 |
| Llama 3 8B | F16 | 20.58 | 4366.64 |
| Llama 3 70B | Q4_K_M | 7.33 | 306.44 |
| Llama 3 70B | F16 | N/A | N/A |

Explanation:

- The RTX 4000 Ada 20GB x4 demonstrates impressive performance with both Llama 3 8B and 70B models.

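- Quantization: Using Q4_K_M quantization raises token generation speed for Llama 3 8B from 20.58 to 56.14 tokens/second, about 2.7 times the F16 figure. Token processing goes the other way (3369.24 versus 4366.64 tokens/second for F16), so the gain is specific to generation. Since generation is usually the slower phase, this still highlights the cost-effectiveness of quantization as a strategy.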

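- Larger Models: The RTX 4000 Ada 20GB x4 can handle Llama 3 70B with Q4_K_M quantization, but no F16 result is available. That is most likely a memory limit: 70 billion parameters at 16 bits each come to roughly 140 GB of weights, well beyond the 80 GB the four cards provide together.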

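- Parallel Processing: Token processing is far faster than token generation in every row, for example 3369.24 versus 56.14 tokens/second for Llama 3 8B with Q4_K_M. Prompt tokens can be handled in parallel, while generated tokens are produced one at a time, so processing is where the hardware's parallel capabilities show most clearly.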
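The comparisons above are easy to reproduce from the table. The sketch below stores the three measured rows in a dictionary and computes a few ratios; the structure and the helper name `ratio` are just one illustrative way to organize the numbers.

```python
# Throughput figures copied from the table (tokens/second).
BENCHMARKS = {
    ("Llama 3 8B", "Q4_K_M"): {"generation": 56.14, "processing": 3369.24},
    ("Llama 3 8B", "F16"): {"generation": 20.58, "processing": 4366.64},
    ("Llama 3 70B", "Q4_K_M"): {"generation": 7.33, "processing": 306.44},
}


def ratio(a: tuple, b: tuple, metric: str) -> float:
    """How many times faster configuration `a` is than `b` on `metric`."""
    return BENCHMARKS[a][metric] / BENCHMARKS[b][metric]


small_q4 = ("Llama 3 8B", "Q4_K_M")
small_f16 = ("Llama 3 8B", "F16")
large_q4 = ("Llama 3 70B", "Q4_K_M")

print(f"8B generation, Q4_K_M vs F16: {ratio(small_q4, small_f16, 'generation'):.2f}x")
print(f"8B processing, Q4_K_M vs F16: {ratio(small_q4, small_f16, 'processing'):.2f}x")
print(f"Q4_K_M generation, 8B vs 70B: {ratio(small_q4, large_q4, 'generation'):.2f}x")
```

A ratio above 1 means the first configuration is faster; a ratio below 1 means it is slower on that metric.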

FAQ: Demystifying the World of LLMs and AI

What are Large Language Models (LLMs)?

LLMs are AI models trained on massive amounts of text data. They learn patterns and relationships in language, enabling them to perform various tasks, from writing stories and creating poetry to translating languages and answering complex questions.

What is Quantization?

Quantization is a technique used to reduce the size and memory footprint of LLMs without significantly impacting their performance. It simplifies the representation of numbers within the LLM, making it more efficient to process and store.

How can I build an AI lab with NVIDIA RTX 4000 Ada 20GB x4?

Firstly, ensure you have the necessary hardware and software. You can find comprehensive guides and resources online, including installation instructions and tutorials. Once set up, you can install the required LLM frameworks and libraries, and begin exploring the models available for your AI projects.

What are the best LLM frameworks?

Popular frameworks include:

- Hugging Face Transformers: A widely used open-source library for working with LLMs, offering a diverse range of models and functionalities.

- PyTorch: A powerful machine learning library with a strong focus on deep learning, providing a flexible and scalable environment for training and deploying LLMs.

- TensorFlow: Another popular machine learning library, known for its comprehensive tooling and infrastructure, supporting LLM development and deployment.

Can I run LLMs on a single GPU?

Yes, you can, as long as the model fits in that GPU's memory. Larger models such as Llama 3 70B need their weights split across several GPUs, which is where a four-card setup like the RTX 4000 Ada 20GB x4 helps. The performance will depend on the model size and the tasks you're performing.

What are the differences between token generation and processing?

Token generation is the process of creating new text, one token at a time, based on the input prompt and everything generated so far. Token processing (often called prompt processing) is the step before that, where the model reads the input tokens. Because all prompt tokens are known up front, they can be processed in parallel, which is why the processing figures in the table are much higher than the generation figures.
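The two rates combine into a rough estimate of how long a single request takes: the prompt is read at the processing rate, then the answer is written at the generation rate. The sketch below uses the Llama 3 8B Q4_K_M figures from the table; the prompt and answer lengths are hypothetical, and the estimate ignores model loading and other overhead.

```python
def estimate_latency_seconds(prompt_tokens: int, output_tokens: int,
                             processing_rate: float,
                             generation_rate: float) -> float:
    """Rough request time: read the prompt, then generate the answer."""
    prompt_time = prompt_tokens / processing_rate
    generation_time = output_tokens / generation_rate
    return prompt_time + generation_time


# Rates from the table for Llama 3 8B with Q4_K_M (tokens/second).
PROCESSING_RATE = 3369.24
GENERATION_RATE = 56.14

# Hypothetical request: a 1000-token prompt and a 200-token answer.
total = estimate_latency_seconds(1000, 200, PROCESSING_RATE, GENERATION_RATE)
prompt_part = 1000 / PROCESSING_RATE

print(f"Estimated total: {total:.2f} s (prompt reading: {prompt_part:.2f} s)")
```

Even with a prompt five times longer than the answer, most of the time goes into generation, which is why generation speed is usually the number to watch.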

Keywords:

NVIDIA RTX 4000 Ada 20GB x4, LLM, Large Language Model, AI, Artificial Intelligence, cost-saving strategies, quantization, pre-trained models, fine-tuning, code optimization, parallel processing, token generation, token processing, Llama 3, Hugging Face Transformers, PyTorch, TensorFlow, cloud computing, AI lab, GPU, inference, training.