7 Cooling Solutions for 24/7 AI Operations with NVIDIA RTX A6000 48GB

Introduction

The world of local Large Language Models (LLMs) is heating up! No, really: running these models can literally heat up your hardware. But don't worry, we’ve got you covered. This article explores the key strategies for keeping your NVIDIA RTX A6000 48GB humming along while your LLMs churn through gigabytes of data.

Imagine: you've got a powerful LLM, like the ever-popular Llama 2, running on your local machine, ready to answer your questions and write creative content. But as the model works, your GPU starts to get...well, warm. And if it gets too hot, the GPU throttles its clocks to protect itself, which slows the model down, and sustained overheating can even crash the system. That's where the right cooling solution comes in. By preventing overheating, you'll keep your AI operations running smoothly, 24/7.

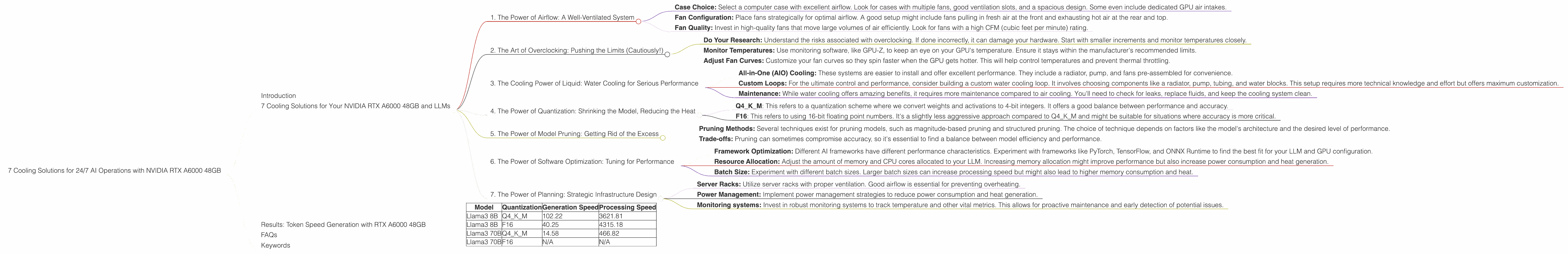

7 Cooling Solutions for Your NVIDIA RTX A6000 48GB and LLMs

1. The Power of Airflow: A Well-Ventilated System

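Think of your computer like a car engine: it needs fresh air for optimal performance. Proper airflow is just as crucial for your NVIDIA RTX A6000 48GB, which uses a blower-style cooler that draws in air from inside the case and exhausts it through the rear bracket, so the card can only be as cool as the air around it. Here's how to ensure your GPU is getting enough air: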

- Case Choice: Select a computer case with excellent airflow. Look for cases with multiple fans, good ventilation slots, and a spacious design. Some even include dedicated GPU air intakes.

- Fan Configuration: Place fans strategically for optimal airflow. A good setup might include fans pulling in fresh air at the front and exhausting hot air at the rear and top.

- Fan Quality: Invest in high-quality fans that move large volumes of air efficiently. Look for fans with a high CFM (cubic feet per minute) rating.
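To put a number on "enough air", you can use the standard heat-balance relation: the heat a stream of air carries away equals its mass flow times the specific heat of air times its temperature rise. The sketch below is a rough estimate that assumes room-temperature air properties and a heat load you supply yourself; 300 W is the RTX A6000's rated board power, while the extra 200 W for the rest of the system is a made-up example.

```python
# Estimate the airflow needed to carry a given heat load out of the case.
# Heat balance: P = rho * c_p * Q * dT  ->  Q = P / (rho * c_p * dT)

AIR_DENSITY = 1.2           # kg/m^3, air near room temperature (assumed)
AIR_SPECIFIC_HEAT = 1005.0  # J/(kg*K), dry air at constant pressure
CFM_PER_M3S = 2118.88       # 1 m^3/s expressed in cubic feet per minute


def required_cfm(heat_watts, delta_t_c):
    """Airflow (CFM) needed so exhaust air is delta_t_c degrees C warmer than intake."""
    if delta_t_c <= 0:
        raise ValueError("delta_t_c must be positive")
    m3_per_s = heat_watts / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t_c)
    return m3_per_s * CFM_PER_M3S


if __name__ == "__main__":
    # 300 W GPU plus a hypothetical 200 W for the CPU and the rest of the system
    for dt in (5, 10, 15):
        print(f"dT={dt} C: {required_cfm(500, dt):.0f} CFM")
```

At a 10 °C intake-to-exhaust rise, this example system needs roughly 88 CFM of real airflow. Keep in mind that a fan's CFM rating is measured in free air; filters, radiators, and drive cages cut the actual airflow, so leave generous headroom.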

2. The Art of Overclocking: Pushing the Limits (Cautiously!)

Want to squeeze every ounce of performance out of your RTX A6000 48GB? Overclocking can help (but be careful!). Raising your GPU's clock speeds can improve performance, but it also increases power draw and heat output.

- Do Your Research: Understand the risks associated with overclocking. If done incorrectly, it can damage your hardware. Start with smaller increments and monitor temperatures closely.

- Monitor Temperatures: Use monitoring software, like GPU-Z or NVIDIA's `nvidia-smi` command-line tool, to keep an eye on your GPU's temperature. Ensure it stays within the manufacturer's recommended limits.

- Adjust Fan Curves: Customize your fan curves so they spin faster when the GPU gets hotter. This will help control temperatures and prevent thermal throttling.

Remember: Overclocking is a delicate dance. Always prioritize stability over performance. If your GPU starts to overheat, dial back the clock speed.
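Fan-curve tools generally work the same way: you pick a few (temperature, fan speed) points and the software interpolates linearly between them. The sketch below models only that mapping; the curve points are hypothetical examples rather than NVIDIA defaults, and actually applying a curve to the card is left to whatever vendor tool or driver utility you use.

```python
# Hypothetical fan curve: (temperature in C, fan duty in %), sorted by temperature.
FAN_CURVE = [(30, 30), (50, 40), (70, 70), (80, 100)]


def fan_duty(temp_c, curve=FAN_CURVE):
    """Map a GPU temperature to a fan duty by linear interpolation between points."""
    if temp_c <= curve[0][0]:
        return float(curve[0][1])    # below the first point: minimum duty
    if temp_c >= curve[-1][0]:
        return float(curve[-1][1])   # above the last point: maximum duty
    for (t0, d0), (t1, d1) in zip(curve, curve[1:]):
        if t0 <= temp_c <= t1:
            return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)
    raise ValueError("curve points must be sorted by temperature")


if __name__ == "__main__":
    for t in (25, 60, 75, 90):
        print(f"{t} C -> {fan_duty(t):.0f}% fan")
```

With this example curve, 60 °C maps to 55% fan duty and anything at or above 80 °C runs the fan flat out. A steeper segment near your target temperature reacts faster to load spikes at the cost of more noise.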

3. The Cooling Power of Liquid: Water Cooling for Serious Performance

Water cooling is the most capable option for those who want to push their RTX A6000 48GB to its limits. Replacing the stock air cooler with a liquid loop can dissipate heat significantly better, giving you more headroom to push your GPU harder.

- All-in-One (AIO) Cooling: These closed-loop systems are easier to install than custom loops and still perform well. They come with the radiator, pump, and fans pre-assembled for convenience.

- Custom Loops: For the ultimate control and performance, consider building a custom water cooling loop. It involves choosing components like a radiator, pump, tubing, and water blocks. This setup requires more technical knowledge and effort but offers maximum customization.

- Maintenance: While water cooling offers big benefits, it requires more maintenance than air cooling. You'll need to check for leaks, replace the coolant periodically, and keep the radiator and water blocks clean.
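Why does liquid move heat so well? Water's specific heat is about four times that of air, and water is roughly 800 times denser, so even a modest flow carries a lot of energy with a small temperature rise. The sketch below applies the same heat-balance relation used for airflow; the 300 W load is the card's rated board power, the flow rates are assumed values rather than measurements, and the coolant is treated as plain water.

```python
WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K)
WATER_DENSITY = 1.0           # kg per litre (approximate, room temperature)


def coolant_delta_t(heat_watts, flow_l_per_min):
    """Temperature rise (C) of the coolant as it passes through the GPU block."""
    if flow_l_per_min <= 0:
        raise ValueError("flow must be positive")
    mass_flow_kg_s = flow_l_per_min * WATER_DENSITY / 60.0
    return heat_watts / (mass_flow_kg_s * WATER_SPECIFIC_HEAT)


if __name__ == "__main__":
    # Assumed flow rates; check your pump's specification for real numbers
    for flow in (0.5, 1.0, 1.5):
        print(f"{flow} L/min: {coolant_delta_t(300, flow):.1f} C rise")
```

At 1 L/min, 300 W warms the coolant by only about 4.3 °C per pass. The radiator still has to dump that heat into room air, so case and room ventilation matter just as much with water cooling.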

4. The Power of Quantization: Shrinking the Model, Reducing the Heat

Quantization is like a diet plan for your LLM: it shrinks the model while preserving most of its functionality. By converting high-precision numbers (like 32- or 16-bit floating-point values) to lower-precision ones (like 8- or 4-bit integers), quantization cuts the model's memory footprint and the amount of data the GPU must move for every token, which reduces the work per token and, in turn, can reduce heat generation.

- Q4KM: This is llama.cpp's Q4_K_M format, which stores most of the model's weights at roughly 4 bits each, with block-wise scale factors, and keeps a few sensitive tensors at higher precision. It offers a good balance between speed and accuracy.

- F16: This stores weights as 16-bit floating-point numbers, keeping the model at or near its original precision. It uses more than three times as much memory as Q4KM and suits situations where accuracy is more critical.
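To make the idea concrete, here is a simplified block-wise 4-bit quantizer: weights are split into blocks of 32, each block gets one scale factor derived from its largest absolute value, and each weight is stored as a small signed integer. This illustrates the general technique, not llama.cpp's actual Q4_K_M format, which groups blocks into super-blocks with their own quantized scales and minimums; the block size and function names are assumptions chosen for the sketch.

```python
import math


def quantize_block(values, bits=4):
    """Symmetric absmax quantization of one block to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1                   # 7 for 4-bit
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round to the nearest step and clamp to the signed range [-qmax - 1, qmax]
    codes = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def quantize(weights, block_size=32, bits=4):
    """Split weights into blocks and quantize each block with its own scale."""
    return [quantize_block(weights[i:i + block_size], bits)
            for i in range(0, len(weights), block_size)]


def dequantize(blocks):
    """Rebuild approximate float weights from (scale, codes) blocks."""
    out = []
    for scale, codes in blocks:
        out.extend(scale * c for c in codes)
    return out


if __name__ == "__main__":
    weights = [math.sin(i) for i in range(100)]
    restored = dequantize(quantize(weights))
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max reconstruction error: {worst:.4f}")
```

Each reconstructed weight is off by at most half a scale step, which is the accuracy you trade for storing about 4 bits per weight instead of 16 or 32.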

The Magic Number: The RTX A6000 48GB handles a large number of tokens per second with a quantized model. For example, it processes 3621.81 prompt tokens per second for Llama3 8B with Q4KM quantization.

5. The Power of Model Pruning: Getting Rid of the Excess

Model pruning is like decluttering your LLM. It removes unnecessary parameters, either individual weights (connections) or whole structures such as neurons, attention heads, or layers, leading to a smaller model. When the runtime can take advantage of the removed parts, the reduced size and complexity translate to less computational demand and lower heat generation.

- Pruning Methods: Several techniques exist for pruning models, such as magnitude-based pruning and structured pruning. The choice of technique depends on factors like the model's architecture and the desired level of performance.

- Trade-offs: Pruning can reduce accuracy, so it's essential to find a balance between efficiency and output quality.
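Magnitude-based pruning is the simplest of these methods: rank weights by absolute value and zero out the smallest fraction. The sketch below shows that idea on a flat list of weights (the function name and the flat-list representation are simplifications for illustration). One caveat: zeroed weights only save compute, and therefore heat, if the runtime uses sparse kernels that skip them; structured pruning, which removes whole rows, attention heads, or layers, shrinks the dense matrices directly.

```python
def magnitude_prune(weights, sparsity):
    """Return a copy of `weights` with the smallest-magnitude fraction set to 0."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices sorted from smallest to largest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    w = [0.5, -0.1, 0.05, -2.0, 0.3]
    print(magnitude_prune(w, 0.4))  # the two smallest-magnitude weights become 0.0
```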

6. The Power of Software Optimization: Tuning for Performance

Software optimization is a powerful tool for maximizing your RTX A6000 48GB's capabilities and reducing heat generation. By tweaking the software settings and algorithms, you can optimize your LLM's performance and efficiency.

- Framework Optimization: Different AI frameworks have different performance characteristics. Experiment with frameworks like PyTorch, TensorFlow, and ONNX Runtime to find the best fit for your LLM and GPU configuration.

- Resource Allocation: Adjust the amount of memory and CPU cores allocated to your LLM. Increasing memory allocation might improve performance but also increase power consumption and heat generation.

- Batch Size: Experiment with different batch sizes. Larger batches can increase total throughput, but they also use more memory and keep the GPU busier, which means more heat.
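Much of the extra memory from larger batches comes from the key-value (KV) cache, which stores attention keys and values for every token of every sequence in the batch. The sketch below estimates its size; the layer count (32), KV-head count (8), and head dimension (128) follow the published Llama 3 8B configuration, while the 8,192-token context and the 16-bit cache are assumptions.

```python
GIB = 1024 ** 3


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len,
                   batch_size, bytes_per_value=2):
    """Size of the KV cache: one K and one V tensor per layer (hence the 2)."""
    return (2 * n_layers * n_kv_heads * head_dim
            * context_len * batch_size * bytes_per_value)


if __name__ == "__main__":
    # Llama 3 8B shape, assumed 8,192-token context, 16-bit cache values
    for batch in (1, 4, 8):
        size = kv_cache_bytes(32, 8, 128, 8192, batch)
        print(f"batch={batch}: {size / GIB:.1f} GiB of KV cache")
```

Under these assumptions the cache grows linearly, from 1 GiB at batch size 1 to 8 GiB at batch size 8, on top of the model weights.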

7. The Power of Planning: Strategic Infrastructure Design

For those running LLMs 24/7, careful infrastructure design is crucial. Consider the following factors to optimize your setup for long-term stability and efficient cooling:

- Server Racks: Utilize server racks with proper ventilation. Good airflow is essential for preventing overheating.

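- Power Management: Reduce power consumption and heat generation, for example by lowering the GPU's board power limit with `nvidia-smi -pl` (which requires administrator rights), at some cost in performance.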

- Monitoring systems: Invest in robust monitoring systems to track temperature and other vital metrics. This allows for proactive maintenance and early detection of potential issues.
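A basic monitoring loop can be built on `nvidia-smi`, which ships with the NVIDIA driver and can print selected fields as CSV. The sketch below polls temperature, power draw, SM clock, and utilization; the polling interval and function names are illustrative choices, and in a real deployment you would forward these samples to your monitoring stack instead of printing them.

```python
import subprocess
import time

# Fields understood by `nvidia-smi --query-gpu`
FIELDS = ["temperature.gpu", "power.draw", "clocks.sm", "utilization.gpu"]


def _to_float(text):
    """Convert one CSV field to float; unsupported fields are reported as '[N/A]'."""
    try:
        return float(text)
    except ValueError:
        return None


def parse_line(line):
    """Parse one GPU's CSV line (no header, no units) into a dict."""
    values = [_to_float(v.strip()) for v in line.split(",")]
    return dict(zip(FIELDS, values))


def read_gpus():
    """Return one dict per GPU from a single nvidia-smi query."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=" + ",".join(FIELDS),
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return [parse_line(line) for line in result.stdout.strip().splitlines()]


def monitor(interval_s=10, iterations=None):
    """Print samples every `interval_s` seconds; run forever if iterations is None."""
    count = 0
    while iterations is None or count < iterations:
        for idx, sample in enumerate(read_gpus()):
            print(f"GPU{idx}: {sample}")
        count += 1
        time.sleep(interval_s)
```

On a machine with the driver installed, `monitor(interval_s=10)` prints one line per GPU every ten seconds. SM clocks that fall while the temperature sits near its limit are a telltale sign of thermal throttling.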

Results: Token Generation and Processing Speeds on the RTX A6000 48GB

Here's the breakdown of token speeds for Llama 3 models running on the RTX A6000 48GB:

Table 1: Token Speeds on the RTX A6000 48GB (tokens/second)

| Model | Quantization | Generation Speed | Processing Speed |
|---|---|---|---|
| Llama3 8B | Q4KM | 102.22 | 3621.81 |
| Llama3 8B | F16 | 40.25 | 4315.18 |
| Llama3 70B | Q4KM | 14.58 | 466.82 |
| Llama3 70B | F16 | N/A | N/A |
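A likely reason for the N/A row becomes clear once you estimate how much memory the weights alone need: parameters times bits per weight, divided by 8. The sketch below uses nominal parameter counts and assumes roughly 4.8 bits per weight for Q4KM (Q4_K_M mixes 4-bit and higher-precision tensors, so the exact figure varies by model); it ignores the KV cache and runtime overhead, so real usage is higher.

```python
VRAM_GB = 48  # RTX A6000 memory, nominal


def weight_gb(n_params, bits_per_weight):
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}  # nominal parameter counts
FORMATS = {"Q4KM": 4.8, "F16": 16}              # assumed bits per weight

for model, params in MODELS.items():
    for fmt, bpw in FORMATS.items():
        size = weight_gb(params, bpw)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

The 70B F16 weights come to about 140 GB, nearly three times the card's memory, while the Q4KM version (about 42 GB) fits but leaves only a few gigabytes for the KV cache.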

Key Observations:

- Smaller models are faster: As expected, Llama3 8B generates 102.22 tokens per second with Q4KM, roughly seven times the 14.58 tokens per second of the 70B model.

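- Quantization speeds up generation: With Q4KM, Llama3 8B generates 102.22 tokens per second versus 40.25 with F16, about 2.5 times faster. Prompt processing goes the other way (3621.81 versus 4315.18 tokens per second), so the right quantization strategy depends on whether your workload is dominated by long prompts or long outputs.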

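- Processing vs. Generation: Processing speed measures how quickly the model reads the input prompt, which it can do for many tokens in parallel; generation speed measures how quickly it produces new output tokens, one at a time. On the RTX A6000 48GB, processing runs in the hundreds to thousands of tokens per second for these models, while generation runs from about 15 to about 100.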
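Because a request involves both phases, a first-order estimate of response time is prompt length divided by processing speed plus output length divided by generation speed. The sketch below plugs in the Llama3 8B figures from Table 1; the 2,000-token prompt and 500-token reply are hypothetical, and real speeds drift with context length, so treat the result as a ballpark.

```python
def response_time_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Seconds to read the prompt plus seconds to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama3 8B speeds from Table 1 (tokens/second): (processing, generation)
SPEEDS = {"Q4KM": (3621.81, 102.22), "F16": (4315.18, 40.25)}

for fmt, (proc, gen) in SPEEDS.items():
    t = response_time_s(2000, 500, proc, gen)
    print(f"Llama3 8B {fmt}: ~{t:.1f} s for a 2,000-token prompt and 500-token reply")
```

Under these assumptions Q4KM answers in about 5.4 s versus about 12.9 s for F16: when replies are long, generation speed dominates and outweighs F16's faster prompt processing.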

FAQs

Q: What are the best cooling solutions for my specific LLM setup?

A: The optimal cooling solution depends on factors like your model's size, your budget, and your level of technical expertise. Start with basic airflow improvements, and consider water cooling if you plan to overclock or otherwise push your GPU to its limits.

Q: How do I know if my GPU is overheating?

A: Monitor your GPU's temperature with software like GPU-Z or `nvidia-smi`. If it consistently reaches or exceeds the manufacturer's recommended temperature limits, or its clock speeds drop under sustained load (thermal throttling), your GPU is likely overheating.
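"Consistently" is the key word: a brief spike is normal, but a run of readings at or above the limit is not. The sketch below flags overheating only when every sample in a sliding window reaches the threshold; the 85 °C limit and three-sample window are placeholders, so substitute the limit from your card's documentation and a window that matches your sampling rate.

```python
from collections import deque


class OverheatDetector:
    """Flags overheating when a full window of readings is at or above a limit."""

    def __init__(self, limit_c, window):
        self.limit_c = limit_c
        self.readings = deque(maxlen=window)  # keeps only the latest `window` readings

    def add(self, temp_c):
        """Record one reading and return whether the GPU counts as overheating."""
        self.readings.append(temp_c)
        return self.is_overheating()

    def is_overheating(self):
        full = len(self.readings) == self.readings.maxlen
        return full and all(t >= self.limit_c for t in self.readings)


if __name__ == "__main__":
    detector = OverheatDetector(limit_c=85, window=3)  # placeholder values
    for reading in (80, 86, 87, 88, 79):
        print(reading, detector.add(reading))
```

Only the fourth reading trips the detector here, because it completes a window of three readings at or above the limit; a single cooler reading clears it again.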

Q: Can I run an LLM without a dedicated GPU?

A: Yes, it is possible to run smaller LLMs on CPUs, but the performance will be significantly slower compared to using a GPU. For larger LLMs, a GPU is highly recommended for efficient operation.

Keywords

NVIDIA RTX A6000 48GB, AI Cooling, LLM, Large Language Model, Llama 2, Llama 3, 8B, 70B, Quantization, Q4KM, F16, Overclocking, Water Cooling, Airflow, Model Pruning, Software Optimization, Token Speed, Performance, Efficiency, GPU, GPU Temperature, Server Racks, Power Management, Monitoring Systems