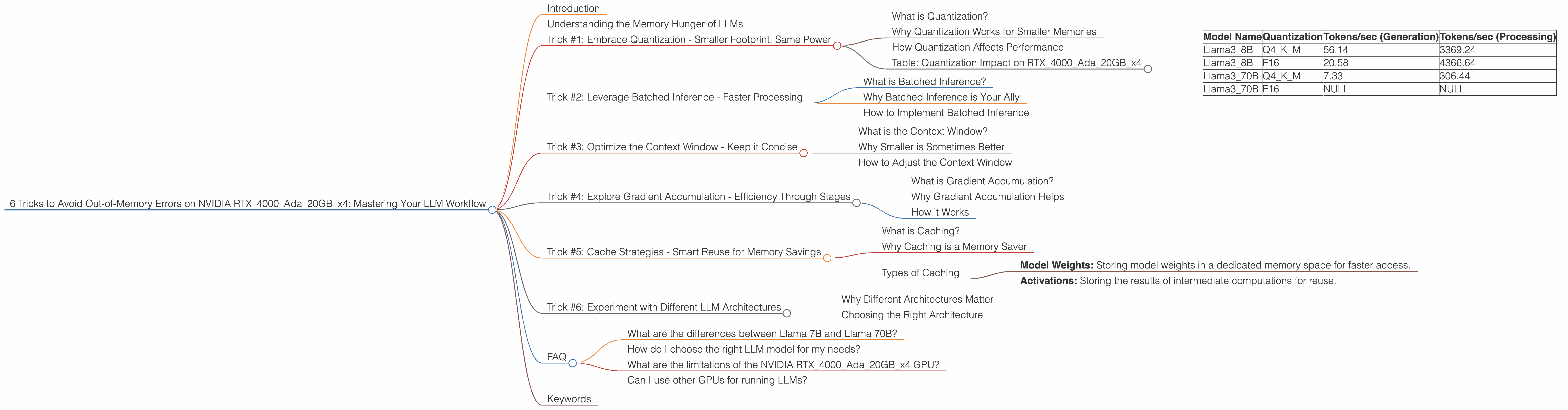

6 Tricks to Avoid Out of Memory Errors on NVIDIA RTX 4000 Ada 20GB x4

Introduction

The world of Large Language Models (LLMs) is exciting, but it can also be quite demanding. Running these complex models locally, especially on powerful GPUs, can be a rewarding experience, but it's not without its challenges. One particularly frustrating issue is the dreaded "out-of-memory" error. It's like your LLM suddenly got amnesia and forgot everything it's learned, leaving you with a blank screen and a sigh of frustration.

This article is here to help you conquer these memory woes. We'll dive into the specific challenges of running LLMs on an NVIDIA RTX 4000 Ada 20GB x4 setup (four cards with 20GB of memory each), a powerful configuration that packs a punch. We'll explore six practical tricks to optimize your LLM workflow, ensuring your model runs smoothly and keeps its memory intact.

Understanding the Memory Hunger of LLMs

Imagine your LLM as an insatiable gourmand, constantly devouring vast quantities of data. These models are designed to process massive amounts of information, remember complex patterns, and generate coherent responses. All this requires a large memory footprint: the GPU has to hold the model's weights, plus the working data for every token it is currently processing.

The NVIDIA RTX 4000 Ada 20GB x4 is an excellent setup for LLM experimentation, but even with 20GB per card (80GB across all four), memory can be devoured quickly by larger models. This is where the tricks come in handy, allowing you to squeeze the most out of your hardware.

Trick #1: Embrace Quantization - Smaller Footprint, Same Power

What is Quantization?

Imagine you're trying to describe the color of a flower. Instead of saying "a vibrant shade of pink," you can just say "pink." You've simplified the description without losing the essence of the meaning. Quantization works similarly for LLMs. It reduces the precision of the weights (the model's internal parameters) by representing them with fewer bits.
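To make the idea concrete, here is a minimal sketch of symmetric 4-bit quantization on a handful of made-up weights. It is a deliberately simplified illustration, not the block-wise scheme that formats such as Q4_K_M actually use; the function names and the sample values are assumptions chosen for the example.

```python
def quantize_symmetric(weights, bits=4):
    """Map floats to signed integer codes using one shared scale (toy scheme)."""
    qmax = 2 ** (bits - 1) - 1                    # 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]   # small integers in [-qmax, qmax]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, purely for illustration.
weights = [0.82, -0.31, 0.05, -0.77, 0.40]
codes, scale = quantize_symmetric(weights, bits=4)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each weight is now stored as a small integer plus one shared scale, and the rounding error per weight is at most half the scale. That rounding error is the "slightly less vibrant pink" from the analogy above.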

Why Quantization Works for Smaller Memories

Think of it like carrying less luggage on a trip - you can pack more efficiently. Using less memory for each weight allows you to fit a larger model into your GPU's memory.
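The luggage analogy comes down to simple arithmetic. The sketch below estimates the memory needed for the weights alone, assuming an idealized 4 bits per weight for the quantized case (real 4-bit formats store some extra scaling data, so they land a little higher) and round parameter counts of 8 and 70 billion. It ignores the context cache and activations, so treat the numbers as lower bounds.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


TOTAL_VRAM_GB = 4 * 20  # four cards with 20GB each

for name, n_params in [("8B", 8e9), ("70B", 70e9)]:
    for label, bits in [("F16", 16), ("4-bit", 4)]:
        gb = weight_memory_gb(n_params, bits)
        verdict = "fits" if gb < TOTAL_VRAM_GB else "does not fit"
        print(f"{name} {label}: {gb:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 70B model at 16 bits needs about 140 GB for its weights, well beyond the 80 GB available, while the 4-bit version needs about 35 GB.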

How Quantization Affects Performance

The trade-off? Slightly reduced accuracy in some scenarios. Speed, on the other hand, can actually improve. Comparing Llama3_8B at Q4_K_M (quantized to roughly 4 bits) with Llama3_8B at F16 (16 bits) on the RTX 4000 Ada 20GB x4, token generation rises from 20.58 tokens/second to 56.14 tokens/second, almost a threefold increase, while prompt processing drops from 4366.64 to 3369.24 tokens/second.

In many cases the loss in output quality is small, and the benefits in memory usage and generation speed can be worth the sacrifice.

Table: Quantization Impact on RTX 4000 Ada 20GB x4

We can see that Llama3_70B at F16 (16 bits) has no result at all because the GPU memory is not large enough to hold it. This highlights the importance of using quantization to run larger models.

| Model Name | Quantization | Tokens/sec (Generation) | Tokens/sec (Processing) |
|---|---|---|---|
| Llama3_8B | Q4_K_M | 56.14 | 3369.24 |
| Llama3_8B | F16 | 20.58 | 4366.64 |
| Llama3_70B | Q4_K_M | 7.33 | 306.44 |
| Llama3_70B | F16 | N/A | N/A |
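To read the table at a glance, the short calculation below turns the two Llama3_8B rows into ratios. The figures are copied from the table; the variable names are just for illustration.

```python
# Tokens/sec for Llama3_8B, taken from the table above.
q4_generation, q4_processing = 56.14, 3369.24
f16_generation, f16_processing = 20.58, 4366.64

generation_speedup = q4_generation / f16_generation   # 4-bit relative to F16
processing_ratio = q4_processing / f16_processing     # 4-bit relative to F16

print(f"Generation: 4-bit is {generation_speedup:.2f}x the F16 speed")
print(f"Processing: 4-bit runs at {processing_ratio:.0%} of the F16 speed")
```

In other words, the 4-bit model generates tokens roughly 2.7 times faster than F16 on this setup, but processes prompts at about three quarters of the F16 rate.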

Trick #2: Leverage Batched Inference - Faster Processing

What is Batched Inference?

Imagine a factory with assembly lines. Instead of working on one product at a time, the factory processes multiple products simultaneously for increased efficiency. Batched inference works the same way for LLMs. It processes multiple input sequences together, significantly speeding up the inference process.

Why Batched Inference is Your Ally

Think of it like getting your groceries delivered in bulk: you get more for less effort. By processing multiple text sequences in one batch, you spread the fixed cost of each pass through the model over several sequences, which raises overall throughput even though an individual response may not arrive any sooner.

How to Implement Batched Inference

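Most LLM libraries offer options for setting batch sizes. Keep in mind that every extra sequence in a batch needs its own working memory, so larger batches use more GPU memory. Experiment with different batch sizes to find the optimal balance between speed and memory usage, and lower the batch size first when you hit an out-of-memory error.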
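One way to run that experiment automatically is to start with an optimistic batch size and halve it whenever memory runs out. The sketch below is library-agnostic: `run_batch` is a hypothetical callable standing in for your library's inference call, and Python's built-in `MemoryError` stands in for the library's own out-of-memory exception (PyTorch, for instance, raises `torch.cuda.OutOfMemoryError`).

```python
def make_batches(items, batch_size):
    """Split a list into consecutive chunks of at most batch_size items."""
    return [items[i:i + batch_size] for i in range(0, len(items), batch_size)]


def run_with_backoff(prompts, run_batch, batch_size):
    """Run all prompts, halving the batch size after each out-of-memory error.

    run_batch is a hypothetical callable: it takes a list of prompts and
    returns a list of outputs, raising MemoryError if the batch is too big.
    """
    while batch_size >= 1:
        try:
            outputs = []
            for batch in make_batches(prompts, batch_size):
                outputs.extend(run_batch(batch))
            return outputs, batch_size
        except MemoryError:
            batch_size //= 2  # try again with half as many sequences per batch
    raise MemoryError("even a batch size of 1 does not fit")


def fake_run_batch(batch):
    """Stand-in for a real model: pretends anything above 4 sequences runs out of memory."""
    if len(batch) > 4:
        raise MemoryError("simulated out-of-memory")
    return [prompt.upper() for prompt in batch]


prompts = [f"prompt {i}" for i in range(10)]
outputs, used_batch_size = run_with_backoff(prompts, fake_run_batch, batch_size=16)
print("batch size that worked:", used_batch_size)
```

Restarting from scratch after a failure keeps the sketch short; a real pipeline would more likely keep the outputs it already has and retry only the batch that failed.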

Trick #3: Optimize the Context Window - Keep it Concise

What is the Context Window?

Think of it as the LLM's short-term memory. It holds a certain amount of text (measured in tokens) that the model can reference when generating new text. The larger the context window, the more information the model can access, but it also requires more memory, because the model keeps cached data for every token in the window.

Why Smaller is Sometimes Better

Imagine trying to remember everything you've ever read. It would be overwhelming! Similarly, a context window that is larger than your task needs can tie up memory for tokens you never use, which may push an otherwise workable setup into out-of-memory territory.

How to Adjust the Context Window

Most LLM libraries allow you to adjust the context window size. Start with a smaller window and gradually increase it until you find the right balance between memory usage and the desired level of information for your task.
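To get a feel for how much memory a given window costs, the sketch below uses the standard estimate for a transformer's key/value cache: two tensors (keys and values) per layer, each holding one vector per token per KV head. The layer count, KV head count, and head size are assumptions based on the commonly published Llama 3 8B configuration; check your own model's config before relying on them.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len,
                   bytes_per_value=2, batch_size=1):
    """Approximate key/value cache size for a transformer decoder.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_value=2 assumes the cache is kept in 16-bit precision.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * context_len * bytes_per_value * batch_size)


# Assumed model shape (Llama 3 8B-like): 32 layers, 8 KV heads, head size 128.
N_LAYERS, N_KV_HEADS, HEAD_DIM = 32, 8, 128

for context_len in (2048, 4096, 8192):
    size = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, context_len)
    print(f"context {context_len:>5}: {size / 1024**3:.2f} GiB of cache per sequence")
```

The cache grows linearly with the window: doubling the context length doubles the memory, and so does doubling the batch size.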

Trick #4: Explore Gradient Accumulation - Efficiency Through Stages

What is Gradient Accumulation?

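Normally, training an LLM involves updating the model's weights after each batch of training data. Gradient accumulation lets you process several smaller batches (micro-batches) before updating the weights, so you get the effect of a large batch without holding the whole batch in memory at once. Note that this trick applies to training and fine-tuning, not to inference.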

Why Gradient Accumulation Helps

Imagine carrying a heavy box in stages instead of all at once. Gradient accumulation splits one large batch into smaller, manageable micro-batches, so only one micro-batch's intermediate results need to fit in memory at a time.

How it Works

You process multiple micro-batches and accumulate the gradients (the information used to update the weights). After a set number of micro-batches, you update the weights once and reset the accumulated gradients. This reduces the peak memory footprint of the training process compared with pushing the full batch through in one pass.
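The procedure can be sketched without any deep learning library. The toy example below fits a single weight `w` in the model `y = w * x` and checks that accumulating gradients over four micro-batches of two samples gives the same update as one batch of eight. The data, learning rate, and sizes are made up for the illustration.

```python
def mse_gradient(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


# Toy data generated from y = 2x, so the ideal weight is 2.0.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]

w = 0.5
learning_rate = 0.01
micro_batch_size = 2
accum_steps = len(xs) // micro_batch_size  # 4 micro-batches per weight update

# Reference: gradient of the full batch computed in one pass.
full_grad = mse_gradient(w, xs, ys)

# Gradient accumulation: only one micro-batch is processed at a time.
accumulated = 0.0
for step in range(accum_steps):
    start = step * micro_batch_size
    stop = start + micro_batch_size
    micro_grad = mse_gradient(w, xs[start:stop], ys[start:stop])
    accumulated += micro_grad / accum_steps  # scale so the sum is an average

w_new = w - learning_rate * accumulated      # one weight update for all 8 samples
print("full-batch gradient: ", full_grad)
print("accumulated gradient:", accumulated)
print("updated weight:      ", w_new)
```

Dividing each micro-batch gradient by `accum_steps` is what makes the accumulated value match the full-batch average; this assumes all micro-batches have the same size.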

Trick #5: Cache Strategies - Smart Reuse for Memory Savings

What is Caching?

Imagine a library with a well-organized system for storing books. You can easily find the books you need, saving time and effort. Caching works similarly for LLMs. It stores frequently accessed data for faster retrieval, reducing the need to recompute it or reload it from slower storage.

Why Caching is a Memory Saver

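Think of it as having a dedicated shelf for your most-used tools, making them easily accessible. Caching keeps frequently used data, such as model weights or activations, close at hand and prevents repeated calculations. The shelf itself takes up space, though: a cache consumes memory, so the savings come from sizing it deliberately rather than letting it grow unchecked.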

Types of Caching

There are various caching strategies. Some options include:

- Model Weights: Storing model weights in a dedicated memory space for faster access.

- Activations: Storing the results of intermediate computations for reuse, such as the attention keys and values of tokens the model has already processed (the KV cache).
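Whatever you choose to cache, putting a hard cap on the cache keeps it from eating the memory you are trying to protect. Here is a minimal sketch of a size-limited cache that evicts the least recently used entry; the class name and the stand-in `expensive` function are hypothetical, and a real LLM cache would store tensors rather than small integers.

```python
from collections import OrderedDict


class BoundedCache:
    """A small least-recently-used cache with a fixed number of entries."""

    def __init__(self, max_entries):
        self.max_entries = max_entries
        self._store = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, key, compute):
        if key in self._store:
            self._store.move_to_end(key)      # mark as most recently used
            self.hits += 1
            return self._store[key]
        self.misses += 1
        value = compute(key)
        self._store[key] = value
        if len(self._store) > self.max_entries:
            self._store.popitem(last=False)   # evict the least recently used entry
        return value

    def __len__(self):
        return len(self._store)


def expensive(prompt):
    """Stand-in for a costly computation, such as processing a prompt prefix."""
    return len(prompt.split())


cache = BoundedCache(max_entries=2)
for prompt in ["a", "b", "a", "c", "b"]:
    cache.get_or_compute(prompt, expensive)

print("hits:", cache.hits, "misses:", cache.misses, "entries kept:", len(cache))
```

The cap trades a few extra recomputations for a predictable memory ceiling, which is usually the right trade when you are already close to the limit.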

Trick #6: Experiment with Different LLM Architectures

Why Different Architectures Matter

Different architectures have varying memory footprints. Some models are specifically designed for memory efficiency, while others prioritize performance.

Choosing the Right Architecture

Consider factors like the complexity of your task and the available resources. If you're working with limited memory, consider smaller models such as the 7B variant of Llama 2 or the smaller GPT-Neo checkpoints, which need far less memory than a 70B model.
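A rough way to shortlist candidates is to estimate each model's weight memory and keep the largest one that fits your budget. The sketch below does exactly that. The candidate list, the idealized 4 bits per weight, and the headroom reserved for the context cache and activations are all assumptions for illustration, so adjust them to your own setup.

```python
def weights_gb(n_params, bits_per_weight):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def pick_model(candidates, vram_gb, headroom_gb):
    """Return the name of the largest candidate whose weights fit, or None.

    candidates: list of (name, n_params, bits_per_weight) tuples.
    headroom_gb: memory set aside for everything that is not weights.
    """
    budget_gb = vram_gb - headroom_gb
    fitting = [c for c in candidates if weights_gb(c[1], c[2]) <= budget_gb]
    if not fitting:
        return None
    # Prefer more parameters first, then higher precision.
    return max(fitting, key=lambda c: (c[1], c[2]))[0]


# Hypothetical candidates with round parameter counts.
CANDIDATES = [
    ("8B 4-bit", 8e9, 4),
    ("8B F16", 8e9, 16),
    ("70B 4-bit", 70e9, 4),
    ("70B F16", 70e9, 16),
]

print("four cards (80 GB):", pick_model(CANDIDATES, vram_gb=80, headroom_gb=10))
print("one card (20 GB):  ", pick_model(CANDIDATES, vram_gb=20, headroom_gb=2))
```

This only screens on memory. Whether the chosen model is accurate or fast enough for your task still has to be tested.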

FAQ

What are the differences between Llama 7B and Llama 70B?

Llama 7B and Llama 70B are both LLMs, but they differ in size and complexity. The 7B model (7 billion parameters) is smaller and requires less memory, while the 70B model (70 billion parameters) is much larger and more computationally demanding.

How do I choose the right LLM model for my needs?

Consider the size of the model, the task at hand, and the available resources. Smaller models are generally faster and require less memory, while larger models can achieve higher accuracy.

What are the limitations of the NVIDIA RTX 4000 Ada 20GB x4 setup?

Each card has 20GB of memory, for 80GB across the four cards. That is a significant advantage, but it can still be challenging to run very large LLMs (e.g., 137B) without extensive optimization.

Can I use other GPUs for running LLMs?

Yes, you can use other GPUs, but the performance and memory capacity will vary. A well-known alternative is Apple's M1, whose integrated GPU shares memory with the CPU and has its own strengths and weaknesses.

Keywords

LLM, Large Language Model, NVIDIA RTX 4000 Ada 20GB x4, out-of-memory error, quantization, batched inference, context window, gradient accumulation, caching, LLM architecture, Llama 7B, Llama 70B, Apple M1, memory efficiency, GPU, model training, token generation, performance optimization, NLP, natural language processing, AI, artificial intelligence