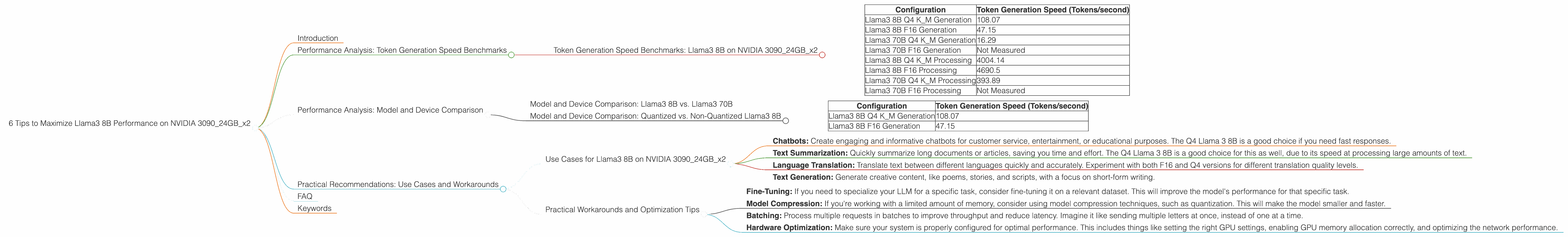

6 Tips to Maximize Llama3 8B Performance on NVIDIA 3090 24GB x2

Introduction

Are you ready to unleash the power of local large language models (LLMs) on your NVIDIA 3090 24GB x2 beast? This article dives deep into the performance of Llama3 8B on this high-end hardware, providing you with practical tips and strategies to get the most out of your setup.

We'll explore token generation speed benchmarks, compare model and device configurations, and offer practical recommendations for use cases and workarounds. Buckle up, this is going to be a wild ride!

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA 3090 24GB x2

The first step in optimizing our LLM setup is understanding how fast our hardware can generate tokens. Tokens are the building blocks a language model reads and writes: each one is a word, a piece of a word, or a punctuation mark. Think of a token as a single piece of a jigsaw puzzle; generating text is like fitting those pieces together one at a time.

| Configuration | Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M Generation | 108.07 |
| Llama3 8B F16 Generation | 47.15 |
| Llama3 70B Q4_K_M Generation | 16.29 |
| Llama3 70B F16 Generation | Not Measured |
| Llama3 8B Q4_K_M Processing | 4004.14 |
| Llama3 8B F16 Processing | 4690.5 |
| Llama3 70B Q4_K_M Processing | 393.89 |
| Llama3 70B F16 Processing | Not Measured |

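Q4 represents 4-bit quantization, a technique that compresses the model's weights, making the model smaller and faster at generating tokens. F16 represents 16-bit floating-point precision: the weights are stored unquantized at half precision, which preserves more accuracy but takes roughly three to four times as much memory as Q4. K_M identifies the quantization variant: in llama.cpp naming, K denotes the k-quant family and M the medium-size mix within it. In the table, "Generation" rows measure how fast the model produces new tokens, and "Processing" rows measure how fast it reads an existing sequence of tokens (a message, a paragraph, even a whole novel!).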
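To get a feel for what these formats mean in memory terms, here is a back-of-the-envelope sketch. It is not a measurement: the parameter counts are the nominal 8 and 70 billion, the 4.8 bits per weight for Q4_K_M is an approximate figure we are assuming, and the estimate covers the weights only (no context cache or runtime overhead).

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# All constants below are illustrative assumptions, not measured values.

NOMINAL_PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}  # nominal model sizes
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}  # Q4_K_M value is approximate
TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for model, n_params in NOMINAL_PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gb(n_params, bits)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 70B F16 weights alone come to about 140 GB, far beyond the 48 GB available, which is one plausible reason that configuration appears as "Not Measured".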

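The benchmark figures show that Llama3 8B, when quantized to Q4_K_M, generated an impressive 108 tokens per second on our dual 3090s, while the F16 version generated 47.15 tokens per second, less than half as many. The larger Llama3 70B model, again in Q4_K_M, generated 16.29 tokens per second. Prompt processing tells a different story: the 8B F16 model processed 4690.5 tokens per second against 4004.14 for Q4_K_M, so in these measurements quantization speeds up generation but not processing.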

Think of it this way: if you were drafting a novel with the Q4_K_M version of Llama3 8B on your dual 3090s, text would appear at about 108 tokens per second. A token is usually a little shorter than a word, so that works out to somewhat fewer than 108 words per second, but it is still far faster than anyone can read, let alone type!
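The gap between these generation speeds is easier to judge as a ratio or as waiting time. The sketch below uses the generation figures from the table; the 500-token response length is a hypothetical value chosen only for illustration.

```python
# Generation speeds in tokens/second, copied from the benchmark table.
generation_tps = {
    "Llama3 8B Q4_K_M": 108.07,
    "Llama3 8B F16": 47.15,
    "Llama3 70B Q4_K_M": 16.29,
}

RESPONSE_TOKENS = 500  # hypothetical response length, for illustration only


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a constant generation speed."""
    return n_tokens / tokens_per_second


baseline = generation_tps["Llama3 8B F16"]
for name, tps in generation_tps.items():
    seconds = seconds_to_generate(RESPONSE_TOKENS, tps)
    ratio = tps / baseline  # speed relative to the 8B F16 configuration
    print(f"{name}: {seconds:.1f} s for {RESPONSE_TOKENS} tokens, {ratio:.2f}x the F16 speed")
```

At these rates a 500-token answer takes roughly 4.6 seconds on 8B Q4_K_M, 10.6 seconds on 8B F16, and 30.7 seconds on 70B Q4_K_M, assuming the speed stays constant over the whole response.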

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B vs. Llama3 70B

Let's compare the performance of the smaller Llama3 8B model with its larger sibling, Llama3 70B.

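As the benchmark table shows, the 8B model outperforms the 70B model in token generation speed: 108.07 versus 16.29 tokens per second in Q4_K_M, roughly 6.6 times faster. The 8B model has far fewer parameters, so each generated token requires reading less data and doing less computation.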

However, the 70B model is still a powerful tool for more complex tasks, like generating long-form content, where output quality matters more than speed. That capability comes at a cost in processing speed too: the 70B model processed 393.89 tokens per second, about a tenth of the 8B model's 4004.14.

Model and Device Comparison: Quantized vs. Non-Quantized Llama3 8B

The difference between a quantized and a non-quantized model can be significant. Quantization reduces the memory footprint of the model, so fewer bytes have to be read from GPU memory for each generated token, which typically leads to faster generation. The smaller footprint also leaves more GPU memory free for longer contexts.

Think of it like this: Imagine each weight in the model as a little pixel on a screen. Quantization is like reducing the number of colors each pixel can show: the image gets smaller but stays recognizable. You might lose some details (like the subtle nuances of a sunset), but you're still able to see the big picture.
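To make the idea concrete, here is a minimal sketch of 4-bit quantization on a single block of weights. It uses a simple shared-scale ("absmax") scheme that we are assuming for illustration; it is not the actual Q4_K_M algorithm, which is more elaborate. The block size of 32 and the random weights are also just illustrative choices.

```python
import random


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit codes in [-7, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round each weight to the nearest representable level and clamp to range.
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the codes and the shared scale."""
    return [scale * c for c in codes]


random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(32)]  # made-up weights

scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print(f"scale = {scale:.5f}")
print(f"largest rounding error = {max_error:.5f}")
```

Each weight now needs only 4 bits plus a share of one scale value per block, and the rounding error per weight is at most half the scale. That lost precision is the "subtle nuance" in the analogy above.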

| Configuration | Token Generation Speed (Tokens/second) |
|---|---|
| Llama3 8B Q4_K_M Generation | 108.07 |
| Llama3 8B F16 Generation | 47.15 |

As you can see, the Q4_K_M version of the 8B model generates tokens about 2.3 times faster than the F16 version. If you're looking for the fastest possible token generation speed, quantization is the way to go.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 3090 24GB x2

Small Language Models for Big Impact:

- Chatbots: Create engaging and informative chatbots for customer service, entertainment, or educational purposes. The Q4 Llama 3 8B is a good choice if you need fast responses.

- Text Summarization: Quickly summarize long documents or articles, saving you time and effort. Both 8B versions processed input at more than 4,000 tokens per second in our benchmarks, so the Q4 version's faster generation makes it a good choice here as well.

- Language Translation: Translate text between different languages quickly and accurately. Experiment with both F16 and Q4 versions for different translation quality levels.

- Text Generation: Generate creative content, like poems, stories, and scripts, with a focus on short-form writing.

Practical Workarounds and Optimization Tips

- Fine-Tuning: If you need to specialize your LLM for a specific task, consider fine-tuning it on a relevant dataset. This will improve the model's performance for that specific task.

- Model Compression: If you're working with a limited amount of memory, consider using model compression techniques, such as quantization. This will make the model smaller and faster.

- Batching: Process multiple requests in batches to improve overall throughput, usually at the cost of some extra waiting time for individual requests. Imagine it like sending multiple letters at once, instead of one at a time.

- Hardware Optimization: Make sure your system is properly configured for optimal performance. This includes things like checking your GPU settings, making sure the whole model is loaded into GPU memory across both cards, and, if you serve requests over a network, optimizing network performance.
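The batching tip can be sketched in a few lines. This is only the grouping step: the function name `make_batches` and the prompts are hypothetical, and how a batch is actually submitted depends on the inference backend you use.

```python
from typing import Iterator


def make_batches(prompts: list[str], batch_size: int) -> Iterator[list[str]]:
    """Yield consecutive groups of at most batch_size prompts."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(prompts), batch_size):
        yield prompts[start:start + batch_size]


pending = [f"prompt {i}" for i in range(10)]  # made-up pending requests

for batch in make_batches(pending, batch_size=4):
    # In a real setup, the whole batch would go to the backend in one call here.
    print(f"submitting {len(batch)} prompts, starting with {batch[0]!r}")
```

Larger batches generally raise total throughput but make each request wait for the rest of its batch, so the right batch size depends on how sensitive your use case is to response time.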

FAQ

Q: What is quantization, and how does it affect performance? A: Quantization is a technique for reducing the size of a model by representing its weights (and sometimes its activations) using fewer bits. This results in a smaller model that requires less memory and typically generates tokens faster.

Q: What is the difference between token generation and processing speed? A: Token generation speed is the rate at which the model can produce new tokens. Processing speed is the rate at which the model can handle a sequence of tokens (for example, a message or a document).
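As a rough illustration of how the two speeds combine, the time for one request can be estimated as prompt tokens divided by processing speed plus output tokens divided by generation speed. The speeds below come from the benchmark table; the prompt and output lengths are hypothetical, and the model ignores any other overhead.

```python
def estimated_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Simple two-phase estimate: read the prompt, then generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


PROMPT_TOKENS = 2000  # hypothetical document to summarize
OUTPUT_TOKENS = 300   # hypothetical summary length

# Llama3 8B speeds (tokens/second) from the benchmark table.
q4_seconds = estimated_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 4004.14, 108.07)
f16_seconds = estimated_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 4690.5, 47.15)

print(f"Q4_K_M: ~{q4_seconds:.1f} s")
print(f"F16:    ~{f16_seconds:.1f} s")
```

In this example, generation dominates the total in both cases, which is why the quantized model finishes sooner even though F16 reads the prompt slightly faster.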

Q: Is Llama3 8B the ideal model for all use cases? A: No, the ideal model will depend on your specific needs. For faster token generation, you can use the Q4 version. However, if you need to generate very long, complex text, you may want to consider a larger model.

Q: How do I choose the right configuration for my project? A: Experiment with different configurations and measure the results. You can use benchmark tools such as llama-bench, which ships with llama.cpp, to evaluate the performance of different configurations and determine the best fit for your needs.
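If you want to script such measurements, a small wrapper along these lines is one option. It is a sketch under assumptions: the model path is hypothetical, the test sizes are arbitrary, and the flags shown (`-m`, `-p`, `-n`, `-ngl`) are llama-bench options as we understand them, so check `llama-bench --help` for your build before relying on them.

```python
import os
import shutil
import subprocess


def build_llama_bench_command(
    model_path: str,
    n_prompt: int = 512,      # prompt tokens for the processing test
    n_gen: int = 128,         # tokens to generate for the generation test
    n_gpu_layers: int = 99,   # large value, intended to offload every layer
) -> list[str]:
    """Build an argument list for llama.cpp's llama-bench tool."""
    return [
        "llama-bench",
        "-m", model_path,
        "-p", str(n_prompt),
        "-n", str(n_gen),
        "-ngl", str(n_gpu_layers),
    ]


# Hypothetical path: replace with the location of your own GGUF file.
command = build_llama_bench_command("models/llama3-8b-q4_k_m.gguf")
print(" ".join(command))

# Run only when both the tool and the model file are actually present.
if shutil.which("llama-bench") and os.path.exists(command[2]):
    subprocess.run(command, check=False)
```

Running the same command for each model file gives you processing and generation figures comparable to the ones in this article, measured on your own hardware.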

Keywords

Llama3 8B, NVIDIA 3090 24GB x2, token generation, processing speed, quantization, model compression, benchmark, performance, LLM, large language model, GPU, fine-tuning, use case, chatbot, summarization, translation, text generation, optimization