6 Tips to Maximize Llama3 8B Performance on NVIDIA 3080 Ti 12GB

Introduction: Unleashing the Power of Local LLMs

The world of large language models (LLMs) is evolving at breakneck speed, and with efficient local models like Llama3 8B, even a consumer GPU can handle serious natural language processing work. In this article, we'll look at how Llama3 8B performs on the NVIDIA 3080 Ti 12GB GPU and share practical tips for getting the most out of it. Whether you're a developer building applications or a curious geek exploring the frontiers of AI, this guide should provide useful insights.

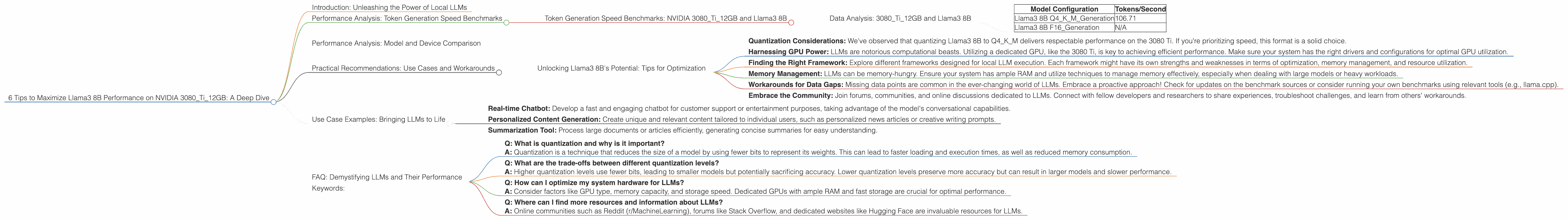

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama3 8B

The benchmark analyzed here measures token generation speed: how many tokens per second the model produces once it starts writing its response. This is a crucial metric for real-time applications and smooth user experiences.
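
To make the metric concrete, here is a minimal sketch of how tokens per second could be measured around any streaming generator. The `fake_generate` function is a hypothetical stand-in for a real model call and is not part of any library; swap in whatever your framework uses to stream tokens.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return generated tokens per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields a dummy token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = measure_tokens_per_second(lambda: fake_generate())
    print(f"{rate:.1f} tokens/s")
```

Timing only the generation loop, as done here, leaves out model loading and prompt processing, which benchmarks usually report separately.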

Data Analysis: 3080 Ti 12GB and Llama3 8B

| Model Configuration | Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 106.71 |
| Llama3 8B F16 | N/A |

Explanation: The data shows that the Llama3 8B model, when quantized to Q4_K_M format and run on the NVIDIA 3080 Ti 12GB GPU, generates 106.71 tokens per second, or about 9.4 ms per token. This is a respectable speed, particularly considering the model's size and the relative affordability of the 3080 Ti card.

Note: No F16 result is available in the benchmark source. F16 is the unquantized half-precision format, which stores each weight in 2 bytes, so the weights of an 8B-parameter model alone come to roughly 16 GB. That exceeds the 12 GB of memory on the 3080 Ti, which is a likely reason for the gap.
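
A back-of-the-envelope estimate shows how the two formats compare against the card's memory. This is a rough sketch under stated assumptions: "8B" is taken as 8 billion parameters, and the Q4_K_M figure of about 4.85 bits per weight is an approximation, not a value from the benchmark source.

```python
def weight_footprint_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8.0e9   # assumption: "8B" taken as 8 billion parameters
VRAM_GB = 12.0     # memory on the 3080 Ti
FORMATS = {
    "F16": 16.0,     # 2 bytes per weight
    "Q4_K_M": 4.85,  # assumption: approximate average bits per weight
}

for name, bits in FORMATS.items():
    size = weight_footprint_gb(N_PARAMS, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

The estimate covers weights only. The context cache and framework overhead need additional memory on top.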

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B on the 3080 Ti with other models and hardware configurations would be interesting, but this article focuses solely on this one pairing, so other devices and model sizes are out of scope here.

Practical Recommendations: Use Cases and Workarounds

Unlocking Llama3 8B's Potential: Tips for Optimization

Quantization Considerations: In the benchmark above, quantizing Llama3 8B to Q4_K_M delivers 106.71 tokens per second on the 3080 Ti. If you're prioritizing speed, this format is a solid choice.

Harnessing GPU Power: LLMs are notorious computational beasts. Utilizing a dedicated GPU, like the 3080 Ti, is key to achieving efficient performance. Make sure you have an up-to-date NVIDIA driver installed and that your inference framework is built with GPU support and actually offloads the model to the GPU.
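
One way to confirm the GPU and driver are visible is to query `nvidia-smi`, which ships with the NVIDIA driver. The sketch below is a minimal example and assumes `nvidia-smi` is on your `PATH`; the helper names are my own.

```python
import shutil
import subprocess

QUERY = "name,driver_version,memory.total,memory.used"


def parse_gpu_csv(text: str) -> list[dict]:
    """Parse `nvidia-smi --format=csv,noheader,nounits` output into dicts."""
    gpus = []
    for line in text.strip().splitlines():
        name, driver, total_mib, used_mib = [f.strip() for f in line.split(",")]
        gpus.append({
            "name": name,
            "driver": driver,
            "total_mib": int(total_mib),
            "used_mib": int(used_mib),
        })
    return gpus


def query_gpus() -> list[dict]:
    """Return GPU info, or an empty list if nvidia-smi is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        out = subprocess.run(
            ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True,
        ).stdout
    except (OSError, subprocess.CalledProcessError):
        return []
    return parse_gpu_csv(out)


if __name__ == "__main__":
    for gpu in query_gpus():
        free = gpu["total_mib"] - gpu["used_mib"]
        print(f'{gpu["name"]} (driver {gpu["driver"]}): {free} MiB free')
```

If the list comes back empty, the driver is probably not installed correctly, and any framework will fall back to the CPU or fail to start.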

Finding the Right Framework: Explore different frameworks designed for local LLM execution. Each framework might have its own strengths and weaknesses in terms of optimization, memory management, and resource utilization.

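Memory Management: LLMs can be memory-hungry. On the 3080 Ti, the 12 GB of VRAM has to hold the model weights plus the context (KV) cache, so keep an eye on context length and on other applications using the GPU. Ample system RAM also helps when loading models or when part of the model has to stay on the CPU.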
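
Context length is the part of the memory budget you control most directly. The sketch below estimates the size of the KV cache, assuming the published Llama 3 8B architecture (32 layers, 8 key/value heads, head dimension 128) and a 16-bit cache; these values are assumptions on my part and do not come from the benchmark source.

```python
def kv_cache_bytes(
    n_tokens: int,
    n_layers: int = 32,        # assumption: Llama 3 8B layer count
    n_kv_heads: int = 8,       # assumption: grouped-query attention KV heads
    head_dim: int = 128,       # assumption: dimension per attention head
    bytes_per_value: int = 2,  # assumption: 16-bit cache entries
) -> int:
    """Bytes needed to cache keys and values for n_tokens of context."""
    # The leading factor of 2 accounts for storing both keys and values.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens


for context in (2048, 4096, 8192):
    gib = kv_cache_bytes(context) / 2**30
    print(f"{context} tokens of context: {gib:.2f} GiB of KV cache")
```

Under these assumptions each token of context costs 128 KiB, so a full 8192-token context adds 1 GiB on top of the weights.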

Workarounds for Data Gaps: Missing data points are common in the ever-changing world of LLMs. Embrace a proactive approach! Check for updates on the benchmark sources or consider running your own benchmarks using relevant tools (e.g., llama.cpp).
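
For running your own numbers, llama.cpp includes a `llama-bench` tool. The sketch below only assembles a command line for it; the model path is a hypothetical placeholder, and flags can change between versions, so check `llama-bench --help` on your build before relying on them.

```python
import shlex


def llama_bench_command(
    model_path: str,
    n_prompt: int = 512,
    n_gen: int = 128,
    n_gpu_layers: int = 99,
    repetitions: int = 5,
) -> list[str]:
    """Build an argument list for llama.cpp's llama-bench tool."""
    return [
        "llama-bench",
        "-m", model_path,           # GGUF model file
        "-p", str(n_prompt),        # prompt-processing test length in tokens
        "-n", str(n_gen),           # token-generation test length in tokens
        "-ngl", str(n_gpu_layers),  # layers to offload to the GPU
        "-r", str(repetitions),     # repetitions per test
        "-o", "md",                 # print results as a markdown table
    ]


# Hypothetical model path, for illustration only.
cmd = llama_bench_command("models/llama-3-8b.Q4_K_M.gguf")
print(shlex.join(cmd))
```

Pass the list to `subprocess.run(cmd)` to execute it, or paste the printed command into a shell.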

Embrace the Community: Join forums, communities, and online discussions dedicated to LLMs. Connect with fellow developers and researchers to share experiences, troubleshoot challenges, and learn from others' workarounds.

Use Case Examples: Bringing LLMs to Life

Imagine using Llama3 8B on your 3080 Ti to power these applications:

- Real-time Chatbot: Develop a fast and engaging chatbot for customer support or entertainment purposes, taking advantage of the model's conversational capabilities.

- Personalized Content Generation: Create unique and relevant content tailored to individual users, such as personalized news articles or creative writing prompts.

- Summarization Tool: Process large documents or articles efficiently, generating concise summaries for easy understanding.
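
For rough sizing of use cases like these, the benchmark figure translates directly into response times. The output lengths below are hypothetical examples, and the estimate ignores prompt processing, which adds latency when the input is long, as in summarization.

```python
GENERATION_TOKENS_PER_SECOND = 106.71  # Q4_K_M result from the benchmark table


def generation_seconds(
    n_output_tokens: int,
    tokens_per_second: float = GENERATION_TOKENS_PER_SECOND,
) -> float:
    """Time to generate n_output_tokens at a steady generation rate."""
    return n_output_tokens / tokens_per_second


# Hypothetical output lengths, for illustration only.
EXAMPLES = {
    "chatbot reply": 150,
    "document summary": 300,
    "generated article": 800,
}

for label, n_tokens in EXAMPLES.items():
    print(f"{label} ({n_tokens} tokens): ~{generation_seconds(n_tokens):.1f} s")
```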

FAQ: Demystifying LLMs and Their Performance

Q: What is quantization and why is it important?

A: Quantization is a technique that reduces the size of a model by using fewer bits to represent its weights. This can lead to faster loading and execution times, as well as reduced memory consumption.
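
As a toy illustration of the idea, the sketch below stores a block of weights as 4-bit signed integers plus one shared scale, then reconstructs them. It is a deliberately simplified scheme and not the actual Q4_K_M algorithm, which is more elaborate.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Symmetric quantization: map floats to signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from integers and the shared scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.46]
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit integers:", quantized)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs 4 bits instead of 16, and the rounding error printed at the end is the accuracy cost paid for that saving.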

Q: What are the trade-offs between different quantization levels?

A: More aggressive quantization uses fewer bits per weight, leading to smaller models but potentially sacrificing accuracy. Less aggressive quantization (more bits per weight) preserves more accuracy but results in larger models that need more memory and typically generate tokens more slowly.

Q: How can I optimize my system hardware for LLMs?

A: Consider factors like GPU type, memory capacity, and storage speed. A dedicated GPU with enough VRAM to hold the whole model, plus fast storage for loading it, is crucial for optimal performance.

Q: Where can I find more resources and information about LLMs?

A: Online communities such as Reddit (r/MachineLearning), forums like Stack Overflow, and dedicated websites like Hugging Face are invaluable resources for LLMs.

Keywords:

Large Language Model, LLM, Llama3, Llama3 8B, NVIDIA 3080 Ti, GPU, Token Generation Speed, Performance, Quantization, Q4_K_M, F16, Benchmark, Deep Dive, Optimization, Use Cases, Chatbot, Content Generation, Summarization