6 Tips to Maximize Llama3 8B Performance on Apple M1

Introduction: Unleashing the Power of Local LLMs

The world of large language models (LLMs) is exploding, offering a plethora of possibilities for developers and geeks alike. These powerful AI models can generate compelling text, translate languages, write different kinds of creative content, and answer questions informatively. But running LLMs locally can be a challenge, especially on a chip like the Apple M1. The model has to fit in the machine's unified memory (8 GB or 16 GB on M1 Macs), and generation speed is limited by the hardware.

This guide delves into the fascinating world of local LLM performance, focusing on the Llama3 8B model and the Apple M1 chip. We'll explore the intricacies of token generation speed, compare different quantization techniques, and provide practical recommendations for maximizing your Llama3 8B performance on your M1. Buckle up, it's going to be a wild ride!

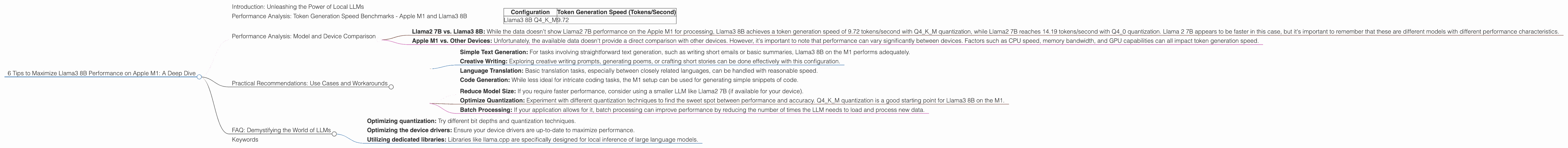

Performance Analysis: Token Generation Speed Benchmarks - Apple M1 and Llama3 8B

Imagine you're trying to get your AI assistant to write a short story. But instead of a smooth, flowing narrative, you're dealing with a slow, sluggish response, like a turtle trying to win a race against a cheetah. That's what can happen if your LLM's performance is subpar. This is where understanding token generation speed comes in.

Token generation speed, measured in tokens per second, tells you how quickly an LLM produces output text once it has read your prompt. A higher token generation speed means faster responses, smoother interactions, and a more enjoyable user experience. Let's dive into the numbers and see how Llama3 8B performs on the Apple M1:

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B, Q4_K_M | 9.72 |

As you can see, the Llama3 8B model with Q4_K_M quantization generates 9.72 tokens/second on the Apple M1. That isn't blazing fast, but it's a respectable result for an 8-billion-parameter model running on Apple's first-generation Apple Silicon chip.
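To put 9.72 tokens/second in concrete terms, the sketch below converts a steady generation rate into wall-clock time for a few response lengths. It is a minimal illustration that assumes a constant decode rate and ignores prompt-processing time, so real end-to-end latency will be somewhat longer. The response lengths are arbitrary examples.

```python
# Rough response-time estimate from a measured generation speed.
# Assumes a constant decode rate and ignores prompt-processing time,
# so real end-to-end latency will be somewhat higher.

def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate num_tokens at a steady tokens_per_second."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


M1_LLAMA3_8B_Q4_K_M = 9.72  # tokens/s, from the benchmark table above

# Arbitrary example response lengths, in tokens.
for length in (100, 250, 500):
    secs = generation_seconds(length, M1_LLAMA3_8B_Q4_K_M)
    print(f"{length:>4} tokens -> {secs:5.1f} s")
```

At this rate, a 500-token reply takes a little over 51 seconds of pure generation time.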

Think of it this way: if your LLM were a marathon runner, token generation speed would be its pace. A higher speed means a faster finish time. The Apple M1, while not the fastest device, can still run LLMs at a respectable pace.

Performance Analysis: Model and Device Comparison

Let's take a look at how Llama3 8B on the Apple M1 compares to other LLM configurations and devices.

Model Comparison:

- Llama2 7B vs. Llama3 8B: The data doesn't show Llama2 7B's prompt-processing performance on the Apple M1. For token generation, Llama3 8B reaches 9.72 tokens/second with Q4_K_M quantization, while Llama2 7B reaches 14.19 tokens/second with Q4_0 quantization. Llama2 7B appears faster here, but this isn't a like-for-like comparison. The models differ in size (7B vs. 8B parameters) and use different quantization schemes. Llama3's larger tokenizer vocabulary (about 128K tokens versus Llama2's 32K) also usually encodes the same text in fewer tokens.

Device Comparison:

- Apple M1 vs. Other Devices: Unfortunately, the available data doesn't provide a direct comparison with other devices, and performance can vary significantly between them. Factors such as CPU speed, memory bandwidth, and GPU capabilities all affect token generation speed. Memory bandwidth matters especially, because generating each token requires reading essentially all of the model's weights from memory.
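A common back-of-envelope rule explains why memory bandwidth matters. If generating each token requires streaming roughly the whole set of weights from memory once, decode speed can't exceed bandwidth divided by model size. The sketch below applies that rule of thumb. Both inputs are approximate assumptions, not measurements from this article: about 68 GB/s of bandwidth, the figure commonly cited for the base M1, and about 4.9 GB for a Q4_K_M Llama3 8B model file.

```python
# Back-of-envelope ceiling on decode speed for a memory-bandwidth-bound model.
# Rule of thumb (assumption): each generated token streams roughly the whole
# set of weights from memory once, so tokens/s <= bandwidth / model size.
# Compute limits, KV-cache reads, and other overheads push real numbers lower.

def decode_ceiling_tokens_per_s(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper-bound tokens/s if every token requires one full pass over the weights."""
    if bandwidth_gb_s <= 0 or model_size_gb <= 0:
        raise ValueError("inputs must be positive")
    return bandwidth_gb_s / model_size_gb


# Assumed, approximate values (not measurements from this article):
M1_BANDWIDTH_GB_S = 68.25    # commonly cited base-M1 unified-memory bandwidth
LLAMA3_8B_Q4_K_M_GB = 4.9    # approximate size of a Q4_K_M model file
MEASURED_TOK_S = 9.72        # tokens/s, from the benchmark table

ceiling = decode_ceiling_tokens_per_s(M1_BANDWIDTH_GB_S, LLAMA3_8B_Q4_K_M_GB)
print(f"ceiling ~{ceiling:.1f} tok/s; measured {MEASURED_TOK_S} tok/s "
      f"({MEASURED_TOK_S / ceiling:.0%} of the ceiling)")
```

With these assumed inputs the ceiling works out to roughly 14 tokens/second, so the measured 9.72 tokens/second sits at about 70% of it. Compute limits and other overheads account for part of the gap, and the estimate is only as good as its inputs.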

Practical Recommendations: Use Cases and Workarounds

While LLMs on the Apple M1 aren't the fastest in the world, they can still be incredibly useful for various applications. Here are some practical recommendations:

Use Cases:

- Simple Text Generation: For tasks involving straightforward text generation, such as writing short emails or basic summaries, Llama3 8B on the M1 performs adequately.

- Creative Writing: Exploring creative writing prompts, generating poems, or crafting short stories can be done effectively with this configuration.

- Language Translation: Basic translation tasks, especially between closely related languages, can be handled with reasonable speed.

- Code Generation: While less ideal for intricate coding tasks, the M1 setup can be used for generating simple snippets of code.

Workarounds:

- Reduce Model Size: If you require faster performance, consider using a smaller LLM like Llama2 7B (if available for your device).

- Optimize Quantization: Experiment with different quantization types to find the sweet spot between speed and accuracy. Q4_K_M is a good starting point for Llama3 8B on the M1.

- Batch Processing: Keep the model loaded between requests instead of reloading it each time. If your application allows it, process several prompts together. Batching can raise total throughput, though it won't necessarily make any single response faster.
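The sketch below shows why a smaller model or a lower-bit quantization helps by estimating how much memory the weights alone occupy at different bits per weight (bpw). It makes three simplifications:

- It assumes a round 8.0 billion parameters for Llama3 8B.
- It uses the bpw of llama.cpp's simple block formats (32 weights per block plus one 16-bit scale).
- It ignores file metadata, tensors kept at higher precision, and the KV cache.

Q4_K_M is left out because it mixes several block types, and its average bpw is somewhat higher than Q4_0's.

```python
# Approximate weight-storage size for different bits-per-weight (bpw) choices.
# Assumptions: a round 8.0e9 parameters for Llama3 8B; bpw figures for the
# block formats include one fp16 scale per 32-weight block.
# Ignores metadata, higher-precision tensors, and the KV cache.

PARAMS_LLAMA3_8B = 8.0e9

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bpw: 32 8-bit values + one fp16 scale
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bpw: 32 4-bit values + one fp16 scale
}


def weights_gb(num_params: float, bpw: float) -> float:
    """Size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bpw / 8 / 1e9


for name, bpw in BITS_PER_WEIGHT.items():
    size = weights_gb(PARAMS_LLAMA3_8B, bpw)
    print(f"{name:5s} {bpw:4.1f} bpw -> ~{size:4.1f} GB")
```

On either M1 memory configuration (8 GB or 16 GB), the roughly 16 GB of F16 weights won't fit alongside the operating system. A 4-bit version of about 4.5 GB does fit.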

FAQ: Demystifying the World of LLMs

Q: What is quantization, and how does it affect LLM performance?

A: Quantization is like making a complex recipe simpler by using fewer ingredients. In LLMs, it shrinks the model by storing each parameter (weight) with fewer bits, for example 4 bits instead of 16. This can reduce accuracy somewhat, but it significantly improves performance: a smaller model needs less memory and moves less data per generated token. Think of it as a trade-off between speed and precision.
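The trade-off is easier to see in code. The sketch below is a toy symmetric 4-bit block quantizer. It follows the spirit of block formats such as llama.cpp's Q4_0 but not their exact layout: a block of weights shares one floating-point scale, and each weight becomes an integer in [-8, 7]. The example weights are made up for illustration.

```python
# Toy symmetric 4-bit block quantization, in the spirit of block formats like
# llama.cpp's Q4_0 but NOT its exact layout: each block of weights shares one
# float scale, and each weight is represented as an integer in [-8, 7].

def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit signed ints plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Scale so the largest magnitude maps to 7; no value then needs clipping.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the ints and the shared scale."""
    return [scale * x for x in q]


weights = [0.12, -0.53, 0.97, -0.05, 0.33, -0.88, 0.41, 0.0]  # made-up values
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print("ints:    ", q)
print("restored:", [round(r, 3) for r in restored])
print(f"max error: {max_err:.3f} (at most scale/2 = {scale / 2:.3f})")
```

Each weight now needs 4 bits instead of 16 or 32. The cost is a rounding error of at most half the scale per weight, which is the "precision" side of the trade-off.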

Q: Are LLMs on the Apple M1 good enough for real-world applications?

A: While LLMs on the M1 may not be ideal for demanding tasks like real-time interactive chatbots or complex data analysis, they can be suitable for various tasks, especially if optimized for specific use cases. The balance between performance and accuracy is essential.

Q: How can I improve the performance of LLMs on the Apple M1?

A: You can experiment with:

- Optimizing quantization: Try different bit depths and quantization techniques.

- Keeping software up to date: On Apple Silicon Macs, GPU drivers ship as part of macOS, so keep macOS and your inference library updated to pick up performance improvements.

- Utilizing dedicated libraries: Libraries like llama.cpp are specifically designed for local inference of large language models and can run models on the M1's GPU through Apple's Metal API.

Keywords

LLM, Llama3, Llama3 8B, Apple M1, token generation speed, quantization, Q4_K_M, performance, benchmarks, use cases, workarounds, optimization, device drivers, local inference, inference speed, GPU, CPU, deep dive, developer, geek, AI, machine learning.